How to Increase Website Traffic

The leading indicator of the popularity and overall performance of any online resource is the amount of traffic it gets. Correctly optimized sites with an effective semantic core, gripping content, and the right promotion strategy have a much better chance of reaching the top positions in Google search results.

If you are new to the concept of growing website traffic or simply don’t understand how to attract a large audience to your website, we are here to help. Let’s go through the stages to follow when you start SEO work on your site and learn how to increase website traffic efficiently.

Solve technical issues with Netpeak Spider

So, how to get traffic to your website? Let’s start with the basics.

Your website is created for both users and search engines. Users evaluate your website by its content; search engines evaluate it by its content and numerous technical aspects. Let's look at the key ones in turn:

- Availability for search engines

- Loading speed

- Broken links and duplicate pages

- Mobile version

Availability for search engines

How to increase traffic on your website? First, make sure your site is indexed and added to Google Search Console. There you can track your website's performance, check whether your pages are open for indexing, and use the ‘URL inspection’ tool to see pages the way Googlebot sees them, all of which helps you drive traffic to your website.

Also, to get website traffic, take a look at your robots.txt file. It’s common for webmasters to instruct robots not to crawl some pages, forget about it, and then look desperately for issues elsewhere.

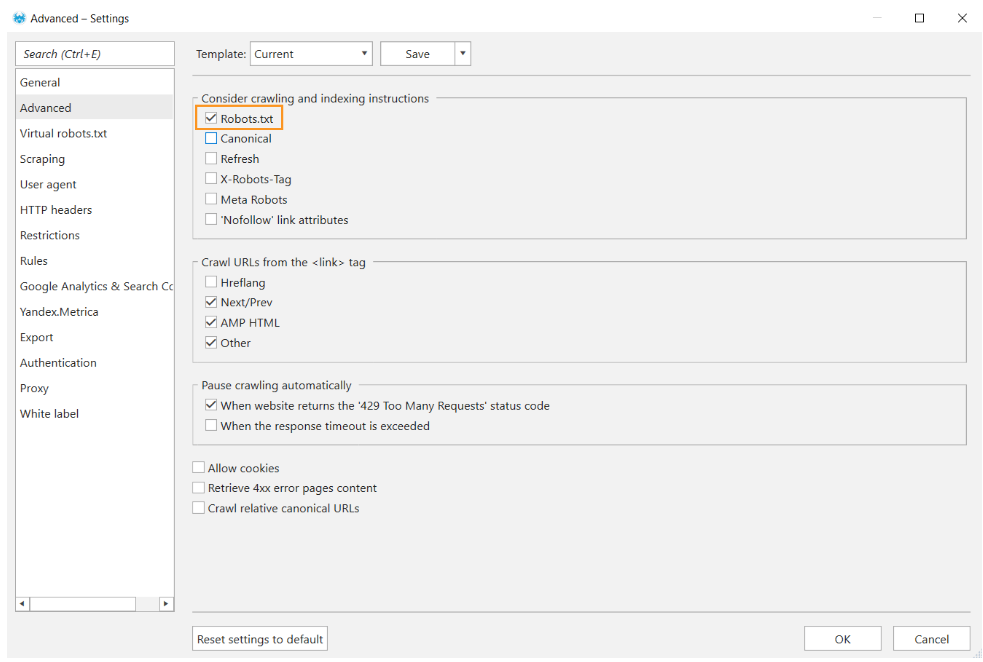

In Netpeak Spider, you can crawl websites following the same crawling instructions that you set for Google bots. To do so, go to ‘Advanced’ settings and tick the ‘Robots.txt’ item.

You can learn more about robots.txt file and how it can help you increase website traffic in our article.
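The robots.txt check described above can also be scripted. Below is a minimal sketch using Python's standard `urllib.robotparser`; the rules in `ROBOTS_TXT`, the example URLs, and the helper name `is_crawlable` are all hypothetical. Note that this parser only approximates how Googlebot interprets the file, so treat the result as a first sanity check rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice you would load your own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/post", "https://example.com/admin/login"):
        status = "allowed" if is_crawlable(ROBOTS_TXT, url) else "blocked"
        print(url, "->", status)
```

Running such a check over the pages you expect to rank is a quick way to spot a forgotten `Disallow` rule.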

Improve page speed

The next step in increasing your website traffic is improving page speed. Google takes the loading speed of pages into account when ranking them, since it cares about serving content to users as quickly as possible. If your website takes an eternity to load, it may lead to high bounce rates (users click on your website and quickly bounce back to the SERP) and drops in ranking, since robots will act the same way: open your site, yawn, and decide not to waste time crawling it.

Here are the things that can hold your website back; fixing them helps increase site traffic:

- Heavy code. Optimize your code, remove redundant data, ‘clear up’ space for fast loading. Google suggests minifying your HTML, CSS, and JavaScript resources.

- Too many redirects. Each redirect adds time to the HTTP request-response cycle, which lowers the loading speed.

- Render-blocking JavaScript. Once again, Google recommends avoiding or minimizing the use of blocking JavaScript. The browser has to build the DOM tree by parsing the HTML markup, and whenever the parser encounters a script during this process, it has to stop and execute it before it can continue parsing the HTML.

- Images as one of your main foes. Compress the image files, choose the right format for each image (for example, JPEG or WebP for photos and PNG for graphics that need transparency), and write descriptive alt texts: Google ‘reads’ them, so make them informative.
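To make the point about blocking JavaScript more concrete, here is a rough sketch that lists external scripts in `<head>` that have neither `async` nor `defer`. It uses only Python's standard `html.parser`; the class name, the helper `find_blocking_scripts`, and the sample markup are hypothetical, and looking only at `<head>` is a simplifying assumption rather than a full audit.

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Collects external scripts inside <head> that lack async/defer."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            src = attr_map.get("src")
            # A script with a src and no async/defer pauses HTML parsing.
            if src and "async" not in attr_map and "defer" not in attr_map:
                self.blocking.append(src)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_blocking_scripts(html):
    finder = BlockingScriptFinder()
    finder.feed(html)
    return finder.blocking


# Hypothetical page markup used only for illustration.
SAMPLE_HTML = """<html><head>
<script src="/js/analytics.js"></script>
<script src="/js/app.js" defer></script>
<script src="/js/ads.js" async></script>
</head><body><p>Hello</p></body></html>"""

if __name__ == "__main__":
    print(find_blocking_scripts(SAMPLE_HTML))
```

Scripts flagged this way are candidates for `defer`, `async`, or moving to the end of the page, depending on what they do.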

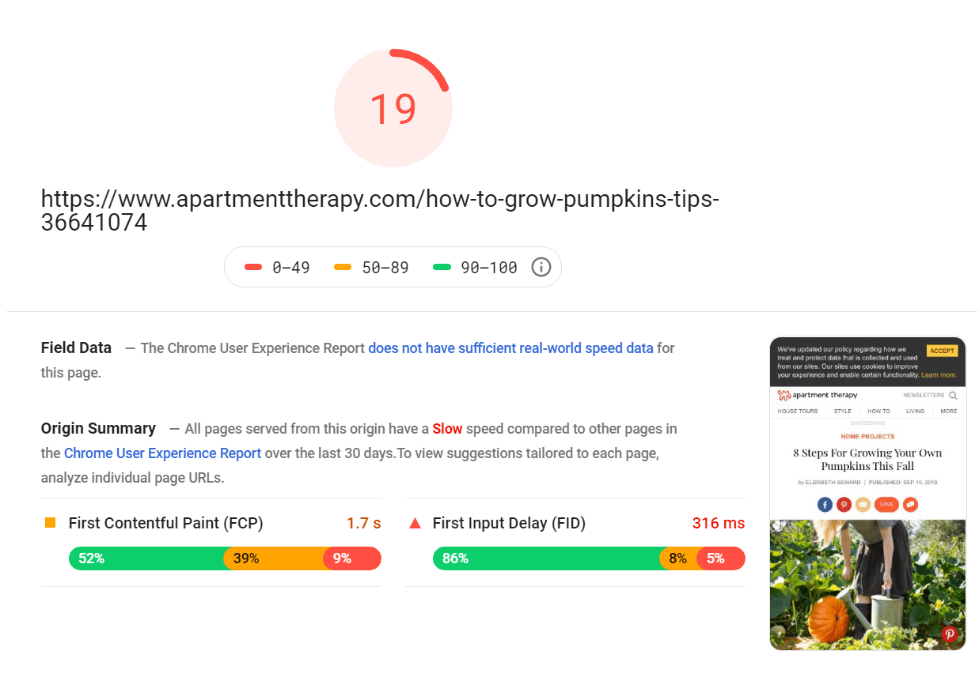

If you’re wondering, “How to get traffic to my website?” consider also analyzing website loading speed with Google PageSpeed Insights. This is an example of a slow website:

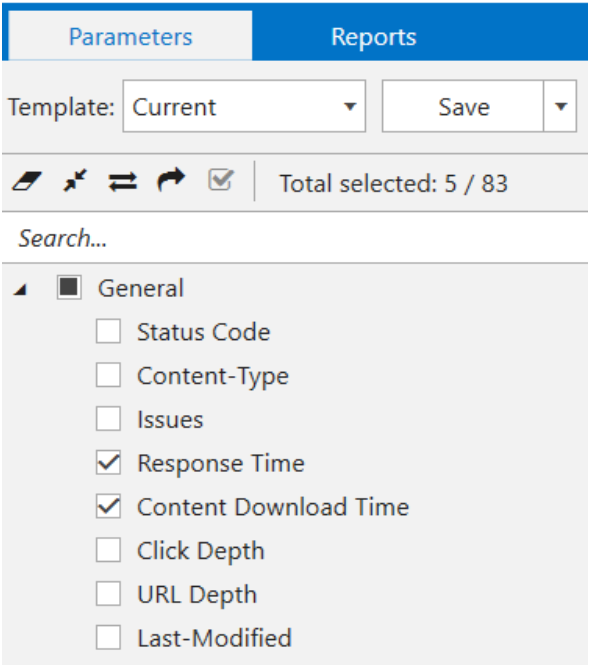

Also, you can check the loading speed in Netpeak Spider.

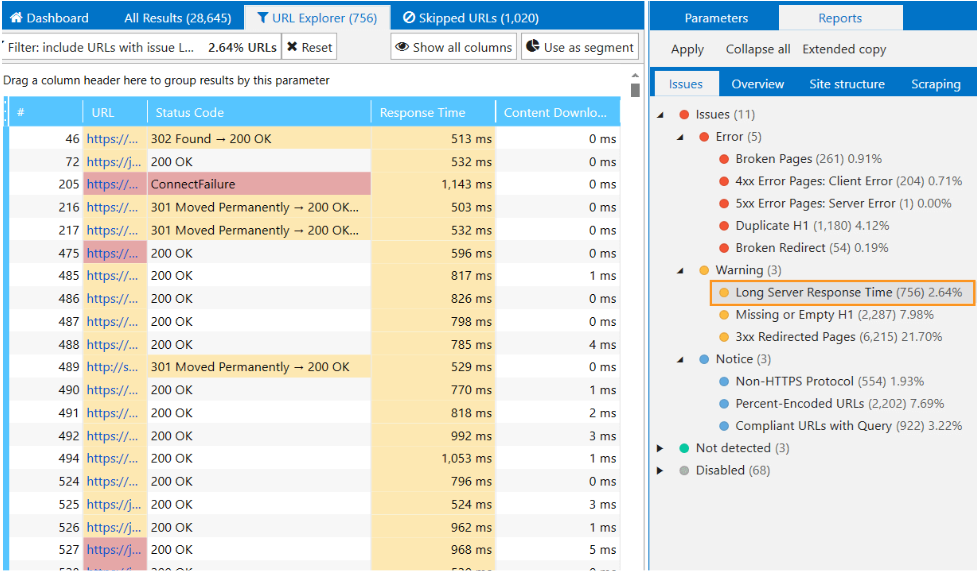

- Choose the 'Response time' parameter, which displays the corresponding value for each URL on your site, and the 'Content download time' parameter, which displays the download time of each URL of your site.

- And see which pages are loading slowly in the ‘Reports’ tab or on the dashboard.

We have an all-encompassing guide to help you figure out how loading speed works and improve it to boost website traffic. Check it out to gain valuable knowledge and insights.

Remove broken links and duplicate pages

How to increase traffic on a website with the help of content? Ideally, you ensure that the content is original and updated on time. However, when you try to put this into practice, you realize that fully unique content is an unattainable ideal. Inevitably, you face duplicates.

So why should you avoid duplicate pages to generate traffic to your website? When Google bots crawl duplicate pages, they can't tell which page is the ‘source’ one and which to index. To tackle this issue, use the rel="canonical" link tag. Canonicalization helps Google understand which pages are preferred for indexing. Imagine that you say, ‘Hey, don’t index this page, go and index that one!’
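As an illustration of the canonical tag, the sketch below reads the canonical URL declared by a page. The markup in `SAMPLE_PAGE`, the URLs, and the helper `get_canonical` are hypothetical; the only assumption about the tag itself is the standard `<link rel="canonical" href="...">` form placed in the page head.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Remembers the href of the first <link rel="canonical"> it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attr_map.get("href")


def get_canonical(html: str) -> Optional[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Hypothetical duplicate page (a sorted product listing) that points
# search engines at the preferred version of the same content.
SAMPLE_PAGE = """<html><head>
<title>Shoes, sorted by price</title>
<link rel="canonical" href="https://example.com/shoes">
</head><body></body></html>"""

if __name__ == "__main__":
    print(get_canonical(SAMPLE_PAGE))
```

If two URLs serve the same content and return different canonical values, or none at all, that pair is worth a closer look.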

Broken links are another serious issue: they lead visitors to a nonexistent webpage, file, or image. In this case, the server returns a 404 or 410 response code. Usually, you see a blank page with a 404 error, but it’s good form to spruce up such pages to smooth the user’s experience. Even if visitors fail to find what they were looking for, a friendly custom 404 page can amuse them at least for a while.

Checks for broken pages are part of the daily routine for webmasters. Do them quickly and automatically with Netpeak Spider. This article can also help you learn how to check broken links without bumps and pitfalls.

You can check your website for broken links, duplicates, analyze page loading speed, and solve many other basic tasks even in the free version of Netpeak Spider crawler that is not limited by the term of use and the number of analyzed URLs.

To get access to free Netpeak Spider, you just need to sign up, download, and launch the program 😉 Right after signup, you'll also have the opportunity to try all paid functionality and then compare all our plans and pick the most suitable for you. Using this tool is one of the best ways to drive traffic to your website.

Optimize for mobile

The next step of how to increase traffic to a website would be mobile optimization. Over half of web traffic comes from mobiles. SimilarWeb research shows that mobile traffic is on the rise while desktop traffic is dramatically decreasing. That’s why Google urges websites to have a user-friendly mobile version. Google made it clear that mobile-friendly pages are a priority over non-mobile-friendly pages, and mobile-first indexing means that Google chiefly fetches the mobile version of the content for indexing and ranking.

Here are some of Google’s recommendations:

- Set up responsive design. Your mobile template should adapt to any device and screen size. All graphic elements, links, and function buttons should work correctly.

- Use AMP (Accelerated Mobile Pages). AMP helps deliver content faster because it relies on a restricted set of HTML and JavaScript and serves pages from its own cache servers. The AMP version of the page can be featured in mobile search as part of rich results and carousels.

To check your website for compatibility with portable devices, take the Mobile-Friendly Test. You can also use our smart checklist that can help you make a website mobile-friendly and generate website traffic easier.

Optimize your content with keywords

Now that you know how to increase the website traffic by fixing various technical issues, the next stage would be improving the quality of your content. This process starts with analyzing the semantic core.

The semantic core is a set of words and phrases that reflect the subject and structure of the site. Think about the semantic core before starting any SEO activity on the website, and ask the global question: is my content relevant to users’ intent, and why is it relevant? Determine which search queries users enter. Basically, there are five search query types:

- Informational. A searcher needs information such as ‘who is the president of the USA’, ‘how old is Emma Watson’, etc.

- Navigational. A searcher wants to visit a specific website or place on the Internet. The query looks like ‘netpeaksoftware’, or ‘Facebook’.

- Transactional. A user wants to complete a transaction, such as a purchase, for instance, ‘tickets for Joker movie’.

- Commercial. A person researches before buying: looks for the name of a product, compares brands, or checks the prices.

- Local query. A searcher wants to find something near them, such as a cafe, doctor, parking lot, etc.

The semantic core of a site can vary in size, from small lists of 10-100 keywords to tens or hundreds of thousands of keywords, depending mainly on the website size. To compose the semantic core for your site in order to increase web traffic, you need to select keywords thoroughly and then distribute them across the site. Keyword selection is one of the key features of the Serpstat service.

The semantic core usually comprises high-frequency and low-frequency queries. It’s a common mistake to fill the semantic core only with high-frequency keywords.

There are two reasons to include low-frequency queries as well:

- it may be tough to rank for high-frequency queries in a highly competitive niche (for instance, if your competitor is Amazon, God bless you)

- if you promote in a very specific, narrowly focused niche and understand the details of the offered product or service, low-frequency queries will help you reach your audience

To compose a semantic core, first determine the main areas that need to be promoted, then select the keywords. If the site is large, collecting keywords can take a while. Use services such as Serpstat, Keycollector, etc.

The next step is clustering the semantic core to increase website traffic. Group your keywords based on the analysis of search results from Google and other services. Clustering helps save time, divide keywords into groups, and avoid keyword stuffing on one page.
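One simple way to picture clustering by search results is to put keywords into the same group when their top results overlap. The sketch below is a deliberately naive greedy version of that idea, not how Serpstat or any other service does it; the `SERPS` data, the URLs in it, and the `min_shared` threshold are made-up assumptions.

```python
# Hypothetical top results per keyword; in practice this data would come
# from a rank-tracking or SERP-scraping service.
SERPS = {
    "buy running shoes": {"a.com/shoes", "b.com/running", "c.com/shop"},
    "running shoes online": {"a.com/shoes", "b.com/running", "d.com/store"},
    "how to clean running shoes": {"e.com/guide", "f.com/tips", "a.com/care"},
}


def cluster_keywords(serps, min_shared=2):
    """Greedily group keywords that share at least min_shared result URLs
    with the first keyword of an existing cluster."""
    clusters = []
    for keyword, urls in serps.items():
        for cluster in clusters:
            seed_urls = serps[cluster[0]]
            if len(urls & seed_urls) >= min_shared:
                cluster.append(keyword)
                break
        else:
            # No existing cluster was similar enough, so start a new one.
            clusters.append([keyword])
    return clusters


if __name__ == "__main__":
    for group in cluster_keywords(SERPS):
        print(group)
```

Each resulting group is a candidate for one landing page, which helps avoid stuffing unrelated keywords into a single page.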

We know that keyword research and analysis is a whole different story. That’s why we have prepared a beginner-friendly guide for you that will help you dig further.

Optimize content

It can be quite difficult to figure out how to drive traffic to a website if its current content doesn’t meet the target audience’s needs well. Users should benefit from reading your content. It must be unique, relevant, and well structured.

So, how to generate traffic to your website with the help of content? Start with text optimization. Put the main keywords you want to rank for in the crucial places: title, description, and h1. Keywords should be placed naturally. Otherwise, search engines will perceive this as spam and lower your rankings or, even worse, impose a penalty for spammy content. Apart from being punished for spammy content, you can be penalized for low-quality content. Google created the Panda algorithm to catch sites that abuse low-quality content.

Such content is often called thin. To learn more about how to identify it and fix the issues it may cause, check out our instructions.

Duplicate content is another sin that can anger Google and hinder getting traffic to your website. As we’ve mentioned, duplicates are identical or near-identical content within one or several domains. Sometimes, the reason for duplicate content lies beyond obvious copy-pasting. There may be technical issues such as incorrectly set redirects from HTTP to HTTPS, additional GET parameters and UTM tags in the URL, changes in website structure, etc.
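The technical duplicates mentioned above (HTTP vs. HTTPS, UTM tags, extra parameters) can often be spotted by normalizing URLs and seeing which ones collapse into the same address. The following is a sketch under explicit assumptions: the site is meant to be served over HTTPS, trailing slashes do not matter, and only `utm_*` parameters are treated as tracking noise. The function names and URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: parameters with these prefixes never change page content.
TRACKING_PREFIXES = ("utm_",)


def normalize_url(url: str) -> str:
    """Reduce a URL to a comparable form: https scheme, lowercase host,
    no trailing slash, no tracking parameters, sorted query string."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    query.sort()
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(query), ""))


def group_duplicates(urls):
    """Map each normalized URL to the original URLs that collapse into it,
    keeping only groups with more than one member."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize_url(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


CRAWLED_URLS = [
    "http://example.com/shoes/?utm_source=news",
    "https://example.com/shoes",
    "https://example.com/hats",
]

if __name__ == "__main__":
    print(group_duplicates(CRAWLED_URLS))
```

Groups found this way are candidates for a redirect or a canonical tag, depending on which version you want indexed.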

To learn how to quickly spot and fix the duplicate content issue on your website, read our beginner-friendly guide.

Structure your text. Text broken into headings (h1-h3), paragraphs, and lists is consumed easily by people and search engines.

Proceed with image optimization. Search bots still have a limited understanding of image content, but they do understand the alternative text (ALT). This text is also displayed when the picture is broken or unavailable for some other reason.
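A quick way to find images that need attention is to list every `<img>` whose `alt` attribute is missing or empty. The sketch below does that with Python's standard `html.parser`; the sample markup and the helper `find_images_without_alt` are hypothetical. Keep in mind that an empty `alt` can be intentional for purely decorative images, so the output is a review list, not a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> with a missing or empty alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attr_map.get("src") or "(no src)")


def find_images_without_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical page fragment used only for illustration.
SAMPLE_IMAGES = """
<img src="/img/red-sneakers.png" alt="Red running sneakers, side view">
<img src="/img/banner.jpg">
<img src="/img/logo.svg" alt="">
"""

if __name__ == "__main__":
    print(find_images_without_alt(SAMPLE_IMAGES))
```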

While image optimization can seem intimidating, it often takes only a useful guide and some time to figure it out.

Do competitive content analysis. It’ll give you insights into missed optimization opportunities you can use to improve your content and show which competitor pages drive traffic and which keywords they rank for. To see your competitors’ top pages, go to Serpstat. You can also read our introduction to competitor SEO analysis to get a grasp of it quicker and eventually increase traffic on the website.

Off-page optimization

To figure out how to increase web traffic, you need to focus on off-page SEO too. Backlinks are the focal point of off-page SEO. These are hyperlinks from other sites, blogs, and social networks to the pages on your website. Backlinks vouch for your content’s quality across the broad internet landscape. There are three types of backlinks:

- ‘Natural’ links are editorially given when someone likes your content and wants to share it.

- Manual links are part of link-building activities. Creating good content comes first; the next step is to amplify it throughout the web. This implies agreeing with the owners of other sites on the placement of links to your website.

- Self-created links. Google frowns upon such links and considers them a part of black hat practices.

To discover more about backlinks and learn how to work with them efficiently, check out our article on them.

Apart from link building, there is non-link-related off-site SEO that can also help increase traffic to website. It includes:

- Social media marketing – any social platform like Facebook, Twitter, or Instagram gives you access to a vast number of potential visitors or customers for your site. Social activity can attract a new audience and build brand awareness.

- Guest blogging – contributing to blogs in your niche is one of the best ways to speak directly to your target audience. It takes time and research to figure out, but the process can become much quicker if you read our guest blogging guide with outreach tips.

- Linked and unlinked brand mentions – these also build brand awareness.

- Influencer marketing – brand promotion via the influencer / opinion leader in your field.

How to track changes

After the optimization process is completed, you should measure whether the implemented changes bring tangible results. What metrics should you focus on at the outset to figure out how to get traffic for your website?

- Engagement – metrics that track visitors' behavior once they reach your website. They include:

  - conversion rate – the share of visits that end in a conversion (end goal). A conversion can be anything you define as a goal: subscription, purchase, sign-up, etc.

  - time on page – the time users spend on a page. This metric is evaluated for each business individually. If you have a blog with long texts, 10 seconds on a page is a bad sign.

  - bounce rate – the share of sessions in which a person visited your site and shortly after ‘bounced’ back to the search results.

  - click depth – how deep visitors go into the site. If you have an online store and your goal is to bring people deeper (into the categories, subcategories, etc.), this metric makes sense for you.

- Traffic – gauge how much traffic you get from organic search.
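To show how the engagement metrics above relate to raw visit data, here is a small worked sketch. The `Session` structure and the sample numbers are invented for illustration, and a "bounce" is assumed to mean a single-page session, which is a common simplification; analytics tools may define it differently.

```python
from dataclasses import dataclass


@dataclass
class Session:
    pages_viewed: int       # how many pages the visitor opened
    seconds_on_site: float  # total time spent during the visit
    converted: bool         # whether the visit ended in a goal


def engagement_summary(sessions):
    """Compute conversion rate, bounce rate, and average visit duration."""
    total = len(sessions)
    if total == 0:
        return {"conversion_rate": 0.0, "bounce_rate": 0.0, "avg_seconds": 0.0}
    conversions = sum(1 for s in sessions if s.converted)
    bounces = sum(1 for s in sessions if s.pages_viewed == 1)
    return {
        "conversion_rate": conversions / total,
        "bounce_rate": bounces / total,
        "avg_seconds": sum(s.seconds_on_site for s in sessions) / total,
    }


# Made-up sample of four visits.
SAMPLE_SESSIONS = [
    Session(pages_viewed=1, seconds_on_site=8, converted=False),
    Session(pages_viewed=3, seconds_on_site=120, converted=True),
    Session(pages_viewed=1, seconds_on_site=5, converted=False),
    Session(pages_viewed=5, seconds_on_site=300, converted=False),
]

if __name__ == "__main__":
    print(engagement_summary(SAMPLE_SESSIONS))
```

With this sample, one visit in four converts and two in four bounce, so the conversion rate is 25% and the bounce rate is 50%.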

How to gauge all these metrics and drive traffic to a website as well as get traffic insights? First, you can use Google Analytics data to get detailed statistics. It is one of the most effective ways to evaluate your optimization efforts. Here are some basic things you can track in GA:

- Traffic to your site over time – you can see total users / pageviews / sessions during a specified date range.

- Click-through rate (CTR) – this is the percentage of people who clicked on your page in the search results. To track any activity or specific triggers on your website, deploy tracking pixels via the Google Tag Manager tool.

- Traffic from a particular campaign. For instance, Black Friday is nearing and you want to track the overall conversions made during this campaign. If so, you’ll probably need UTM (Urchin Tracking Module) parameters that you append to the end of the URL.
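For the campaign case, UTM tagging simply means adding a few query parameters to the link you share. Below is a minimal sketch using the standard `utm_source`, `utm_medium`, and `utm_campaign` parameters; the helper name `add_utm`, the landing page URL, and the campaign values are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Append UTM parameters to a URL while keeping its existing query string."""
    parts = urlsplit(url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


if __name__ == "__main__":
    # Hypothetical Black Friday newsletter link.
    print(add_utm("https://example.com/sale?ref=home", "newsletter", "email", "black_friday"))
```

Using consistent values for the same campaign across all links keeps the resulting reports easy to read.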

Check website traffic for improved organic search visibility

Now you know how to increase traffic to your website. However, driving traffic to a website is just part of the process. It is also important to analyze it regularly to ensure efficient SEO performance and organic search visibility.

To achieve this, use Netpeak Checker, a free smart tool that quickly scans traffic and offers valuable insights. Here is how to do this.

1. Open Netpeak Checker. Start by opening up the Netpeak Checker tool.

2. Find Settings. Head over to the settings section of the tool to adjust the parameters required for your analysis.

3. Choose Services. At this step, you can select specific services, both free and paid, to gather all the stats you need.

4. Select Free Services. Use our preset template, 'All Free,' to activate all the available free services easily.

5. Add API. Integrate APIs to receive daily insights on your website visits. Use Serpstat Profile with Lite or higher subscription, SimilarWeb API Management page with PRO subscription, Ahrefs OpenApp with paid subscription, and SEMrush with API access subscription. Then, paste your API keys into Netpeak Checker to fetch data from these services.

6. Choose Data You Need. Customize the analysis by selecting the data points and parameters that are important to you.

7. Start Scan. Initiate the scan to get an estimate of your website traffic using the settings and services you've chosen.

Check URLs for SEO parameters with Netpeak Checker

Another important thing besides boosting traffic and analyzing it is the URL check. Checking your links for SEO parameters can help you improve the quality of your SEO strategy and also contribute to improving the website’s performance.

Netpeak Checker is one of the most efficient and user-friendly options for that. The tool supports integration with 25 top services, such as Serpstat, Ahrefs, and Google Analytics, to scan your URLs for over 450 various parameters. It allows bulk checks, supports proxies, and auto-solves the CAPTCHAs to help you obtain the necessary information as quickly as possible.

Try Netpeak Checker to see for yourself how efficient it can be for boosting your SEO and increasing your website traffic!

Conclusion

Hope this hefty chapter gave you the gist of what the website optimization process looks like and how to get traffic on your website. Go through it regularly until it becomes a daily routine for you, and dive deeper into the details to sharpen up your act.

The most critical aspects of getting traffic to a website are:

- Technical performance of your site – the ‘shadow’ side of your website that establishes the whole game rules

- Loading speed – deliver information lightning-fast and you won’t see your audience leave you for your competitors

- Semantic core – cluster keywords to precisely define the idea and theme of your site

- Website content – should be relevant and concise, with a moderate number of keywords and sufficient topic coverage

- Off-page SEO activities – go beyond the realm of your website to build brand awareness and drive audience

- Measure your optimization efforts – track the implemented changes to see decreases and increases in traffic to the website and whether your efforts bring tangible results