75 Web Scraping Examples that Will Save Your Time

Use Cases

Web scraping (searching for and extracting data from HTML pages) has long been a go-to tool for digital marketers and analysts who need to collect massive data sets for further research. I decided not to dig into the technical side of how to crawl data from a website, but to look at why and when it comes in handy.

There’s an endless number of web-scraping use cases from price monitoring to content analysis. I'm going to fire up Netpeak Spider and Netpeak Checker tools to show you how to execute 75 real-life scraping cases.

P.S. Right after signup, you'll also have the opportunity to try all paid functionality and then compare all our plans and pick the one most suitable for you.

💥 As an awesome bonus, I’ve collected a cheat sheet with scraping parameters for the most popular sites like Google, Amazon, Facebook, etc.

- Ecommerce

- Search Engines: Google, Bing, Yahoo

- Social Media: Facebook, Youtube, Instagram, LinkedIn, Pinterest, Twitter

- Contact Information

- Content

- Amazon

- Wikipedia

- Whois

- SimilarWeb

- Specific Code Pieces on Websites or Separate Pages

- Business Directories: Yelp, Yellow Pages, BBB

- Digital Distribution Services: Google Play Store, AppStore, Steam, etc.

- Reddit and Hacker News

- Quora

- WikiHow

- G2 Crowd

- Stack Exchange

Use the detailed table of contents below to jump straight to the scraping case that you need.

- Ecommerce

- 1. Price

- 2. Product Range

- 3. Product Description and Characteristics

- 4. Product Images

- 5. Product Availability

- 6. Product Ratings and Reviews

- 7. Discount Price and Discount Percentage

- 8. Special Offer Dates

- 9. Available Product Variations

- 10. Delivery Options

- Search Engines: Google, Bing, Yahoo

- 11. SERP Scraping

- 12. Content of Snippets in SERP

- 13. Featured Snippet

- 14. Paid Search Results

- 15. People Also Ask and Searches Related To

- 16. Positions in Organic Search

- Social Media: Facebook, Youtube, Instagram, LinkedIn, Pinterest, Twitter

- 17. Results for a Target Query

- 18. Number of Likes, Dislikes, and Comments

- 19. Number of Views

- 20. Number of Publications

- 21. Number of Subscribers

- 22. Business Page Description

- Contact Information

- 23. Emails and Phone Numbers

- 24. Employee Names and Positions

- 25. Links to Social Media

- 26. Business Addresses

- 27. Contacts from Structured Data

- Content

- 28. Typos in Text

- 29. Author Names

- 30. Content Size

- 31. Meta Tags

- 32. Content Popularity Metrics

- Amazon

- 33. Product Details

- 34. Product Reviews

- Wikipedia

- 35. List of Sources

- 36. Verification Requests

- 37. Infoboxes

- Whois

- 38. Domain Creation and Expiration Dates

- 39. Domain Registrar Email

- SimilarWeb

- 40. Traffic Size

- 41. Average Visit Duration and Pages Per Visit

- 42. Traffic Sources

- Specific Code Pieces on Websites or Separate Pages

- 43. List of Used Technology and Scripts

- 44. Schema Markup

- 45. Hreflang

- 46. Google Analytics and Google Tag Manager

- 47. Open Graph and Twitter Cards

- Business Directories: Yelp, Yellow Pages, BBB

- 48. List of Local Businesses

- 49. NAP

- 50. Business Rating

- 51. List of Reviewers

- 52. Reviews

- Digital Distribution Services: Google Play Store, AppStore, Steam, etc.

- 53. Relevant Products

- 54. Product Statistics

- 55. Technical Characteristics

- 56. User Reviews

- Reddit and Hacker News

- 57. Number of Comments and Upvotes

- 58. Follower Count

- 59. List of Posts

- 60. List of Moderators and Their Activity

- 61. Active Users

- 62. Redditors’ Info From Snoopsnoo

- Quora

- 63. List of Questions

- 64. Number of Views, Answers, and Followers

- 65. Top Responders

- 66. Answer Wiki Presence

- WikiHow

- 67. Target Articles and Questions

- 68. Article Stats

- 69. Last Update Date

- G2 Crowd

- 70. List of Relevant Products

- 71. Ratings and Review Count

- 72. Product Reviews

- 73. List of Reviewers

- Stack Exchange

- 74. Relevant Questions

- 75. Question Parameters

Ecommerce

Ecommerce is one of the most popular areas for web scraping, where it’s mostly used for product data collection from competitors’ or suppliers’ websites.

The number of parameters you can scrape from Ecommerce sites is limited only by the specificity of each site. Here’s the list of key metrics almost all sites display.

1. Price

Price is probably the first thing that comes to mind in terms of web-scraping. Price scraping is useful for monitoring and comparing competitors’ prices with prices on your site. For example, you could run an Adwords campaign for products that are cheaper on your site. We recommend that you read the article on how to scrape prices from a website.
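As a rough illustration of what price scraping boils down to, here is a minimal Python sketch. The HTML fragment, the `price` class name, and the `extract_price` helper are assumptions made up for this example; real stores use different markup, and this is not how Netpeak Spider works internally.

```python
import re
from decimal import Decimal

# Hypothetical product-page fragment; real markup differs from site to site.
SAMPLE_HTML = '<div class="product"><span class="price">$1,299.99</span></div>'

# Assumed pattern: the price is the text of an element with class="price".
PRICE_RE = re.compile(r'class="price"[^>]*>\s*([^<]+)<')


def extract_price(html):
    """Return the first price found as a Decimal, or None if there is none."""
    match = PRICE_RE.search(html)
    if match is None:
        return None
    # Keep digits and the dot; drop currency signs and thousands separators.
    # This assumes a dot is used as the decimal separator.
    digits = re.sub(r"[^\d.]", "", match.group(1))
    return Decimal(digits) if digits else None


print(extract_price(SAMPLE_HTML))
```

Run against a list of competitor URLs, a function like this gives you a column of prices you can compare with your own.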

2. Product Range

Product range can be the number of products under a certain category or filter, or all products on a website. Scraping product names (IDs) and counts gives you a clear picture of your own or competitors’ product range. It also helps identify thin product listing pages that you need to work on.

3. Product Description and Characteristics

Product description is the most significant content part of a product page. For correct optimization, this text should be sizable and unique. Why would you scrape product descriptions from other sites? To analyze the content and set clear requirements for copywriters so they can create unique descriptions for your site.

4. Product Images

High-quality images are the main component of a successful Ecommerce website. If for some reason you don’t have unique photos of some products on your site, you can temporarily borrow them from the supplier’s website. Using scraping, you can quickly collect links to product images.

5. Product Availability

Scraping allows collecting a list of products that are out of stock on competitors’ sites. As an example, you could run an ad campaign promoting them on your site.

6. Product Ratings and Reviews

Ratings and reviews are other essential elements of success in the Ecommerce niche. Scraping and further analysis of this data allow finding the most popular and sought-after products. You can also collect customer reviews for more in-depth analysis (e.g., sentiment analysis).

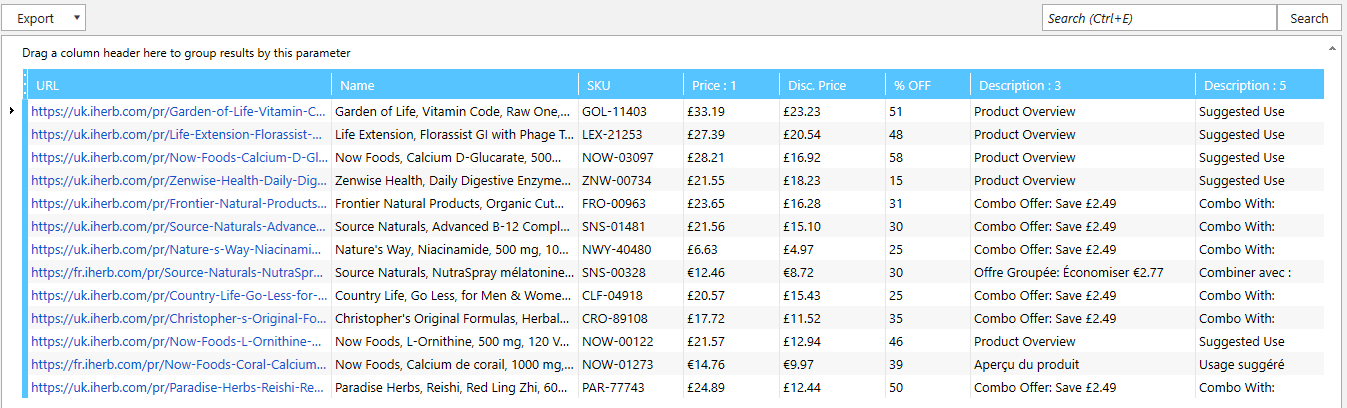

7. Discount Price and Discount Percentage

Typically, most of the Ecommerce sites show two types of prices – gross price and discount price. Scraping of discount prices and discount percentages allows monitoring competitors’ pricing policy and strategy.
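When a site shows both prices but not the percentage, you can derive it yourself after scraping. A small sketch, assuming you already have the two prices as numbers or strings (the `discount_percent` name is just for illustration):

```python
from decimal import Decimal, ROUND_HALF_UP


def discount_percent(regular_price, discount_price):
    """Percentage taken off the regular price, rounded to one decimal place."""
    regular = Decimal(str(regular_price))
    discounted = Decimal(str(discount_price))
    if regular <= 0:
        raise ValueError("regular price must be positive")
    percent = (regular - discounted) / regular * 100
    return percent.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


print(discount_percent(200, 150))
```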

8. Special Offer Dates

Try scraping the dates when special offers take place. It might be a whole category named ‘Discount’, ‘Sale’, ‘Special offer’, etc. By monitoring competitors’ special offers, you can plan your own campaigns more efficiently.

9. Available Product Variations

Scraping lets you run a detailed analysis of available product sizes, colors, or other variations on competitors’ websites. Knowing this data helps you identify your strengths and weaknesses against your competitors.

10. Delivery Options

Scraping of delivery options, dates, prices from competitors’ sites allows creating a more advantageous offer. It’s especially useful when competitors have multiple delivery options.

Search Engines: Google, Bing, Yahoo

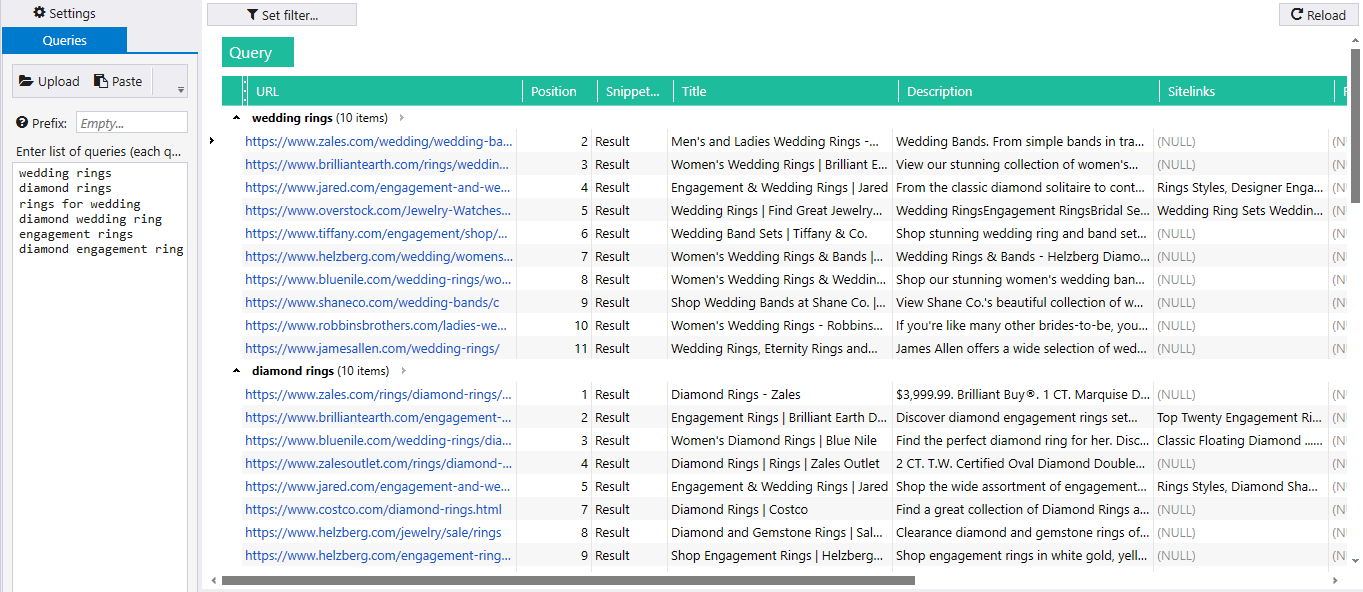

Search engine scraping for a list of queries is used in competitive and niche analysis, searching for potential clients or dropped domains, SERP analysis, and many other interesting tasks.

Using Netpeak Checker, you can extract comprehensive data on search results taking into account location, language, and other search settings. Some tasks can be completed in Netpeak Spider.

11. SERP Scraping

SERP scraping allows extracting search engine results for a list of search queries. Next, you can analyze the results by SEO parameters, scrape their contacts, or track changes. Scraping provides real-time data that is vital for organic results and niche analysis.

12. Content of Snippets in SERP

Scraping snippet content (title, meta description, ratings, site links) of top pages in organic search results. This data is essential for niche research, and understanding what content search engines prefer for the corresponding search term.

13. Featured Snippet

Featured snippet (also known as a position zero result) is a piece of content from one of the search results that Google finds the most relevant for the search query. Scraping allows checking a list of search queries for the presence of the featured snippet and extracting its content.

14. Paid Search Results

Paid search can occupy up to four results from both the beginning and end of a search results page. Using scraping, you can collect paid search results for queries from your list and their snippets.

15. ‘People Also Ask’ and ‘Searches Related To’

Google displays two types of blocks with related searches – ‘People also ask’ somewhere in the middle of search results and ‘Searches related to’ at the very end. You can scrape all the related searches from these blocks to find similar keywords and topics.

16. Positions in Organic Search

SERP scraping is perfect for position tracking. There are multiple position tracking services, but if you don’t need to check lots of keywords on a daily basis, custom SERP scraping will suit you.
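Once you have the result URLs for a query in SERP order, finding your position is a simple lookup. A minimal sketch, assuming the list of URLs was already scraped (the URLs and the `organic_position` helper are hypothetical):

```python
from urllib.parse import urlparse


def organic_position(result_urls, domain):
    """Return the 1-based position of the first result on `domain`, or None."""
    domain = domain.lower()
    for position, url in enumerate(result_urls, start=1):
        host = (urlparse(url).hostname or "").lower()
        # Match the domain itself and any of its subdomains (www., blog., ...).
        if host == domain or host.endswith("." + domain):
            return position
    return None


# Hypothetical scraped results for one query, in SERP order.
results = [
    "https://en.wikipedia.org/wiki/Web_scraping",
    "https://www.example.com/blog/web-scraping-guide",
    "https://another-site.test/scraping",
]
print(organic_position(results, "example.com"))
```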

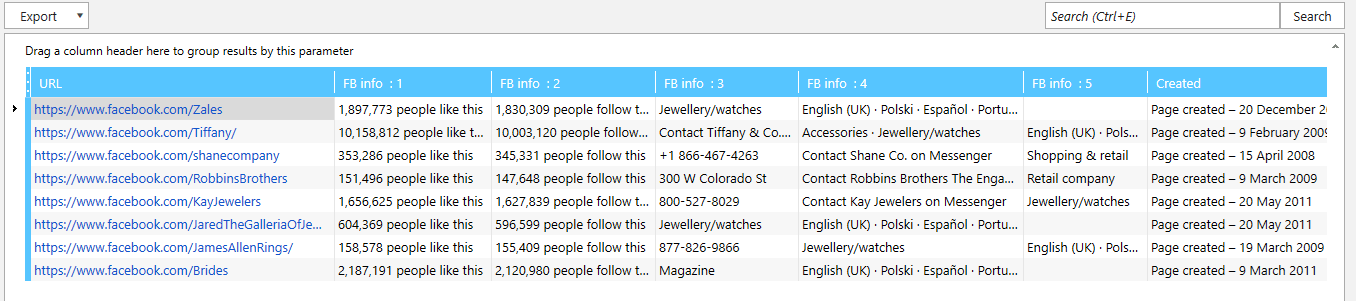

Social Media: Facebook, Youtube, Instagram, LinkedIn, Pinterest, Twitter

All of us interact with social media one way or another. They store and present lots of valuable data that can be collected through scraping.

Web scraping for marketing will be beneficial for digital marketers, sales specialists, and brand managers who want to analyze social pages for further collaboration.

17. Results for a Target Query

Social media are basically search engines of some sort. All of them have an internal search, which means we can collect the results for target queries. It significantly boosts niche analysis and allows you to identify competitors for further research.

18. Number of Likes, Dislikes, and Comments

Likes and comments are key audience engagement metrics that define how popular the community, business page, or channel is. Bulk analysis of user engagement is essential if you want to find the best fit for collaboration or advertising. It also allows tracking competitors’ activity and growth in social media.

19. Number of Views

Number of views is another metric that defines the demand for particular content. Scraping allows collecting the view count of various publications to find the most popular content or analyze competitors’ content.

20. Number of Publications

Automated web scraping of the publication count for a list of accounts allows you to track how many content pieces your competitors normally produce per certain period. This data is extremely useful for competitive analysis.

21. Number of Subscribers

Scraping can also automate subscriber count collection for a list of pages or channels to evaluate their reach. Regular monitoring allows tracking growth rates of your competitors and the whole niche.

22. Business Page Description

You can scrape public information from business pages: contacts, address, pricing, and other data present in the company’s business profile.

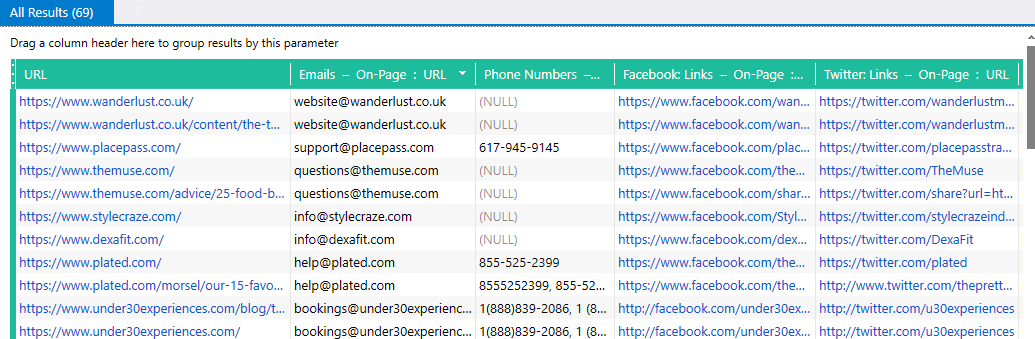

Contact Information

Contact scraping is a widespread use case. If you want to quickly collect contacts of potential customers or websites for further outreach – scraping is what you need.

Using Netpeak Checker, you can automatically scrape phone numbers, emails, and links to social media pages from a list of pages. If you need to check the whole site, you can use custom scraping in Netpeak Spider.

FYI, you can scrape contact information in Netpeak Checker absolutely for free. This feature is included in the Freemium plan 💚

23. Emails and Phone Numbers

Netpeak Checker lets you automatically extract the emails and phone numbers available on a page. You can also scrape the whole site for emails and phone numbers using regular expressions.
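To give an idea of what such regular expressions can look like, here is a small Python sketch. Both patterns are deliberately loose assumptions for illustration (they are not the expressions Netpeak tools use) and will need tuning for the phone formats you expect.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Loose phone pattern: optional "+", a digit, at least five more digits or
# common separators (spaces, dots, hyphens, parentheses), and a final digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{5,}\d")


def extract_contacts(text):
    """Return (emails, phones) found in the text, deduplicated and sorted."""
    emails = sorted(set(EMAIL_RE.findall(text)))
    phones = sorted({match.strip() for match in PHONE_RE.findall(text)})
    return emails, phones


sample = "Contact us: sales@example.com or support@example.com. Call +1 (555) 010-9999."
print(extract_contacts(sample))
```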

24. Employee Names and Positions

Many companies have special pages showcasing their employees. You can scrape their info for recruiters or sales specialists.

25. Links to Social Media

Using Netpeak Checker, you can automatically collect links to Facebook, Twitter, Youtube, Pinterest, LinkedIn, and Instagram, from a list of pages.
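If you wanted to do the same by hand, the idea is to collect every link on a page and keep those that point to social networks. A sketch using only the Python standard library; the domain list and the sample markup are assumptions for this example:

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

SOCIAL_DOMAINS = {"facebook.com", "twitter.com", "youtube.com",
                  "pinterest.com", "linkedin.com", "instagram.com"}


class SocialLinkParser(HTMLParser):
    """Collects href values of <a> tags that point to known social domains."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        host = (urlparse(href).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host in SOCIAL_DOMAINS:
            self.links.append(href)


def social_links(html):
    parser = SocialLinkParser()
    parser.feed(html)
    return parser.links


page = ('<a href="/about">About</a>'
        '<a href="https://www.facebook.com/acme">FB</a>'
        '<a href="https://twitter.com/acme">TW</a>')
print(social_links(page))
```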

26. Business Addresses

You can scrape physical addresses from a list of websites. For example, if your competitors are retail chains, you can collect the addresses of their local outlets.

27. Contacts from Structured Data

The Schema.org vocabulary lets businesses provide search engines with structured data about their address, contact info, business hours, and other essential information. Subsequently, you can scrape all this data.
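Structured data is often embedded as JSON-LD inside a `<script type="application/ld+json">` tag, which makes it easy to read. Below is a minimal sketch that pulls such blocks out of a page. The sample markup and the company details are invented for the example, and sites that use Microdata or RDFa instead would need a different approach.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collects parsed JSON-LD blocks found in <script> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # skip malformed blocks


# Invented example markup.
SAMPLE_HTML = """
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Organization",
 "name": "Acme", "telephone": "+1-555-010-9999",
 "address": {"@type": "PostalAddress", "addressLocality": "Chicago"}}
</script>
"""

parser = JsonLdParser()
parser.feed(SAMPLE_HTML)
org = parser.items[0]
print(org["name"], org["telephone"], org["address"]["addressLocality"])
```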

Content

Scraping is extremely popular in content marketing and content analysis. The thing is that scraping automates the collection of all the critical content parameters and significantly eases content analysis.

28. Typos in Text

Custom scraping using regular expressions allows automating the detection of common mistakes or outdated brand and product names. This way, you can find all pages containing specific words with mistakes.
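For instance, a watchlist of known misspellings and retired names can be compiled into a single regular expression and run over the text of every page. The watchlist entries, URLs, and the `find_issues` helper below are hypothetical:

```python
import re

# Hypothetical watchlist: misspelling or outdated name -> preferred form.
WATCHLIST = {"recieve": "receive", "seperate": "separate", "OldBrand": "NewBrand"}
PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, WATCHLIST)) + r")\b")


def find_issues(pages):
    """pages maps URL -> page text; returns URL -> sorted list of found terms."""
    report = {}
    for url, text in pages.items():
        found = sorted(set(PATTERN.findall(text)))
        if found:
            report[url] = found
    return report


pages = {
    "https://example.com/a": "You will recieve OldBrand updates.",
    "https://example.com/b": "All good here.",
}
print(find_issues(pages))
```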

Now there’s a built-in spell checker in Netpeak Spider! You can check the spelling on the entire page and separately in the title, description, image alt tags, and H1-H6 headings. In short, the spell checker covers all the important places on your website. And the biggest kicker is that you can use this feature on the Freemium plan.

29. Author Names

Another remarkable scraping use case in content marketing is the extraction of author names from popular blogs. It can be done for further outreach or adding this data to content analysis to find the most popular and successful authors.

30. Content Size

Scraping allows analyzing content for the number of words, characters, images, videos, headings, etc. This will be helpful for those who:

- want to check and optimize the content on their site

- want to analyze competitors’ content or leading pages in SERP for target queries
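The counting itself is straightforward. Here is a sketch with the standard-library HTML parser that counts words, characters, images, and headings while ignoring scripts and styles. The `ContentStats` class and the sample page are assumptions for illustration; a real audit would also need to separate the main content from menus and footers.

```python
from html.parser import HTMLParser


class ContentStats(HTMLParser):
    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.words = 0
        self.characters = 0
        self.images = 0
        self.headings = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag == "img":
            self.images += 1
        elif tag in self.HEADINGS:
            self.headings += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth:
            return  # text inside <script>/<style> is not content
        text = " ".join(data.split())  # collapse runs of whitespace
        self.words += len(text.split())
        self.characters += len(text)


page = ('<h1>Blue Widget</h1><p>A sturdy widget.</p>'
        '<img src="a.png"><script>var x = 1;</script>')
stats = ContentStats()
stats.feed(page)
print(stats.words, stats.characters, stats.images, stats.headings)
```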

31. Meta Tags

If you want to analyze competitors’ content strategy, you have to collect all their content pieces, including the meta tags. Scraping allows extracting the title tag, meta description tag, H1-H6 headings. Having all the data in one place, you can uncover optimization techniques and check how competitors structure their content.

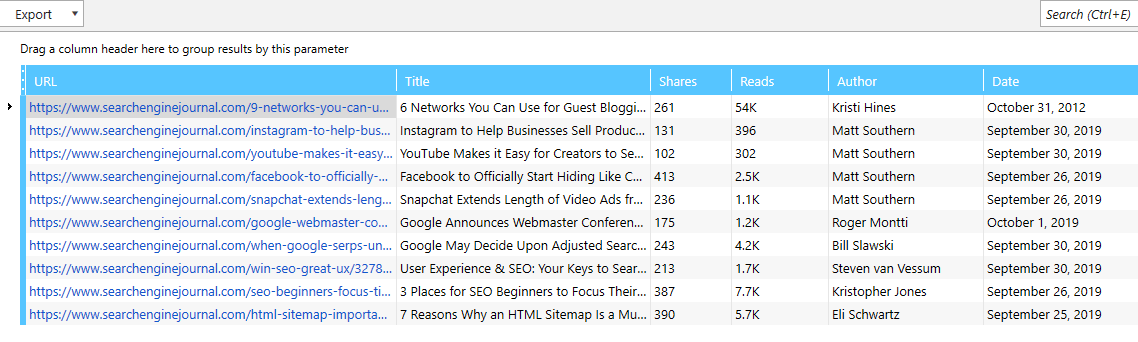

32. Content Popularity Metrics

There are lots of metrics that define content popularity and virality. These include the number of views, likes, upvotes, shares, and comments. Using web scraping, you can collect all public metrics of content pieces to analyze the most popular topics.

Amazon

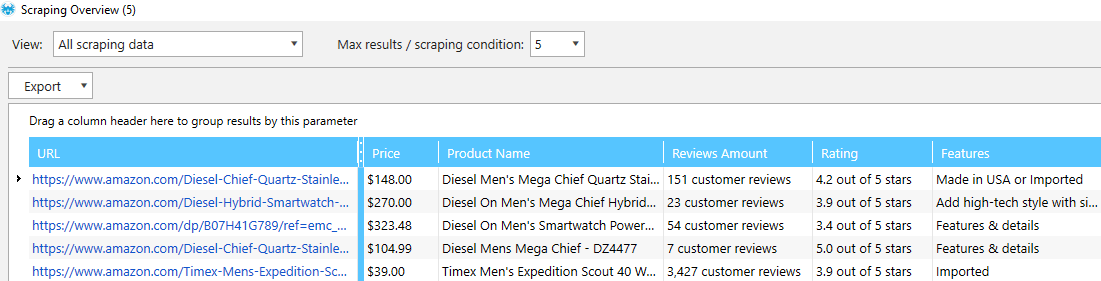

Amazon scraping will mostly resonate with webmasters building niche websites monetized by the Amazon affiliate program.

33. Product Details

There’s a bunch of product data you can scrape from the Amazon product pages: ratings, price, description. Later, you can use it as the basis for product reviews on your site.

34. Product Reviews

You can also scrape Amazon reviews from target product pages. Analyzing hundreds of product reviews allows identifying what words people use to describe specific products. Knowing such insights, you can create content that will better resonate with the target audience and convert.

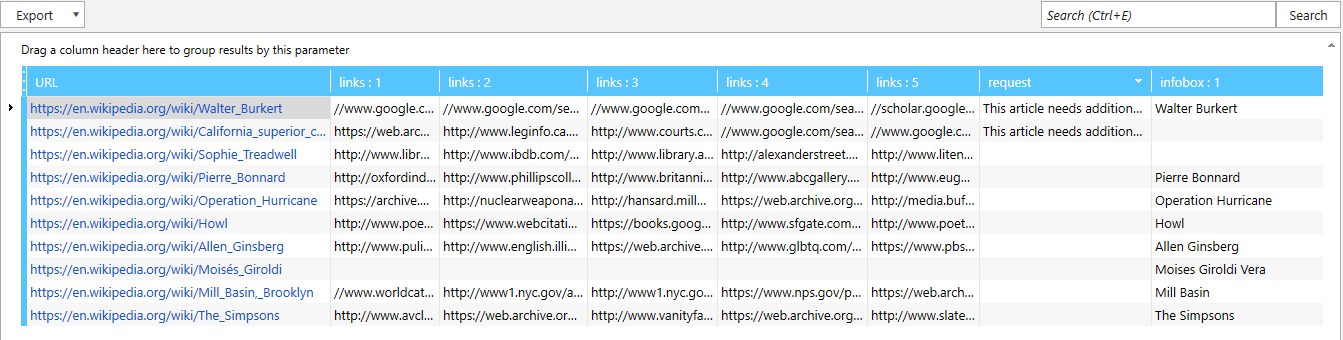

Wikipedia

Wikipedia is the biggest online encyclopedia out there. An abundance of publicly available content gives you lots of scraping opportunities. Wikipedia scraping is especially beneficial in terms of link building and searching for dropped domains.

35. List of Sources

Each article may have links to external sources that are specified in the ‘References’, ‘Further reading’, and ‘External links’ sections. By scraping external links from relevant wiki articles, you can find broken links and dropped domains for further link building.

36. Verification Requests

Scraping allows automating search of relevant articles that require verification and additional citations. You can enlarge them with relevant content and citations to increase your reputation, or specify your website as a data source.

37. Infoboxes

An infobox is a table containing facts about the subject. It’s mainly present in articles about celebrities, places, films, etc. You can scrape infoboxes to get content for articles on your website.

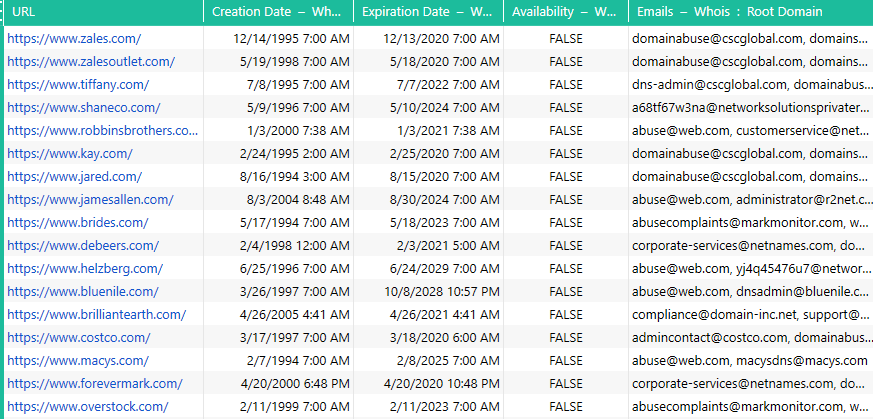

Whois

Whois is a query and response protocol used to retrieve data on domains. You can automate Whois data collection for a list of domains with Netpeak Checker.

38. Domain Creation and Expiration Dates

Whois shows domain registration and expiration dates. Using this data, you can identify domain age and find domains that are going to expire soon or have already expired.
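Raw Whois responses are plain text, so the dates have to be parsed before you can compare them. The sketch below assumes the response contains `Creation Date` and `Registry Expiry Date` lines with ISO-style timestamps. That layout is common, but field names and date formats vary between registries, and the sample record is made up.

```python
from datetime import datetime, timezone

# Made-up Whois response in one commonly seen layout.
SAMPLE_WHOIS = """\
Domain Name: EXAMPLE.COM
Creation Date: 1995-08-14T04:00:00Z
Registry Expiry Date: 2025-08-13T04:00:00Z
"""

DATE_FIELDS = ("creation date", "registry expiry date")


def parse_whois_dates(raw):
    """Return a dict of the date fields found, as timezone-aware datetimes."""
    dates = {}
    for line in raw.splitlines():
        key, _, value = line.partition(":")
        key = key.strip().lower()
        if key in DATE_FIELDS:
            parsed = datetime.strptime(value.strip(), "%Y-%m-%dT%H:%M:%SZ")
            dates[key] = parsed.replace(tzinfo=timezone.utc)
    return dates


def days_until_expiry(dates, now):
    """Whole days left before expiry; negative if the domain already expired."""
    return (dates["registry expiry date"] - now).days


dates = parse_whois_dates(SAMPLE_WHOIS)
print(days_until_expiry(dates, datetime(2025, 8, 1, 4, 0, tzinfo=timezone.utc)))
```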

39. Domain Registrar Email

Another useful thing you can scrape from Whois is the domain registrar’s email. It’s usually used to report abuse.

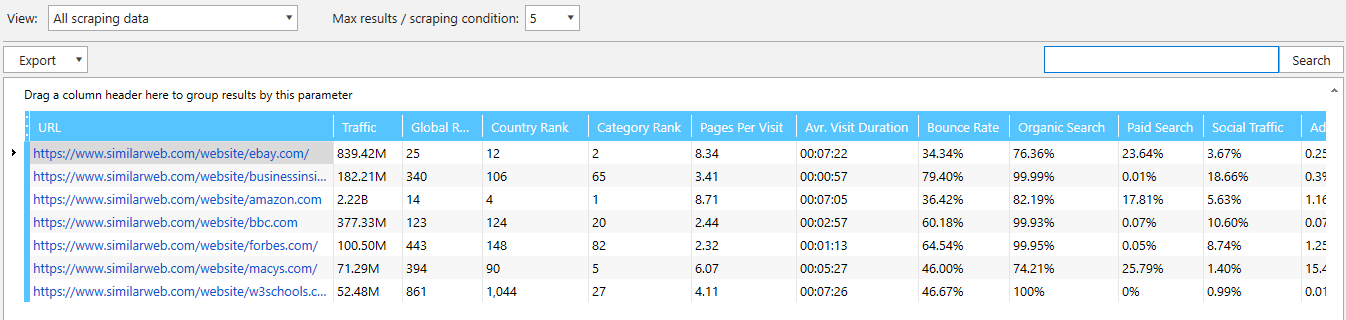

SimilarWeb

SimilarWeb is a world-famous analytics service that provides traffic data and many other parameters for any website. Those who have the paid version of SimilarWeb can automate data collection through an API integration in Netpeak Checker.

Even without it, custom scraping in Netpeak Spider lets you extract all the key parameters from SimilarWeb for a list of URLs. You will find the corresponding scraping conditions in the cheat sheet.

40. Traffic Size

Traffic analysis is the main feature of SimilarWeb and a key metric of website evaluation. Scraping allows analyzing a list of domains and getting their estimated monthly traffic.

41. Average Visit Duration and Pages Per Visit

SimilarWeb shows an average session duration, number of pages per visit, and a bounce rate of a website. These parameters offer a more detailed understanding of website traffic quality.

42. Traffic Sources

Another great feature is an opportunity to get a traffic source ratio from the main traffic channels. You can also scrape the top 5 countries driving most visits and top 5 referring domains. These metrics are essential for traffic analysis and understanding of competitors’ strategy.

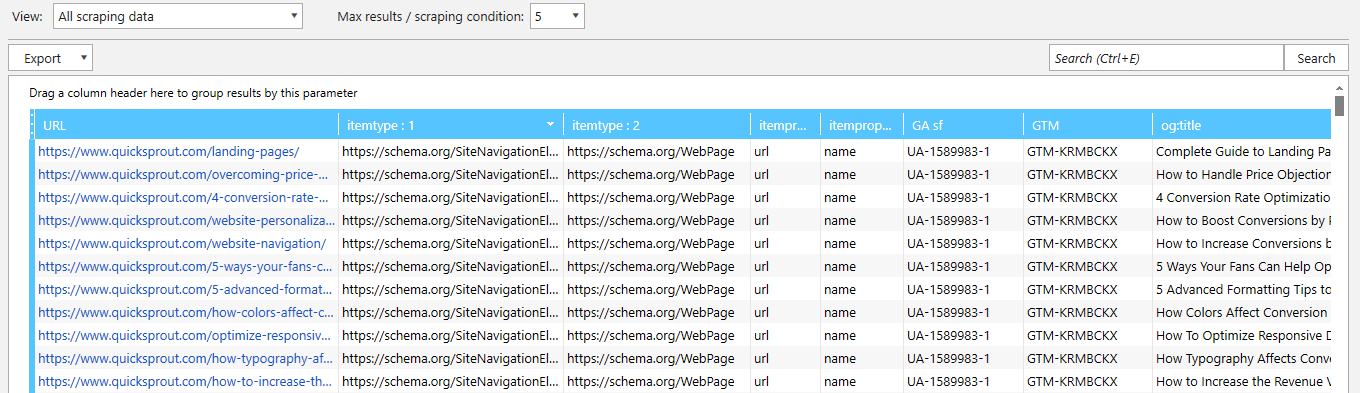

Specific Code Pieces on Websites or Separate Pages

Using scraping, you can check the whole site or a list of sites for specific pieces of code, for example, to understand what technology is being used.

43. List of Used Technology and Scripts

If you want to find out which sites from a list use online chat or any other technology that leaves a trace in the source code, you can check them for the specific code pieces that correspond to each script.
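In its simplest form, such a check is a substring search over the page source. The signature list below is an assumption for illustration only (the chat widget is entirely made up), and real detection usually needs more than one marker per technology.

```python
# Hypothetical signatures: technology name -> substring expected in the HTML.
SIGNATURES = {
    "WordPress": "/wp-content/",
    "jQuery": "jquery",
    "ExampleChat (made-up widget)": "cdn.examplechat.test/widget.js",
}


def detect_technologies(html):
    """Return the sorted names of technologies whose marker appears in the HTML."""
    lowered = html.lower()
    return sorted(name for name, marker in SIGNATURES.items()
                  if marker.lower() in lowered)


page = ('<link href="/wp-content/themes/x/style.css">'
        '<script src="/js/jquery.min.js"></script>')
print(detect_technologies(page))
```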

44. Schema Markup

Schema markup is used to structure and present data to search engines. There are more than 600 types of Schema for different needs. Scraping schema, you can get key data from website pages. For instance, Organization Schema allows specifying a business address, logo, contacts, etc.

45. Hreflang

Hreflang allows setting up multiple language and region page versions for international websites. To check if all tags are set correctly, you can set several scraping parameters and crawl the whole site.
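A basic hreflang check can be sketched as follows: collect all `<link rel="alternate" hreflang="...">` tags from a page and flag obvious problems. The rules here (duplicates, missing `href`, no `x-default`) are a simplified assumption of what "set correctly" means; `x-default` in particular is optional, so treat that line as a warning.

```python
from collections import Counter
from html.parser import HTMLParser


class HreflangParser(HTMLParser):
    """Collects (hreflang, href) pairs from <link rel="alternate"> tags."""

    def __init__(self):
        super().__init__()
        self.alternates = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if "alternate" in rel and attr.get("hreflang"):
            self.alternates.append((attr["hreflang"].lower(), attr.get("href")))


def check_hreflang(html):
    parser = HreflangParser()
    parser.feed(html)
    problems = []
    counts = Counter(lang for lang, _ in parser.alternates)
    for lang, count in sorted(counts.items()):
        if count > 1:
            problems.append(f"duplicate hreflang: {lang}")
    for lang, href in parser.alternates:
        if not href:
            problems.append(f"missing href for: {lang}")
    if "x-default" not in counts:
        problems.append("no x-default version")
    return parser.alternates, problems


page = """
<link rel="alternate" hreflang="en" href="https://example.com/en/">
<link rel="alternate" hreflang="de" href="https://example.com/de/">
<link rel="alternate" hreflang="de" href="https://example.com/de-at/">
"""
alternates, problems = check_hreflang(page)
print(problems)
```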

46. Google Analytics and Google Tag Manager

Incorrectly placed GA or GTM codes are a common source of issues. Scraping allows checking the presence of analytics code on all pages.
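One way to sketch this check is to search each page's source for tracking IDs. The patterns below follow the usual `UA-…`, `G-…`, and `GTM-…` ID shapes, but the exact lengths are assumptions, and the sample pages are invented.

```python
import re

PATTERNS = {
    "ua": re.compile(r"\bUA-\d{4,10}-\d{1,4}\b"),   # Universal Analytics
    "ga4": re.compile(r"\bG-[A-Z0-9]{6,}\b"),       # Google Analytics 4
    "gtm": re.compile(r"\bGTM-[A-Z0-9]{4,}\b"),     # Google Tag Manager
}


def find_tracking_ids(html):
    """Return the sorted unique IDs found for each kind of tracking code."""
    return {name: sorted(set(rx.findall(html))) for name, rx in PATTERNS.items()}


def pages_without_tracking(pages):
    """pages maps URL -> HTML; returns URLs where no tracking ID was found."""
    return [url for url, html in pages.items()
            if not any(find_tracking_ids(html).values())]


# Invented, simplified page sources.
pages = {
    "https://example.com/": '<script src="https://www.googletagmanager.com/gtag/js?id=G-ABC123DEF4"></script>'
                            '<script>/* container */ var id = "GTM-AB12CD3";</script>',
    "https://example.com/landing": "<p>No analytics here</p>",
}
print(find_tracking_ids(pages["https://example.com/"]))
print(pages_without_tracking(pages))
```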

47. Open Graph and Twitter Cards

Open Graph and Twitter Cards markups allow specifying the content you want to use while sharing pages in socials. Scraping helps to check the presence and correctness of these meta tags.
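A presence check for these tags might look like the sketch below. It collects `og:*` and `twitter:*` meta tags and reports which of the four properties the Open Graph protocol lists as required (`og:title`, `og:type`, `og:image`, `og:url`) are missing or empty. The sample page and helper names are assumptions for the example.

```python
from html.parser import HTMLParser

REQUIRED_OG = ("og:title", "og:type", "og:image", "og:url")


class SocialMetaParser(HTMLParser):
    """Collects Open Graph and Twitter Card meta tags into a dict."""

    def __init__(self):
        super().__init__()
        self.tags = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        # Open Graph uses the "property" attribute, Twitter Cards use "name".
        key = attr.get("property") or attr.get("name") or ""
        if key.startswith(("og:", "twitter:")):
            self.tags[key] = attr.get("content") or ""


def audit_social_meta(html):
    parser = SocialMetaParser()
    parser.feed(html)
    missing = [name for name in REQUIRED_OG if not parser.tags.get(name)]
    return parser.tags, missing


page = ('<meta property="og:title" content="Blue Widget">'
        '<meta property="og:type" content="product">'
        '<meta name="twitter:card" content="summary">')
tags, missing = audit_social_meta(page)
print(missing)
```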

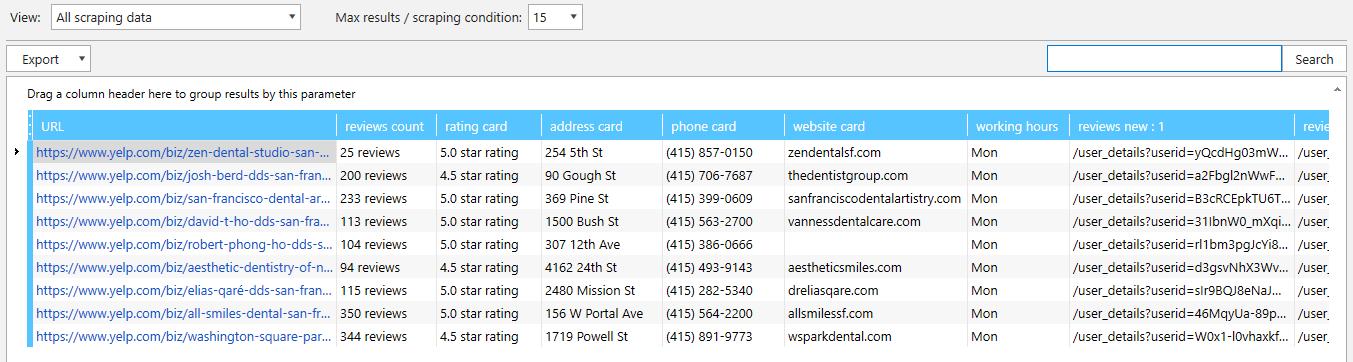

Business Directories: Yelp, Yellow Pages, BBB

Yelp, Yellow Pages, and Better Business Bureau are the most prominent examples of local business directories. In reality, there are dozens of others.

Millions of local businesses add their NAP (name, address, phone number) data, and visitors can leave their feedback. Using scraping, you can automate the collection of local businesses, their contacts, and other valuable data.

48. List of Local Businesses

Want to get a list of local cafes in South Chicago or dentists in Rome? Business directories are a perfect source of such data. Use advanced sorting to get the most precise results and scrape them in a couple of minutes.

49. NAP

NAP data is the basis of any directory. You can easily scrape phone numbers, address, website, business hours, etc. Your sales team will be grateful.

50. Business Rating

Each directory has a custom rating system. You can scrape the business rating and the number of reviews to compare various businesses or their local outlets. Regular scraping allows monitoring of competitors’ growth rates.

51. List of Reviewers

Customers who reviewed your competitors are your perfect target audience. You can scrape their profiles and invite them to your place or provide a special offer.

52. Reviews

Currently, there are more than 190 million local business reviews on Yelp. You’ll need plenty of resources and time to scrape them all, but you can easily scrape your own or competitors’ reviews. This will help identify your strengths and weaknesses.

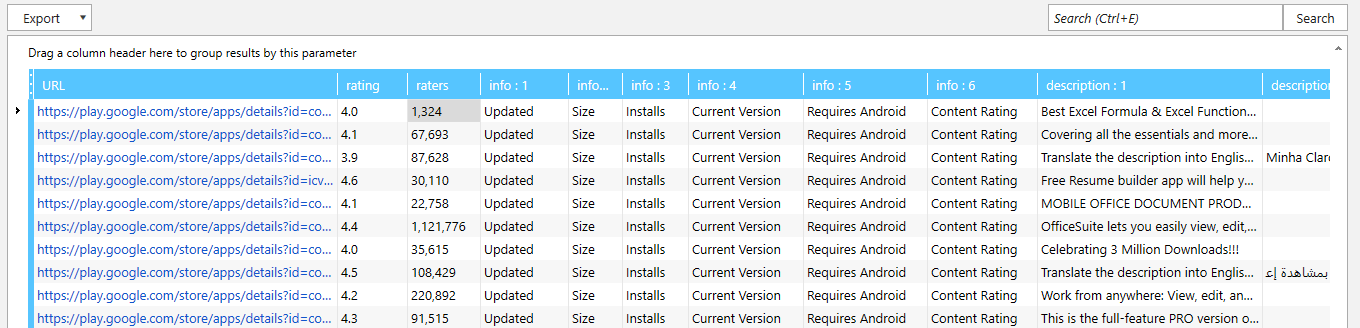

Digital Distribution Services: Google Play Store, AppStore, Steam, etc.

Scraping of digital distribution services will spark the interest of marketers and entrepreneurs working in the app development niche. You can automate competitive analysis and find what people want to see in your app.

53. Relevant Products

Scraping helps to collect a list of relevant products for the target keyword for further analysis. Using filtering, you can extract the most popular or only free products.

54. Product Statistics

After scraping the list of relevant apps, you can collect their stats to compare and find the most popular ones. For example, you can extract the number of downloads, ratings, updates, etc.

55. Technical Characteristics

Another critical piece of data you can extract is the technical description of each product. This could be its size, price, developer, and so on. Afterward, you can compare products to identify their strengths and weaknesses.

56. User Reviews

As with Amazon review scraping, you can extract reviews from your own or competitors’ product pages. User reviews let you run sentiment analysis and back up your SWOT analysis with real data.

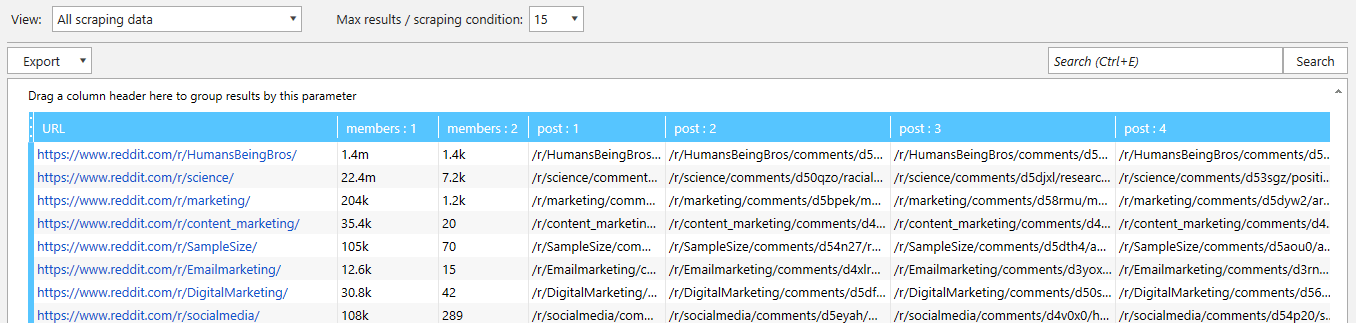

Reddit and Hacker News

Reddit is one of the biggest UGC platforms in the world, with a monthly audience of more than 1.4 billion people. With such a broad audience, Reddit has become popular among marketers and entrepreneurs. By scraping Reddit data, you can collect and analyze relevant niche subreddits and export popular posts.

Hacker News is a news aggregator collecting IT and business news. Scraping such websites allows monitoring viral content in certain niches.

57. Number of Comments and Upvotes

Since websites of this kind generate a huge amount of content every day, it’s important to understand what resonates with your target audience best. Scraping of metrics like comments and upvotes allows finding the most popular content and measuring its virality.

58. Follower Count

The number of subreddits on Reddit has already hit 1 million. Some of them are dead, but many are active and attract thousands of users each day. If you want to analyze the exposure of relevant subreddits, you can automate this process with web scraping.

59. List of Posts

Want to collect the most popular publications on certain topics? Setting custom filtering, you can sort posts by their freshness and popularity and scrape links to them for further analysis.

60. List of Moderators and Their Activity

Each subreddit has at least one moderator. By scraping a list of mods and the dates of their last activity, you can find inactive mods. The next step will be to make a request to moderate these subreddits.

61. Active Users

Going to launch a new product and want to get feedback from the target audience? Active users of relevant subreddits are a perfect focus group. All you have to do is collect links to their accounts and offer freebies for testing your product.

62. Redditors’ Info From Snoopsnoo

Snoopsnoo is a service offering analytics of Reddit users and subreddits. After scraping a list of target users, generate links to their Snoopsnoo profiles (snoopsnoo.com/u/user-name). The service allows getting their possible hobbies, place of residence, marital status, etc.

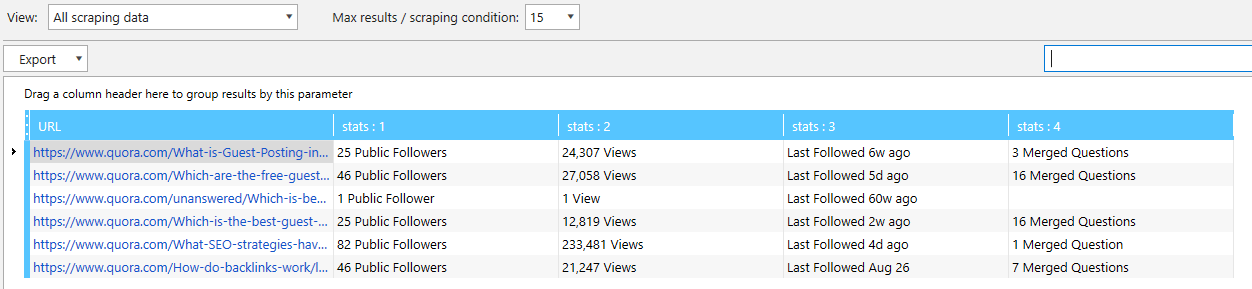

Quora

Quora is one of the most popular Q&A sites. More than 300 million users ask questions, share their knowledge, and use Quora for marketing purposes.

Quora scraping will automate getting a list of relevant questions, top responders, evaluation of questions, etc.

63. List of Questions

Scraping allows extracting a list of new or popular questions within a topic or for certain searches. This helps analyze the demand of your target audience and improve your content strategy.

64. Number of Views, Answers, and Followers

Having a list of relevant questions, you can analyze them for metrics like views, answers, and followers. For example, this way, you can find questions that have a lot of views and followers but few answers.
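After the metrics are scraped, picking out such questions is a simple filter. In this sketch the question records, the thresholds, and the `underserved_questions` helper are all hypothetical; adjust the cut-offs to your niche.

```python
def underserved_questions(questions, min_views=1000, min_followers=10, max_answers=3):
    """Return URLs of questions with high demand but few answers."""
    return [
        q["url"]
        for q in questions
        if q["views"] >= min_views
        and q["followers"] >= min_followers
        and q["answers"] <= max_answers
    ]


# Hypothetical scraped metrics.
questions = [
    {"url": "https://example.test/q/1", "views": 12000, "followers": 45, "answers": 2},
    {"url": "https://example.test/q/2", "views": 30000, "followers": 80, "answers": 57},
    {"url": "https://example.test/q/3", "views": 300, "followers": 2, "answers": 0},
]
print(underserved_questions(questions))
```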

65. Top Responders

Each category on Quora has a list of the most popular authors (those with the most answer views). Scraping helps automate collecting links to their profiles for further analysis and outreach.

66. Answer Wiki Presence

Answer wiki is a block with a quick answer or useful links. If you are going to promote your business on Quora, having links to your site in all relevant answer wikis is a must. Web scraping lets you check a list of questions for answer wiki opportunities.

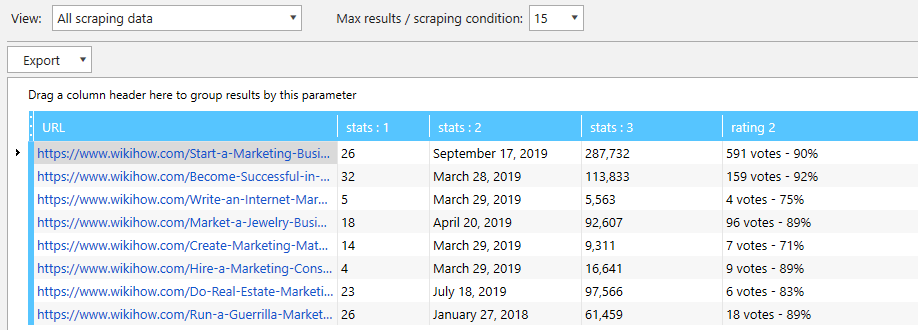

WikiHow

WikiHow is a wiki-type community consisting of more than 200,000 ‘How-to’ articles. Scraping helps automate the collection and evaluation of relevant articles and find questions that still don’t have a ‘How-to’ answer.

67. Target Articles and Questions

You can extract two types of questions using a custom search. The first includes already written articles for a specific search query. The second – questions that don’t have an instruction. These lists will help find relevant topics you can write content on or articles you can update with links to your site.

68. Article Stats

Each article has an info panel containing statistics on views, ratings, and authors. Later, you can use this data to find the most popular topics.

69. Last Update Date

One of the reasons to use wikiHow is link building. The best way to put your link in a relevant article is to find outdated articles and update them with useful content that links back to your site.

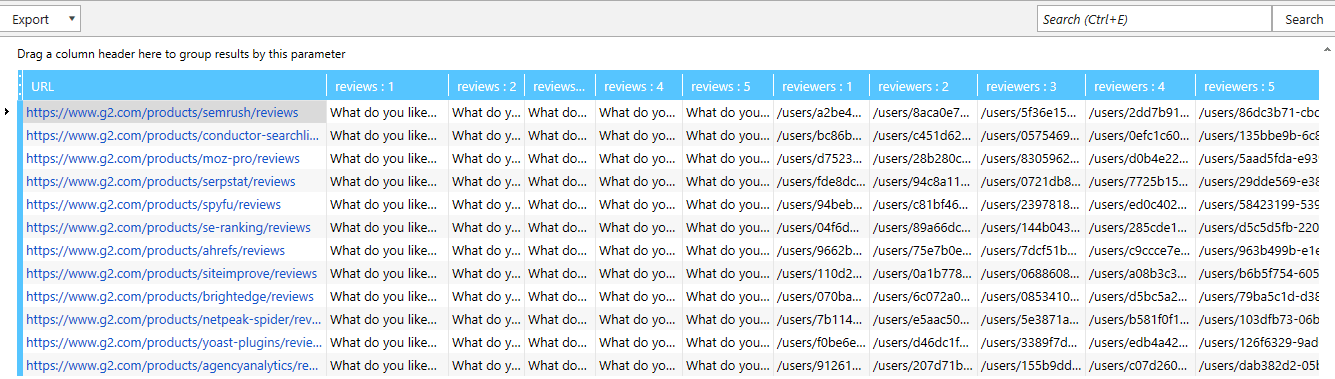

G2 Crowd

G2 Crowd is one of the most popular software review platforms. At the time of this writing, the site included more than 840,000 reviews. Web scraping allows getting a list of relevant programs, their reviews, and profiles of users that left a review.

70. List of Relevant Products

G2 Crowd sorts products by various categories and subcategories. Choose the relevant category and apply sorting to get a list of products you want to scrape for further analysis.

71. Ratings and Review Count

You can scrape the number of reviews and the average rating of competitors’ products. This data helps analyze their activity on G2 Crowd and overall popularity.

72. Product Reviews

Scraping G2 Crowd reviews allows extracting reviews of your own or competitors’ products. The data is vital for SWOT or sentiment analysis, and should definitely be used to tweak your marketing strategy. When reviews are scraped, you can divide them into the pros and cons parts using a simple Excel function.

73. List of Reviewers

As you scrape product reviews, you can also extract links to reviewers’ profiles. For example, you can reach out to them and offer to try your product.

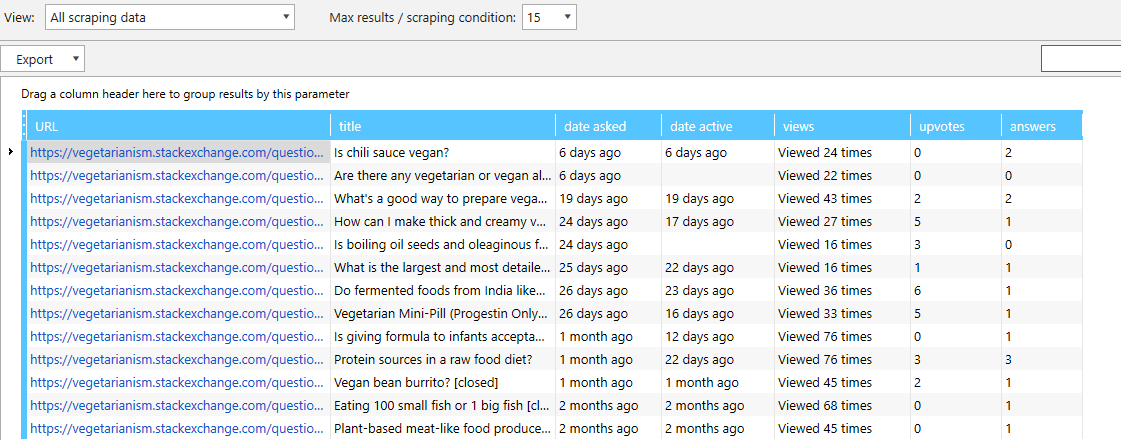

Stack Exchange

Stack Exchange is a question-and-answer network consisting of 173 niche categories, one of which is the most popular resource for developers – Stack Overflow. Scraping helps find hot topics and relevant questions to increase your rep.

74. Relevant Questions

Once you set an appropriate sorting, you can collect links to relevant questions. Then you can check their parameters and choose the ones you need to answer.

75. Question Parameters

When you have a list of questions, nothing stops you from scraping data on each question. For instance, you can extend the list with the view and answer count, creation and last activity dates. This way, you can choose the best questions to answer.

These 75 web scraping examples only represent the most widespread use cases when scraping can boost the efficiency of digital marketers, sales specialists, webmasters, and everyone who needs to find and collect data for further analysis.

Have you ever used scraping? Share your experience in the comment section below!