8 Tips on Internal Linking for Better SEO


Links have always been a cornerstone of SEO. Some site owners buy tons of backlinks chasing link equity and forget the main thing: quality. Others spare no effort on ‘PageRank Sculpting’. But the real gains come from combining these activities. In this post we’ll talk about the nuts and bolts of website optimization: internal linking and its must-haves.

Why Is Internal Linking Important for Your Website?

Internal links are links on your website that point to other pages within the same domain. They are mostly placed in navigation elements such as the menu, breadcrumbs, footer, header, and sidebar, or in the text (for example, in your posts). Here is why you should invest effort in internal linking.

- You can achieve a better user experience. If users can easily navigate your website, your conversion rate will probably increase. Try to give users the information they need in the most convenient way.

- Internal linking influences your position in the SERP. Keep in mind that user behavior is one of the ranking signals. Search engines track metrics such as pages viewed per session, time spent on the website, and even click-through rate. So a better user experience leads to better rankings, and the ultimate goal is boosting your organic traffic.

- Internal linking defines page hierarchy. It helps search engines assess the quality and convenience of the website and understand which pages should be promoted in the SERP.

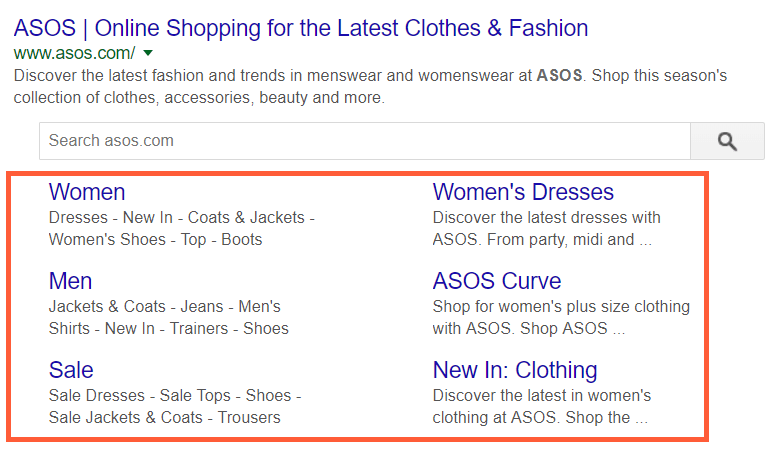

- Sitelinks. They make you more visible in search results and can boost your organic traffic. According to Google, if the structure of your website allows their algorithms to find good sitelinks and they are relevant to the user's query, you can get sitelinks.

We gathered the most common but sometimes not so obvious tips for making your website better. Let's go through them.

Read more → Enhance link juice distribution and organize your website’s inner linking with our free internal link checker

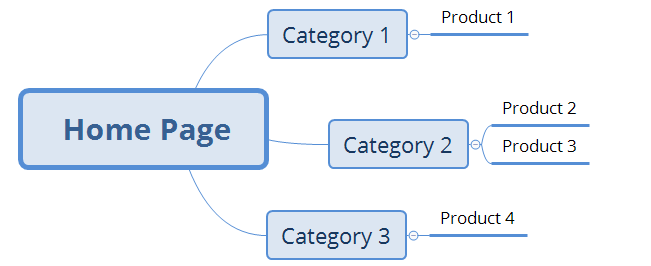

Tip #1. Create Logical Website Structure

A well-developed website structure takes high priority among other significant aspects. So I gathered some good practices :) First of all, think the website hierarchy through before building it. It should be clear, simple, structured, logical, and useful for users. Moreover, keep in mind that all important pages should be reachable from the home page.

For an online store with a large number of products, use rel=next and rel=prev to mark up a paginated series. It will improve your website’s usability. For instance, a ‘Show More’ button can slow down your page, while rel=next and rel=prev don’t.

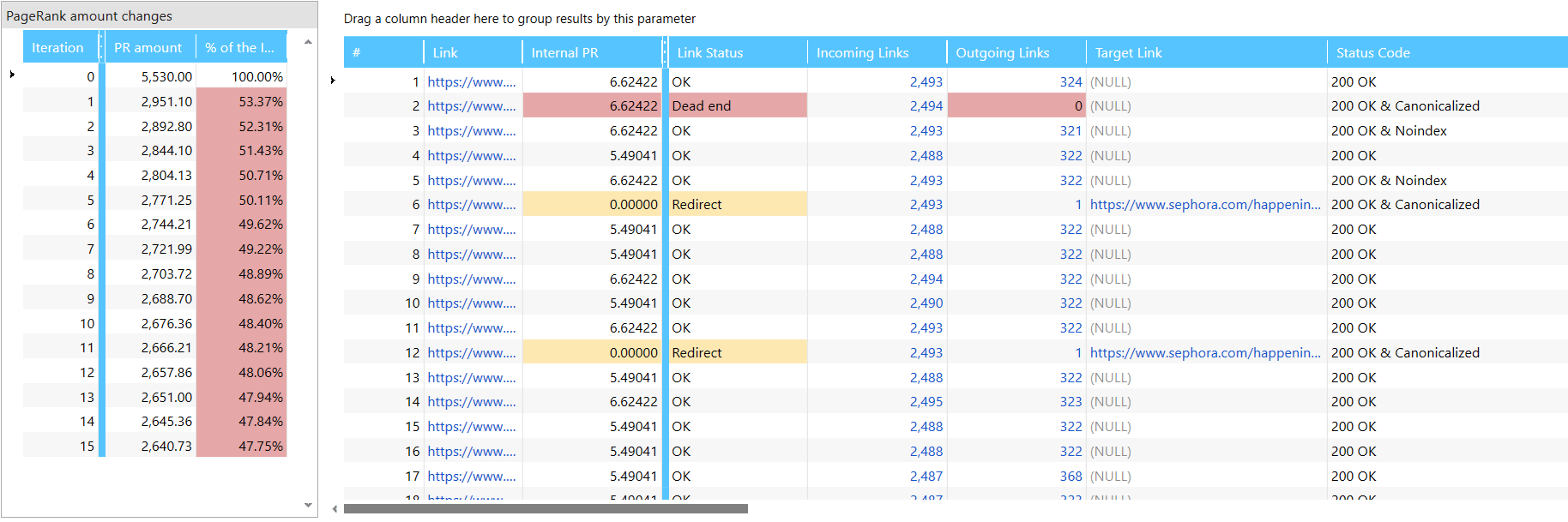
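To make the rel=next and rel=prev idea a bit more concrete, here is a minimal Python sketch that builds the two `<link>` tags for one page of a paginated category. The function name and the `?page=N` URL pattern are my own assumptions for illustration; your store may paginate differently.

```python
def pagination_link_tags(base_url, page, total_pages):
    """Return rel=prev / rel=next <link> tags for one page of a paginated series.

    Assumption: page N lives at "<base_url>?page=N" and page 1 at base_url itself.
    """
    tags = []
    if page > 1:
        # Page 2 points back to the clean category URL, not to "?page=1".
        prev_url = base_url if page == 2 else f"{base_url}?page={page - 1}"
        tags.append(f'<link rel="prev" href="{prev_url}">')
    if page < total_pages:
        tags.append(f'<link rel="next" href="{base_url}?page={page + 1}">')
    return tags


for tag in pagination_link_tags("https://www.example.com/name-of-category-1", page=2, total_pages=5):
    print(tag)
```

The first page gets only a rel=next tag and the last page only a rel=prev tag, so the series has a clear start and end.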

Another trick is to calculate your internal PageRank and check which important pages need more link equity. You can use Netpeak Spider for this; just check out the detailed guide to internal PageRank.
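If you are curious what an internal PageRank calculation looks like, here is a rough Python sketch of the classic iterative formula run on a tiny link graph. It is a simplified illustration, not how Netpeak Spider computes it; the damping factor of 0.85, the iteration count, and the sample pages are all assumptions.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Estimate PageRank for a site given as {page: [pages it links to]}."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Rank sitting on pages without outgoing links is spread evenly,
        # so the total always stays equal to 1.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for page, targets in links.items():
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical mini-site: every page links back to the home page.
site = {
    "/": ["/name-of-category-1", "/name-of-category-2"],
    "/name-of-category-1": ["/", "/name-of-category-1/name-of-product-1"],
    "/name-of-category-2": ["/"],
    "/name-of-category-1/name-of-product-1": ["/"],
}
for page, score in sorted(internal_pagerank(site).items(), key=lambda item: -item[1]):
    print(f"{score:.3f}  {page}")
```

Pages that sit low in this list but matter for your business are the ones that need more internal links pointing at them.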

Read more → What is pagination in SEO

Tip #2. Create Clear URL Structure According to the Hierarchy

This means that your URLs should reflect your website's structure. If you’ve prepared a logical website structure, this will be easy. For example, this is how you create clean URLs according to the website structure above:

www.example.com/name-of-category-1/name-of-product-1

www.example.com/name-of-category-2/name-of-product-2

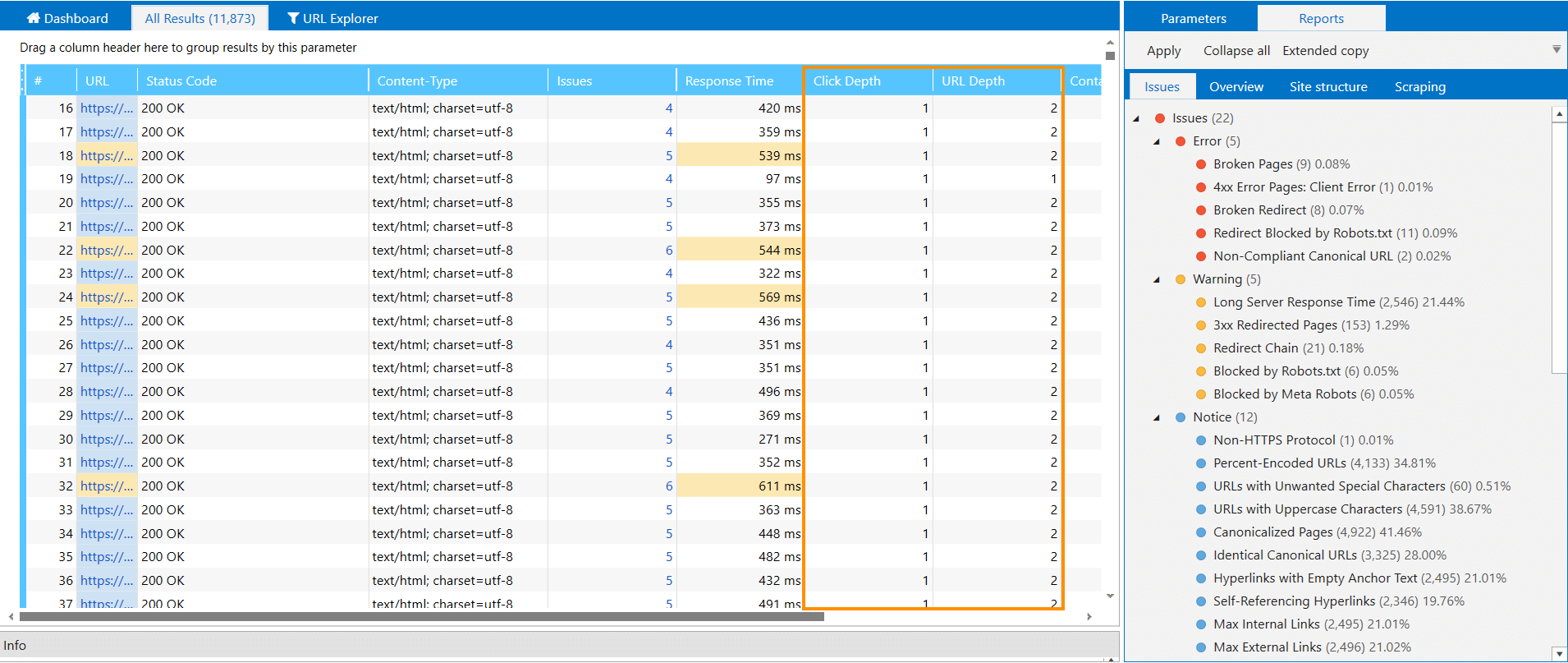
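The URL pattern above can be generated straight from category and product names. Here is a small Python sketch; `slugify` and `build_url` are hypothetical helper names of my own, and the slug rules (lowercase, hyphens instead of anything non-alphanumeric) are just one reasonable convention.

```python
import re


def slugify(name):
    """Lowercase a name and replace every run of non-alphanumerics with a hyphen."""
    slug = re.sub(r"[^a-z0-9]+", "-", name.lower())
    return slug.strip("-")


def build_url(domain, *path_parts):
    """Join a domain and a category/product hierarchy into one clean URL."""
    return "/".join([domain.rstrip("/")] + [slugify(part) for part in path_parts])


print(build_url("www.example.com", "Name of Category 1", "Name of Product 1"))
# prints: www.example.com/name-of-category-1/name-of-product-1
```

Because the URL is built from the hierarchy itself, a product can never end up with an address that contradicts its place in the structure.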

Tip #3. Keep a Shallow Website Structure

Each page should be no more than 2-3 clicks away from the home page, so reduce the number of clicks needed to reach important pages. First of all, it will improve user experience: the more redundant actions users have to make, the higher the chance that they will leave your website. Secondly, remember the crawl budget. If you have a very complex website with tens of thousands of pages, the search robot can’t crawl all of them at once. In such cases, breadcrumbs, internal search, or tag clouds will simplify both crawling and user navigation.

You can check click depth using Netpeak Spider. Just crawl your website and check the ‘Click Depth’ and 'URL Depth' columns in the main table.
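Under the hood, click depth is just the shortest path from the home page to each page. Here is a minimal Python sketch using breadth-first search; the link graph is a made-up example and the function names are my own, so treat it as an illustration rather than the way any particular crawler works.

```python
from collections import deque


def click_depths(links, home="/"):
    """Return {page: minimum number of clicks from the home page}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(depths, max_depth=3):
    """List pages that need more clicks than max_depth."""
    return sorted(page for page, depth in depths.items() if depth > max_depth)


# Hypothetical site where the reviews page is buried four clicks deep.
site = {
    "/": ["/catalog"],
    "/catalog": ["/catalog/category-1"],
    "/catalog/category-1": ["/catalog/category-1/product-1"],
    "/catalog/category-1/product-1": ["/catalog/category-1/product-1/reviews"],
}
print(too_deep(click_depths(site)))
```

Pages that never show up in the result of `click_depths` are not reachable from the home page at all.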

You can check your website for more than 80 parameters and work with other basic features even in the free version of the Netpeak Spider crawler, which has no time limit and no cap on the number of analyzed URLs.

To get access to free Netpeak Spider, you just need to sign up, download, and launch the program 😉


P.S. Right after signup, you'll also have the opportunity to try all paid functionality and then compare all our plans and pick the most suitable for you.

Tip #4. Get Rid of Orphan Pages and Dead Ends

These types of pages can become a real disaster for your website. Don’t overlook them: check your website regularly to spot and fix them.

Orphan pages have no incoming links. They are effectively useless because users will not be able to find them by navigating your website.

Dead ends have no outgoing links and therefore don’t pass link equity. As a result, link equity accumulates on one page, which disrupts its distribution across your website. Dead ends can include pages blocked by robots.txt, non-HTML 2xx pages that cannot have outgoing links, pages with endless redirects, and so on. You can read more about them in our blog post about PageRank.

To find them using Netpeak Spider, start crawling. When the crawl finishes, click ‘Tools’ and choose ‘Internal PageRank Calculation’. Dead ends and orphan pages are clearly marked in the results table.
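The two definitions above are easy to express in code. Here is a small Python sketch that finds both kinds of pages in a link graph. It assumes you already have the full list of pages (for example, from a sitemap), because a crawler that only follows links cannot discover orphan pages by itself; the sample pages and the function name are hypothetical.

```python
def find_orphans_and_dead_ends(links, home="/"):
    """links maps every known page to the list of pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    # A link from a page to itself does not count as an incoming link.
    linked_to = {t for page, targets in links.items() for t in targets if t != page}
    orphans = sorted(p for p in pages if p not in linked_to and p != home)
    dead_ends = sorted(p for p in pages if not links.get(p))
    return orphans, dead_ends


site = {
    "/": ["/about", "/price-list.pdf"],
    "/about": ["/"],
    "/old-landing": ["/"],   # nothing links here
    "/price-list.pdf": [],   # non-HTML file, no outgoing links
}
orphans, dead_ends = find_orphans_and_dead_ends(site)
print("Orphan pages:", orphans)
print("Dead ends:", dead_ends)
```

The home page is excluded from the orphan list on purpose, since it is the entry point and does not need an internal link to be found.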

Tip #5. Use Keywords to Improve Internal Linking

You should also use keywords for more effective internal linking and ranking. Let’s say you have an online store. You can create breadcrumbs with high-volume keywords. In other words, try using keywords as anchors on your website: it will improve your topical relevance. Remember common sense and logic, though.

Also, SEOs should remember the Google updates that penalize websites for keyword stuffing and spammy links. So use natural, descriptive anchors that help users navigate, and everything should be fine (Matt Cutts said so too).

One more piece of advice: it’s better to use simple dofollow links for internal linking. Yes, the folks at Google have said they can now parse links in JavaScript, CSS, etc., but let’s make it easier for crawlers ;)

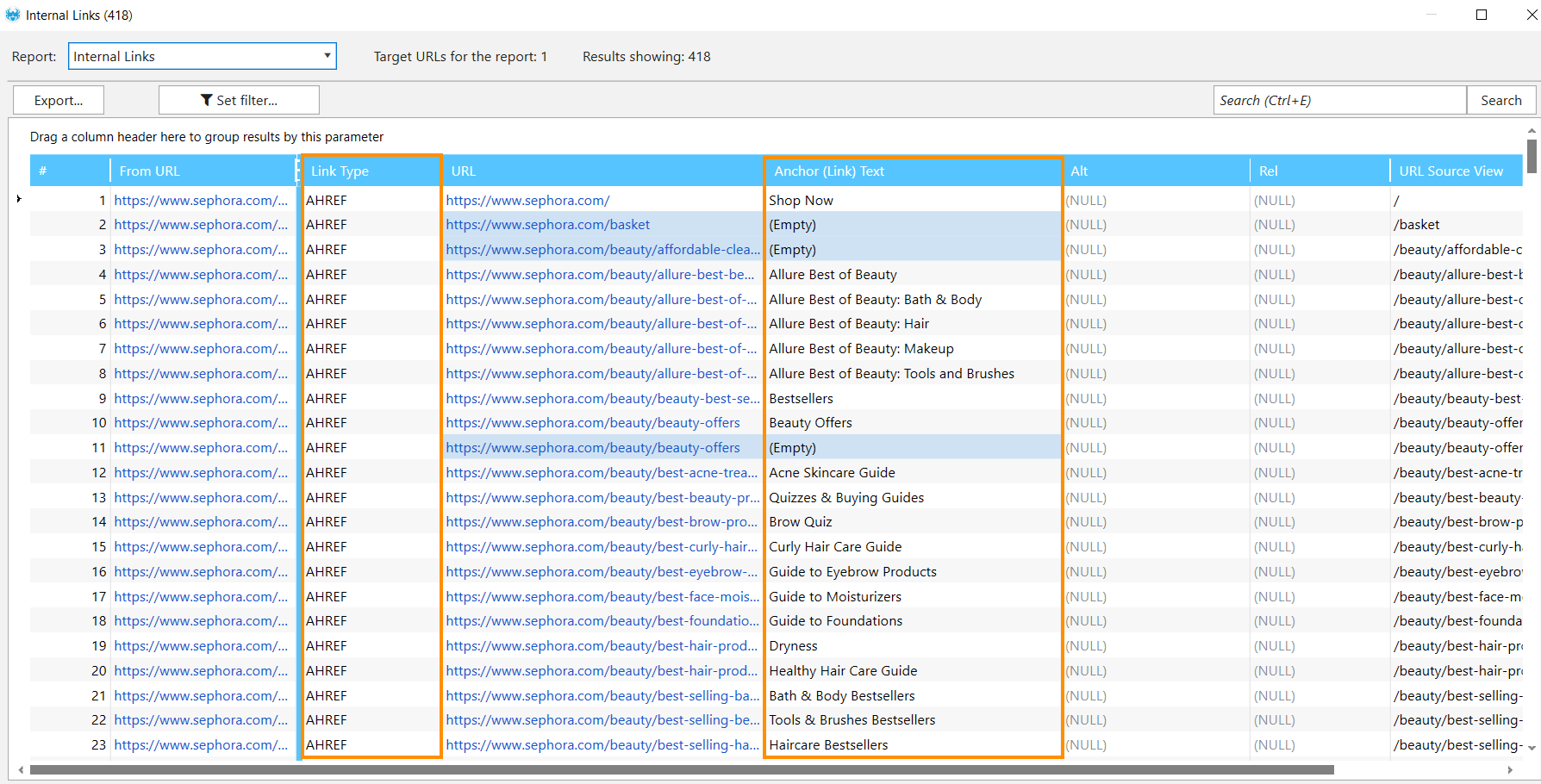

You can check anchor texts and link types by crawling your website with Netpeak Spider. Start crawling and, once the crawl is complete, choose ‘Internal Links’ in the context menu. A window with the necessary data will appear.
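For a quick spot check of a single page, you can also pull the anchors out yourself. Here is a minimal Python sketch built on the standard `html.parser` module; the class name, the function name, and the list of "generic" anchors are my own assumptions, so adjust them to your website.

```python
from html.parser import HTMLParser


class AnchorCollector(HTMLParser):
    """Collects (href, anchor text) pairs for every <a href=...> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace so "click   here" and "click here" match.
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


# Assumed list of non-descriptive anchors; extend it as needed.
GENERIC_ANCHORS = {"click here", "here", "read more", "link"}


def generic_anchor_links(html):
    """Return links whose anchor text says nothing about the target page."""
    parser = AnchorCollector()
    parser.feed(html)
    parser.close()
    return [(href, text) for href, text in parser.links if text.lower() in GENERIC_ANCHORS]


page = '<p><a href="/laptops">Gaming laptops</a> and <a href="/sale">click here</a></p>'
print(generic_anchor_links(page))
```

Links flagged this way are good candidates for a more descriptive, keyword-based anchor.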

Tip #6. Use Target Blank for Non-Navigational Links

Do you know the feeling when you find an interesting article, spot a link to another interesting post, click it, and end up on another web page? It’s highly unlikely that you will return to the previous post, right? So don’t divert users’ attention from your awesome content to someone else’s. How? Use the target="_blank" attribute to open a link in a new tab. It will keep users on your website longer.

But make sure to apply this tip only to non-navigational and, typically, outgoing links. Don’t use ‘target blank’ for links leading users through the conversion funnel!
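To see which outgoing links on a page still open in the same tab, a short script is enough. Here is a Python sketch using the standard `html.parser` and `urllib.parse` modules. The class name and the host `www.example.com` are assumptions, and "external" here simply means "the link has a host that differs from yours".

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ExternalLinkAudit(HTMLParser):
    """Finds external links that do not have target="_blank"."""

    def __init__(self, site_host):
        super().__init__()
        self.site_host = site_host
        self.same_tab_external = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        host = urlparse(href).netloc
        # Relative links such as "/cart" have no host and count as internal.
        is_external = bool(host) and host != self.site_host
        if is_external and attributes.get("target") != "_blank":
            self.same_tab_external.append(href)


page = (
    '<a href="/cart">Cart</a>'
    '<a href="https://en.wikipedia.org/wiki/Giant_panda">Giant panda</a>'
    '<a href="https://en.wikipedia.org/wiki/Red_panda" target="_blank">Red panda</a>'
)
audit = ExternalLinkAudit("www.example.com")
audit.feed(page)
print(audit.same_tab_external)
```

Internal links such as the cart are deliberately ignored, since links in the conversion funnel should keep opening in the same tab.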

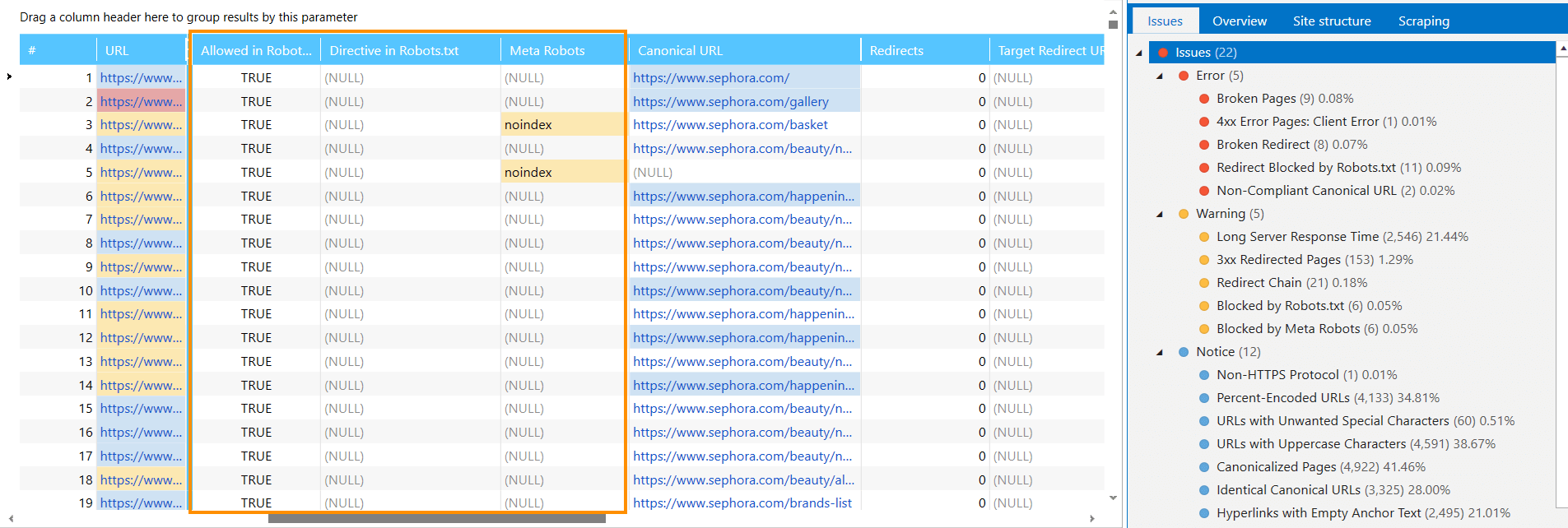

Tip #7. Check Links Blocked by Robots.txt, Meta Robots or X-Robots-Tag

Using robots.txt, the Meta Robots tag, and the X-Robots-Tag HTTP header, you can disallow search robots from crawling or indexing a web page. As a result, search robots may not follow the links on this page. So it’s useful to check which pages are blocked by these instructions.

Learn more about directives:

1. In robots.txt: 'What Is Robots.txt, and How to Create It'.

2. In X-Robots-Tag: 'X-Robots-Tag in SEO Optimization'.
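For the robots.txt part, Python’s standard library already has a parser, so a quick check takes only a few lines. In this sketch the robots.txt content and the URLs are invented for illustration, and the helper name `blocked_urls` is my own. It does not look at Meta Robots or X-Robots-Tag, which live in the page itself and in the HTTP response.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that robots.txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that hides the cart from all robots.
robots_txt = """\
User-agent: *
Disallow: /cart/
"""
urls = [
    "https://www.example.com/cart/checkout",
    "https://www.example.com/name-of-category-1/name-of-product-1",
]
print(blocked_urls(robots_txt, urls))
```

Any internal link pointing to a URL in the returned list leads crawlers to a page they are not allowed to fetch.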

Tip #8. Do Not Use Nofollow Links

A long time ago, SEOs thought that ‘PageRank Sculpting’ could improve their rankings. So they chose which links should be dofollow and which nofollow to control the flow of authority from page to page. But nowadays it’s a completely outdated technique. Why? Because search engines divide link equity among all links on a page, including the nofollow ones. And while nofollow links are allotted a share of link equity, they do not pass it on to the pages they point to, so that share is simply lost. So when it comes to internal links, it's better to remove a link (when it’s reasonable to do so) than to make it nofollow.

But if you don’t want to pass your link authority to external resources, you can use nofollow links for them. For example, if you refer to https://en.wikipedia.org/wiki/Giant_panda in your post and don’t want to pass your link authority to that page, you can add the rel="nofollow" attribute to the link: <a href="https://en.wikipedia.org/wiki/Giant_panda" rel="nofollow">Giant panda</a>

You can crawl your website with Netpeak Spider to check nofollow links on your website. Go to ‘Crawling Settings’, click on the ‘Advanced’ tab and tick ‘Nofollow link attributes’. Then start crawling and, when the crawl finishes, check the ‘Link Type’ column as I mentioned in tip #5.
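If you only want to check one page by hand, here is a small Python sketch that lists internal links carrying rel="nofollow". The class name and the host `www.example.com` are assumptions; links without a host, or with your own host, are treated as internal.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class InternalNofollowAudit(HTMLParser):
    """Finds internal links that carry rel="nofollow"."""

    def __init__(self, site_host):
        super().__init__()
        self.site_host = site_host
        self.internal_nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href") or ""
        # rel may hold several space-separated values, e.g. "nofollow noopener".
        rel_values = (attributes.get("rel") or "").lower().split()
        parsed = urlparse(href)
        is_web_link = parsed.scheme in ("", "http", "https")
        is_internal = parsed.netloc in ("", self.site_host)
        if is_web_link and is_internal and "nofollow" in rel_values:
            self.internal_nofollow.append(href)


page = (
    '<a href="/name-of-category-1" rel="nofollow">Category 1</a>'
    '<a href="/name-of-category-2">Category 2</a>'
    '<a href="https://en.wikipedia.org/wiki/Giant_panda" rel="nofollow">Giant panda</a>'
)
audit = InternalNofollowAudit("www.example.com")
audit.feed(page)
print(audit.internal_nofollow)
```

The nofollow link to Wikipedia is left alone, because outgoing links are the one place where the attribute still makes sense.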

In a Nutshell

Internal linking is one of the major aspects of quality SEO, as it influences your position in search results and even your conversion rate. Keep in mind that you can make your internal linking more effective using these tips:

- make logical website structure

- create clear URL structure

- keep click depth low

- fix orphan pages and dead ends

- use keywords in internal linking

- use target="_blank" for non-conversional links

- check links blocked in Robots.txt, Meta Robots and X-Robots-Tag

- avoid nofollow on internal links and use rel=nofollow only for outgoing links

Do you know any more tips on internal linking? Share your experience in the comments below and ask questions; we’ll be glad to answer :)