On-Page SEO: A Start-to-Finish Guide for SEO Newbies

Over the past few years, Google has released several updates that affected the way webmasters tailor their websites.

Google’s ultimate goal is to bring users to websites that provide high-quality content and a good user experience. That’s why you can’t ignore on-page SEO if you want to be noticed by both Google and your target audience.

In this article, you’ll find out what on-page SEO is, which important ranking factors it covers, and how to conduct a basic on-page SEO audit.

Let’s dive right into our on-page SEO guide!

What is on-page SEO exactly?

Let’s start with the most critical question: what is on-page SEO?

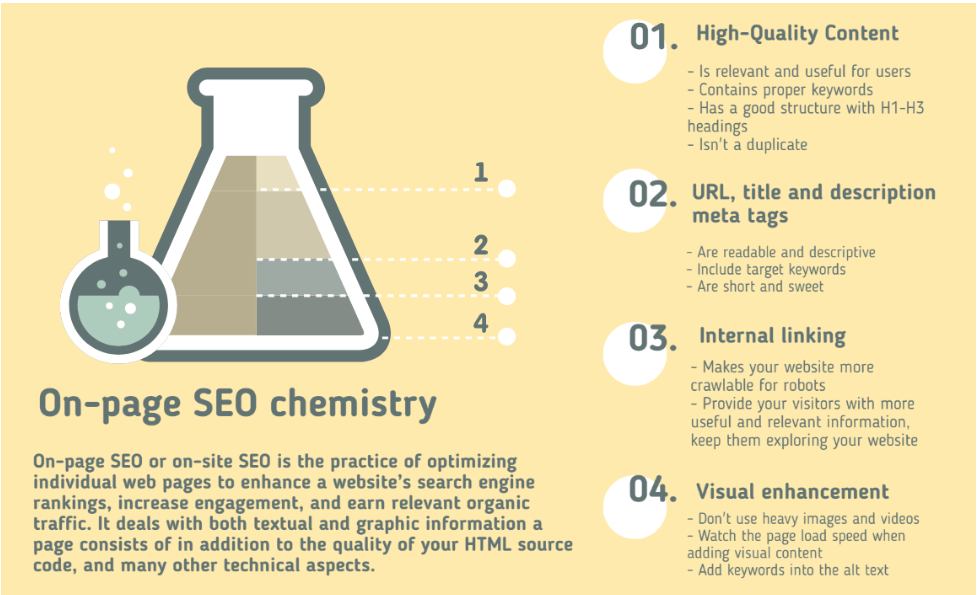

On-page SEO, also called on-page optimization or on-site SEO, is the process of adjusting web pages and their content to make them more appealing to search engines and to the people who visit your site. This helps your pages rank higher on Google and brings in more visitors.

Common on-page SEO tasks include adjusting titles, internal links, and URLs to match what people are searching for.

On-page SEO vs. off-page SEO

You already know what on-page SEO is, but how does it differ from off-page SEO? The answer is quite simple.

Off-page SEO focuses on backlinks (links from other websites to yours) and other external signals that happen beyond your website, such as how often your link is shared on social networks, how often your site is mentioned, and any other external marketing activity.

On-page SEO covers the optimization of both the content and the HTML source code of a page.

Typical on-page SEO tasks include optimizing:

- HTML meta tags – title and description meta tags

- content – its structure, headers, target keywords

- visuals – their size and alt texts

- internal linking

- URLs

- anchor texts

- secure HTTPS protocol

- page load speed

With on-page optimization, everything you do stays within your own website, so you control it fully. Off-page SEO depends on collaboration with external partners, such as reaching out to a partner for a link exchange to bring traffic to your web pages.

Why does on-page SEO matter?

Off-page and on-page optimization complement each other in improving your website’s search engine rankings, but on-page SEO is the foundation for all your SEO efforts.

Google’s priority is to show users pages that are friendly, relevant, and helpful, so its search robots analyze the content of web pages and assess whether they contain information relevant to what searchers are looking for (this is called search intent).

At this stage, pages that have gone through on-page SEO are more likely to stand out, because the search robot can get the gist of what a page is about and then decide whether it matches the searcher’s query. That’s what makes on-page SEO essential for every website.

Search engines estimate the relevance of a web page to the searcher’s query based on various factors, and keywords are usually among the most important. If your site has keyword-rich content (don’t confuse it with keyword-stuffed content, which is a serious blunder), it has a better chance of appearing at the top of the search engine results pages (SERPs).

Simply put, optimized web pages can earn you higher rankings and, over time, more authority and trust for your domain. This, in turn, helps you build reliable and mutually beneficial relationships with other domain owners and work on off-page promotion. After all, nobody wants to link to a low-authority website with dull, poorly written pages, do they?

On-page SEO ranking factors, and how to shape them up

High-quality content

Google has been advocating for quality content for quite a while, introducing many core updates that changed the game on the Internet significantly. The core update released in May 2020 suggests stepping up efforts to create even more exceptional content.

It probably sounds like the same old story, but let’s delve into what quality content actually is. It’s content that is easy to read, unique, helpful, and supported by media such as audio, video, and presentations that help cover the subject more fully.

Relevance to search intent matters just as much. Before creating any content, try to answer the question: ‘Why should people visit my website?’ Search engines take into account behavioral factors (how people behave when they land on your web page), such as dwell time, time on page, click depth, and so on. So if you write compelling, high-quality content, use the words your audience uses, structure the text clearly, and cover the topic in depth, you’ve succeeded.

Ranking factors that affect the quality of your content are:

- Keywords. It’s critical that you target the right keywords for your content and put them in the right places on your pages. Search engines estimate how relevant your web pages are to a searcher’s query based on these keywords. You can find the right ones by putting yourself in your visitors’ shoes. What will they type into a search engine when looking for a product or a solution? What intent do they have? Your content should be concise and provide the answers they are looking for.

Remember to always include keywords in the title, description, and H1 heading. Search engines rely on the keywords in the title tag and meta description to identify what a page is about, so it’s essential to mention keywords early in the title.

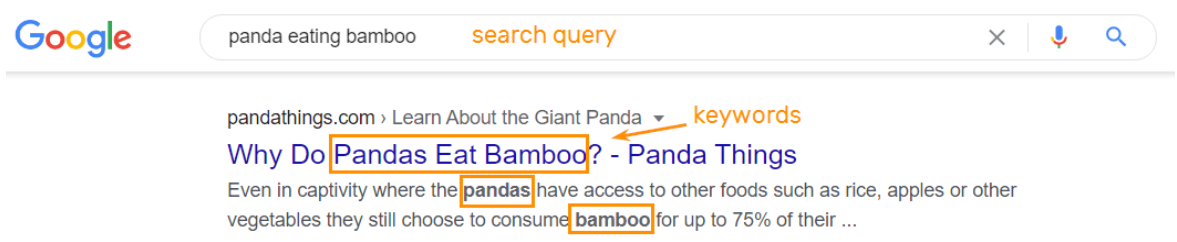

Let me illustrate. If you want to see pictures of pandas eating bamboo, or read an article explaining why pandas eat bamboo or how these bears survive on a bamboo-only diet, you google something similar to my query and see that most titles and descriptions contain the relevant keywords.

You can learn more about keyword research from keyword and on-page SEO guidelines.

- Language. Use natural language and enrich your content with synonyms and close variants, which help Google identify a page’s relevance. Shakespearean sophistication is a good thing only if you know that your audience shares your taste.

- Content length. Long content is of no use if it doesn’t provide value to readers. Make sure it’s comprehensive and useful. As a rough guideline, stay within about 2,500 words.

Target keyword placement

To succeed with your on-page SEO strategy, you need to choose the right target keywords and put them in the right places in your content. Google checks your content to understand what your page is about, and readers usually do the same.

Make sure to include your target keywords in these important spots:

- The main heading (H1)

- The first paragraph

- Subheadings (like H2s, H3s, etc.)

Doing this helps Google understand your page's content and helps users quickly determine if it matches what they're looking for.

Title tags

Another essential element of on-page SEO is the title tag. The title tag is placed in the <head> section of each page’s HTML code and looks like this:

<head><title>What Does CTR Mean in SEO? – Netpeak Software Blog</title></head>

You can view the HTML code of a page by pressing the ‘Ctrl+U’ shortcut in most desktop browsers.

The title should include keywords. Keywords make it easier for Google to classify the topic of the page, determine its content, and know when to serve it in search results. Make the title unique and descriptive, but keep in mind that Google can still adjust it when displaying it in search results.

So to make your title tag effective:

- Use keywords.

- Watch the length – don’t make it too long since Google truncates long titles on the SERP.

- Use brand words – they increase brand awareness and add a touch of authenticity.

- Avoid ‘clutter’ words that add length without adding meaning.

- Use brackets to give a brief recap of what’s inside the article.
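
The checklist above can be turned into a quick automated check. The Python sketch below is only an illustration: `check_title` is a hypothetical helper, not part of any SEO tool, and the 60-character limit is an assumed rule of thumb, since Google truncates titles by display width rather than by an exact character count.

```python
# Hypothetical helper for illustration; the limit below is an assumption.
MAX_TITLE_LENGTH = 60  # rough rule of thumb, not an official Google value


def check_title(title: str, keyword: str, brand: str) -> list[str]:
    """Return a list of warnings for a page title (empty list = no warnings)."""
    warnings = []
    lowered = title.lower()
    if keyword.lower() not in lowered:
        warnings.append("keyword missing")
    if len(title) > MAX_TITLE_LENGTH:
        warnings.append("title may be truncated")
    if brand.lower() not in lowered:
        warnings.append("brand missing")
    return warnings


title = "What Does CTR Mean in SEO? – Netpeak Software Blog"
print(check_title(title, keyword="CTR", brand="Netpeak"))  # []
```

An empty list means the title passed all three checks. Treat the warnings as prompts for a manual review, not as hard rules.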

Meta descriptions

Google also looks at the meta description. Like the title tag, the meta description is an HTML element located in the <head> section. Google may use it, though not always, as the snippet (the short paragraph under the title on the SERPs). It should contain your keywords or keyword phrases. Keywords that match the query are usually highlighted in bold.

Besides, the title tag and meta description are the first things searchers see about your page. They shape the first impression and influence the decision to click on the snippet. To figure out how to work with meta description tags, check out our detailed guide.

Headings and subheadings

H1 tags and other headings play a crucial role in how users navigate your page and how Google interprets its structure.

Properly optimized headings make it easier for users to skim your content and locate specific details. They also help Google understand your page's organization and improve your chances of ranking higher for relevant searches.

Including keywords and their variations in headings gives Google additional context about your page’s content. Make sure to use H1 for your main page title or headline, and use H2s for subtopics. If you need to dive deeper into details, use H3s, H4s, and so on.
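
To see how a page’s heading structure looks to a machine, you can extract its outline. The sketch below uses only Python’s standard `html.parser` module. `HeadingOutline` and `outline_problems` are hypothetical names, the sample HTML is made up, and the "exactly one H1, no skipped levels" checks are assumed conventions based on the advice above, not Google requirements.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collects (level, text) pairs for every h1-h6 element in a page."""

    def __init__(self):
        super().__init__()
        self.headings = []   # finished headings as (level, text)
        self._level = None   # level of the heading currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None


def outline_problems(headings):
    """Flag a missing or repeated H1 and jumps such as H2 followed by H4."""
    problems = []
    h1_count = sum(1 for level, _ in headings if level == 1)
    if h1_count != 1:
        problems.append(f"expected one H1, found {h1_count}")
    previous = 0
    for level, text in headings:
        if previous and level > previous + 1:
            problems.append(f"'{text}' skips from H{previous} to H{level}")
        previous = level
    return problems


sample = """
<h1>On-Page SEO Guide</h1>
<h2>Title tags</h2>
<h4>Length</h4>
"""
parser = HeadingOutline()
parser.feed(sample)
print(parser.headings)
print(outline_problems(parser.headings))  # ["'Length' skips from H2 to H4"]
```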

Optimized URLs

A URL (Uniform Resource Locator) is the address of a page on your website. Visitors see it, so they can rely on the URL to understand what your page is about. The URL also gives search engines informative signals about a page’s content.

To optimize URLs, follow the tips below:

- Use human-readable and descriptive URLs. The shorter and more descriptive a URL is, the easier it is for both visitors and search engines to grasp your page’s content, which can help the page rank higher.

A good URL gives you an overall summary of what the page is about, while a convoluted one tells you nothing.

- Include target keywords in URLs. Add at least one keyword to the URL, but refrain from keyword stuffing.

- Use lowercase URLs. The path part of a URL can be case sensitive, depending on the server. Unless the server is configured to redirect uppercase URLs to lowercase ones (for example, with rules in a .htaccess file), a user who types the URL in a different case is likely to hit a 404 page.

- Don’t use underscores to separate words in the URL. Use hyphens instead.

- Add mobile-friendly URLs to your sitemap. This helps your mobile-friendly pages rank higher in mobile search results.
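
Several of these tips (lowercase, hyphens instead of underscores, readable words) can be applied automatically when a URL is generated from a page title. Here is a minimal Python sketch; `slugify` is a hypothetical helper written for this example, and real content management systems usually ship their own version.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    # Strip accents so that "café" becomes "cafe".
    ascii_text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Replace every run of characters that are not letters or digits with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


print(slugify("What Does CTR Mean in SEO?"))  # what-does-ctr-mean-in-seo
print(slugify("On_Page SEO: a Guide"))        # on-page-seo-a-guide
```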

To discover more information about the URL structure and its details, check out this article.

Internal links

Internal links are links that point to other pages within your own website. Internal linking makes your website easier for search robots to crawl. When robots crawl a page and find a link to another page on your website, they follow that link, which also passes link equity (ranking weight) to the linked page. This linking structure creates a web of pages that is crawlable and accessible.

Remember to cross-link your pages. If many links lead to a page but that page doesn’t link to any others, it becomes a dead end that stops robots from crawling further through the website.
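
If you already have a map of which pages link to which (for example, exported from a crawler), dead ends are easy to spot. The Python sketch below assumes such a map is available as a dictionary. The `find_dead_ends` name and the sample `site` data are made up for illustration.

```python
def find_dead_ends(links: dict[str, set[str]]) -> set[str]:
    """Return pages that receive internal links but link to no other page."""
    linked_to = set()
    for source, targets in links.items():
        linked_to.update(targets - {source})  # ignore links from a page to itself
    return {page for page in linked_to if not (links.get(page, set()) - {page})}


# Hypothetical site structure: page -> pages it links to.
site = {
    "/": {"/blog", "/pricing"},
    "/blog": {"/", "/blog/on-page-seo"},
    "/pricing": {"/"},
    "/blog/on-page-seo": set(),  # no outgoing internal links
}
print(find_dead_ends(site))  # {'/blog/on-page-seo'}
```

Adding a link from the dead-end page back to a hub page such as "/blog" would clear it from the result.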

As for human visitors, internal links give them plenty of additional information and keep them exploring your website. This increases the number of page views and reduces the bounce rate. You can check out this article to discover tips on how to use internal linking.

External links

External links are links on your website that lead to other websites. They're helpful because they improve user experience and build trust with your audience.

Google recommends adding links to reliable external sources as it adds value to your content and enhances user experience.

Here are some tips to follow:

- Only link to trustworthy sites related to your topic.

- Use descriptive and natural anchor text.

- Avoid adding too many external links or placing them in a way that seems spammy.

Visual content

Visual elements also contribute to a page’s rankings, since they break up plain text with eye-catching details and explain things that words alone can’t. But heavy images and videos are the main enemies of page load speed. With a bit of content and page optimization, you can keep these problems at bay.

- Compress heavy images with tools like TinyPNG.

- Use widely supported formats: PNG or JPEG.

- Add alt text (alternative text) to images. Alt text describes the content of an image, which helps readers with visual impairments understand it. When something goes wrong and an image doesn’t display correctly, the alt text can also ‘tell’ what the image is about. Search robots, which aren’t good at understanding images either, read the alt text to get a clue about what is depicted.

- Optimize your videos: craft an engaging title, description, and thumbnail for your video.

- Don’t host videos on your own website. Video hosted directly on your site may slow down page load speed and do more harm than good. Instead, embed a YouTube player on your website and show videos from your YouTube channel.
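
Missing alt text is one of the easier items on this list to check automatically. The sketch below uses Python’s standard `html.parser` module. `MissingAltFinder` and the sample markup are hypothetical. It also reports images with an empty alt attribute, which is acceptable for purely decorative images, so treat the result as a list to review, not a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Records the src of every <img> with a missing or empty alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # alt is None when the attribute is absent or has no value.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src", "(no src)"))


page = (
    '<p><img src="panda.png" alt="Panda eating bamboo">'
    '<img src="chart.png"><img src="divider.png" alt=""></p>'
)
finder = MissingAltFinder()
finder.feed(page)
print(finder.missing)  # ['chart.png', 'divider.png']
```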

This article will help you learn more about image SEO.

How to do an on-page SEO audit with Netpeak Spider

The next step of our on-page SEO guide is the audit itself. If you’ve made it this far and feel you have even more questions than at the beginning, we’ll try to answer at least one: ‘How do I find all these things on my website?’

There are many SEO tools on the market that you can use for basic on-page SEO analysis and audits. There are also more advanced tools that cover a broader range of SEO purposes. Netpeak Spider fits both needs.

You can carry out a comprehensive on-page SEO analysis even in the free version of the Netpeak Spider crawler, which has no limit on the term of use or the number of analyzed URLs. Other basic features are also available in the Freemium version of the program.

To get access to the free version of Netpeak Spider, you just need to sign up, download, and launch the program. Right after signup, you’ll also have the opportunity to try all paid functionality, compare our plans, and pick the most suitable one for you.

Here’s how to conduct a basic on-page audit:

- Launch the program.

- Enter the website address into the ‘Initial URL’ field. We’ll inspect the www.sephora.com website.

- Go to the sidebar and select the parameters that are enough for a basic audit:

  - General → ‘Status Code’, ‘Content Type’, ‘Issues’, ‘Response Time’, ‘Content Download Time’.

  - Crawling and Indexing → ‘Compliance’, ‘Allowed in Robots.txt’, ‘Canonical URL’.

  - Head Tags → ‘Title’, ‘Title Length’, ‘Description’, ‘Description Length’.

  - Content → ‘Images’, ‘Content-Length’.

  - H1-H2 Headings → ‘H1 Content’, ‘H1 Length’, ‘H1 headings’.

- Start crawling by hitting the ‘Start’ button.

- When the crawling is completed, you’ll see the results in the main table, with an issues overview in the sidebar.

- Go to the ‘Export’ tab in the upper right corner to export the table results or an SEO audit in PDF format (it can be reached from the main window).

Check URLs for SEO parameters with Netpeak Checker

Another thing that can help boost your on-page SEO strategy is a URL check. You can do it quickly and efficiently using the Netpeak Checker tool.

Netpeak Checker has various features required to make URL checks as smooth and quick as possible. These features include seamless integration with the top 25 SEO services, a bulk link check option, proxy support, and CAPTCHA auto-solving.

Try Netpeak Checker to see that the process of SEO analysis can be very simple, quick, and yet efficient.

Final thoughts

Now you know what on-page SEO is, how to do it, and why it plays a pivotal role in improving your website’s rankings. If you don’t take it seriously, all your off-page efforts might not pay off as you expected. We hope that with this practical on-page SEO guide, you can put on-page SEO to work and make your site rank higher.

Apart from content optimization, on-page SEO includes many technical aspects, which may require the help of a webmaster or developer.

An on-page audit can be easily conducted with the Netpeak Software SEO tool. It takes a few minutes to analyze the whole website. In the end, you receive a comprehensive report on the detected issues.