How to Detect and Optimize Old Content

It's easy to jump to the false conclusion that old blog posts that bring little or no traffic are impossible to revive. In fact, optimizing old blog posts can grow traffic just as well as creating new ones.

But the crux of the matter is how to detect and wisely optimize existing blog posts. And that's why I'm here.

- 1. Four Types of Blog Posts that Need Optimization

- 2. How to Detect Old Content for Further Revival

- 3. How to Optimize Old Blog Posts

- 4. Prioritize the Tasks

- 5. What Is Next?

- Summing Up

1. Four Types of Blog Posts that Need Optimization

I singled out these four major types:

- Blog posts with a drastic decrease in organic traffic. These blog posts brought traffic in the past, but over time they lost their relevance or lost out to fresher (by publication date) blog posts in the SERP.

- Old blog posts with low, nearly zero traffic volumes. These were likely underoptimized, or they covered a topic with little demand.

- Blog posts with outdated information.

- Blog posts with missing high-frequency keywords.

2. How to Detect Old Content for Further Revival

2.1. Search for the Blog Posts of the First Type

To gather the blog posts of the first type, I’m going to export data from Google Analytics. I'll need stats for two date ranges to compare them and find the posts that have significant traffic drops.

You can use the integration with Google Analytics and Search Console even in the free version of Netpeak Spider, which has no time limit and no cap on the number of analyzed URLs. Other basic features are also available in the Freemium version of the program.

To get access to the free version of Netpeak Spider, you just need to sign up, download, and launch the program 😉

P.S. Right after signup, you'll also have the opportunity to try all paid functionality and then compare all our plans and pick the one most suitable for you.

Comparing the two date ranges shows how much organic traffic the posts received in each period. The date range varies according to the age of the blog.

To get and export the results, I'll use Netpeak Spider.

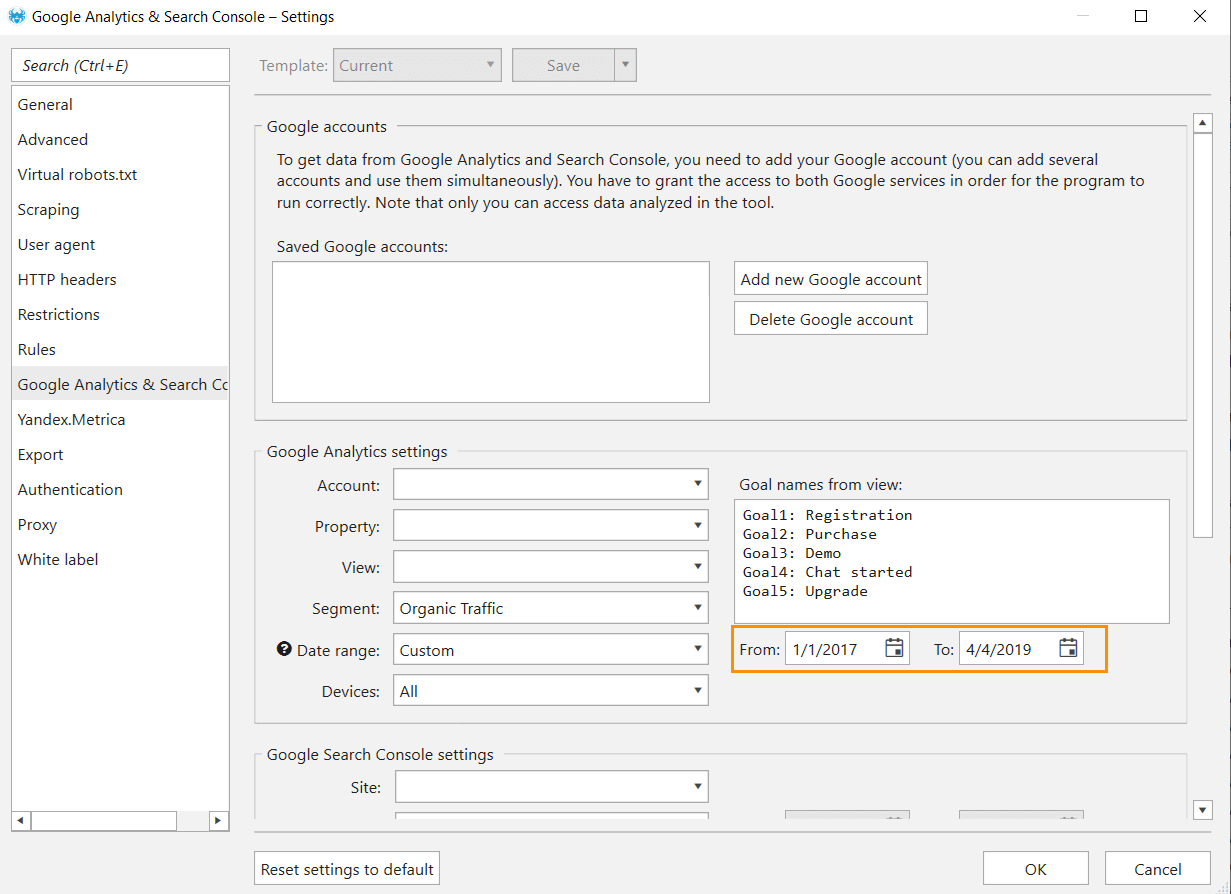

- In the 'Google Analytics & Google Search Console' settings, I add a Google account. I choose the property and the view I need, and select the date range to track traffic for the older period. Then I choose the 'Organic Traffic' segment. After everything is checked off, I save the settings.

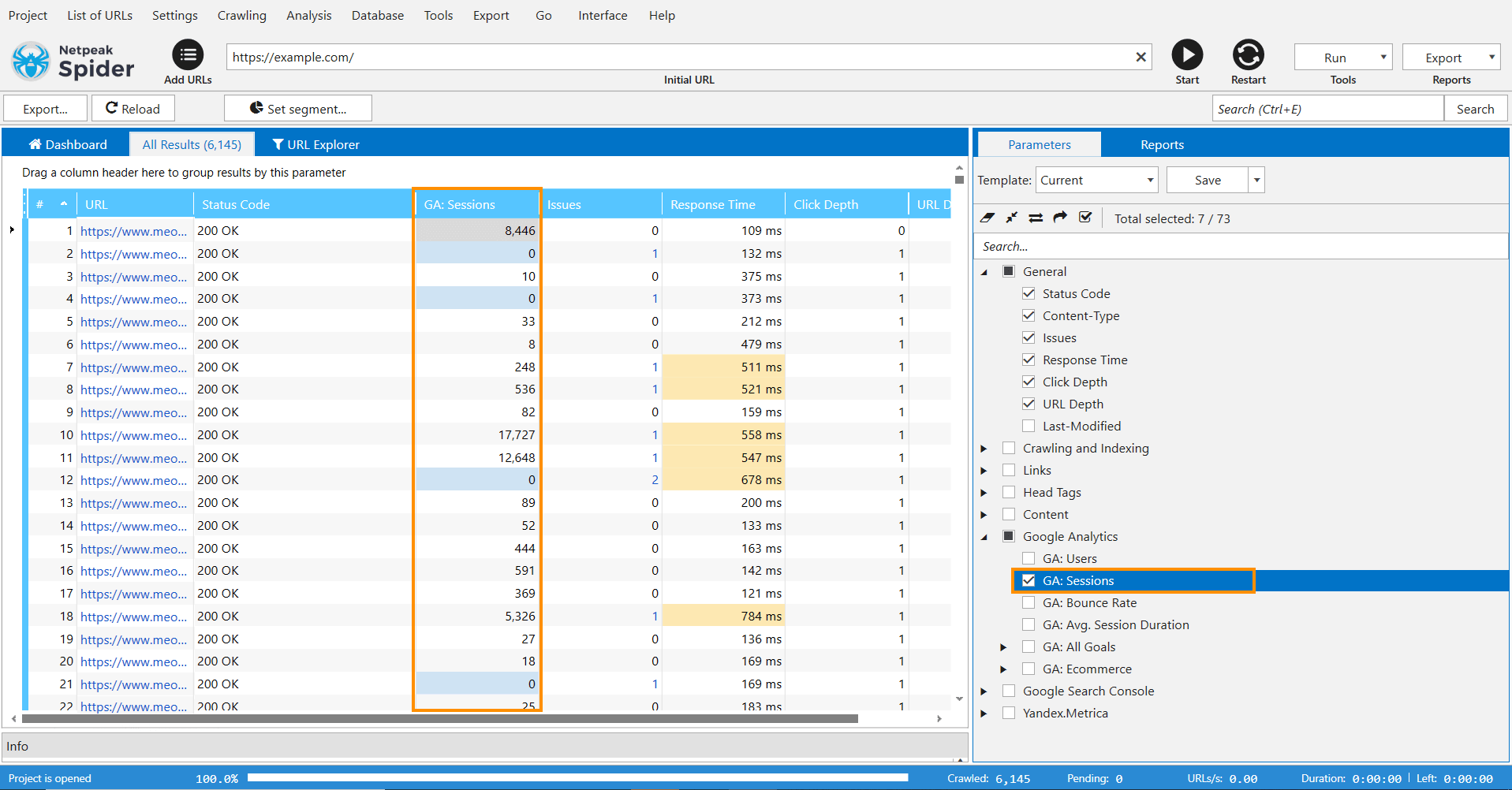

- In the sidebar, I tick the 'GA: Sessions' parameter in the 'Google Analytics' group.

- Next, I switch to the main window and enter the initial website URL.

- Finally, I hit the 'Start' button. When the crawling is completed, all data will be displayed in the main table.

- I export the data from Netpeak Spider and bring it to a separate table.

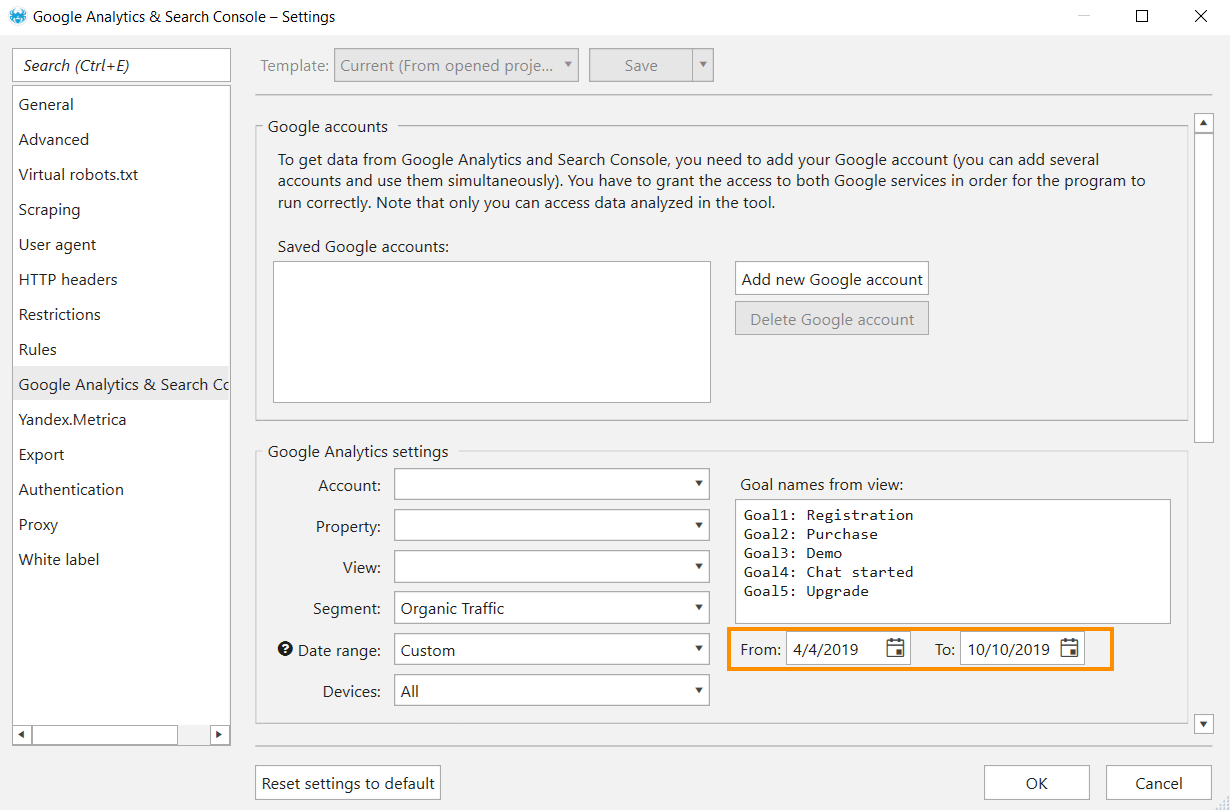

I repeat the same steps to gather session data for the newer period: the start point is six months before the current date, and the end point is the current date.

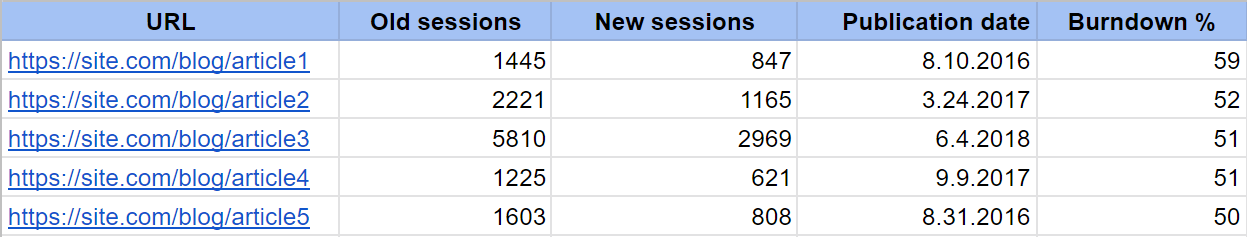

I export the data from the crawler and, with the help of the VLOOKUP formula, compare it to the older period's data in a single table. To diagnose the pages that underperform and need optimization more than others, I add this data:

- the publication date – a go-to metric that helps understand the relevance

- the number of page views

- engagement (upvotes, shares)

- burndown percentage – the remaining traffic compared to the volumes of the past

I scrape the page source code to get the publication date, the number of page views, and the comments.
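
The comparison of the two periods can also be sketched in a few lines of Python. This is only an illustration, assuming the two exports have already been loaded into mappings of URL to sessions; the function name `compare_periods` and the field names are hypothetical, not something Netpeak Spider produces.

```python
def compare_periods(old_sessions, new_sessions):
    """Join two {url: sessions} mappings and compute the burndown percentage."""
    rows = []
    for url, old in old_sessions.items():
        # Look the URL up in the newer export, like VLOOKUP with a default of 0.
        new = new_sessions.get(url, 0)
        # Burndown percentage: the remaining traffic compared to the older period.
        # Pages with no sessions in the older period get None (nothing to compare).
        remaining_pct = round(new / old * 100, 1) if old else None
        rows.append({"url": url, "old": old, "new": new, "remaining_pct": remaining_pct})
    # Pages that kept the smallest share of their traffic come first;
    # pages without a comparable value go to the end.
    rows.sort(key=lambda r: (r["remaining_pct"] is None, r["remaining_pct"] or 0))
    return rows


if __name__ == "__main__":
    # Made-up numbers, for illustration only.
    older = {"/post-a": 1000, "/post-b": 200, "/post-c": 0}
    newer = {"/post-a": 250, "/post-b": 180}
    for row in compare_periods(older, newer):
        print(row)
```

With these made-up numbers, `/post-a` comes first because only 25% of its earlier traffic remains, which makes it the most obvious candidate of the first type.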

2.2. Search for the Blog Posts of the Second Type

This is where the publication date comes in: it helps detect pages that accumulated few sessions and tell apart the likely reasons, which are insufficient optimization or simply a recent publication date. The pages that were published long ago and still failed to reach a minimum number of sessions are the ones we're looking for.
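
As a rough sketch, this filter could look like the following in Python. The thresholds (`min_age_days=365`, `min_sessions=100`) and the field names are assumptions made for the example; pick values that fit the age and traffic level of your own blog.

```python
from datetime import date


def find_stale_low_traffic(posts, today, min_age_days=365, min_sessions=100):
    """Return URLs of posts that are old enough but still below the session floor."""
    stale = []
    for post in posts:
        age_days = (today - post["published"]).days
        # A fresh post with few sessions may simply need more time,
        # so only posts older than the age threshold are flagged.
        if age_days >= min_age_days and post["sessions"] < min_sessions:
            stale.append(post["url"])
    return stale


if __name__ == "__main__":
    # Made-up posts, for illustration only.
    posts = [
        {"url": "/old-quiet", "published": date(2018, 1, 10), "sessions": 12},
        {"url": "/old-popular", "published": date(2018, 3, 5), "sessions": 4200},
        {"url": "/fresh-quiet", "published": date(2020, 5, 1), "sessions": 8},
    ]
    print(find_stale_low_traffic(posts, today=date(2020, 6, 1)))
```

Here only `/old-quiet` is flagged: `/fresh-quiet` has even fewer sessions, but it is too new to judge.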

2.3. Search for the Blog Posts of the Third Type

Unfortunately, searching for blog posts with outdated information is a purely manual process that can't be easily automated 😅

2.4. Search for the Blog Posts of the Fourth Type

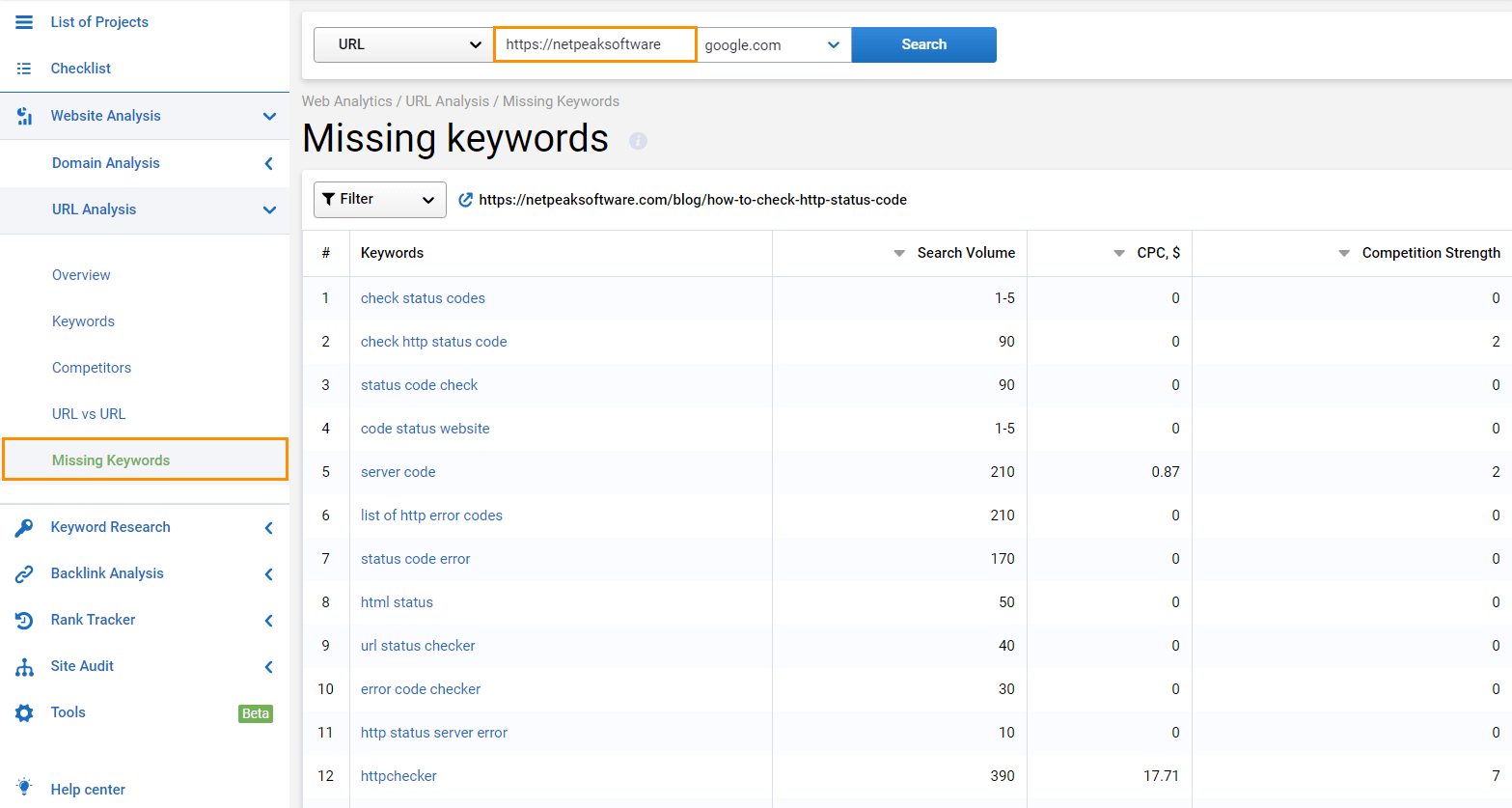

I use Serpstat to find the blog posts that are missing meaningful keywords. In this service, I enter each URL in turn and go to the 'Missing Keywords' section after the analysis is done.

All the data ends up in my table.

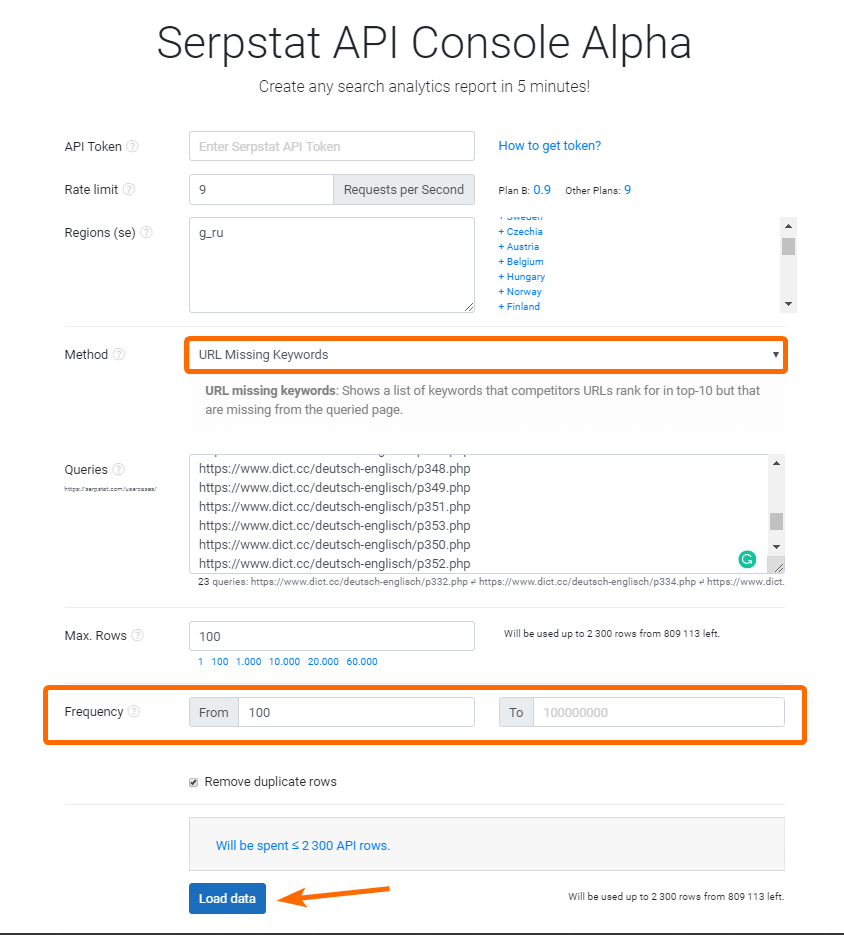

To quickly check the missing keyword phrases for many URLs at once, use the 'URL Missing Keywords' method in the Serpstat API Console. This is the condition you can use to obtain the necessary keywords:

2.5. Which Blog Posts Should Be Deleted and Which Ones Optimized

After all blog post types are gathered, I decide which ones will go through optimization and which ones will be ruthlessly deleted so that they don't burden the website.

I'm going to delete:

- The blog posts that are useless to update. For instance, any tutorials on Google+. They won't regain their relevance because the social network has been shut down.

- The blog posts that received little to no traffic, or that touch upon an issue irrelevant to your target audience's needs.

Which blog posts to optimize?

- Blog posts with missing meaningful keywords.

- Pages that used to receive traffic but became outdated over time and no longer bring value. They can be livened up and brought up to date.

3. How to Optimize Old Blog Posts

3.1. Sharpen Up Technical SEO

Technical SEO covers many aspects, but I'll focus on three of them:

- website load speed

We've already covered this topic in the blog post: 'How to Speed up Your Website with Netpeak Spider.'

- internal linking

- structured data

When these fundamental aspects of technical SEO are fine-tuned, it will be easier for visitors to navigate your website and for search robots to get more information about it, and search engines will be more likely to reward you with positive ranking changes.

3.2. Work with Missing Meaningful Keywords

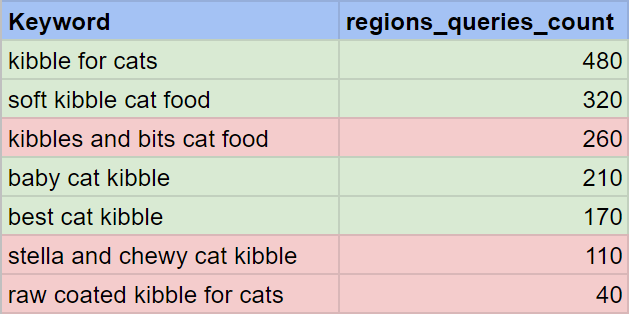

Earlier, I described how to search for missing keywords. When all keyword phrases are gathered, I remove the irrelevant and junk keywords, then filter the rest, group them into clusters, and calculate the overall frequency of each cluster.

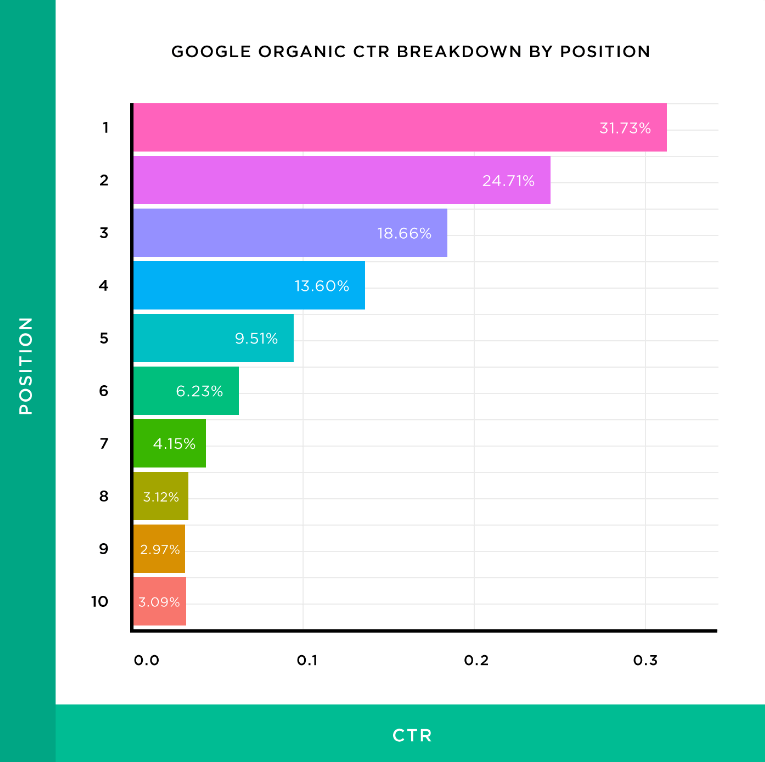

Next, I weigh the chances of reaching the top search results with these keyword phrases. Approximate traffic can be estimated by multiplying the overall frequency by the CTR that corresponds to the position the page currently holds. For example, if you outrank your competitors and sit in the top-3 positions, the overall frequency can be multiplied by a higher rate (~0.5).

You can take average rates from the chart compiled by Brian Dean based on his research.

Then I compare the amount of potential traffic in clusters and try to make predictions to prioritize further work with these clusters.
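
The arithmetic behind this prioritization can be sketched as follows. It is a minimal example under stated assumptions: the cluster names, keyword frequencies, and the 0.1 rate are made up for illustration, and only the ~0.5 rate for the top-3 positions comes from the text above. In practice, take the CTR values from the chart you trust.

```python
def estimate_cluster_traffic(clusters, ctr_by_cluster):
    """Rank clusters by estimated traffic = overall frequency * CTR."""
    estimates = []
    for name, keywords in clusters.items():
        # Overall frequency of a cluster is the sum of its keyword frequencies.
        overall_frequency = sum(keywords.values())
        estimated_traffic = round(overall_frequency * ctr_by_cluster[name])
        estimates.append((name, overall_frequency, estimated_traffic))
    # Clusters with the highest potential traffic come first.
    estimates.sort(key=lambda item: item[2], reverse=True)
    return estimates


if __name__ == "__main__":
    # Hypothetical clusters: {cluster name: {keyword: monthly frequency}}.
    clusters = {
        "old posts": {"update old blog posts": 700, "refresh old content": 300},
        "content audit": {"content audit": 1900, "content audit checklist": 500},
    }
    # Hypothetical CTR per cluster, chosen by the position the page holds now.
    ctr_by_cluster = {"old posts": 0.1, "content audit": 0.5}
    for name, frequency, traffic in estimate_cluster_traffic(clusters, ctr_by_cluster):
        print(f"{name}: frequency {frequency}, estimated traffic {traffic}")
```

In this example the 'content audit' cluster (2400 × 0.5 = 1200) clearly outweighs the 'old posts' cluster (1000 × 0.1 = 100), so it would be worked on first.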

3.3. Optimize Old Content

Once I have all the keywords I need, I start adding them to these content elements:

- title tag

- meta description tag

- H1-H3 headings

- first paragraphs of the text
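
To see which of these elements still lack a given keyword, a small check like the one below can help. It is a simplified sketch built on Python's standard `html.parser`: the names `KeywordPlacementParser` and `keyword_placement` are hypothetical, only the first `<p>` tag is treated as the opening text, and the match is a plain case-insensitive substring search.

```python
from html.parser import HTMLParser


class KeywordPlacementParser(HTMLParser):
    """Collect text from the title, meta description, H1-H3, and the first <p>."""

    def __init__(self):
        super().__init__()
        self.parts = {"title": "", "meta_description": "",
                      "headings": "", "first_paragraph": ""}
        self._current = None           # part the parser is currently inside
        self._seen_paragraph = False   # True once the first <p> has been closed

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.parts["meta_description"] = attrs.get("content") or ""
        elif tag == "title":
            self._current = "title"
        elif tag in ("h1", "h2", "h3"):
            self._current = "headings"
        elif tag == "p" and not self._seen_paragraph:
            self._current = "first_paragraph"

    def handle_endtag(self, tag):
        if tag == "p" and self._current == "first_paragraph":
            self._seen_paragraph = True
        if tag in ("title", "h1", "h2", "h3", "p"):
            self._current = None

    def handle_data(self, data):
        if self._current:
            self.parts[self._current] += data + " "


def keyword_placement(html, keyword):
    """Return {element: True/False} showing where the keyword already appears."""
    parser = KeywordPlacementParser()
    parser.feed(html)
    needle = " ".join(keyword.lower().split())
    # Collapse whitespace so line breaks inside tags don't hide a match.
    return {part: needle in " ".join(text.lower().split())
            for part, text in parser.parts.items()}


if __name__ == "__main__":
    page = """<html><head><title>How to Update Old Blog Posts</title>
    <meta name="description" content="A guide to refreshing content."></head>
    <body><h1>Update old blog posts</h1><p>Intro text about traffic.</p>
    <p>We update old blog posts later.</p></body></html>"""
    print(keyword_placement(page, "update old blog posts"))
```

For this sample page, the keyword is found in the title and the headings but not in the meta description or the first paragraph, so those two elements are the ones to rework.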

When that's done, I move on to partial or full editing of the blog post. This is worth doing when the text's language is overly complicated or when the topic is covered too thinly.

Read more → Updating your old content

Next, I check whether the images and videos in the blog posts are optimized.

Another source of traffic for the blog post is quality content in the comments. Encourage the readers to leave meaningful and comprehensive comments, because search engines often surface a page thanks to a relevant answer in its comments. A good example is the Moz blog, where comments contribute to better coverage of the topic.

3.4. Get Backlinks

The ways of getting backlinks are numerous. For instance, you can collaborate with other blogs like yours: backlink swaps, backlink placement on barter terms, etc.

You'll have to repeat all these steps whenever the content gets old again.

4. Prioritize the Tasks

I prioritize the work on the old content in this order:

- Blog posts with missing meaningful keywords.

- Blog posts receiving impressions for irrelevant queries.

- Blog posts with outdated data.

- Pages with content affected by technical SEO mistakes.

My approach may differ from yours. Every specialist prioritizes the tasks in a way that suits their pace the most. So it's totally up to you 😊

5. What Is Next?

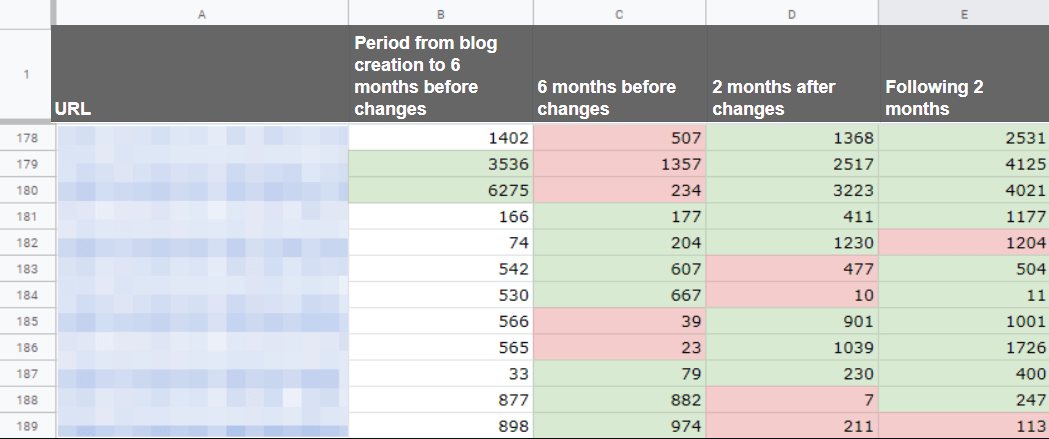

After optimization, regular page-by-page tracking of traffic changes is mandatory. This way, you can identify what exactly brings results and decide what is worth your efforts.

I recommend tracking changes every two months.

Summing Up

To increase website traffic by optimizing old content, you'll first need to diagnose the pages that underperform. Then identify which of them can be livened up and which are not worth reviving. Only after this 'prep' work can you get down to the main activities:

- sharpen up technical SEO

- work with missing meaningful keywords

- fill up the essential parts of the content with keywords

- get the backlinks

And, of course, regular tracking of traffic changes after the work is done is a must.

How do you optimize old content on your blog? It would be great if you shared your experience in the comments 👇