Why New Websites Aren't Ranking on Google News [Expert Research]

![Why New Websites Aren't Ranking on Google News [Expert Research]](https://static.netpeaksoftware.com/media/en/image/blog/post/d8d906bf/900x300/google-news.png)

Until December 2019, to get your website ranked on Google News, you had to submit a request via Google Publisher Center to be added to the News app. Requests were reviewed in a semi-automatic way, and many news sites were rejected.

On December 10, 2019, Google announced the launch of the new Publisher Center. At the same time, the Publisher Help Center stated:

‘Publishers no longer need to submit their site to be eligible for the Google News app and website. Publishers are automatically considered for Top stories or the News tab of Search. They just need to produce high-quality content and comply with Google News content policies.’

In other words, now the selection of websites for indexing and ranking on Google News is exclusively the algorithm’s duty, and the human factor (the influence of reviewers) is excluded.

Earlier it was quite challenging to get into the News app, but the new process of algorithmic site selection has become even more stringent. Publishers have started to complain en masse that their websites aren't ranking in the News tab after registering in the new center.

The Google team said that registering a website doesn't guarantee its ranking on Google News. In fact, the revamped center is just a publisher profile where you can customize the details and features of how your news is displayed. Unfortunately, nine months after the new center's launch, the Google News team has yet to provide an official explanation as to why sites aren't ranking in the News tab.

While answering publishers' questions in the Google News Publisher Help Community, I analyzed the websites their owners reported as not ranking. It turned out that many of them don't meet basic content and technical requirements or violate Google's guidelines for webmasters. However, there were also fairly high-quality sites.

I decided to do my own research on this issue.

Disclaimer: All the conclusions are my personal opinion based on the results of the analysis of my dataset. Your experience and the findings of other experts may differ from mine.

These results can be used to improve the website's quality and increase the likelihood of its ranking on Google News.

1. Investigation Course

1.1. First Stage

With the help of SEMrush, I got a list of the main search queries of one of the largest Ukrainian publications, which publishes news on various topics. Using a self-written scraper, I collected the top 30 links from the News tab for each of the 644 queries.

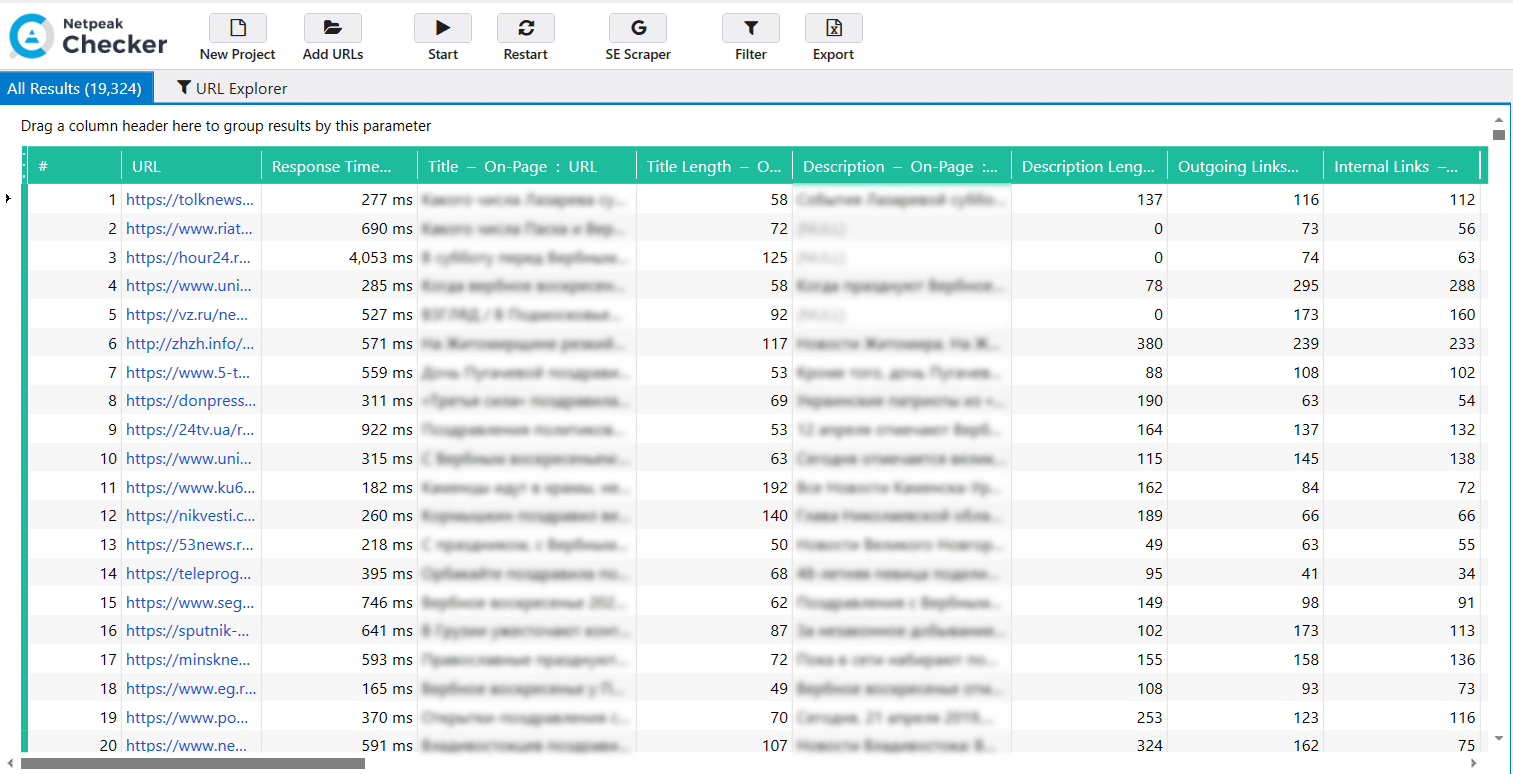

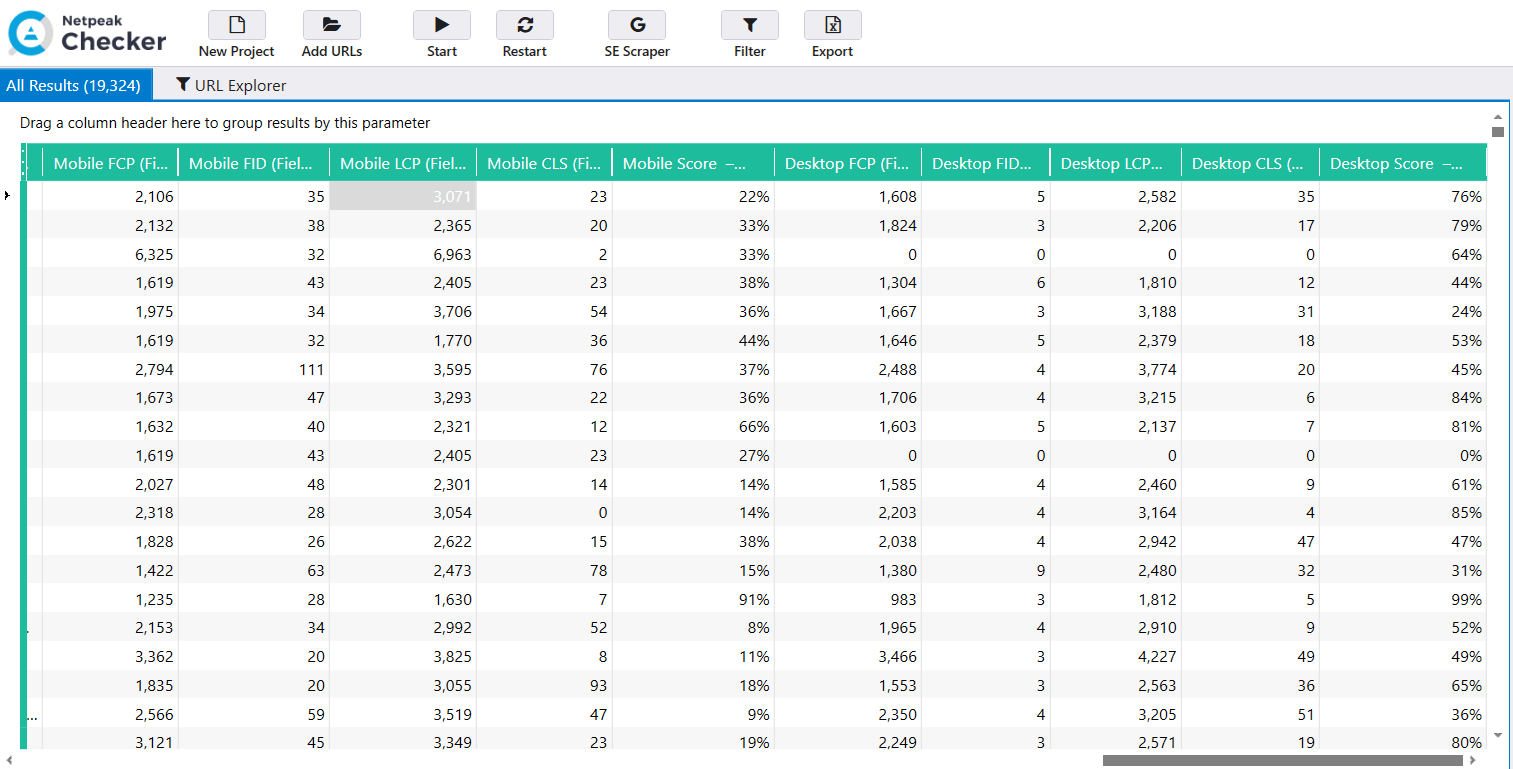

Then I uploaded these links (644 × 30 = 19320) into Netpeak Checker and extracted the data for these parameters:

- ‘Status Code’

- ‘Title’

- ‘Title Length’

- ‘Description’

- ‘Description Length’

- ‘Outgoing Links’

- ‘Internal Links’

- ‘External Links’

- ‘H1 Content’

- ‘H1 Length’

- ‘Content Size’

- ‘Words’

- ‘Content-Length’

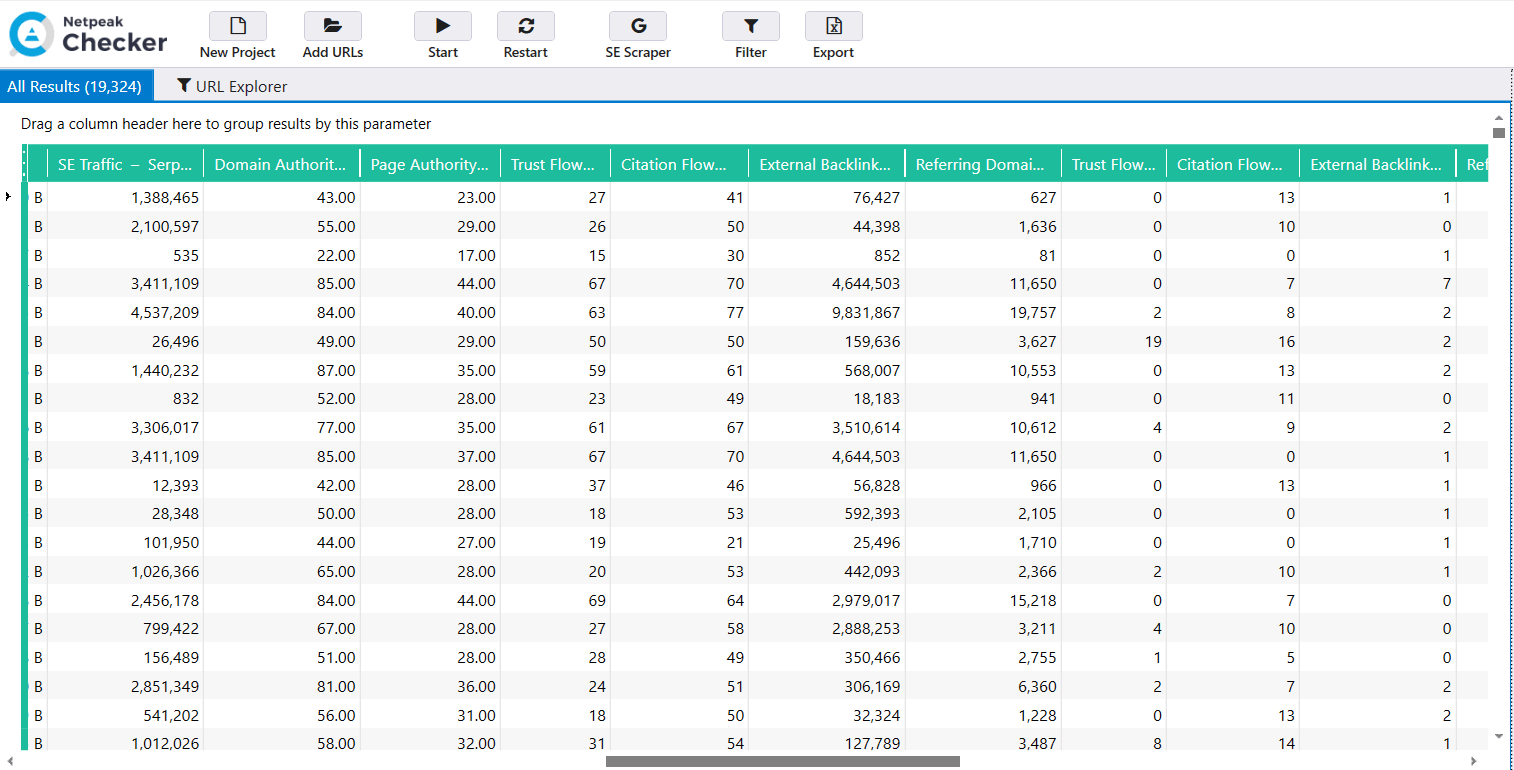

Using the Serpstat API in Netpeak Checker, I received data on traffic (the ‘SE Traffic’ parameter).

The Majestic API helped me receive the data for these parameters:

- ‘Host Parameters’: Trust Flow, Citation Flow, External Backlinks, Referring Domains.

- ‘URL Parameters’: Trust Flow, Citation Flow, External Backlinks, Referring Domains.

From the Google PageSpeed Insights API, I obtained these parameters for the mobile and desktop versions: FCP, FID, LCP, CLS, Mobile Score, and Desktop Score.
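Netpeak Checker handles the API calls internally. For readers who want to query PageSpeed Insights directly, here is a minimal sketch. It assumes the public v5 `runPagespeed` endpoint and the response field names as I understand them. The helper names `build_psi_url` and `extract_metrics` are hypothetical, and the current API reference should be checked before relying on any field (FID in particular may no longer be reported).

```python
from urllib.parse import urlencode

# Assumed public endpoint of the PageSpeed Insights API, version 5.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"

# Assumed mapping from the article's metric names to field-data keys
# under loadingExperience.metrics in the API response.
FIELD_METRICS = {
    "FCP": "FIRST_CONTENTFUL_PAINT_MS",
    "FID": "FIRST_INPUT_DELAY_MS",
    "LCP": "LARGEST_CONTENTFUL_PAINT_MS",
    "CLS": "CUMULATIVE_LAYOUT_SHIFT_SCORE",
}


def build_psi_url(page_url, strategy):
    """Build the request URL; strategy is 'mobile' or 'desktop'."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def extract_metrics(response):
    """Pull the four field metrics and the 0-100 performance score from a parsed JSON response."""
    metrics = response.get("loadingExperience", {}).get("metrics", {})
    result = {
        name: metrics.get(key, {}).get("percentile")
        for name, key in FIELD_METRICS.items()
    }
    # The Lighthouse performance score is reported on a 0-1 scale.
    score = (
        response.get("lighthouseResult", {})
        .get("categories", {})
        .get("performance", {})
        .get("score")
    )
    result["Score"] = None if score is None else round(score * 100)
    return result
```

Calling `build_psi_url` once with `"mobile"` and once with `"desktop"` for each URL would yield the two sets of values listed above. Missing metrics come back as `None`, which is common for pages with too little field data.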

You can check parameters and gather data from these services even in the free version of Netpeak Checker, which has no time limit and no cap on the number of analyzed URLs. Other basic features are also available in the Freemium version of the program.

To get access to free Netpeak Checker, you just need to sign up, download, and launch the program 😉

P.S. Right after signup, you'll also have the opportunity to try all paid functionality and then compare all our plans and pick the most suitable for you.

Since the key question is timing (when a website was created relative to the launch of the new Publisher Center), I collected the Creation Date and Root Domain parameters from Whois.

After collecting and cleaning all the data, 18588 links from the Google Search News tab remained.

As it turned out, all these links belonged to 2270 sites, of which only 12 (0.5%) were launched after the release of the revamped Publisher Center. Some of these new websites sit on dropped (expired) domains; the others are well-known news sites that have changed their domain for some reason.
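The share of new sites can be reproduced from the Whois data in a few lines. This is a minimal sketch, assuming each link has already been reduced to a (root domain, creation date) pair. The function name and the sample rows are hypothetical and are not taken from the actual dataset.

```python
from datetime import date

# Launch date of the revamped Publisher Center, as stated earlier in the article.
PUBLISHER_CENTER_LAUNCH = date(2019, 12, 10)


def share_of_new_sites(links):
    """Return (total_sites, new_sites, percent_new) for (root_domain, creation_date) pairs."""
    # Many links share one root domain, so keep one entry per site.
    creation_by_site = {}
    for root_domain, created in links:
        creation_by_site.setdefault(root_domain, created)

    total = len(creation_by_site)
    new = sum(
        1 for created in creation_by_site.values()
        if created > PUBLISHER_CENTER_LAUNCH
    )
    percent = round(100 * new / total, 1) if total else 0.0
    return total, new, percent


# Hypothetical rows for illustration only.
sample = [
    ("example-old.com", date(2008, 5, 1)),
    ("example-old.com", date(2008, 5, 1)),
    ("example-new.com", date(2020, 3, 15)),
    ("example-mid.com", date(2015, 1, 20)),
]
print(share_of_new_sites(sample))
```

With the figures from the research, the same arithmetic gives `round(100 * 12 / 2270, 1)`, which is 0.5.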

With that in mind, we can draw the first conclusion: in most cases, the new Google News ranking algorithm indeed ignores websites that are less than a year old.

However, given that some new websites still rank, I assume it's not a technical problem (a bug). In my opinion, when training the BERT algorithm, Google set the thresholds too high for a criterion that can be conventionally called ‘Launch date’.

1.2. Second Stage

At the second stage, to detect possible differences and dependencies, I selected 10 websites from the Google News Help Community whose owners complained about ranking difficulties.

From SEMrush, I obtained 7549 links for these 10 websites. Then, in Netpeak Checker, I collected the same data as for the first selection (section 1.1) and merged the two datasets.

I then labeled the merged selection by two criteria, each with two classes:

- Website age → Age: More than 1 year, Less than 1 year.

- Ranking feature → News Ranking: Yes, No.
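The labeling step can be sketched as follows. This is an illustration under stated assumptions, not the exact procedure used in the research: the function names are hypothetical, the reference date `as_of` is an assumption (the article doesn't state the collection date), and a site that is exactly one year old is treated here as ‘Less than 1 year’.

```python
from datetime import date


def one_year_before(day):
    """Return the same calendar day one year earlier (28 Feb for 29 Feb)."""
    try:
        return day.replace(year=day.year - 1)
    except ValueError:  # 29 February has no counterpart in the previous year
        return day.replace(year=day.year - 1, day=28)


def label_site(creation_date, in_news_tab, as_of):
    """Assign the two class labels: Age and News Ranking."""
    older = creation_date < one_year_before(as_of)
    return {
        "Age": "More than 1 year" if older else "Less than 1 year",
        "News Ranking": "Yes" if in_news_tab else "No",
    }


# Hypothetical example: a site created in March 2020, checked in September 2020.
print(label_site(date(2020, 3, 15), False, as_of=date(2020, 9, 1)))
```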

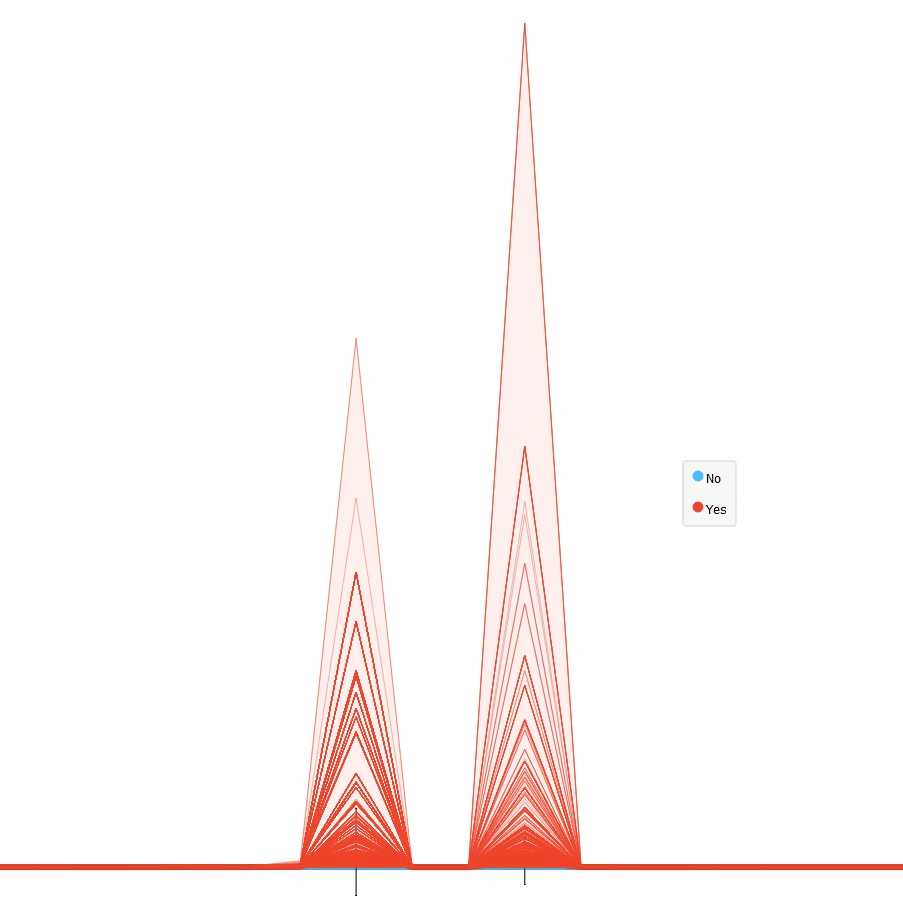

During the data mining process, I used a ‘Line Chart’, a standard visualization widget for displaying data profiles, usually of ordered numeric data.

It showed that the attributes that best separate the classes are traffic (SE Traffic according to Serpstat) and the number of external backlinks (Majestic: Host Parameters → External Backlinks).

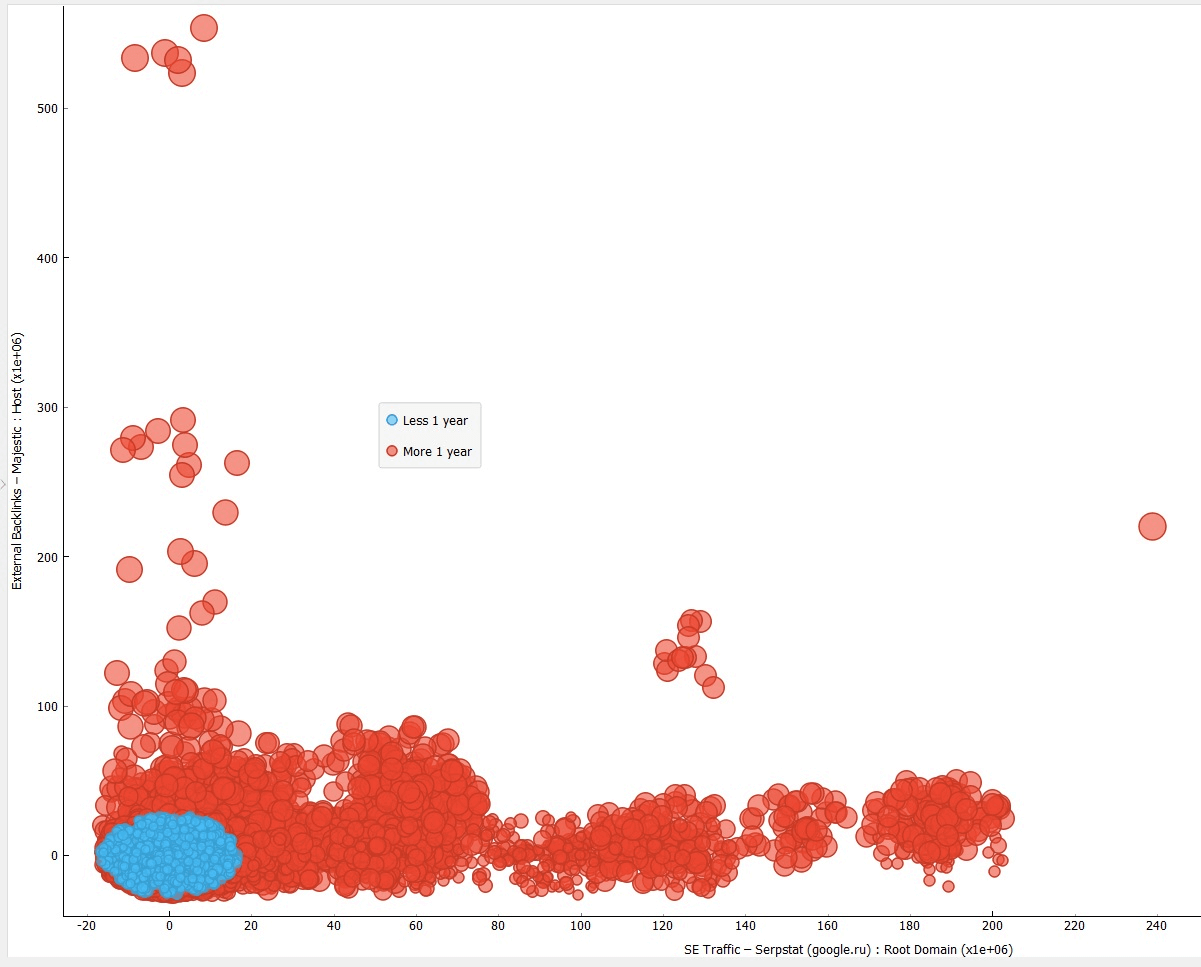

The screenshot below demonstrates the distribution of websites according to the launch date.

Traffic volume is on the horizontal axis, the number of external links pointing to the website is on the vertical axis, and the size of each element reflects Majestic Trust Flow (‘Host Parameters’). The sites themselves are divided into two classes by creation date: ‘Less than 1 year’ (blue elements) and ‘More than 1 year’ (red elements).

As you can see in the screenshot, websites less than a year old have significantly fewer quality backlinks and less traffic. This makes sense since new websites aren't very popular and usually have few pages with high-quality content.

Google Search and Discover are undoubtedly different, but they share the same ‘E-A-T’ principles when it comes to content. A little refresher: ‘E-A-T’ stands for Expertise, Authoritativeness, and Trustworthiness.

With that in mind, a paragraph from Search Console Help about Discover might provide some explanation for website ranking on Google News:

‘Our automated systems surface content in Discover from sites that have many individual pages that demonstrate expertise, authoritativeness, and trustworthiness (E-A-T).’

If you compare two publishers, a new one and an established one, the latter will of course have many more pages that can demonstrate a high level of ‘E-A-T’.

In my opinion, looking at the graph above, the number of pages in the Google index and quality traffic may signal that the content is written by experts. The number of backlinks that pass PageRank determines the authority of the page. To some extent, the website's launch date may be a criterion for the website's trust.

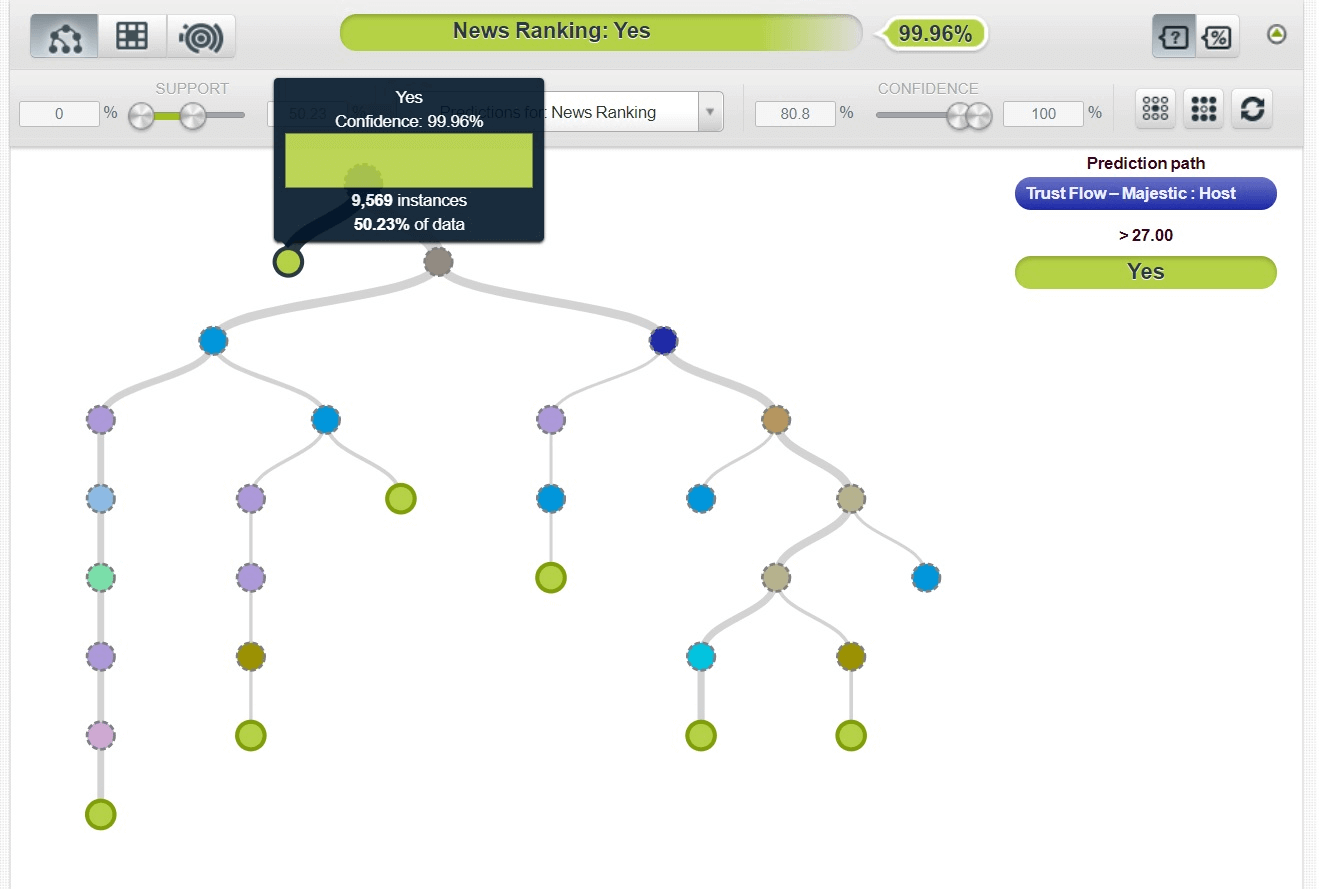

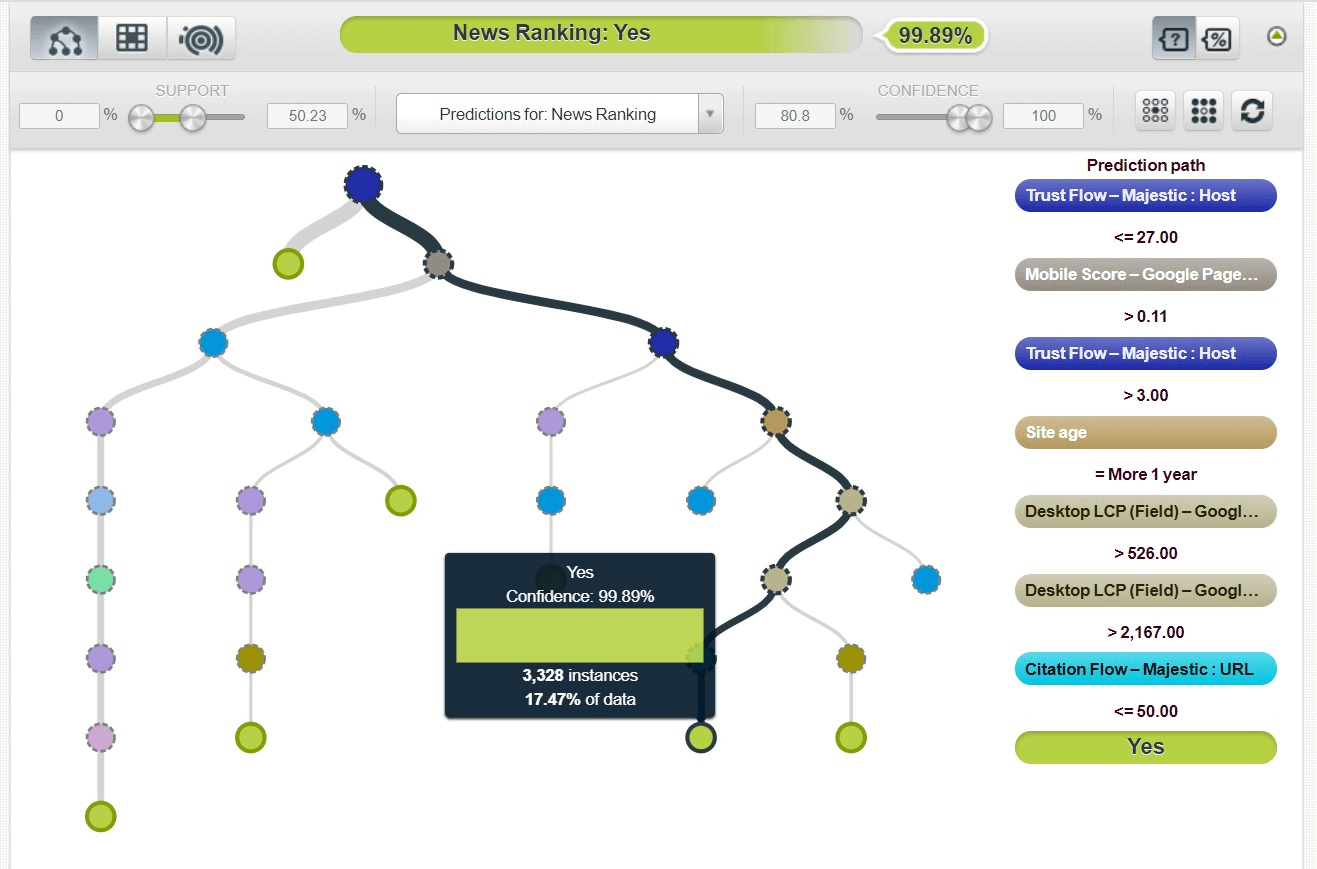

Using machine learning, I created a decision tree model in BigML with an accuracy of 99.9%. The main predictor in the decision tree is the host-level Majestic Trust Flow metric.

Most likely scenario: if Majestic TF is over 27, the website is expected to rank in the Google News tab. It's pretty hard to reach this level of Trust Flow in a year, so it makes sense that in this scenario new websites have problems ranking on Google News.

In the second most likely scenario, even if the Majestic Trust Flow score is only in the range from 3 to 27, a website that has been around for more than a year is highly likely to rank in the News tab.
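The two scenarios can be written down as simple rules. This is only a sketch of the two branches described here, not the full BigML model: the function name is hypothetical, and any case outside these two branches returns `None` because the text doesn't give exact thresholds for it.

```python
def likely_in_news_tab(host_trust_flow, age_years):
    """Return True for the two 'likely to rank' scenarios, otherwise None.

    host_trust_flow: Majestic Trust Flow of the host.
    age_years: website age in years (fractions allowed).
    """
    # Scenario 1: Trust Flow over 27, regardless of age.
    if host_trust_flow > 27:
        return True
    # Scenario 2: Trust Flow from 3 to 27 and the site is older than one year.
    if 3 <= host_trust_flow <= 27 and age_years > 1:
        return True
    # Other branches of the tree are not spelled out in the text.
    return None


print(likely_in_news_tab(30, 0.5))  # scenario 1
print(likely_in_news_tab(10, 2))    # scenario 2
print(likely_in_news_tab(10, 0.5))  # not covered by either scenario
```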

Considering the second scenario, we can assume that, under the ‘E-A-T’ model, the primary selection criterion for new websites is Authority, which in most cases is built through high-quality backlinks (PageRank). The second most important criterion is the website age (launch date).

These findings align with the results previously obtained using data mining (screenshot above, showing website distribution by launch date).

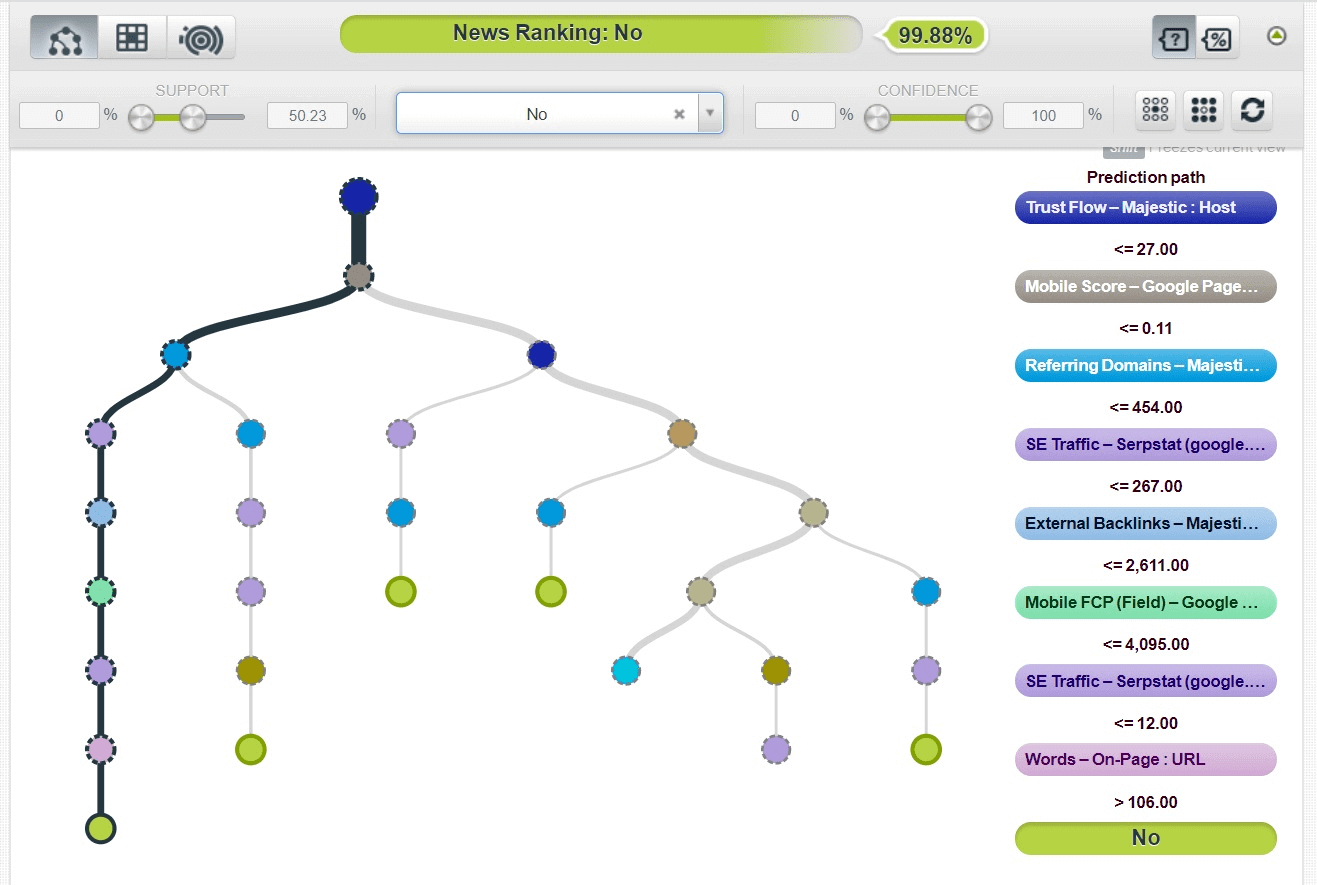

Looking at the most likely scenario for a site not ranking in the Google Search News tab, a website may fail to rank in News if it has:

- few quality backlinks and referring domains

- slow mobile version speed

- poor organic traffic

Takeaway

Many new websites don’t meet the technical requirements and content policies of Google News. Such sites rightly should not rank in the Google Search News tab.

Google uses machine learning to select sites for ranking, and apparently, the selection criteria are relatively high. Perhaps the developers intended to reduce the number of impressions for spam and low-quality sites on Google News.

News websites have long been treated as YMYL (Your Money or Your Life) content and are evaluated against high E-A-T standards.

The results show that the essential ranking signals are most likely:

- The quality and quantity of backlinks as the main signal.

- Trust and the elements that play a role in building it, including website age (launch date).

- The volume of organic traffic, which correlates with engagement in publications and the expertise of an author (or publisher).