How to get your website to show up on Google: Tips that work

If your website is not indexed, it doesn't exist. If you want to be shown in Google search results, you need to regularly monitor, update, and optimize your content. If your aim is not just to make it to the list but to rank high in SERPs, you’ve got to work on it. In this blog post, you’ll learn how to get your website to show up on Google and improve your SEO metrics with simple but effective steps.

What Are Crawling, Indexing, And Ranking, Anyway?

Crawling, indexing, and ranking are the full-time job of search engines. It’s a never-ending process in which crawling bots systematically discover content on different websites, index it, and decide how high to rank these pages.

In simple words, crawling is the process in which search engine bots discover new and updated content by following links. Google fetches pages it already knows about and then follows the links on these pages to find new URLs.
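To make link-based discovery concrete, here is a minimal sketch of a crawler walking a site's links breadth-first. The `site` link map and the `discover` function are hypothetical and exist only for illustration; a real crawler fetches pages over HTTP and is far more involved.

```python
from collections import deque


def discover(start, links):
    """Return pages reachable from `start` by following links, in discovery order."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        page = queue.popleft()
        order.append(page)
        # Follow every link on the page that has not been seen yet.
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return order


# Hypothetical site: each key is a page, each value lists the pages it links to.
site = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/"],
    "/orphan": ["/"],  # no other page links here
}

discovered = discover("/", site)
print(discovered)
```

In this sketch `/orphan` is never discovered because no page links to it, which is why internal links and sitemaps matter for crawling.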

After being crawled, these pages are added to a database called the Google index. It’s a library that contains information about each page, such as its text and metadata like titles, headers, links, images, etc.

However, being indexed doesn’t mean being ranked. Google’s algorithms pick only the most relevant and user-friendly pages from its index and rank them accordingly. Here’s what you need to know to ensure that the process of crawling-indexing-ranking is seamless and beneficial for your website.

The Page Not Only Has To Be Valuable, But Also Unique

How do you get your website to show up on Google? In December 2022, Google extended its E-A-T quality guidelines to E-E-A-T, adding first-hand experience as a key quality criterion. This means authentic content based on real-life experience will beat generic information. As more and more content makers use AI to write generic content, first-hand experience is what sets authentic content apart from dull, general content. That’s why it’s crucial to write unique content backed up by proven data and real-life experience.

Have A Regular Plan That Considers Updating And Re-Optimizing Older Content

How do you get your website on Google? You can’t just roll out tons of content, leave it there, and hope for the best. You need to regularly optimize and improve your old content, update or redirect content that doesn't bring you traffic, and improve high-performing content to make it work even better.

The first thing to do to get your site indexed on Google is to include updating and re-optimizing your content in your annual content plan. Here are the main benefits of a content audit for your website:

- Understand what content performs best

- Find “weak points” in your content

- Analyze main content metrics and improve your content

According to Semrush, 53% of marketers say updating their content helped increase engagement, while 49% saw increased traffic and rankings.

Remove Low-Quality Pages And Create A Regular Content Removal Schedule

With the help of tools such as Google Analytics, Ahrefs, Google Search Console, or Netpeak Spider, you can take an inventory of your content and analyze the following metrics:

- Traffic

- Main keywords

- The average ranking position of the primary target keyword

- Backlinks

- Date of publication

- The cluster it belongs to

After that, try to answer the following questions to improve how Google indexes your website:

- Is there content that generates little traffic?

- What content gathers the most views?

- What content has the best conversion rates?

- Is there any content that gets a lot of impressions in Google search results but little or no clicks?

If you have any duplicate or outdated content, feel free to delete it or redirect it to the relevant page. If you see that your content ranks on the first page for some keywords, optimize it further to reach the top positions.
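As a rough illustration, the audit questions can be turned into a small script. The sketch below uses made-up numbers, and the thresholds (`min_impressions`, `max_ctr`, `low_traffic_clicks`) are assumptions to adjust for your own site rather than recommended values.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Click-through rate; 0.0 when the page has no impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def flag_pages(pages, min_impressions=1000, max_ctr=0.01, low_traffic_clicks=10):
    """Group pages that need attention; all thresholds are illustrative assumptions."""
    report = {"low_ctr": [], "low_traffic": []}
    for page in pages:
        if page.impressions >= min_impressions and page.ctr <= max_ctr:
            # Many impressions but few clicks: review the title and description.
            report["low_ctr"].append(page.url)
        elif page.clicks < low_traffic_clicks:
            # Little traffic overall: candidate for an update, merge, or redirect.
            report["low_traffic"].append(page.url)
    return report


# Hypothetical export, e.g. copied from a search performance report.
pages = [
    PageStats("/guide", impressions=5000, clicks=20),
    PageStats("/pricing", impressions=800, clicks=120),
    PageStats("/old-news", impressions=40, clicks=1),
]

audit = flag_pages(pages)
print(audit)
```

Pages in `low_ctr` are the ones that get impressions but few clicks, while `low_traffic` lists content that generates little traffic overall.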

Now, let’s see how to get Google to crawl your site.

Make Sure Your Robots.txt File Does Not Block Crawling To Any Pages

Your robots.txt file gives instructions to search engines and may block them from crawling some pages. Sometimes this is intentional: you may want to keep Google away from duplicate pages, private pages, or resources like PDFs and videos.

But if your robots.txt file tells search engines not to crawl some important pages or even the whole website, those pages won’t be indexed properly. Each group of rules in a robots.txt file consists of two parts:

- “User-agent” identifies the crawler

- The “Allow” or “Disallow” tells the crawler to crawl or not to crawl a specific page or website

That’s why, to get your website indexed on Google, you need to review your robots.txt and check that no directive prevents Google from crawling your site.
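One way to run this check is with Python's standard `urllib.robotparser` module. The sketch below parses a sample robots.txt from a string; the rules and the example.com URLs are made up for illustration, and Python's parser may differ from Googlebot in edge cases such as wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace it with the body of your own file.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /drafts/
"""


def is_crawlable(robots_body, user_agent, url):
    """Return True if the given robots.txt body lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_body.splitlines())
    return parser.can_fetch(user_agent, url)


for url in ("https://example.com/blog/post", "https://example.com/drafts/post"):
    print(url, is_crawlable(ROBOTS_TXT, "Googlebot", url))
```

To test a live site instead, `RobotFileParser` also offers `set_url()` and `read()` to download the file before calling `can_fetch()`.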

Check To Make Sure You Don’t Have Any Rogue Noindex Tags

"Noindex" tags are directives in the HTML code that instruct search engines not to index that particular page. If a page has a "noindex" tag, search engines will skip indexing that page, and it won't show up in search results. To check if your content has a "noindex" tag, you can follow these steps:

- Open the webpage in your browser.

- Right-click on the page and select "View Page Source" or "Inspect."

- Look for a <meta name="robots"> tag in the HTML code whose content attribute includes "noindex". This tag is usually in the <head> section of the page.
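If you have many pages to check, the manual steps above can be approximated in code. This is a minimal sketch using Python's standard `html.parser`; the `has_noindex` helper is a hypothetical name, and it only inspects the HTML you pass in.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            content = attr_map.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


blocked = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
allowed = '<html><head><meta name="robots" content="index, follow"></head></html>'
print(has_noindex(blocked), has_noindex(allowed))
```

Note that a noindex directive can also be sent in the `X-Robots-Tag` HTTP response header, which this sketch does not check.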

Make Sure That Pages That Are Not Indexed Are Included In Your Sitemap

Take the following steps to check if non-indexed pages are included in your sitemap:

- Access your sitemap

A sitemap is an XML file that lists all pages on your site that you want search engines to index. It is commonly found at sitename.com/sitemap.xml, although the exact path depends on your setup.

- Check the sitemap content

You can view the content with a text editor or use online tools that allow you to visualize the sitemap.

- Analyze individual pages

For each page that is not indexed, inspect the HTML code to see if there's a noindex meta tag. You can do this by right-clicking on the page, selecting "View Page Source" (or a similar option depending on your browser), and searching for the meta tags. Look for something like <meta name="robots" content="noindex">.

- Use Google Search Console

Google Search Console provides detailed information about your website's performance in Google search. Navigate to the "Pages" report under "Indexing" (previously called "Coverage") in Google Search Console to see information about indexed and non-indexed pages and any issues your website may have.
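The sitemap comparison can be sketched with Python's standard `xml.etree.ElementTree`. The sample sitemap and the list of non-indexed URLs below are made up; in practice the list would come from your Search Console export, and the sketch assumes a plain URL sitemap rather than a sitemap index file.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content; load your own file or download it instead.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""


def sitemap_urls(xml_text):
    """Return the set of URLs listed in <loc> elements of a URL sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def missing_from_sitemap(xml_text, not_indexed):
    """Return the non-indexed URLs that the sitemap does not list."""
    listed = sitemap_urls(xml_text)
    return [url for url in not_indexed if url not in listed]


# Hypothetical list of pages reported as not indexed.
not_indexed = ["https://example.com/blog/", "https://example.com/pricing/"]
missing = missing_from_sitemap(SAMPLE_SITEMAP, not_indexed)
print(missing)
```

Any URL in `missing` is a non-indexed page that search engines cannot find through the sitemap, so it is a candidate to add.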

Ensure That Rogue Canonical Tags Do Not Exist On-Site

Rogue canonical tags point search engines to the wrong preferred version of a page. Follow these steps to ensure that rogue canonical tags do not exist on your site.

- Review Site Code:

Inspect the HTML code of your website's pages. Look for the canonical tag in the <head> section of each page. The canonical tag typically looks like this: <link rel="canonical" href="https://www.example.com/correct-url">.

- Check Content Management System (CMS):

If you're using a CMS, check for any automated processes or plugins that might add canonical tags. Some CMS platforms automatically generate canonical tags based on configuration settings or plugins.

- Search for Incorrect Canonical Tags:

Use tools like Google Search Console or third-party SEO auditing tools to identify pages with canonical tags. Verify that the canonical URLs align with your SEO strategy and do not point to incorrect or irrelevant pages.
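For a quick spot check, the code review step can be sketched in Python with the standard `html.parser`. The `canonical_status` helper and its status labels are hypothetical, and the comparison ignores only a trailing slash, which is a simplifying assumption.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        rels = attr_map.get("rel", "").lower().split()
        if "canonical" in rels and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def canonical_status(html, expected_url):
    """Return 'ok', 'missing', 'multiple', or 'mismatch' for a page's canonical tag."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return "missing"
    if len(parser.canonicals) > 1:
        return "multiple"
    found = parser.canonicals[0].rstrip("/")
    return "ok" if found == expected_url.rstrip("/") else "mismatch"


page = '<head><link rel="canonical" href="https://www.example.com/correct-url"></head>'
print(canonical_status(page, "https://www.example.com/correct-url"))
print(canonical_status(page, "https://www.example.com/other-url"))
```

A "mismatch" or "multiple" result does not always mean an error, but those pages are worth reviewing by hand.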

Submit Your Page To Google Search Console

Last but not least, submit your page to Google Search Console. It’s the easiest way to maintain a healthy website, as it requires almost no effort from you. All you need to do is submit your website and receive regular emails about any issues or errors that need to be fixed.

How To Improve Your SEO Metrics With Netpeak Spider

How do you check whether Google indexes your site? Netpeak Spider is an all-in-one SEO tool that provides insights about any website. You can quickly check if Google indexes your page, get tips on what to improve in your optimization strategy, and define the most important metrics for SEO. With Netpeak Spider, you can check Google index status and detect any related issues in just a few moments. Here is how to use it.

- Launch Netpeak Spider and select the necessary analysis parameters

Choose the parameters you need to analyze and add the target URLs. Go to the "Crawling and Indexing" section in Parameters and select the following: "Compliance," "Allowed in Robots.txt," "Directive in Robots.txt," and "Meta Robots."

- Start scanning and retrieve all the data you need for analysis

Sort out the links and parameters and click "Start" to initiate the crawling process. You will see the results in a table where you can easily track every link together with the requested data.

- Export the report with issues

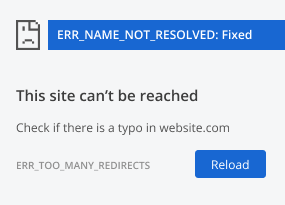

Netpeak Spider not only checks whether Google can index a page but also detects other SEO-related errors or problems. Go to "Reports" and then "Issues" to see the complete list, which includes broken links or redirects, non-compliant canonical URLs, 4xx error pages, etc.

Conclusion

Now you know how to get Google to crawl your website. First of all, you need to set aside enough time to optimize your website content, keep it updated, and regularly scan your website for any errors. However, being indexed doesn’t automatically mean your website will rank. To rank, you need to regularly analyze your main keywords, optimize your content for them, and create human-oriented, unique, detailed, and authentic content.
