Netpeak Checker 3.0: SERP Scraping


Folks, in this post you'll find out what we've prepared for you in the Netpeak Checker 3.0 release. I'll also describe how the tool has changed and why you need Netpeak Checker in the first place, as that's one of the most frequent questions from our users :)

- Changes in Netpeak Checker 3.0

- Important Changes Over the Past Year

- What Tasks Netpeak Checker Solves

- In a Nutshell

- Surprise

Watch a detailed video overview about the update → 'Netpeak Checker 3.0: New Version Overview'

I. Changes in Netpeak Checker 3.0

1. SE (Search Engines) Scraper

We've always wanted to highlight that Netpeak Checker is a multifunctional tool with numerous applications in digital marketing. And now you'll have even more of them. With a new tool nicknamed 'SE Scraper', you can get Google, Bing, Yahoo, and Yandex search results in a structured table with a lot of useful data.

SERP Scraping is a Pro feature of Netpeak Checker. Eager to have access to this and other PROfessional features such as estimation of website traffic and export to Google Drive / Sheets + all features from the Standard plan?

Hit the button to purchase the Pro plan, and get the most inspiring insights!

1.1. How to Use SE Scraper

Follow these easy steps to get desired results:

- Open 'SE Scraper' on the dashboard.

- Enter the list of search queries in the 'Queries' tab. Enter each query on a new line; you can also use search operators.

- Go to the 'Settings' tab.

- Choose search engine: Google, Yahoo, Bing, or Yandex (or all of them at once).

- Choose the number of results you want to get. You can get 1, 3, 10, 50, maximum (the tool will try to obtain the maximum number of search results) and custom (any number of results you want to get but no more than 1,000).

- Choose which additional snippet types you want to scrape besides regular results: video, images, news, sitelinks.

- Use additional settings if necessary: 'Search engines' (you can read more about it below), 'CAPTCHA' (use CAPTCHA auto-solving to scrape search results faster), 'List of proxies' (use proxy in order not to run into CAPTCHA).

- Press the 'Start' button to launch scraping. You'll get the results in a table with a lot of parameters.
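If you prepare query lists outside the program, the input rules from the steps above (one query per line, a custom result count of no more than 1,000) can be sketched in a few lines of Python. This is only an illustration: `parse_queries` and `resolve_result_count` are hypothetical helper names, not part of Netpeak Checker, and the 'maximum' option is left out because its exact value depends on the search engine.

```python
CUSTOM_LIMIT = 1000  # custom result counts may not exceed 1,000


def parse_queries(text: str) -> list[str]:
    """Split a multi-line string into queries, one per non-empty line."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def resolve_result_count(value: int) -> int:
    """Accept a preset (1, 3, 10, 50) or any custom count up to CUSTOM_LIMIT."""
    if 1 <= value <= CUSTOM_LIMIT:
        return value
    raise ValueError(f"result count must be between 1 and {CUSTOM_LIMIT}")


queries = parse_queries("seo tools\n\n  site:example.com checker \n")
```

Search operators such as `site:` simply stay part of the query string.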

1.2. What Data You Can Get

The main features of our search results scraper are the parameters you can check:

- URL → page address.

- Position → indicates URL position in corresponding SERP. Pay attention that 'Sitelink' URLs hold the same position as the main result.

- Snippet type → it can be a regular result, video, image, news, or sitelink.

- Title → page title in SERP snippet. It is usually generated from <title> tag, but be attentive because title depends on the query. It means that one page can be shown differently depending on the search query.

- Description → description of the page under Title in SERP snippet. Just like a Title, description can be formed depending on the search query.

- Highlighted text → indicates text marked as bold in SERP snippet: this way search engines highlight exact match for the query or similar terms (synonyms). If there are several values, they are divided by commas.

- Sitelinks → texts (anchors) used for sitelink extensions in SERP snippet for certain search result. Divided by commas, if there are several links in snippet.

- Rating (review snippet) → indicates rating of the page, if it's shown in SERP snippet. Rating value is formed according to structured data in source code of the page.

- Featured snippet → indicates if the URL is mentioned in a special featured snippet block at the top of the search results page.

- Host → indicates host of the page from SERP. For instance, if an initial URL type is [https://subdomain.domain.com/page.html], then the cell will contain [subdomain.domain.com] value.

- Query and Search Engine → these parameters help you navigate because the table can contain data from different search queries and search engines.
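To picture one row of this table, here is a minimal Python sketch. The `SerpRow` class and its field names are assumptions made for illustration (they are not the program's export schema); the host is derived from the URL exactly as in the example above, and a sitelink row shares the position of its main result.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class SerpRow:
    query: str
    search_engine: str
    position: int
    snippet_type: str
    url: str

    @property
    def host(self) -> str:
        # Host part of the URL, without protocol, port, or path.
        return urlsplit(self.url).hostname or ""


main = SerpRow("netpeak checker", "Google", 1, "regular",
               "https://subdomain.domain.com/page.html")
# A sitelink holds the same position as the main result it belongs to.
sitelink = SerpRow("netpeak checker", "Google", main.position, "sitelink",
                   "https://subdomain.domain.com/pricing.html")
```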

1.3. Peculiarities and Tips

- Use special symbols and search operators in the query box. They will help you solve almost any SERP task. You can read in detail about how to use them in our friends' post 'Google Search Operators: Making Advanced Search Easier'.

- If you have entered several queries and/or selected several target search engines, then for your convenience the data in the table will be grouped by query / search engines by default. If you need to view or export data without grouping, you can remove it manually by dragging the corresponding parameters from the top part of the table.

- The tool saves all received data for the current session, that is, after the 'SE Scraper' window is closed and reopened, the data will not be lost. Keep in mind that if you close the program completely, the data cannot be restored.

- The 'Move URL' and 'Move Hosts' functions make it easy to use the data from this tool within the basic Netpeak Checker functionality. So you can transfer all unique URLs or hosts (in the {protocol}://{host}/ format, where the protocol is taken from the source link) to the main program table for further analysis (all duplicates are deleted during the transfer). Please note that the indexing status is also transferred — we understand very well how draining SERP scraping can be, so we made sure you don't have to check the same parameters several times.

- Click 'Save URL' to export all URLs from the current table in a text file format (TXT). This is convenient because these files can be easily opened in both Netpeak Checker and Netpeak Spider. If you need data in the table format (XLSX or CSV), you can click the 'Export' button.

- The 'Open SERP' item in the context menu of the table allows you to open the page with the original query the program makes to the search engine. Try to open this URL in the incognito mode of your favorite browser to make sure that we are not lying ;) And if something goes wrong (usually mismatches can occur because of different search results when JavaScript is turned on and off), contact us via Support – we will try to sort the situation out!

- You can apply the same complex filter in the 'SE Scraper' as in the main program table – just click 'Set filter...' button above the table.

- The table is automatically updated during scraping (no more than once a minute and at every 20% of progress). If you urgently need the most up-to-date scraping data, click the 'Reload' button.

- If you conduct extensive research and make too many inquiries to search engines, sooner or later they will block your requests or ask for a captcha. To minimize distraction, we recommend using the captcha auto-solving function as well as the proxy list.
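The 'Move Hosts' tip above describes collapsing URLs into unique hosts in the {protocol}://{host}/ format. If you ever need the same transformation on an exported TXT list, a rough Python equivalent might look like this. The function name `unique_hosts` is hypothetical, and the sketch only mirrors the behavior described in this post, not the program's internals.

```python
from urllib.parse import urlsplit


def unique_hosts(urls):
    """Return unique '{protocol}://{host}/' strings in first-seen order."""
    seen = {}
    for url in urls:
        parts = urlsplit(url)
        # Skip lines that have no protocol or host at all.
        if parts.scheme and parts.netloc:
            seen.setdefault(f"{parts.scheme}://{parts.netloc}/", None)
    return list(seen)


hosts = unique_hosts([
    "https://a.com/x",
    "https://a.com/y",
    "http://a.com/",
    "https://b.org/p?q=1",
])
```

Note that the protocol is taken from the source link, so the HTTP and HTTPS versions of the same host are kept as separate entries.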

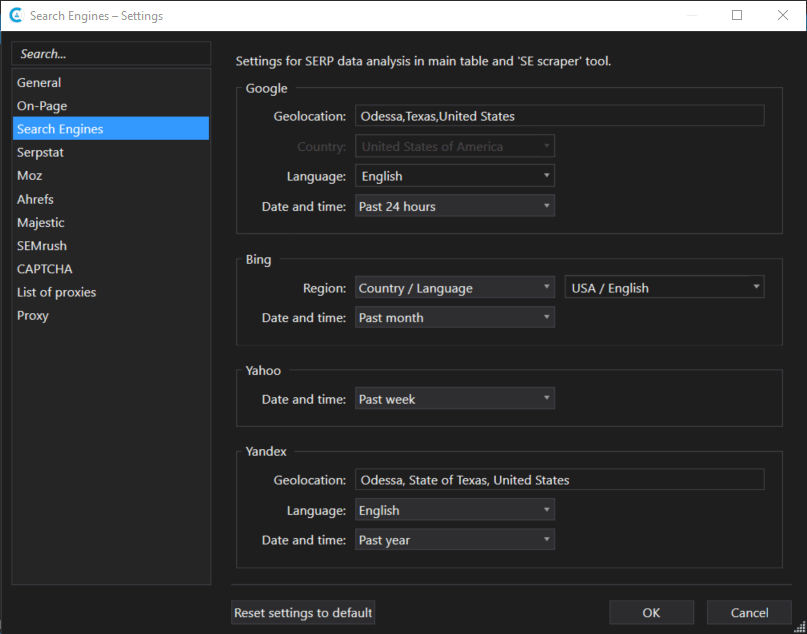

2. 'Search Engines' Settings

Now Netpeak Checker can be called a program optimized for local SEO and regional SERP research. The program settings have acquired a new 'Search engines' item which affects both the standard parameters of search results (Google / Bing / Yahoo / Yandex SERP) and the new 'SE Scraper' tool.

Let's take a closer look at new settings.

2.1. Google

- Geolocation → now you can set a geolocation and see search results for a specific city / region. Just start typing the location and choose your option from the dropdown list. Hints are written in different languages, exactly as Google shows them.

- Country → this is a standard setting which allows you to choose the country, and search results will be shown accordingly. 230+ regions are available and by default the search is performed for all countries.

- Language → this is also a standard setting which allows you to choose one of the 40+ languages (and by default there is search for all languages). Keep in mind that people from one country can speak different languages, that's why we recommend using this parameter together with 'Country'. Also, it'll be useful to refresh 'Managing multi-regional and multilingual sites' recommendations by Google in your memory.

- Date and time → this setting allows you to limit search results by the date they appeared on the internet (at least, Google is sure about this date): past hour, past 24 hours, past week, past month or past year. No limit is set by default.

2.2. Bing

- Region → Bing is not so easy: you can choose either 'Country' or 'Country / Language' parameter. Depending on it, 36 countries are available (where this search engine is functioning) or 40 country / language combinations.

- Date and time → this one is similar to the Google settings but has fewer variations: past 24 hours, past week or past month.

2.3. Yahoo

- Date and time → as the saying goes 'you're welcome to all we have' :) All variations are similar to the Bing settings.

2.4. Yandex

- Geolocation → now your local websites promotion (or research) in Yandex will be easier and more convenient: start entering location and choose one of the variations.

- Language → this is a simple one, you can choose among 10 languages: Russian, Ukrainian, Belarusian, English, French, German, Kazakh, Tatar, Turkish, and Indonesian.

- Date and time → and this is also an easy setting: past 24 hours, past week, past month or past year.

Use these settings to exploit the potential of local SEO and conduct a quick niche research in the particular region. And to make it more convenient, we've prepared the following feature.

3. New 'Proxy Anti-Ban' Algorithm

Proxies allow you to receive data from services faster and run into fewer CAPTCHAs because requests are made from different IP addresses. We really appreciate the resources you invest in purchasing proxies, so we came up with a special algorithm that minimizes the chance of a proxy being banned by the corresponding service.

We've conducted many tests to determine the time delays / the number of proxies that can be used simultaneously for each service and can provide reliable data scraping, and included them into the program. Also, we've improved the proxy switching function in case of a ban from a particular service and introduced the conditions for further unbanning. By the way, if all proxies are excluded, at the end of the analysis you will see a notification about which services were stopped and why.
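To give a feel for the idea (per-service delays, switching proxies on a ban, and unbanning after some condition is met), here is a deliberately simplified Python sketch. It is not the actual Netpeak Checker algorithm: the `ProxyPool` class, the delay and cooldown values, and the time-based unban rule are all assumptions made for illustration.

```python
import time


class ProxyPool:
    """Rotate proxies for one service with a minimum delay and a ban cooldown."""

    def __init__(self, proxies, min_delay=5.0, ban_cooldown=600.0,
                 clock=time.monotonic):
        self._clock = clock
        self._min_delay = min_delay        # assumed pause between uses of a proxy
        self._ban_cooldown = ban_cooldown  # assumed time before a banned proxy returns
        # Earliest moment at which each proxy may be used again.
        self._ready_at = {proxy: float("-inf") for proxy in proxies}

    def acquire(self):
        """Return a proxy that is ready now, or None if all are waiting or banned."""
        now = self._clock()
        for proxy, ready_at in self._ready_at.items():
            if ready_at <= now:
                self._ready_at[proxy] = now + self._min_delay
                return proxy
        return None

    def report_ban(self, proxy):
        """Exclude a proxy until the cooldown has passed."""
        self._ready_at[proxy] = self._clock() + self._ban_cooldown
```

A caller would request a proxy with `acquire()` before each query, call `report_ban()` when the service answers with a block or CAPTCHA, and stop the service when `acquire()` keeps returning `None`.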

I'll remind you that now the proxy list is used when connecting to the following services:

- On-Page (parameters that are determined when you directly connect to the website)

- Alexa

- Google SERP

- Bing SERP

- Yahoo SERP

- Yandex SERP

- Wayback Machine

4. Custom Filter and Parameter Templates

Now you can save and choose default templates for parameters and filters in Netpeak Checker as well as in Netpeak Spider.

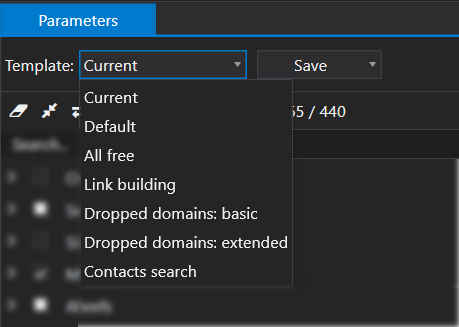

4.1. Parameter Templates

Choose the necessary parameters and save a template with the name you choose or use one of the default templates:

- All free → the most accessible template for parameters in Netpeak Checker in just one click :) This is a kind of answer to the question 'Why should you pay for Netpeak Checker if you still have to pay for additional services like Ahrefs, Majestic, Serpstat, etc.?'. Be careful: the Moz parameters are also included in this template. To access them, you need to register an account, get the Access ID and Secret key, enter them in the program settings, and switch the API access type to 'Free (1 request / 10 sec; 10 results / request)'.

- Link building → this template is based on our colleagues' experience in evaluating link sources using the first version of Netpeak Checker back in 2010. This template includes basic On-Page parameters, data from Alexa, Whois, Google, Facebook as well as backlink parameters from the free version of Mozscape that can optionally be replaced by any paid analog (Moz Pro, Ahrefs or Majestic).

- Dropped domains: basic → here are the most basic parameters that will allow you to find dropped domains (the ones that have already been used but owners didn't extend them for some reason). After the expiration date, any person can register such domains and use them to promote their main websites. Before checking domains availability, try 'pinging' them using the 'IP' parameter. So you can quickly identify domains that are already occupied, even if there are more than 1 million of them.

- Dropped domains: extended → after applying a basic set of parameters, you can go on to a more detailed analysis and find out which domains are worth your attention.

- Contacts search → this template includes the server response code (to make sure that the website is accessible), Title, Description, all social network settings (if there are links to social networks on the page, Netpeak Checker will show them), all available email address parameters (both on the page and in Whois-records).

4.2. Filter Templates

Netpeak Checker is made for all kinds of research, benchmarks, etc. You more than likely use the same filters for your results over and over, so now you can create your own templates for them and apply them in one click.

5. Filtration and Search

5.1. Updated 'URL Explorer'

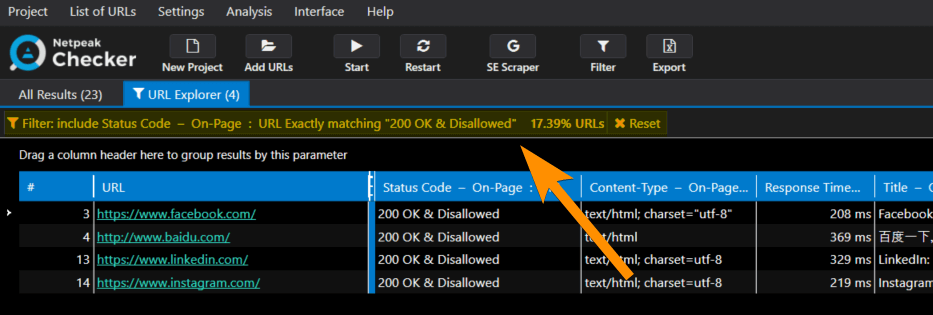

Now the 'URL Explorer' tab shows the filtration conditions and the ratio of filtered URLs to the total number of results (as a percentage).

You can open the window with settings in three ways:

- press 'Filter' button on the dashboard

- via 'Analysis' item → 'Set filter...' in the main menu

- with Ctrl+F keyboard shortcut

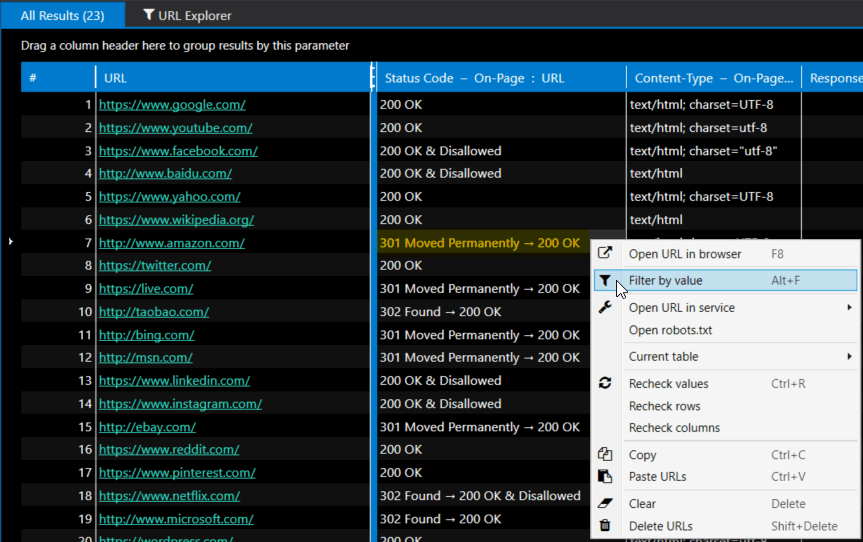

5.2. 'Filter by Value' Function

Please welcome a new function that allows you to quickly filter results based on the data in selected cells. For example, you've analyzed 1 million URLs, and some of them have 301/302 redirects. To find all pages that have a redirect, simply select the cell with the necessary response code and choose the 'Filter by value' item in the context menu.

Note that you can select several cells at the same time, but only within one row. This function is called from the context menu or by pressing Alt+F.
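If you want to reproduce this on exported data, the logic can be sketched in Python as below. The `filter_by_value` function and the column names are hypothetical, and combining several selected cells with AND is an assumption about how the filter behaves.

```python
def filter_by_value(rows, selected):
    """Keep rows that match every selected (column, value) pair."""
    return [
        row for row in rows
        if all(row.get(column) == value for column, value in selected.items())
    ]


rows = [
    {"URL": "https://example.com/a", "Status Code": "301 Moved Permanently"},
    {"URL": "https://example.com/b", "Status Code": "200 OK"},
    {"URL": "https://example.com/c", "Status Code": "301 Moved Permanently"},
]
redirects = filter_by_value(rows, {"Status Code": "301 Moved Permanently"})
```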

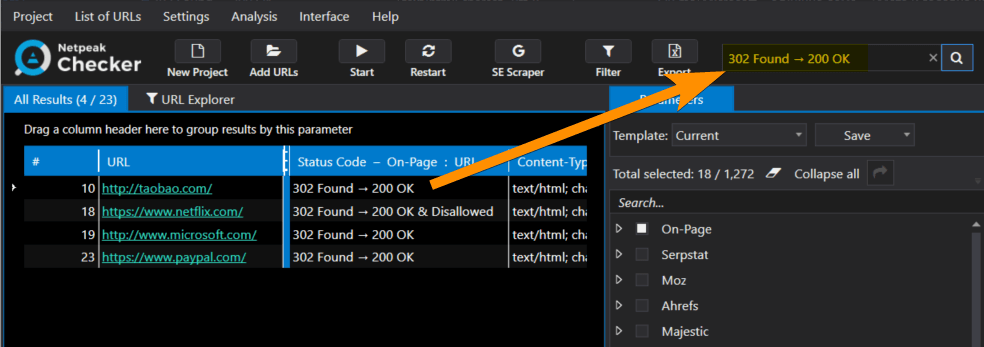

5.3. Quick Search in Table

Quick search is implemented in all tables of the program ('All Results' and 'URL Explorer' tabs in the main table, as well as 'SE Scraper' tool) – just press Ctrl+E and enter search query:

Be careful: this function is applied to all table columns with the 'Contains' search type and is case-insensitive.
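In other words, a row matches if any of its cells contains the search text, regardless of letter case. A minimal Python sketch of that rule (the `quick_search` name and sample columns are made up for the example):

```python
def quick_search(rows, needle):
    """Case-insensitive 'Contains' search across every column of every row."""
    needle = needle.casefold()
    return [
        row for row in rows
        if any(needle in str(value).casefold() for value in row.values())
    ]


rows = [
    {"URL": "https://example.com/Blog", "Title": "Company news"},
    {"URL": "https://example.com/shop", "Title": "Our BLOG picks"},
    {"URL": "https://example.com/about", "Title": "About us"},
]
found = quick_search(rows, "blog")
```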

6. Optimization of RAM Consumption (×2)

In the new version of Netpeak Checker, we applied the latest developments (our own) to optimize RAM consumption. As a result, we've reduced RAM consumption by more than 2 times.

This is even more critical in Netpeak Checker than in Netpeak Spider, because here the bottleneck is the number of columns, and Netpeak Checker has a lot of parameters. That's why RAM optimization really comes in handy when working with large datasets.

7. Interface and Usability

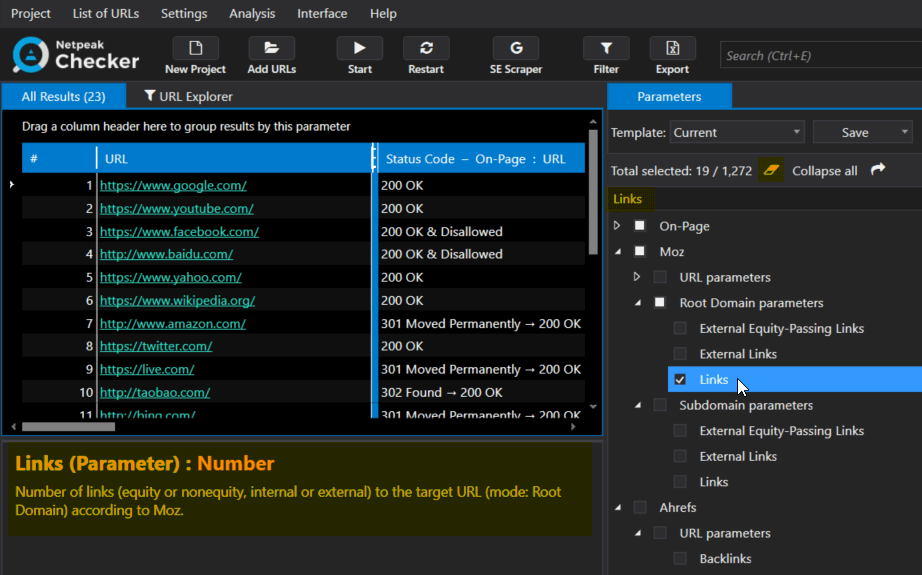

7.1. Parameters

'Parameters' tab has been moved to the right sidebar and inherited several handy functions from Netpeak Spider:

- Choose parameter / service and you will see its extended description in the info panel below.

- By clicking on the parameter, you will scroll to it in the current table. And if you click on the service, the current table will be scrolled to the first parameter of this service.

Just a reminder: the 'Parameters' tab has a search panel, which comes in handy when you need to scroll the table to a particular parameter. Find the parameter via the search panel and click on it. It's a cute little hack, isn't it? :)

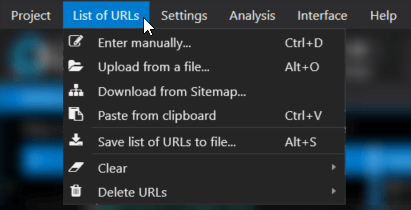

7.2. Adding List of URLs for Analysis

In the new version of the program, URLs for analysis can be added in the following ways:

- Manually → this method opens a separate window with a text field for entering a list of pages, where each URL should be placed on a new line.

- From a file → we have seriously reworked this function, and you can now import URLs from files with the following extensions: .txt (plain text), .xlsx (Microsoft Excel), .csv (comma-separated values), .xml (Extensible Markup Language), .nspj (Netpeak Spider project), and .ncpj (Netpeak Checker project).

- From XML Sitemap → the following formats are supported: XML Sitemap, XML Sitemap index, TXT Sitemap, and their gzip-archives.

- From clipboard → just press Ctrl+V in the main program window and the list of URLs from the clipboard will be added to the table. The notification will contain brief information about what was successfully added, what is already in the table, and what was not added and why.

- Drag and Drop → you can simply transfer any file with the extension mentioned above from the folder directly to the main table. Netpeak Checker will analyze files and load the necessary data.
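For the curious, here is roughly what reading an XML Sitemap (or a Sitemap index, or a gzip archive of either) involves. This Python sketch uses only the standard library; the function name `sitemap_locations` is hypothetical, and it illustrates the file format rather than the program's own importer.

```python
import gzip
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_locations(data: bytes) -> list[str]:
    """Return all <loc> values from an XML Sitemap or Sitemap index."""
    if data[:2] == b"\x1f\x8b":  # gzip magic number
        data = gzip.decompress(data)
    root = ET.fromstring(data)
    # <loc> appears inside <url> (sitemap) and <sitemap> (index) elements.
    return [el.text.strip() for el in root.iter(SITEMAP_NS + "loc") if el.text]


sample = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""
urls = sitemap_locations(sample)
```

For a Sitemap index, the returned values are the addresses of child sitemaps, which would then be fetched and parsed the same way.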

Pay attention to two nuances:

- All URLs that you upload to the program will go in the order they were placed in originally. This will facilitate the data search and allow you to compare results easily.

- If URLs were originally in [example.com] format, Netpeak Checker will automatically add the protocol to them and translate them into [https://example.com/] format. If you don't know which protocol the website has, I advise you to add all URLs and analyze them with enabled 'Status Code' and 'Target Redirect URL' On-Page parameters, so that you can find out where to change the protocol to HTTP.
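The second nuance boils down to a small normalization step, sketched here in Python. The `add_default_protocol` helper is a hypothetical name, and the sketch covers only the behavior described above: add a protocol when it is missing and a trailing slash when there is no path.

```python
from urllib.parse import urlsplit, urlunsplit


def add_default_protocol(url: str, protocol: str = "https") -> str:
    """Turn 'example.com' into 'https://example.com/'; keep explicit protocols."""
    url = url.strip()
    if "://" not in url:
        url = f"{protocol}://{url}"
    parts = urlsplit(url)
    path = parts.path or "/"  # a bare host gets the root path
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


normalized = add_default_protocol("example.com")
```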

7.3. Saving URL in TXT File

Now you can export the current list of URLs to the text file. Try to organize your own file storage structure with URL lists, where each needed list will be in a separate file that can be easily uploaded to both Netpeak Checker and Netpeak Spider.

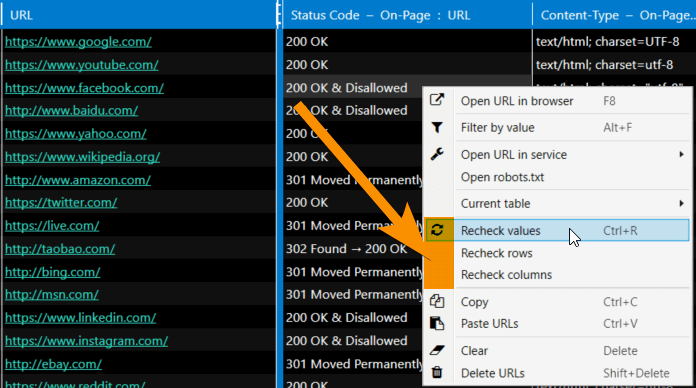

7.4. Data Rechecking

Also, now you can use the following functions in 'All Results' and 'URL Explorer' tabs:

- recheck values → starts rechecking all values you've highlighted

- recheck rows → rechecking all parameters for the selected URLs

- recheck columns → rechecking selected parameter for all URLs in the current table

7.5. Improved Reports

Our reports are becoming legendary. You can check this out just by exporting any report:

- Now when exporting a table, we do a so-called 'snapshot'. This means that sorting, grouping, applying a quick search, changing order, attaching columns, and filtering are considered in the export file.

- We have worked on the case of the Microsoft Excel limits (1,048,575 rows) when exporting to XLSX format. Now if the results don't fit in one file, Netpeak Checker will automatically split the data into several files and number them.
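The splitting itself is simple chunking. A Python sketch of the idea follows; the file naming pattern and the function names are assumptions, and the row limit is the figure quoted above.

```python
XLSX_MAX_ROWS = 1_048_575  # row limit quoted in this post


def split_rows(rows, max_rows=XLSX_MAX_ROWS):
    """Yield consecutive chunks of at most max_rows rows."""
    for start in range(0, len(rows), max_rows):
        yield rows[start:start + max_rows]


def export_names(base, chunk_count):
    """Number the files only when more than one is needed."""
    if chunk_count <= 1:
        return [f"{base}.xlsx"]
    return [f"{base}_{i}.xlsx" for i in range(1, chunk_count + 1)]


chunks = list(split_rows(list(range(5)), max_rows=2))
names = export_names("report", len(chunks))
```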

Note that you can upload reports in .xlsx (Microsoft Excel) and .csv (comma-separated values) formats.


8. Other Changes in This Release

8.1. Features

- 'Restart' function (F6 key) was added. When you click on the corresponding button, all data will be cleared and analysis of parameters for all added URLs will restart.

- We take data safety seriously, so now your new project won't replace the old one while saving. Instead you will be asked to save a separate project with a new name (Ctrl+S). If you don't have time to fill in the project name, then you can rely on the new 'Quick save' function (Ctrl+Shift+S), which will fill the name for you and save the project without any further questions :)

- Monitoring memory limit → the program checks the amount of free RAM and disk space: at least 128 MB of each must be available for the program to run. If the limit is reached, the analysis stops and the data remains intact.

- Now in case of redirection Netpeak Checker follows it and enters the values of the final page (not the initial one as it was before) for all On-Page parameters taken from the source code. The original page retains only parameters received from HTTP headers.

- 'Open URL in service' item is added to the context menu allowing you to open selected URL in Google services, Bing, Yahoo, Yandex, Serpstat, Majestic, Open Site Explorer (Mozscape), Ahrefs, Google PageSpeed, Mobile Friendly Test, Google Cache, Wayback Machine (Web Archive), W3C Validator or all at once (very fun function for your browser). Also the 'Open robots.txt' item was added to the context menu, it (by sheer coincidence) opens a robots.txt file in the root folder of the selected host.

- We've implemented highlighting links in the table. Now you will not mix up URLs and plain text in cells.

- When you click on any cell in the table, the same table will be shown in the 'Info' panel with the analyzed parameters, only vertically and specifically for this URL. Previously, this panel showed the full contents of the cell and it wasn't always useful.

- We've completely removed a separate bar with grouping by services in the main table. Instead of it, column name indicates the service and the 'target' (for example, URL or Host).

- Finally, we've added the program logo to the interface! We are well aware that our users can get confused because of the new similar interfaces of Netpeak Spider and Netpeak Checker, therefore we've provided at least this brand difference. By the way, when you click on the logo, you will be redirected to the 'All results' tab with all the functions that change data (sorting, grouping, quick search, etc.) cancelled. So this is an equivalent to the 'jump to the main page' function.

- Multi-window → it is now possible to open several Netpeak Checker windows directly from the 'Project' menu → 'New Window' (Ctrl+Shift+N) and run separate analysis.

- We've improved saving the positions of all windows and panels → and if something goes wrong, you can always reset their positions using the 'Interface' menu → 'Reset all positions', 'Reset window items', or 'Reset panel positions'.

8.2. Parameters Changes

Yandex has recently released an update in which the 'TIC' (thematic index of citation) was replaced by 'SQI' (site quality index) showing how useful the website is for users according to Yandex. We want to keep our tools up-to-date, so we added this parameter to Netpeak Checker (even though its verification is extremely unstable for reasons beyond our control). You can check out a new Yandex Webmaster parameter in the sidebar of the program.

The following services were also completely removed:

- LinkedIn → the API for receiving data on-demand was closed

- Twitter → similar situation

- StumbleUpon → service moved to Mix.com (we are still thinking over the need to integrate with this service)

II. Important Changes Over the Past Year

I have to say that sometimes we were so carried away by releasing new program versions that we simply didn't have time to describe significant changes for our users. I'll try to fix this situation and tell you what we've implemented in Netpeak Checker over the past year.

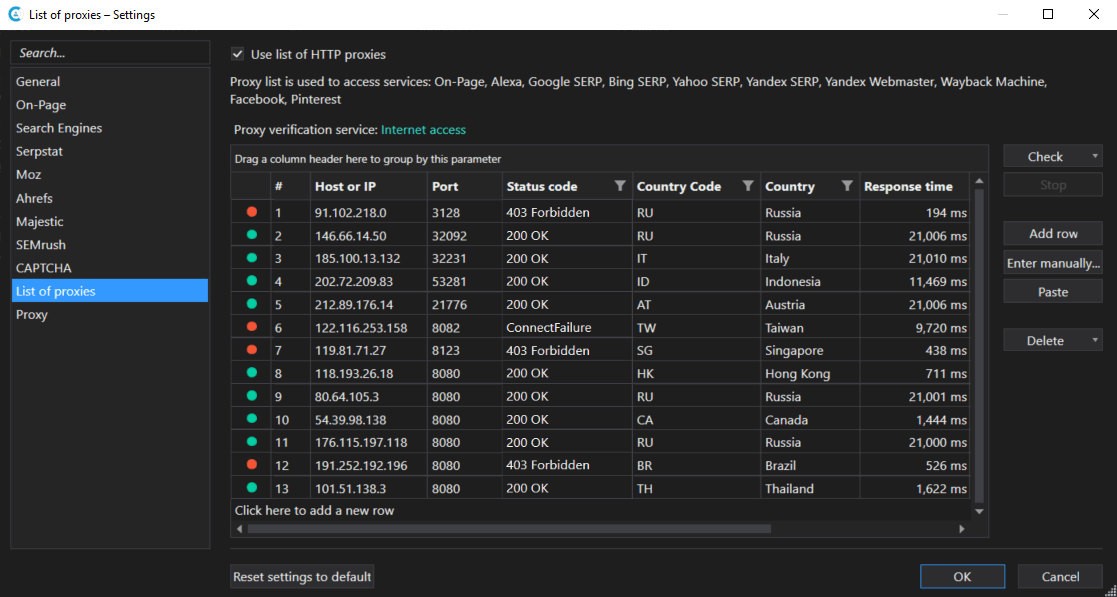

9. Verifying Proxy Operation Status

We've developed special functionality to verify one proxy or the entire proxy list right in the program settings. You can download your proxy list and check their availability to one of the pre-configured services:

- access to the Internet → this basic check shows whether the proxy is still alive

- Google → one of the most common directions of using the proxy list, therefore I recommend checking it through this service

- Bing

- Yahoo

- Yandex

In addition to checking the availability, you can also find out the response code, the response time and the country the corresponding proxy server belongs to.

10. Limits and Balance Display

Now you can see the remaining limits for accessing the following paid services in the settings of the program:

- Serpstat → shows how many API rows have been used and how many are available in the current period of time.

- Ahrefs → similarly, shows the number of already used and available API rows, as well as the type of subscription.

- Majestic → shows the remaining limits for different types of units: Retrieval Units, Analysis Units, and Index Item Units.

- SEMrush → shows a simplified API balance.

Also, the captcha auto-solving service anti-captcha.com hasn't been left behind. Enter your account key and you will see the balance right in the program settings. For the constant captcha solution, I advise you to drop in here once in a while to replenish the balance in time.

11. Analyzed Parameters

New parameters were implemented:

- 'Language' On-Page parameter → defines the language of the target page in the ISO 639-1 format. The algorithm for determining the language is based on n-grams and works more precisely on long texts.

- Host parameters Yandex SERP → 'Indexed URL' (the number of pages of the site indexed by Yandex), 'Indexing' (whether the target host is indexed by Yandex) and 'Address' (the physical address of the organization that appears in the snippet on the Yandex search results page).
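For readers unfamiliar with n-gram language detection, here is a toy Python sketch of the general technique: build a character trigram profile per language and pick the profile most similar to the text. It is not the algorithm used in Netpeak Checker; the two tiny sample texts, the trigram size, and the cosine similarity measure are all assumptions chosen to keep the example short.

```python
from collections import Counter
from math import sqrt

# Tiny illustrative samples; a real detector needs far larger corpora.
SAMPLES = {
    "en": "the quick brown fox jumps over the lazy dog and runs through the forest",
    "de": "der schnelle braune fuchs springt über den faulen hund und läuft durch den wald",
}


def trigrams(text):
    """Count overlapping character trigrams, padded with spaces."""
    text = f" {text.lower()} "
    return Counter(text[i:i + 3] for i in range(len(text) - 2))


def cosine(a, b):
    """Cosine similarity between two trigram count vectors."""
    dot = sum(a[g] * b[g] for g in a.keys() & b.keys())
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


PROFILES = {lang: trigrams(text) for lang, text in SAMPLES.items()}


def detect_language(text):
    """Return the ISO 639-1 code of the most similar profile."""
    grams = trigrams(text)
    return max(PROFILES, key=lambda lang: cosine(grams, PROFILES[lang]))
```

The sketch also shows why longer texts help: the more trigrams a page contributes, the less a few shared or unusual sequences can skew the comparison.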

I also want to remind you of the useful parameters hidden under the On-Page item and not yet included in Netpeak Spider:

- Email addresses → list of unique email addresses found on the landing page.

- Social networks → a number of unique links to social networks (Facebook, Twitter, LinkedIn, Google+, YouTube, Instagram, Pinterest), as well as a list of the links themselves.

- Hreflang → the number of corresponding <link> tags, as well as the values of these attributes in alphabetical order.

Note that now when exporting a table to XLSX format, you can see the hints with a description of each parameter in the column name.

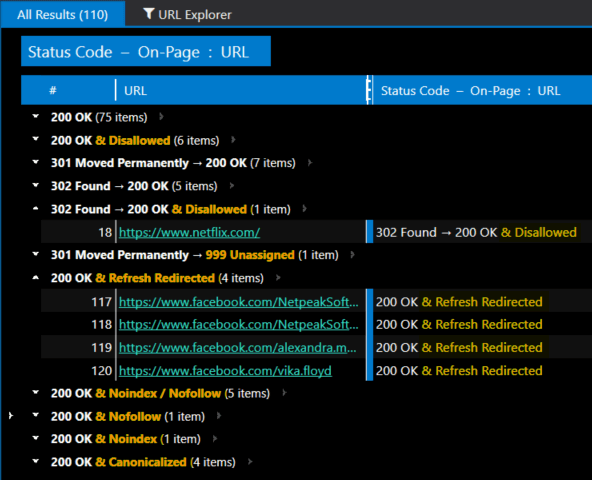

12. Special Status Codes

We've implemented new unique notations for the most common situations with status codes (Status Code, server response code). Now we display them with the & symbol:

- Disallowed → URL is blocked in robots.txt file.

- Canonicalized → URL contains Canonical tag pointing to another URL (note that if the page has Canonical pointing to itself, you won't see this notation).

- Refresh Redirected → URL contains Refresh tag (in HTTP response headers or Meta Refresh in the <head> section of the document) pointing to another URL (it works similarly to Canonical, so you won't see this if Refresh is pointing to itself).

- Noindex / Nofollow → URL contains instructions not to index and/or follow links accordingly (instructions can be placed in HTTP response headers or in the <head> section of the document).

So if the page returns 200 server response code and is not allowed to be indexed in the robots.txt file, now you will see '200 OK & Disallowed' in the 'Status Code' column:
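The composition rule can be sketched in Python like this. The `status_label` function and its arguments are hypothetical, and the order of notations when several apply at once is an assumption; only the '200 OK & Disallowed' form comes from the program itself.

```python
def status_label(code, reason, url, *, disallowed=False,
                 canonical=None, refresh_target=None):
    """Build a 'Status Code' value such as '200 OK & Disallowed'."""
    notations = []
    if disallowed:
        notations.append("Disallowed")
    # Canonical or Refresh pointing to the page itself adds no notation.
    if canonical and canonical != url:
        notations.append("Canonicalized")
    if refresh_target and refresh_target != url:
        notations.append("Refresh Redirected")
    return " & ".join([f"{code} {reason}", *notations])


label = status_label(200, "OK", "https://example.com/", disallowed=True)
```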

13. Other Improvements

- Since we had lots of requests from our users, you no longer need admin rights to install, update and run Netpeak Software programs.

- An option to configure User Agent and use basic authentication for analyzing On-Page parameters is added.

- We significantly improved the algorithm for manually adding a list of URLs → now the program recognizes a URL with a path but without a protocol (for example, [example.com/category]). It also detects several URLs in one line (in this case all URLs except the first one need a protocol). And we've implemented escaping #! for Ajax.

- Now you have an option for manual and automatic (with the help of anti-captcha.com) Google reCAPTCHA solving.

III. What Tasks Netpeak Checker Solves

Our users often ask what Netpeak Checker is needed for and much less often why they need our second tool, Netpeak Spider. It seems to me that the main reason for this is that Netpeak Checker is a multifunctional tool with a huge number of usage options that can be confusing. And, trust me, the release of the 3.0 version will only expand the list of options :)

To make the tool easier to understand, we have prepared an approximate portrait of our target audience, as well as examples of tasks that can be solved using this program.

Who Uses Netpeak Checker

1. Link builders

2. SEO specialists and webmasters

3. Marketing and content teams

4. Bloggers

5. Sales teams

6. PPC specialists

If you haven't found yourself above, then please check out the examples of tasks below. Maybe we just don't know how to define your profession!

Examples of Tasks

The table below shows who Netpeak Checker can be useful for. The numbers in the second column refer to the audience list above:

| Task | Who Faces It |
|---|---|
| Comparing a large number of URLs by 1000+ parameters | 1, 2, 3 |
| Evaluating website’s quality for link building | 1, 4 |
| Custom SERP scraping | 1, 2, 3, 4, 5, 6 |
| Reviewing domain age, expiration date, and availability for purchase (searching for dropped domains) | 1, 2 |
| Analyzing competitors’ promotion strategy | 1, 2, 3, 4 |
| Quick topic research for successful articles | 3, 4 |
| Analyzing social media performance by list of URLs | 3, 4 |
| Checking On-Page parameters for improving website’s SEO | 1, 2, 3, 4 |
| Scraping contacts (for example, email addresses) | 1, 3, 4, 5 |
| Researching backlink profile: your website’s, your competitors' websites | 1, 2, 3 |
| Quick search for potential customers in any business field via SERP scraping | 3, 4, 5 |
| Checking codes and response time of the landing pages in ads and sitelinks | 2, 6 |
| Checking the indexation / snippets in Google, Bing, Yahoo, and Yandex | 1, 2, 6 |

IV. In a Nutshell

Netpeak Checker 3.0 has become a faster, more reliable and useful tool allowing you to work with the most interesting and complicated tasks in digital marketing. First of all, it can be a real lifesaver for link builders, SEO and PPC specialists, webmasters, marketing teams, bloggers, and sales managers.

Here is a list of the most significant improvements of this release and over the past year:

- 'SE Scraper' tool

- 'Search Engines' settings (Google, Bing, Yahoo, Yandex)

- 'Proxy Anti-Ban' algorithm

- Custom filter and parameter templates

- 'Filter by value' option

- Quick search in tables

- 2 times less RAM consumption

- Quick scroll to the selected parameter in the table

- Rechecking values, rows or columns

- Improved export

- New SQI (Site Quality Index) parameter from Yandex.Webmaster

- Verifying proxy operation status

- Displaying balance and limits for paid services

- Special status codes

- On-Page parameters: language, emails, social media, and hreflang

- And a lot of other improvements...

V. Surprise

A release like Netpeak Checker 3.0 doesn't happen every day, so we want to share our happiness with our users and give them gifts!

Free Trial

- If you have already used Netpeak Checker, we offer you an opportunity to try a new version for free for a whole week (until September 25th inclusive).

- If you haven't tried our products yet, then you have 14 days of free trial right after registration.

Feel free to ask our Support specialists any questions about new functions, they will be happy to help you and provide useful insights according to your tasks. Just click on the button and follow the instructions:

Giveaway

We've prepared one more surprise for you – a giveaway with a cute owl-assistant and several free Netpeak Checker licenses on Facebook:

- 1st place gets an annual license and an owl-assistant

- 2nd place gets a 6-month license

- 3rd place gets a 3-month license

To take part you need to:

- Like our page on Facebook – Netpeak Software.

- Share this post with our release announcement.

- Leave a comment under the same Facebook post describing how you plan on using SERP scraping.

- You can take part in the giveaway until 3 p.m. on September 25th, and we'll choose the winners via random.org at 5 p.m. on the same day.

Note that your shared post must be visible to all users ('Public' in Privacy Settings), otherwise we will not be able to track it and include you in our giveaway. Also, only those who have met all giveaway conditions will be added to the participants list.

Fellow colleagues, thank you for reading till the end!

I would like to inform you that while you are testing the 3.0 version of Netpeak Checker on Windows, we are working on a major Netpeak Spider 4.0 release which will cover almost all common requests from our users that we have received over the past two years. But before that, we are going to release a few patches dedicated to issues our tool spots and usability improvements.

We'd love to hear your comments and feedback. After so many changes, we need it more than ever!