What Is Log File Analysis And How Can It Boost Technical SEO?


If you want to improve your website's performance and visibility through technical SEO, you may have come across the term log file analysis. But what is it, and why do you need it? Find out in the article below.

What Are Log Files?

Let’s start with the basics. What are log files? Simply put, they are files in which your site’s servers record every request they receive. This information can help you improve your company’s ranking in search engines, speed up your site, and even enhance user experience.

Each time Internet users, including potential customers, or bots interact with your website, a record of the request appears in your log files. It contains details such as the IP address, request type, user agent, timestamp, HTTP status code, and requested URL.
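To make these fields concrete, here is a minimal Python sketch that splits one log record into its parts. It assumes the widely used "combined" access log format; your server may be configured differently, and the sample line is made up for illustration.

```python
import re
from datetime import datetime

# Pattern for the "combined" access log format (an assumption; adjust it
# if your server writes a custom format).
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<url>\S+) (?P<protocol>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = COMBINED.match(line)
    if match is None:
        return None
    record = match.groupdict()
    record["status"] = int(record["status"])
    record["time"] = datetime.strptime(record["time"], "%d/%b/%Y:%H:%M:%S %z")
    return record

# Made-up sample line, for illustration only.
SAMPLE = (
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /blog/ HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"'
)

record = parse_line(SAMPLE)
print(record["ip"], record["method"], record["url"], record["status"])
```

Each named group in the pattern corresponds to one of the details listed above, so the resulting dictionary can be filtered or counted in later steps.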

However, these records are not stored forever. Servers are usually set up to delete or overwrite older logs periodically to make room for more recent information. That's why you should perform log file analysis regularly, so you don't miss anything.

What Does Log File Analysis Mean?

When you know the answer to the question, “What is a log file?” it is easier to understand what analyzing these records means.

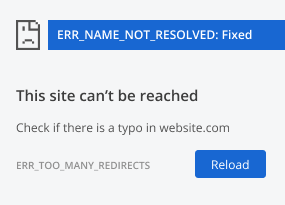

Log file analysis involves downloading and examining the records of requests made to your website. It can help you find errors, crawling problems, and other issues that affect your site’s rankings and visibility in search engines. Then, all that is left to do is apply these insights to your SEO work.

Why Is Log File Analysis Vital for SEO?

But what is log file analysis in terms of SEO? Why is it so important?

It is a no-brainer – such an analysis shows how search engines crawl your website, namely, how bots discover new and updated web pages to add to their index.

Here are more specific insights you can get by analyzing log files:

- Frequency of search engines crawling your site

- Most popular pages and sections

- Unexpected changes in your website’s performance

- Website’s loading speed

- Crawl problems and irrelevant pages, etc.

Thus, after conducting a log file analysis, it becomes clearer which SEO improvements to make.
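As a rough illustration of the first two insights, the Python sketch below counts how often bots visit per day and which URLs they request most. The records and the list of bot markers are hypothetical; note also that user agent strings can be spoofed, so a real analysis may need to verify bots by other means.

```python
from collections import Counter

# Hypothetical, already-parsed log records (date, URL, user agent).
records = [
    {"date": "2024-03-10", "url": "/", "user_agent": "Googlebot/2.1"},
    {"date": "2024-03-10", "url": "/blog/", "user_agent": "Googlebot/2.1"},
    {"date": "2024-03-10", "url": "/blog/", "user_agent": "Mozilla/5.0 (Windows NT 10.0)"},
    {"date": "2024-03-11", "url": "/blog/", "user_agent": "bingbot/2.0"},
    {"date": "2024-03-11", "url": "/pricing/", "user_agent": "Googlebot/2.1"},
]

# Substrings assumed to identify search engine bots; extend as needed.
BOT_MARKERS = ("googlebot", "bingbot")

def is_bot(user_agent):
    """Return True if the user agent contains a known bot marker."""
    ua = user_agent.lower()
    return any(marker in ua for marker in BOT_MARKERS)

# Keep only requests that appear to come from search engine bots.
bot_hits = [r for r in records if is_bot(r["user_agent"])]

# Crawl frequency: number of bot requests per day.
crawls_per_day = Counter(r["date"] for r in bot_hits)

# Most crawled pages: the two URLs bots requested most often.
most_crawled = Counter(r["url"] for r in bot_hits).most_common(2)

print(dict(crawls_per_day))
print(most_crawled)
```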

How Can You Analyze Log Files?

It may seem complicated if you have never conducted log file analysis. Use our short guide to get the most out of it.

Get Access to Log Files

The first step is to get your website’s log files. There are a few ways to do it.

The simplest way is to request access from your IT department or development team. When you do, be prepared for a few complications they may point out:

- Log files may have fragmented data across several servers, such as origin servers and CDNs, requiring compilation for a full view.

- They can grow to terabytes for busy sites, complicating transfer.

- These files contain personally identifiable information (PII), such as user IP addresses, which raises privacy concerns.
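Two of these issues, fragmented data and exposed IP addresses, can be sketched in Python as follows. This is only an illustration under stated assumptions: the entries are made up, each source is assumed to be sorted by time already, and truncating addresses is one common way to reduce exposure rather than a complete privacy solution.

```python
import heapq
import ipaddress

def mask_ip(ip):
    """Zero the host part: the last octet for IPv4, the last 80 bits for IPv6."""
    addr = ipaddress.ip_address(ip)
    prefix = 24 if addr.version == 4 else 48
    network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
    return str(network.network_address)

# Hypothetical (timestamp, ip, url) entries; each source is already sorted by time.
origin_log = [
    ("2024-03-10T06:25:14", "203.0.113.57", "/"),
    ("2024-03-10T06:27:02", "198.51.100.23", "/blog/"),
]
cdn_log = [
    ("2024-03-10T06:26:40", "2001:db8::1234", "/img/logo.png"),
]

# heapq.merge combines the sorted sources lazily, which avoids loading
# very large files into memory all at once.
merged = [(ts, mask_ip(ip), url) for ts, ip, url in heapq.merge(origin_log, cdn_log)]

for entry in merged:
    print(entry)
```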

Another popular way is to use an FTP client, such as FileZilla, a free, open-source solution. After connecting to your server with the FTP client and entering your login credentials, you can locate the log files. Depending on the server type, you can find them in the following locations:

- IIS: %SystemDrive%\inetpub\logs\LogFiles

- Nginx: logs/access.log

- Apache: /var/log/access_log

Once you have the log files ready, it's time to start analyzing them.

Start Log File Analysis

There are several methods to analyze log files. Choose one according to the time, effort, and technical skills you have available.

The first is a manual analysis using Google Sheets, Excel, or any other convenient tool. But there is a huge disadvantage – it is time-consuming. Moreover, you can miss something because of fatigue.
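If you go the manual route, the raw log first has to be turned into something a spreadsheet can open. Below is a minimal Python sketch that converts log lines to CSV; it assumes the common "combined" log format, and the function name and sample lines are hypothetical.

```python
import csv
import io
import re

# Simplified pattern, assuming the common "combined" log format.
LINE = re.compile(
    r'(\S+) \S+ \S+ \[([^\]]+)\] "(\S+) (\S+) [^"]*" (\d{3}) \S+ "[^"]*" "([^"]*)"'
)

def logs_to_csv(lines, out):
    """Write matching log lines to `out` as CSV rows; return the row count."""
    writer = csv.writer(out)
    writer.writerow(["ip", "timestamp", "method", "url", "status", "user_agent"])
    rows = 0
    for line in lines:
        match = LINE.match(line)
        if match:  # silently skip lines that do not fit the pattern
            writer.writerow(match.groups())
            rows += 1
    return rows

# Made-up sample lines; in practice, pass an open log file instead.
sample = [
    '66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "Googlebot/2.1"',
    "not a log line",
]
buffer = io.StringIO()
row_count = logs_to_csv(sample, buffer)
print(buffer.getvalue())
```

To write a real file instead of an in-memory buffer, open the target with `open("logs.csv", "w", newline="")` and pass it as `out`.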

The better option is to use tools specially designed for log file analysis. They check data quickly and efficiently, providing detailed reports. Some examples of such software are:

- Splunk

- Logz.io

- Screaming Frog Log File Analyser

- Netpeak Spider

What should you pay attention to when conducting this task?

- Status codes and HTTP errors

- Potential crawl budget wastage

- Orphan pages

- Crawling speed

These indicators will show you where to focus your technical SEO improvements.
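The first three checks can be sketched in a few lines of Python. The data below is hypothetical, and the rules are deliberately simple assumptions: URLs with query parameters are treated as possible crawl budget waste, and an orphan candidate is a page that bots fetch successfully but that is absent from your list of internally linked URLs.

```python
from collections import Counter

# Hypothetical bot requests taken from logs: (url, status code).
bot_requests = [
    ("/", 200), ("/blog/", 200), ("/old-page/", 404),
    ("/blog/?sort=asc", 200), ("/blog/?sort=desc", 200),
    ("/promo-2019/", 200), ("/old-page/", 404),
]

# Hypothetical set of URLs reachable through internal links (e.g. from a site crawl).
linked_urls = {"/", "/blog/", "/pricing/"}

# Status codes and HTTP errors.
status_counts = Counter(status for _, status in bot_requests)
error_urls = sorted({url for url, status in bot_requests if status >= 400})

# Parameterized URLs are one common source of wasted crawl budget.
parameter_hits = [url for url, _ in bot_requests if "?" in url]

# Orphan candidates: fetched successfully by bots but not linked internally.
crawled_ok = {url for url, status in bot_requests if status == 200 and "?" not in url}
orphan_candidates = sorted(crawled_ok - linked_urls)

print(dict(status_counts))
print(error_urls, parameter_hits, orphan_candidates)
```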

Prioritize Making Your Site Crawlable

Now that you know what log files are and how to analyze them, do not stop here. Aim to enhance your website’s crawlability.

Why does this matter so much?

- It ensures that search engine bots can easily navigate your website and index its content.

- Bots will better understand your site's structure and hierarchy, which helps them determine the importance of pages.

- They will also quickly assess meta tags, structured data, and internal linking, optimizing your site for better rankings.

You can enhance your site’s crawlability by conducting regular log file analysis and deep SEO audits. Using an advanced tool, you can get a list of specific recommendations for making bots crawl your site faster and more thoroughly. The next section covers this in more detail.

How Can You Conduct A Website Audit Using Netpeak Spider?

Netpeak Spider is a modern and easy-to-use website crawler that will help you conduct log file analysis and audits effectively. In addition, it will provide you with a detailed report for any number of sites or pages within minutes.

How do you use it? Before you get access to its functions, you have to complete the following steps:

- Visit the official page of Netpeak Spider

- Download the tool

- Sign up and log in

Then, you will see an intuitive and interactive dashboard. All you need to do is type in your website’s URL and start crawling. Soon, you will get an overview of the issues you have to solve to enhance your technical SEO. When you click on a problematic page, you will see a description of the issue and a recommendation for fixing it.

For your convenience, you can choose specific parameters to speed up your analysis, such as status code, response time, URL depth, and more.

To Wrap Up

Log file analysis is vital for making your website visible to search engines. Understanding the data on requests to your server can help you boost your ranking and enhance your site’s performance.

Tools like Netpeak Spider make this process easier by giving instant and clear suggestions on improving your website. Take advantage of it now and improve your technical SEO.
