How To Fix Crawl Errors


Content:

- What is a crawl issue?

- Most common causes for crawl errors

- How to fix different types of crawl errors

- How can Netpeak Spider fix crawl errors?

- Bottom line

SEO and digital marketing specialists use various analytical tools to keep track of how well their company's website performs, how it currently ranks, and whether it's optimized well enough to generate organic traffic. Many of these tools rely on crawlers, whose primary function is to discover a website's content by following the links on its pages so that the content can be indexed.

However, you may stumble upon different crawl errors along the way. This article covers some of the most common ones, together with tips on fixing them.

What is a crawl issue?

Crawl issues occur when a crawler can't access or analyze a website as intended. When this happens, Google or another search engine can't fully understand the content or structure of the site. This can prevent your pages from being indexed, meaning they won't appear in search results or drive organic traffic to your site.

Most common causes for crawl errors

Google recognizes two main types of crawl errors: site issues and URL issues. Site issues can affect your whole website, while URL issues relate only to a particular page.

The most common causes of site crawl errors are server, robots.txt, and DNS problems. For example, you may run into a site error when a server prevents a page from loading, when a search engine can't reach your domain, or when it can't retrieve your robots.txt file.
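The three site-level causes above can be told apart programmatically. The snippet below is a minimal sketch, assuming you fetch `robots.txt` with Python's standard `urllib`; the function names `classify_failure` and `check_robots` and the returned labels are illustrative choices, not part of any SEO tool.

```python
import socket
import urllib.error
import urllib.request


def classify_failure(error):
    """Map an exception raised by urllib to a rough site-level error type."""
    # HTTPError must be checked first because it subclasses URLError.
    if isinstance(error, urllib.error.HTTPError):
        # The server answered, but with an error status code.
        return "server error" if error.code >= 500 else "http error"
    if isinstance(error, urllib.error.URLError):
        # urllib stores the underlying cause in .reason;
        # a failed hostname lookup shows up as socket.gaierror.
        if isinstance(error.reason, socket.gaierror):
            return "dns error"
        return "connection error"
    return "unknown error"


def check_robots(domain, timeout=10):
    """Try to fetch https://<domain>/robots.txt and report what went wrong."""
    url = f"https://{domain}/robots.txt"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return "ok" if response.status == 200 else "http error"
    except Exception as error:
        return classify_failure(error)
```

Calling `check_robots("www.example.com")` (a placeholder domain) returns one of the labels above, which tells you whether to look at DNS settings, the server, or the robots.txt file itself.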

URL issues may occur because of duplicate content, improper link formatting, broken links, and so on. Some of the best-known types include 404 and soft 404 errors, 403 Forbidden errors, and redirect loops.

Even though fixing site errors should be a higher priority, it's essential to treat any problem promptly. All of the problems mentioned above may lead to a decline in traffic and credibility, poorer on-site content quality, and eventually lost customers.
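Of the URL issues listed above, redirect loops are the easiest to illustrate in code. The following is a minimal sketch, assuming you already have your redirects as a simple mapping from source path to target path; the name `find_redirect_loop` and the sample paths are hypothetical.

```python
def find_redirect_loop(start_url, redirects):
    """Follow redirects from start_url; return the looping chain, or None."""
    visited = []
    url = start_url
    # Keep hopping while the current URL redirects somewhere else.
    while url in redirects:
        if url in visited:
            # We came back to a URL we already passed through: a loop.
            return visited[visited.index(url):] + [url]
        visited.append(url)
        url = redirects[url]
    return None


redirects = {"/a": "/b", "/b": "/c", "/c": "/a", "/old": "/new"}
print(find_redirect_loop("/a", redirects))    # ['/a', '/b', '/c', '/a']
print(find_redirect_loop("/old", redirects))  # None
```

A chain that ends on a page with no further redirect is fine; only a chain that revisits a URL is reported.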

How to fix different types of crawl errors

Now that you know the main types of issues and what can cause them, let's focus on how to fix crawl errors. Below, you'll find tips for solving some of the most common site and URL issues.

Fixing 404 errors

This is one of the most common crawl issues. A 404 error means the server couldn't find a page at the URL the search engine bot requested. It can occur if you change a URL and don't update old links pointing to it, if there are broken links, or if you delete a page without adding a redirect.

Here are some of the tips you can try to fix this type of issue:

- Remove useless links;

- Implement 301 redirects for pages you have moved or removed;

- Refresh and update old content;

- Update internal links;

- Check for broken or improperly formatted links;

- Detect any site changes and see if any links were affected by them.
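Several of the tips above come down to knowing which URLs return 404 and which of those already have a redirect. Here is a minimal sketch, assuming Python's standard `urllib`; the helper names `fetch_status` and `urls_needing_redirect` and the sample URLs are illustrative, and a real audit would add rate limiting and retries.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if it can't be reached."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx and 5xx responses are raised as HTTPError; the code is on .code.
        return error.code
    except OSError:
        # DNS failures, refused connections, and timeouts end up here.
        return None


def urls_needing_redirect(statuses, redirects):
    """List URLs that returned 404 and have no redirect configured yet."""
    return sorted(
        url for url, status in statuses.items()
        if status == 404 and url not in redirects
    )


# Example with already collected statuses (no network access needed).
statuses = {"/old-page": 404, "/about": 200, "/gone": 404}
redirects = {"/gone": "/new-home"}
print(urls_needing_redirect(statuses, redirects))  # ['/old-page']
```

In practice you would fill `statuses` by calling `fetch_status` on every internal link, then add a 301 redirect or fix the link for each URL the second function returns.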

Fixing broken links

A broken link points to a page that can't be reached, usually because the URL contains an error or the target page no longer exists. Here's what you can do to fix it:

- Insert a 301 redirect: this is the recommended option for SEO purposes. Instead of leaving a dead link, you lead visitors to a similar product or service page and keep your pages properly indexed;

- Update or remove the broken link: if there's a typo in the URL, editing it is the easiest way to deal with the issue. You can also remove a link you don't need anymore;

- Replace or remove broken external links: if your site links to an external page that no longer works, either remove the link or replace it with a properly working alternative.
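Before you can fix broken links, you need a list of the links on each page, split into internal ones (which you can redirect or edit) and external ones (which you can only replace or remove). The snippet below is a minimal sketch using Python's standard `html.parser`; the names `LinkCollector` and `split_links` and the sample HTML are assumptions made for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect absolute URLs from the href attribute of <a> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page URL.
            self.links.append(urljoin(self.base_url, href))


def split_links(html, base_url):
    """Return (internal, external) lists of http(s) links found in html."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    site = urlparse(base_url).netloc
    # Skip mailto:, tel:, and other non-web schemes.
    web_links = [u for u in collector.links
                 if urlparse(u).scheme in ("http", "https")]
    internal = [u for u in web_links if urlparse(u).netloc == site]
    external = [u for u in web_links if urlparse(u).netloc != site]
    return internal, external


page = ('<a href="/pricing">Pricing</a> '
        '<a href="https://other.example/x">Partner</a> '
        '<a href="mailto:hi@shop.example">Mail</a>')
print(split_links(page, "https://shop.example/blog/post"))
# (['https://shop.example/pricing'], ['https://other.example/x'])
```

Each collected URL can then be checked for its HTTP status to find the ones that are actually broken.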

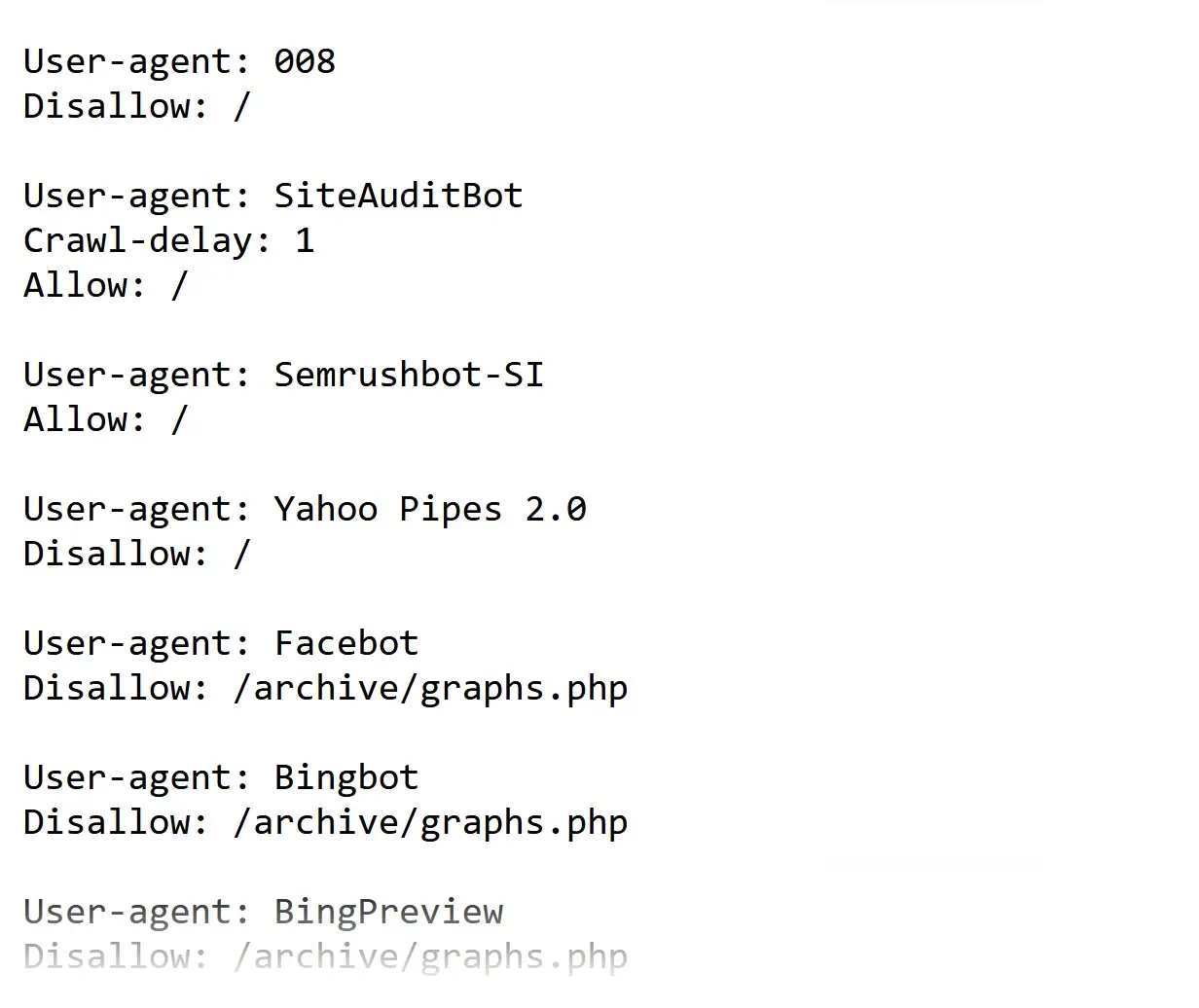

Fixing robots.txt errors

Robots.txt errors occur when a search engine can't access your robots.txt file. This file tells Google and other search engines which pages they can or can't crawl.

To fix related crawl issues, try adding a sitemap index URL (the main sitemap that keeps all of your sitemaps together) to your robots.txt file. This should help crawlers detect and understand your website's structure more easily and quickly.
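To see how a crawler reads such a file, you can parse a sample robots.txt with Python's standard `urllib.robotparser`. This is a minimal sketch: the domain, the paths, the sitemap URL, and the `MyCrawler` user agent are placeholders, and `site_maps()` requires Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# A sample robots.txt with a Sitemap line pointing at a sitemap index.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Allow: /

Sitemap: https://www.example.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Which pages may a crawler fetch under these rules?
print(parser.can_fetch("MyCrawler", "https://www.example.com/blog/"))          # True
print(parser.can_fetch("MyCrawler", "https://www.example.com/cart/checkout"))  # False

# Sitemap URLs declared in the file.
print(parser.site_maps())  # ['https://www.example.com/sitemap_index.xml']
```

If `site_maps()` returns `None` for your own file, the Sitemap line is missing or malformed.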

How can Netpeak Spider fix crawl errors?

To make sure your website is free of crawl errors, you should run regular checkups, especially after you add new content to your site, replace or delete particular pages, or change redirect setups. To help you with that, try crawling your website using Netpeak Spider. This handy tool will detect and prioritize all kinds of issues in a couple of minutes. It allows you to check as many URLs as you need at once and receive helpful troubleshooting tips.

So, how does this tool work? Follow the quick step-by-step guide below.

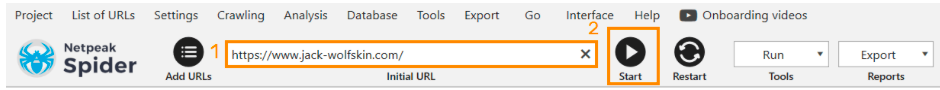

Step 1. Add a target URL

You can start by scanning a single link (but adding multiple URLs at once is also possible). You can either paste them one by one or upload a compiled file. Once you're done, click Start to run the crawling process.

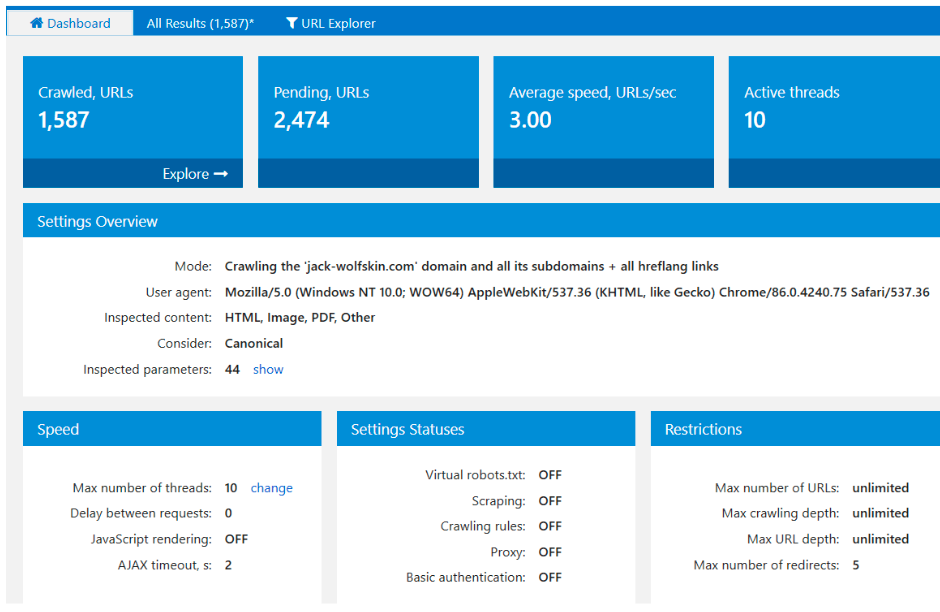

Step 2. Go to Dashboard to track results

While the tool is still processing submitted URLs, you can keep track of the crawling results in real time. Go to the built-in interactive Dashboard tab to see the latest research data on your website's performance.

Analyze the checkup results

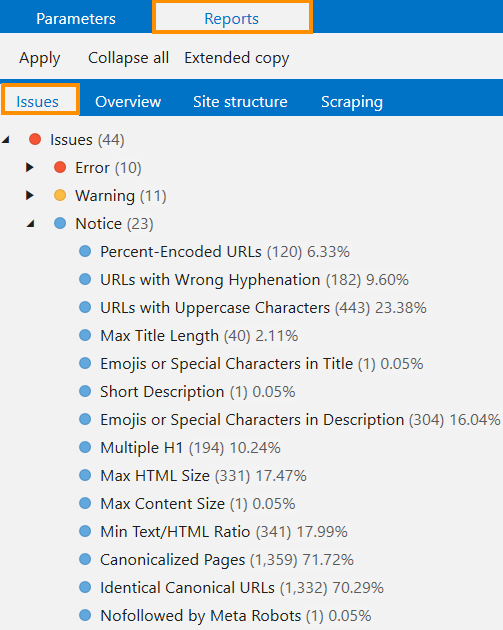

Once the checkup is done, you'll see right away if any submitted URL has a crawl issue. The app will also evaluate how critical it is and show what you can do to solve it. This makes Netpeak Spider a useful tool for troubleshooting crawling issues quickly.

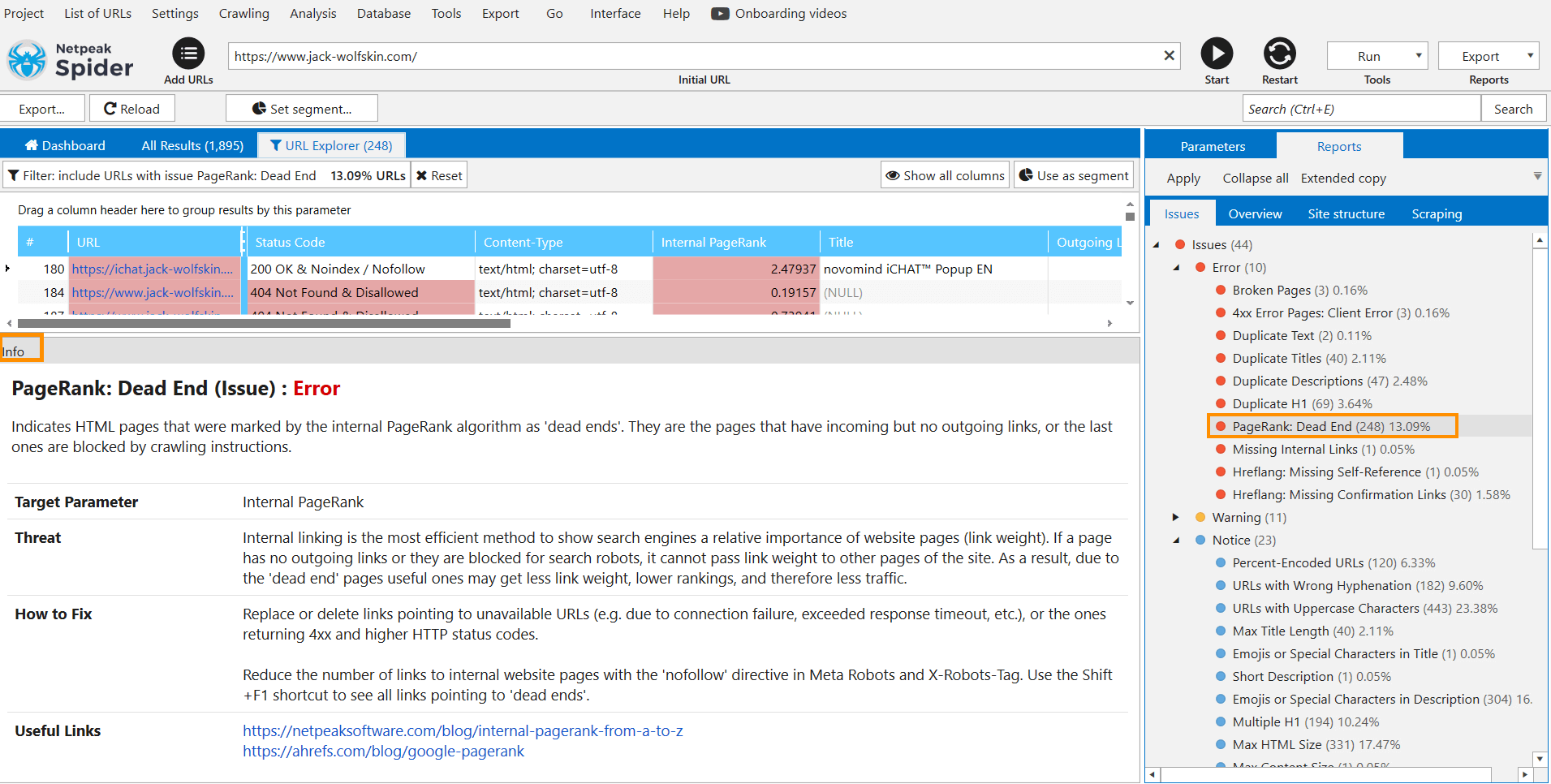

Access troubleshooting tips and retrieve a crawl report

One of the most helpful features of Netpeak Spider is the troubleshooting section, which offers practical tips on fixing particular errors. To access it, click on a specific issue in the list on the right to get detailed information about the issue itself and how you can handle it. If you want to dig deeper into the problem, there are also links to further reading.

Once you're done crawling, you can save the results by downloading a PDF report. You can get it at any time and use it in your further work.

Bottom line

If a crawling tool can't detect content on your website, you risk leaving particular pages unindexed, meaning they won't appear in the search results and will stop bringing in organic traffic. Such crawl issues can cost you a lot of potential customers and extra revenue, not to mention credibility and trust from existing clients.

Now you know what a crawl issue is and how you can deal with some of the most widespread ones. To put this into practice, try Netpeak Spider. This tool will help you quickly detect and prioritize site- and URL-related problems and provide recommendations on fixing them. Get a free trial to see if it's a good fit, and start improving your SEO metrics right away!
