Checklist for Regular Monitoring of a Website with Netpeak Spider and Checker


When you work with websites, sooner or later something breaks, fails to load, or isn't displayed. When this happens, it's important to detect the issues as soon as possible and fix them.

In this post, I'm sharing the checklist I use to check a site for the most common issues. It'll come in handy for large websites in particular, because there these issues can cause serious damage to your business. I'm going to use Netpeak Spider and Checker to showcase the process.

Netpeak Spider and Checker have free versions with no limits on the period of use or the number of analyzed URLs, so you can monitor your website regularly and work with other basic features of the programs.

To get access, you just need to sign up, download, and launch the programs 😉


P.S. Right after signup, you'll also have the opportunity to try all the paid functionality, then compare our plans and pick the one that suits you best.

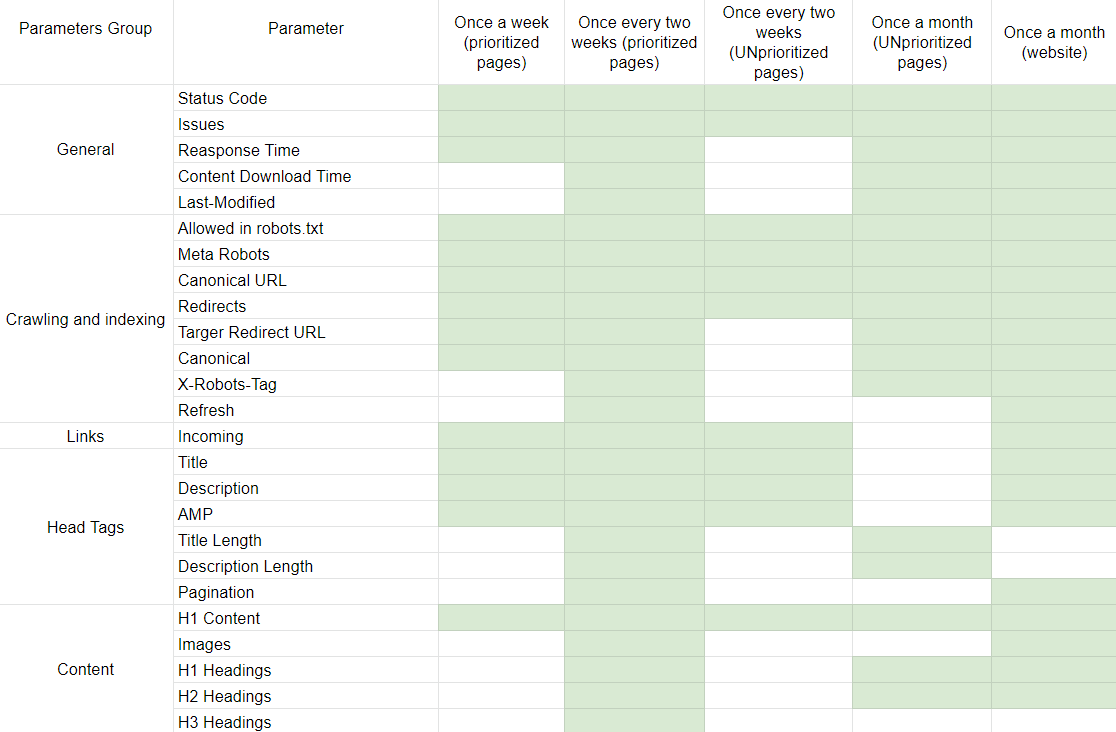

1. Web Pages Clustering

Before using the checklists, I recommend splitting your landing pages into prioritized and unprioritized ones.

- Prioritized are the most significant pages for you. In my case, these are the pages generating the most traffic (including the main page).

- Unprioritized are the other landing pages.
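If you pick prioritized pages by traffic, as I do, the split can be sketched in a few lines of Python. This is only an illustration, assuming you have per-page visit counts exported from your analytics tool. The `split_by_traffic` function, the sample URLs, and the 80% traffic share cutoff are hypothetical and are not part of Netpeak Spider or Checker.

```python
def split_by_traffic(traffic_by_url, share=0.8):
    """Return (prioritized, unprioritized) lists of URLs.

    Pages are ranked by traffic; the top pages that together bring
    `share` of all visits are treated as prioritized (an assumed rule).
    """
    total = sum(traffic_by_url.values())
    ranked = sorted(traffic_by_url, key=traffic_by_url.get, reverse=True)
    prioritized, covered = [], 0
    for url in ranked:
        # Stop once the pages collected so far cover the requested share.
        if total and covered / total >= share:
            break
        prioritized.append(url)
        covered += traffic_by_url[url]
    unprioritized = [u for u in ranked if u not in prioritized]
    return prioritized, unprioritized


# Hypothetical monthly visits per landing page.
visits = {"/": 600, "/catalog": 300, "/blog": 70, "/contacts": 30}
prioritized, unprioritized = split_by_traffic(visits)
```

Any other rule works just as well (revenue, conversions, a manual list); the point is to end up with two URL lists you can load into the crawler.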

We’ll work with lists of URLs, so remember to upload the pages to Netpeak Checker first to check whether they are indexed by the search engine you need.

On top of the prioritized/unprioritized split, I divided my checklist into three parts by frequency:

- Check once a week

- Check once every two weeks

- Check once a month

This split lets me check the most important parameters more often. It's all conditional, of course: you can change the frequency and the parameters of the checks :)

You can copy the checklist here.
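To keep track of which part is due, a tiny helper like the one below can be enough. It's a sketch under my own assumptions: checks run on the same weekday as a chosen start date, and "once a month" is approximated as every four weeks. The `checks_due` function and the `INTERVALS` names are hypothetical.

```python
from datetime import date

# Intervals in weeks for the three parts of the checklist.
# "monthly" is approximated as every 4 weeks (an assumption).
INTERVALS = {"weekly": 1, "biweekly": 2, "monthly": 4}


def checks_due(today, start):
    """Return the checklist parts due on `today`, counting weeks from `start`."""
    days = (today - start).days
    # Checks are only due on the same weekday as the start date.
    if days < 0 or days % 7 != 0:
        return []
    week = days // 7
    return [name for name, every in INTERVALS.items() if week % every == 0]


start = date(2024, 1, 1)
print(checks_due(date(2024, 1, 15), start))
```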

2. Checklist for Monitoring Prioritized Pages

First of all, prepare the list of prioritized URLs you're going to check.

- Once a week check the list of prioritized URLs using Netpeak Spider and the following parameters and settings.

  - Settings

    - General → Basic crawling settings → Crawl only in directory (nothing more)

    - Advanced → Consider ALL crawling and indexing instructions

  - Parameters

    - General:

      - Response time

      - Issues

      - Status code

    - Crawling and indexing:

      - Allowed in robots.txt

      - Meta Robots

      - Canonical URL

      - Redirects

      - Target redirect URL

      - Canonical

    - Links: Incoming → Internal links

    - Head tags:

      - Title

      - Description

      - AMP (if it’s necessary)

    - Content: H1 content
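Several of the weekly parameters (Title, Description, Meta Robots, Canonical URL, H1 content) live in the page's HTML. To illustrate what is being collected for each URL, here's a minimal Python sketch that uses only the standard library. The `HeadTagParser` class and the `parse_head_tags` helper are hypothetical names, and this is not how Netpeak Spider works internally.

```python
from html.parser import HTMLParser


class HeadTagParser(HTMLParser):
    """Collect title, meta description, meta robots, canonical, and H1 texts."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.meta_robots = None
        self.canonical = None
        self.h1 = []
        self._in = None  # tag whose text is currently being collected

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in = "title"
        elif tag == "h1":
            self._in = "h1"
            self.h1.append("")
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            if name == "description":
                self.description = attrs.get("content")
            elif name == "robots":
                self.meta_robots = attrs.get("content")
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == self._in:
            self._in = None

    def handle_data(self, data):
        if self._in == "title":
            self.title += data
        elif self._in == "h1":
            self.h1[-1] += data


def parse_head_tags(html):
    """Parse an HTML string and return the filled-in parser object."""
    parser = HeadTagParser()
    parser.feed(html)
    parser.close()
    return parser
```

Feed it the HTML of a page and compare `title`, `description`, `meta_robots`, `canonical`, and `h1` with what you expect for that URL.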

- Once every two weeks check the list of prioritized URLs using Netpeak Spider and the following parameters and settings.

  - Settings

    - Use the settings from the ‘once a week check’

    - In Advanced settings, tick all ‘Crawl URLs from the tag’ parameters

  - Parameters

    - General (in addition to the ‘once a week check’ parameters):

      - Content download time

      - Last-Modified

    - Crawling and indexing (in addition to the ‘once a week check’ parameters):

      - X-Robots-Tag

      - Refresh

    - Links: the same parameters – Incoming → Internal links

    - Head tags (in addition to those mentioned before):

      - Title length

      - Description length

    - Content (in addition to those mentioned before):

      - Images

      - H1 headings

      - H2 headings

      - H3 headings
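The biweekly additions are mostly HTTP headers (X-Robots-Tag, Refresh, Last-Modified) and length checks. Here's a rough Python sketch of how such values can be interpreted. The `header_checks` and `length_issue` functions are hypothetical, the length limits in the usage lines are assumptions rather than Netpeak Spider defaults, and user-agent-specific X-Robots-Tag values (like `googlebot: noindex`) are deliberately not handled.

```python
def header_checks(headers):
    """Read X-Robots-Tag, Refresh, and Last-Modified from response headers."""
    lowered = {k.lower(): v for k, v in headers.items()}
    x_robots = lowered.get("x-robots-tag", "")
    directives = [d.strip().lower() for d in x_robots.split(",") if d.strip()]
    return {
        # "none" is shorthand for "noindex, nofollow".
        "noindex": "noindex" in directives or "none" in directives,
        "refresh": lowered.get("refresh"),
        "last_modified": lowered.get("last-modified"),
    }


def length_issue(text, min_len, max_len):
    """Return 'missing', 'short', 'long', or None for a title or description."""
    if not text or not text.strip():
        return "missing"
    n = len(text.strip())
    if n < min_len:
        return "short"
    if n > max_len:
        return "long"
    return None


# Assumed limits: 10-70 characters for titles, 50-320 for descriptions.
title_problem = length_issue("Hi", 10, 70)
description_problem = length_issue("", 50, 320)
```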

3. Checklist for Monitoring Unprioritized Pages

Before starting, prepare the list of unprioritized URLs you're going to check.

- Once every two weeks check the list of unprioritized URLs using Netpeak Spider and the following parameters and settings.

  - Settings

    - General → Basic crawling settings → Crawl only in directory (nothing more)

    - Advanced → Consider ALL crawling and indexing instructions

  - Parameters

    - General:

      - Status code

      - Issues

    - Crawling and indexing:

      - Allowed in robots.txt

      - Meta Robots

      - Canonical URL

      - Redirects

    - Links: Incoming → Internal links

    - Head tags:

      - Title

      - Description

      - AMP (if it’s necessary)

    - Content: H1 content
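The "Allowed in robots.txt" parameter can also be spot-checked outside the crawler. Below is a small Python sketch based on the standard `urllib.robotparser` module. The `blocked_urls` function and the sample rules are hypothetical, and Python's parser may interpret some rules (wildcards, for instance) differently from a particular search engine, so treat the result as a hint only.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    # parse() accepts the file content as a list of lines.
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


robots = "User-agent: *\nDisallow: /private/\n"
urls = ["https://example.com/", "https://example.com/private/page"]
print(blocked_urls(robots, urls))
```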

- Once a month check the list of unprioritized URLs using Netpeak Spider and the following parameters and settings.

  - Settings

    - Use the settings from the ‘once a week check’

    - In Advanced settings, tick all parameters in the ‘Crawl URLs from the tag’ section

  - Parameters

    - General (in addition to those mentioned before):

      - Response time

      - Content download time

      - Last-Modified

    - Crawling and indexing (in addition to those mentioned before):

      - X-Robots-Tag

      - Target redirect URL

      - Canonical

    - Links: the same parameters – Incoming → Internal links

    - Head tags (in addition to those mentioned before):

      - Title length

      - Description length

    - Content (in addition to those mentioned before):

      - H1 headings

      - H2 headings
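Once the crawl results are exported, the status code, target redirect URL, and canonical columns can be scanned automatically. The Python sketch below assumes each row is a dict with `url`, `status_code`, `redirect_target`, and `canonical` keys; these names are hypothetical and will differ from the column headers in a real export.

```python
def flag_row(row):
    """Return a list of issue labels for one crawled URL (a dict)."""
    issues = []
    status = int(row["status_code"])
    if 300 <= status < 400:
        issues.append(f"redirects to {row.get('redirect_target') or 'unknown'}")
    elif status != 200:
        issues.append(f"status {status}")
    canonical = row.get("canonical")
    # Ignore a trailing slash when comparing the canonical with the URL.
    if canonical and canonical.rstrip("/") != row["url"].rstrip("/"):
        issues.append("canonical points elsewhere")
    return issues


row = {
    "url": "https://example.com/a",
    "status_code": "301",
    "redirect_target": "https://example.com/b",
}
print(flag_row(row))
```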

4. Checklist for Monitoring the Whole Website

When you check only certain pages, you can miss issues on new ones. So, to see the overall picture of the website, I advise you to crawl the entire site with Netpeak Spider.

Once a month check the website using Netpeak Spider and the following parameters and settings.

- Settings

  - General → Basic crawling settings →

    - Crawl only in directory (or with subdomains if they exist)

    - Check images

  - Advanced → Consider ALL crawling and indexing instructions

  - Advanced → tick all ‘Crawl URLs from the tag’ parameters

- Parameters

  - General:

    - Status code

    - Issues

    - Response time

    - Content download time

    - Last-Modified

  - Crawling and indexing:

    - Allowed in robots.txt

    - Meta Robots

    - Canonical URL

    - Redirects

    - Target redirect URL

    - X-Robots-Tag

    - Refresh

    - Canonical

  - Links: Incoming → Internal links

  - Head tags:

    - Title

    - Description

    - Pagination

    - AMP (if it’s necessary)

  - Content:

    - Images

    - H1 content

    - H1 headings

    - H2 headings

Pro tip. Save the settings as templates to save time on the next checks.

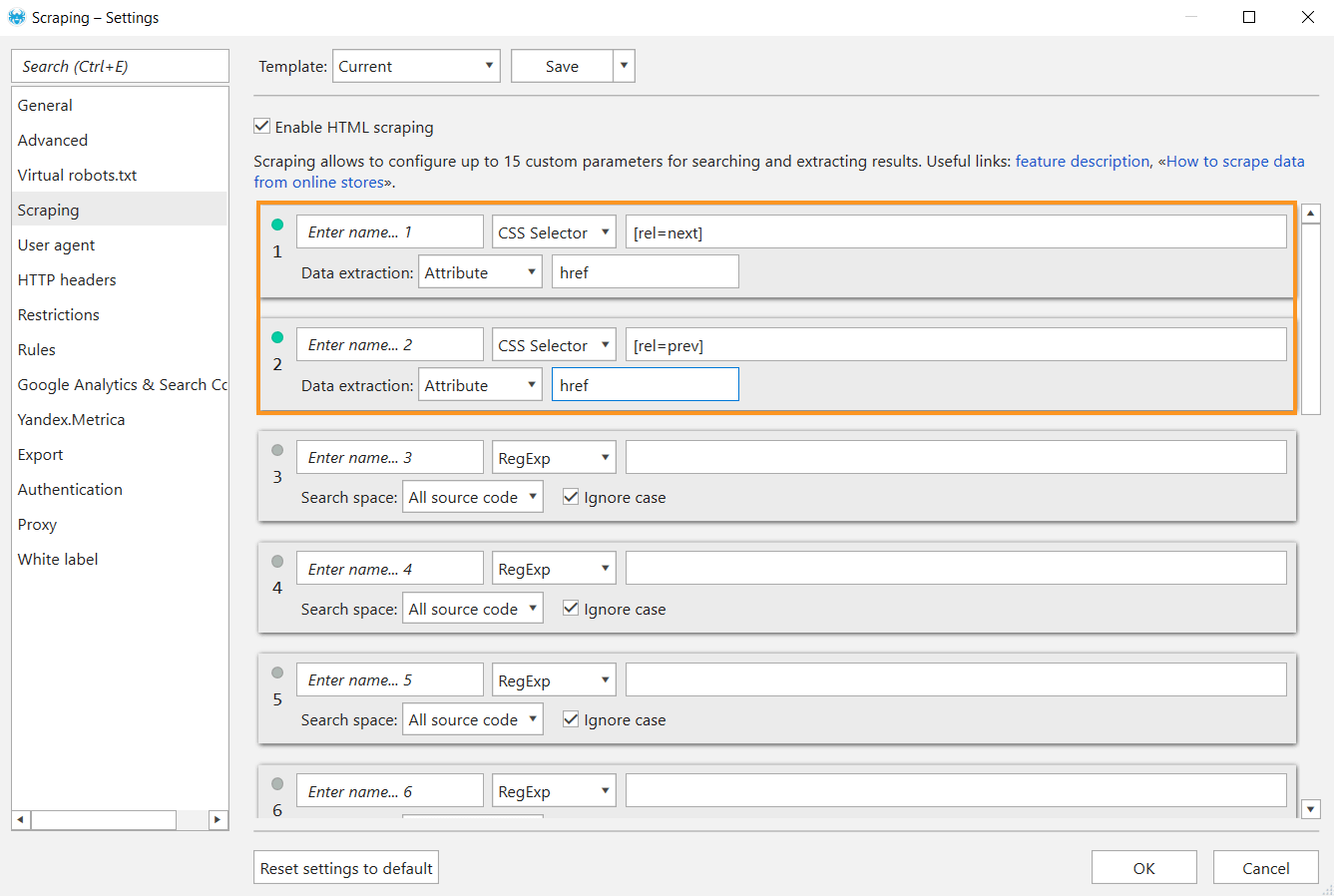

If you need to check rel=next/prev markup and collect the links from the pagination tags, set this up in the scraping parameters.
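For reference, this is the kind of data such scraping is after. The Python sketch below pulls rel=next/prev links out of an HTML string with the standard library. The `PaginationParser` class and the `pagination_links` helper are hypothetical names used only for illustration, not a Netpeak Spider feature.

```python
from html.parser import HTMLParser


class PaginationParser(HTMLParser):
    """Collect href values of <link rel="next"> and <link rel="prev">."""

    def __init__(self):
        super().__init__()
        self.links = {}

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attrs = dict(attrs)
        # rel may hold several space-separated values.
        rel = (attrs.get("rel") or "").lower().split()
        for value in ("next", "prev"):
            if value in rel and attrs.get("href"):
                self.links[value] = attrs["href"]


def pagination_links(html):
    """Return a dict like {'prev': ..., 'next': ...} for the given HTML."""
    parser = PaginationParser()
    parser.feed(html)
    parser.close()
    return parser.links
```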

5. Cases

These checks have helped me find and fix a lot of issues in time. For example, I:

- found that the redirects were configured incorrectly after the category restructuring.

- found duplicate meta tags on prioritized pages.

- found that programmers did not transfer SEO texts to some pages after the redesign.

- found that some important pages were blocked from indexing.

- found redirect issues during the change of the language version of the site.
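Duplicate meta tags like the ones mentioned above are easy to catch in exported crawl data as well. A minimal Python sketch, assuming each row is a dict with a `url` key plus the field you want to compare; the `duplicate_values` function and the key names are hypothetical.

```python
from collections import defaultdict


def duplicate_values(rows, field):
    """Group URLs by a normalized field value and keep groups of two or more."""
    groups = defaultdict(list)
    for row in rows:
        # Compare values case-insensitively and ignore surrounding spaces.
        value = (row.get(field) or "").strip().lower()
        if value:
            groups[value].append(row["url"])
    return {value: urls for value, urls in groups.items() if len(urls) > 1}


rows = [
    {"url": "/a", "title": "Shoes"},
    {"url": "/b", "title": "shoes "},
    {"url": "/c", "title": "Hats"},
]
print(duplicate_values(rows, "title"))
```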

Summing up

With software like Netpeak Spider and Checker in your arsenal, you can easily set up systematic checks of both prioritized and unprioritized pages on your website.

Feel free to share your checklists for audits in the comments or discuss this one :)