82 Resources to Learn SEO Basics from Scratch


If you’re new to SEO and website audits, consider this your starting page. In this post, we'll share the key SEO guidelines and aspects to consider while running a website audit or developing an optimization strategy. Keep reading to find out more!


What is SEO?

Search Engine Optimization (SEO) is a set of practices for increasing both the quantity and the quality of traffic to a website. Plainly speaking, it’s all about reaching the highest possible position in the organic search engine results pages (SERPs) so that the right audience finds you.

Though at first glance SEO may seem like a bunch of dull technical routines to be repeated one step at a time, it requires strong analytical and creative skills. Besides a good website structure, a sound technical foundation, and the proper keywords in the proper places, you also need relevant content that meets your visitors' needs, as well as links from other websites as proof of your project’s trustworthiness. So it’s a never-ending process.

These days, SEO is more vital than ever: many businesses are moving online because it brings more opportunities and a broader audience.

On the one hand, there is a user who has a need or a question and types it into the search bar, let’s say, 'how to make a smoothie.' As soon as they hit 'Search,' a list of results appears. They click the one that seems the best fit and jump to the website to find the answer (in the best-case scenario).

On the flip side, there's backstage magic that makes all these things happen. The main point is to decipher the user’s intent: understand how they behave online, what words they use, and what results they expect to receive.

The main goal of any search engine is to satisfy the user's needs by effectively delivering results. The main objective of SEO is to make this happen.

Why is SEO important?

Your potential customers are most likely searching for products or services like yours, and many of them use similar search terms. Ranking for those terms will attract more visitors to your website. But nothing comes without effort – that’s where SEO comes into play. It helps Google understand that your website deserves high rankings.

What are the main benefits of doing SEO?

Most people click one of the first several search results, so higher rankings usually lead to more traffic. Unlike other channels, search traffic also tends to be consistent and passive, as long as the number of searches doesn’t decline.

Moreover, organic search traffic is "free" (although SEO still requires time and effort), which makes a real difference given how much paid ads can cost.

How do you do SEO efficiently?

Understanding SEO and working on the best tactics consists of five main steps:

- Keyword research;

- Content creation;

- On-page SEO;

- Link building;

- Technical SEO.

Setting up successful SEO tactics

SEO concepts are much easier to understand and implement if you optimize your website correctly from the very beginning. Let’s examine how you can ensure that.

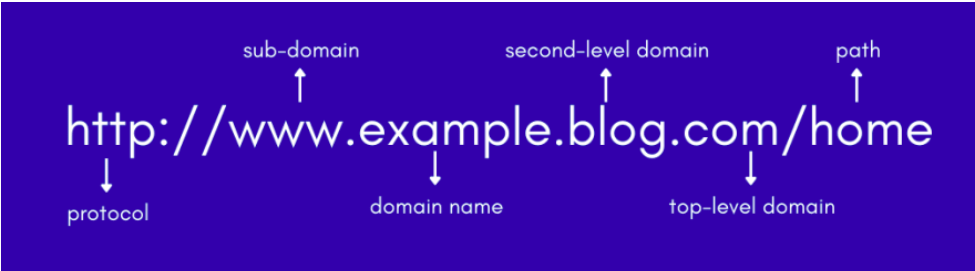

Getting a good domain for your website

Most domains work well for SEO, so there's no need to worry about changing yours if you already have one. However, if you’re still in the process of getting a domain, consider these two SEO essentials that make a good domain:

Domain name

Your domain name should be short and catchy, without hyphens or special characters. Also, avoid "shoehorned" keywords.

Top-level domain (TLD)

It’s the part after the domain name (the most famous example is .com). Your choice of TLD makes no direct difference for SEO, but .com is the best option in most cases because it’s the most recognizable and trusted one. If you have a charitable website, .org or your local equivalent would also work well. If you only do business in one country (outside the U.S.), a local country code top-level domain (ccTLD), such as .co.uk, is a viable option too.

It’s best to avoid using TLDs like .info and .biz, as people usually associate them with spam. But it’s not bad if you have one — you can still build a legit website that ranks high in search results.
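The two checks above (domain name and TLD) can be sketched in a few lines of Python. This is only an illustration: the `review_domain` helper, the 15-character limit, and the list of discouraged TLDs are assumptions made for this example, not official rules from any search engine.

```python
import re

# Assumptions for illustration only: neither value is an official rule.
MAX_NAME_LENGTH = 15
DISCOURAGED_TLDS = {"info", "biz"}


def review_domain(domain: str) -> list[str]:
    """Return human-readable warnings for a domain such as 'example.com'."""
    # Split at the first dot: 'example.co.uk' -> ('example', 'co.uk').
    name, _, tld = domain.lower().partition(".")
    warnings = []
    if len(name) > MAX_NAME_LENGTH:
        warnings.append(f"name is longer than {MAX_NAME_LENGTH} characters")
    if "-" in name:
        warnings.append("name contains a hyphen")
    if not re.fullmatch(r"[a-z0-9-]+", name):
        warnings.append("name contains special characters")
    if tld in DISCOURAGED_TLDS:
        warnings.append(f".{tld} is often associated with spam")
    return warnings


print(review_domain("example.com"))
print(review_domain("best-cheap-seo-tools.biz"))
```

A clean domain returns an empty list, while a long, hyphenated .biz domain collects several warnings. Treat the output as a checklist to think about, not as a verdict.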

Using a website platform

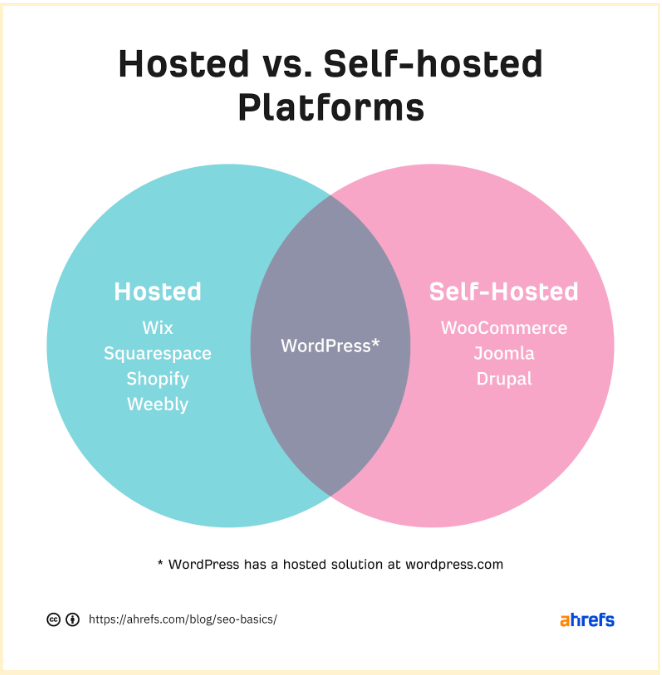

Site platforms let you easily create and manage a new website. Their most common types are the following:

- Hosted platforms. You receive website hosting services and ready-made designs. Moreover, it’s possible to add and edit content without interfering with the code.

- Self-hosted platforms. Here, you can also add and edit content without changing code. The only difference is that you have to install and host the platform yourself.

When it comes to SEO, a self-hosted, open-source platform like WordPress is your best bet. Here are the reasons why this option is so popular:

- It’s customizable. You can edit the open-source code as you like. Plus, a vast developer community knows the platform inside out and can help you at any time.

- It’s extensible. There are tens of thousands of plugins for extending your website's functionality, including SEO.

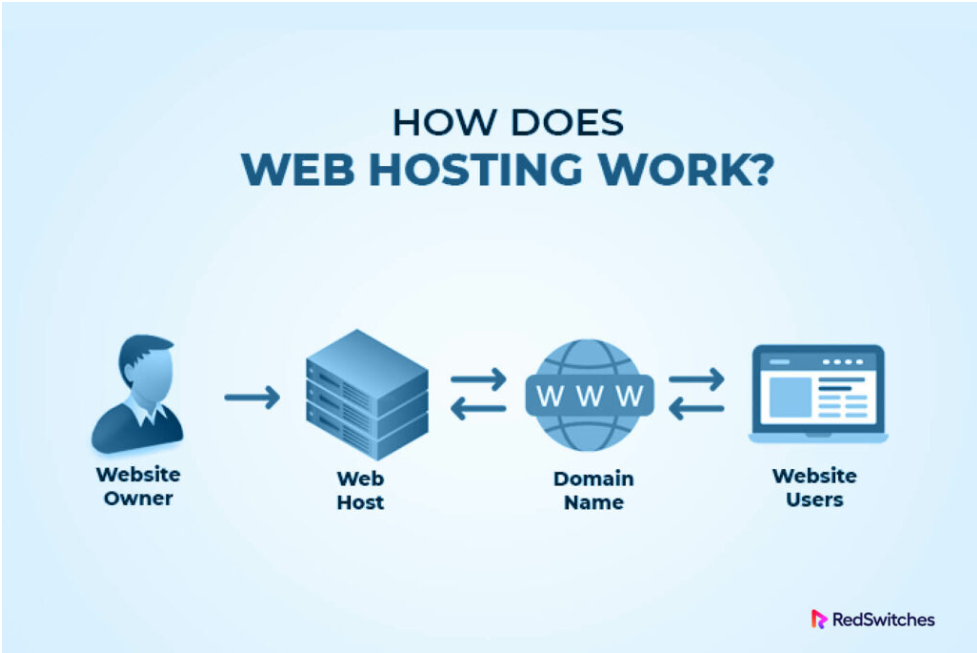

Using a reliable web hosting provider

If you use a self-hosted solution for your website, you’ll need a web host to store it on a server available to anyone with Internet access. Consider these three points when choosing one:

- Security. Look for a host that includes a free SSL/TLS certificate or at least supports Let’s Encrypt, a nonprofit that provides free certificates.

- Server location. It takes time for website data to travel between the server and a visitor. Hence, it would be best to pick a host with servers in the same country as most of your traffic.

- Customer support. Getting a host with 24/7 support is a perfect option. To test support quality, ask about the points above before signing up for such services.

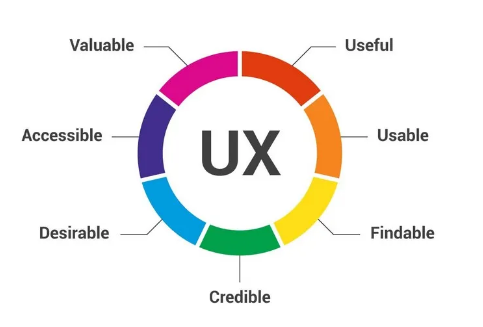

Creating a positive UX

Google aims to rank pages that provide an outstanding user experience. Here's how you can achieve this goal:

Use HTTPS

Ensure your site doesn’t expose your users' confidential data to hackers. To avoid that outcome, always encrypt your website with an SSL/TLS certificate.

Create an appealing design

Nobody likes old-fashioned, sloppy websites. Your brand's site should look well-groomed, be easy to navigate, and reflect your vision.

Make your website mobile-friendly

Many people now prefer mobile devices to desktop ones. Therefore, it is critical to make your website as pleasant to use on a smartphone or tablet as on a laptop.

Ensure a readable font size

People browse the web using all kinds of gadgets. Ensure your content is readable across all devices and operating systems.

Avoid intrusive pop-ups and ads

Ads often annoy Internet users, although adding them may be crucial to your business. If that’s the case, avoid intrusive interstitials, as they can keep your website from ranking as high as it otherwise would.

Ensure a fast loading speed

Page speed is a ranking factor on both desktop and mobile devices. But it doesn’t mean your site needs to load lightning-fast: the factor mainly affects pages that deliver the slowest user experience.

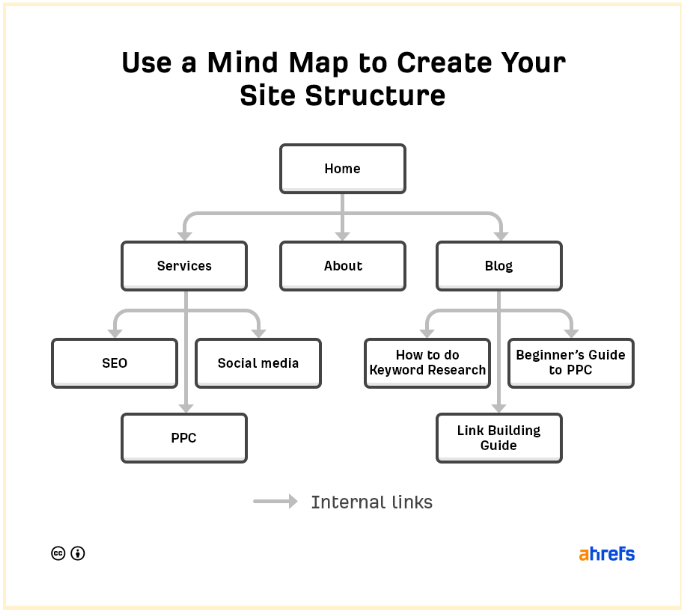

Developing a logical site structure

Visitors and search engines should find it easy to access content on your website. That’s why creating a logical content hierarchy is vital. Sketching a mind map could come in handy here.

Each branch on the map becomes an internal link. Internal links are crucial for UX and SEO for several reasons:

- They enable search engines to find new pages (pages that can’t be discovered can’t be indexed).

- They pass PageRank (the foundation of Google search) around your site. Google evaluates page quality partly by analyzing the links that point to it.

- They let search engines understand what your page is about. For that, Google checks the anchor text of each link.
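To see how internal links pass PageRank around a site, here is a minimal sketch of the simplified textbook PageRank iteration in Python. It is not Google's production algorithm. The `site` map and its URLs are hypothetical, and the sketch assumes every link target also appears as a key in the map.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Simplified PageRank over a map of page -> pages it links to."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the same small base score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # A page splits its score evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page without outgoing links spreads its score everywhere.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site: every page links back to the homepage.
site = {
    "/": ["/blog/", "/pricing/"],
    "/blog/": ["/", "/blog/seo-basics/"],
    "/blog/seo-basics/": ["/", "/pricing/"],
    "/pricing/": ["/"],
}
ranks = pagerank(site)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:20} {score:.3f}")
```

In this toy site the homepage ends up with the highest score because every other page links to it, which is the intuition behind linking to your most important pages from many places.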

Using a logical URL structure

URLs are another SEO fundamental: they let searchers understand a page's content and context. Most site platforms make it easy to structure your URLs effectively. WordPress, for instance, offers five main options for that:

- Plain: website.com/?p=123

- Day and name: website.com/2021/03/04/seo-basics/

- Month and name: website.com/2021/03/seo-basics/

- Numeric: website.com/archives/123

- Post name: website.com/seo-basics/

If you want to set up a new website, make your structure as clear and descriptive as possible. That would be the post name. If you’re working with an existing website, it’s better not to change the URL structure. This way, you'll avoid broken links and redirect errors.
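As an illustration of the post name structure, the sketch below turns a post title into a clean URL slug. The `slugify` and `post_name_url` helpers are hypothetical names written for this example. They are not WordPress functions, and WordPress's own slug rules may differ in the details.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Convert a post title into a lowercase, hyphen-separated slug."""
    # Strip accents so that 'Café' becomes 'Cafe'.
    ascii_title = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_title.lower())
    return slug.strip("-")


def post_name_url(domain: str, title: str) -> str:
    """Build a post-name style URL for the given domain and title."""
    return f"https://{domain}/{slugify(title)}/"


print(post_name_url("website.com", "SEO Basics"))
```

The resulting URL tells both searchers and search engines what the page is about, which plain or numeric structures can't do.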

Installing an SEO plugin

Most site platforms handle basic SEO functionality out of the box. However, if you’re using WordPress, it’s worth installing an SEO plugin. Otherwise, even a basic SEO setup will be challenging. Yoast SEO and Rank Math are two popular choices.

Getting on Google

Submitting your site to Google helps the SEO strategies you've selected pay off sooner. This way, Google can find your website even without backlinks. Here's what you should do to achieve that:

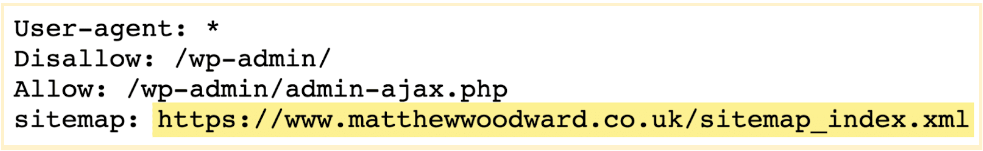

Find or create a sitemap

Sitemaps list your website's essential pages, those that you want search engines to index. If you already have a sitemap, you'll likely find it at either of these URLs:

- site.com/sitemap.xml

- site.com/sitemap_index.xml

If you can’t find it there, check site.com/robots.txt, where the sitemap location is often listed. Still can’t find one? That most likely means you don’t have a sitemap. In that case, consider creating one for your website.
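Both steps can be sketched in Python using only the standard library. The first function looks for `Sitemap:` lines in the text of a robots.txt file, and the second builds a minimal sitemap in the sitemaps.org XML format. The function names and sample URLs are assumptions for this example. In practice, your site platform or SEO plugin will usually generate the sitemap for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def sitemaps_from_robots(robots_txt: str) -> list[str]:
    """Return sitemap URLs declared in the text of a robots.txt file."""
    found = []
    for line in robots_txt.splitlines():
        # Split at the first colon only, so the URL's own colons survive.
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() == "sitemap":
            found.append(value.strip())
    return found


def build_sitemap(urls: list[str]) -> str:
    """Build a minimal XML sitemap listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical robots.txt content, pasted in as a string.
robots = "User-agent: *\nDisallow: /admin/\nSitemap: https://site.com/sitemap_index.xml\n"
print(sitemaps_from_robots(robots))
print(build_sitemap(["https://site.com/", "https://site.com/seo-basics/"]))
```

The sketch works on text you paste in, so it never touches the network. Fetching a live robots.txt and uploading the generated file to your server are left out on purpose.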

Submit your sitemap

You can submit your sitemap via Google Search Console (GSC) in no time.

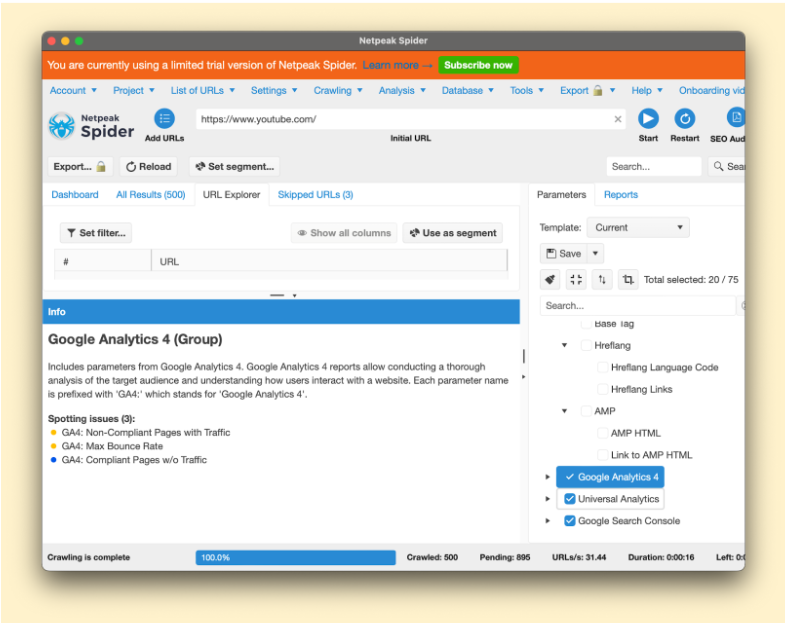

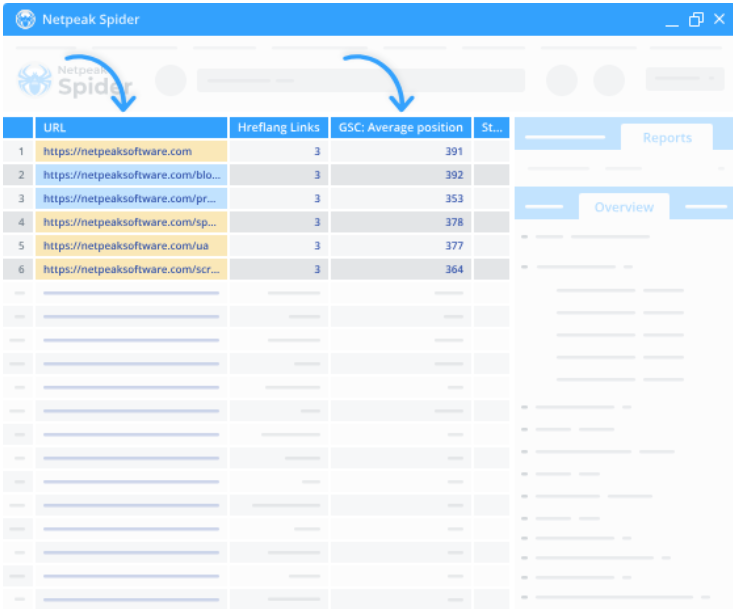

How to track SEO success with Netpeak Spider

Running regular website audits will boost your site performance and show whether you've applied your knowledge of SEO fundamentals effectively. Netpeak Spider is an excellent option for that.

This tool monitors your website's key performance metrics in real time and exports crawling results in a convenient PDF format. Also, it integrates data from other tools like Google Analytics for in-depth analysis.

Moreover, Netpeak Spider is super-easy to work with. Here's all you should do to start crawling your website with this solution:

- Create a list of URLs you need to check, or paste them all from the clipboard into the search bar.

- Check all the metrics you want to analyze on the right sidebar.

- Click "Start" to launch the crawling process.

Here's what else you can monitor and analyze with Netpeak Spider:

Organic traffic

Our tool shows which compliant and non-compliant pages receive traffic and which don't. To see this data, enable the integrations with Google Analytics and Search Console.

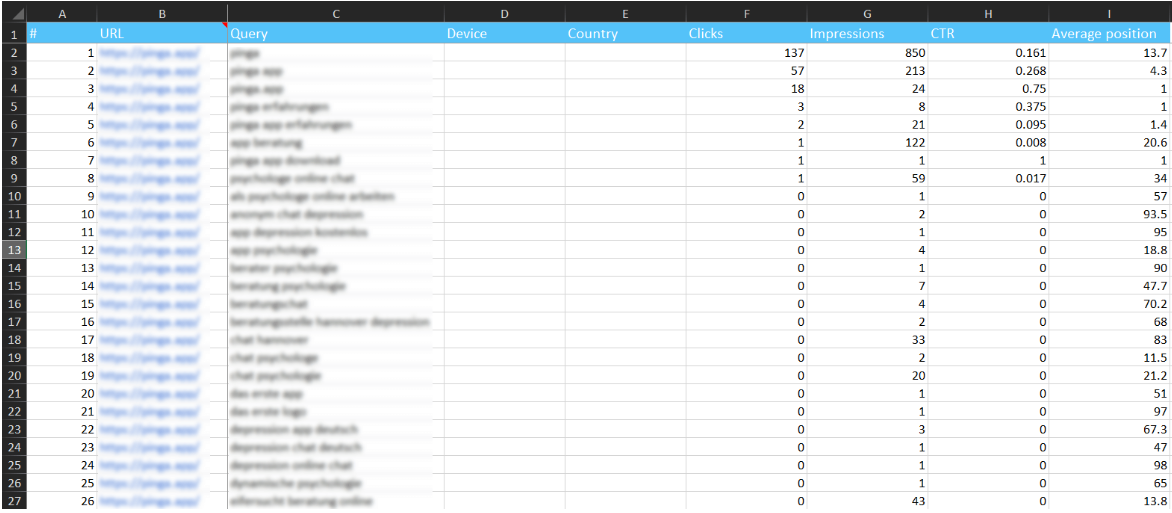

Keyword rankings

Netpeak Spider quickly analyzes the best-performing keywords your web pages rank for. Also, it explores the metrics related to that parameter:

Visibility

Check the number of clicks, impressions, and your page's average position based on the keywords it ranks for with Netpeak Spider. Once the crawling process is complete, you can monitor all these metrics on a live dashboard.

Knowledge base

If you're only starting SEO, here are some beneficial resources you can use:

How Google Crawls

In this post, you'll learn how Google crawls the web, indexes websites and separate pages, and delivers search results.

How Google Works

If you want to get into SEO basics, you should understand how search engines generally work. This video provides a detailed description of how Google functions.

Getting the Basics

Optimizing your site is vital to getting traffic from Google and ranking well. It will make Google consider your site high-quality. This article will show you the essential attributes you should work on to make it happen.

PageRank

Learn more about PageRank to understand Google and why it ranks some sites higher than others. This Wikipedia article provides a good background on that matter—even Google employees reference it.

Quick Sprout’s Guide to SEO

It’s a nine-chapter, infographic-style beginner's guide to SEO. We recommend it as a good source if you want something more thorough than a simple explanation yet much briefer than a comprehensive book.

Moz’s Guide to SEO

Don’t want to learn SEO basics through long articles and complex infographics? Then check out this 10-chapter guide by the folks at Moz.

SEO Starter Guide – Written by Google

Another valuable resource is a 32-page document explaining how to do SEO in simple terms.

8 First Step SEO Tips for Bloggers

Are you a blogger trying to boost traffic to your website? Then, dive into this article first to learn how to streamline your SEO knowledge and skills.

SEO Tips for Beginners

Are you a complete SEO newbie? This short article features five things you can do now to guide your SEO tactics in the right direction.

SEO Community Resources

If you want to get professional assistance or join the community of SEO experts, try the following sources:

- Talk with an Expert. Need to turn to someone for help or advice with SEO? Check out the experts available on Clarity.

- Contact a Recommended SEO Company. Reach out to one of Moz’s recommended SEO companies.

- Inbound. It’s a Hacker News-like discussion board covering many inbound marketing issues. There’s a heavy focus on SEO here.

- Webmaster Central Forum. This large forum hosted by Google will help you solve various SEO issues.

- Moz Community. This community hosted by Moz features a Q&A, articles written by SEO experts, and webinars.

- SEO Subreddit. Consider turning to the SEO community on Reddit. It features news, Q&A, case studies, and more.

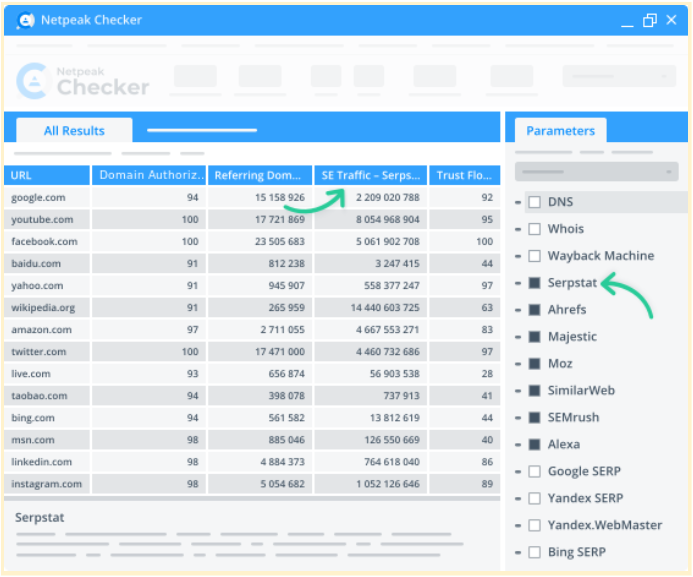

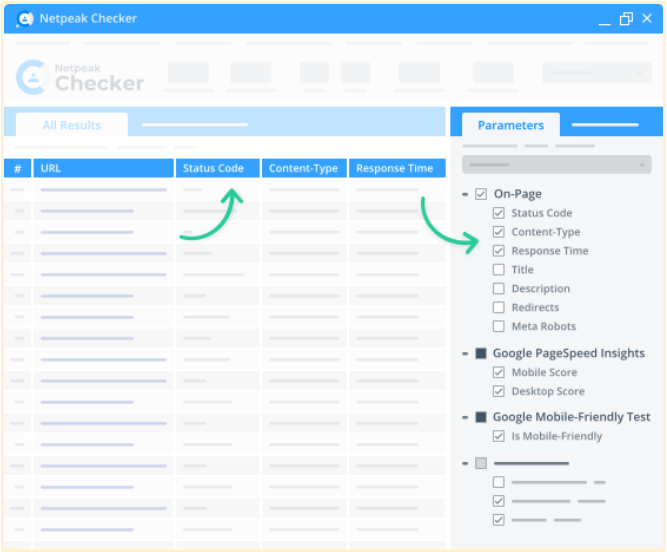

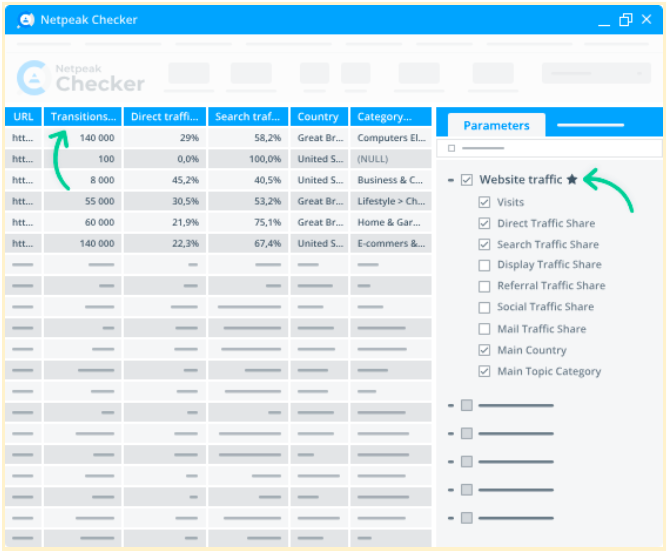

Check URLs for SEO parameters with Netpeak Checker

To learn and understand the basics of search engine optimization, try Netpeak Checker — a powerful SEO analytics tool.

This app offers dozens of helpful features and enables integrations with many SEO-related services. Apart from that, here's what else Netpeak Checker offers:

Integration with 25 other services to analyze 450+ parameters

Netpeak Checker enables over 25 integrations with other services, including Moz, SimilarWeb, Ahrefs, Serpstat, Google Analytics, and more.

50+ on-page parameters

Netpeak Checker shows the on-page parameters you've selected on an interactive dashboard. These metrics include redirects, titles, response time, status codes, and mobile friendliness. To see them, select the required stats and hit "Start."

Website traffic estimation

Netpeak Checker evaluates the traffic volumes on any target page, potential link-building donors’ share ratios, and traffic by location. On top of that, it shows the prevailing types of traffic on each page (search, organic, direct, mail, social, etc.).

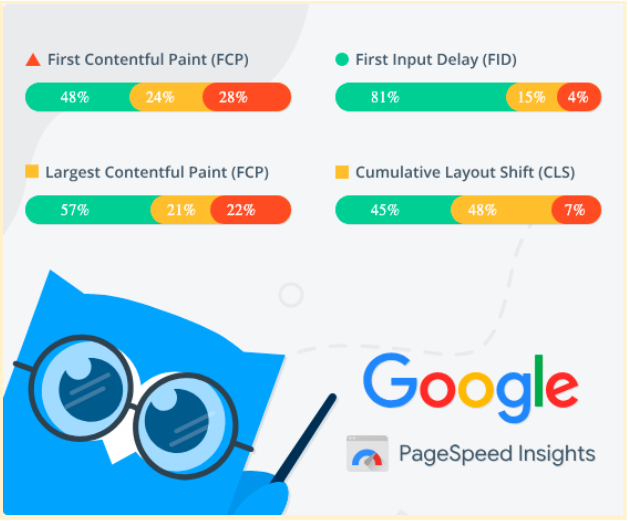

Batch Core Web Vitals checkup

Pull data from Google PageSpeed Insights to get insights into your website's load speed, responsiveness, and visual stability.
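To make those three aspects concrete, the sketch below rates Core Web Vitals values against the thresholds Google publishes for Largest Contentful Paint (load speed), Interaction to Next Paint (responsiveness), and Cumulative Layout Shift (visual stability). It doesn't call PageSpeed Insights or Netpeak Checker. The `rate_page` helper and the sample values are hypothetical.

```python
# Thresholds as (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # Largest Contentful Paint, in seconds
    "INP": (200, 500),   # Interaction to Next Paint, in milliseconds
    "CLS": (0.1, 0.25),  # Cumulative Layout Shift, unitless score
}


def rate_metric(name: str, value: float) -> str:
    """Rate a single metric as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def rate_page(metrics: dict[str, float]) -> dict[str, str]:
    """Rate every metric measured for one page."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}


# Hypothetical measurements for a single page.
print(rate_page({"LCP": 2.1, "INP": 350, "CLS": 0.3}))
```

Running the same rating over a batch of pages makes it easy to spot which ones need attention first.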

Integration with Google Drive & Sheets

Pair your Google Drive account with Netpeak Checker to export any report to Google Sheets. Share it with colleagues or partners within seconds.

Bottom line

Learning and implementing the key search engine optimization basics isn't as difficult as it might seem at the outset. With this kit, you'll get a grasp of the main aspects of SEO. To dig deeper, run several website audits to put your skills to work, because only practice makes perfect.