How to Crawl a Website and Find Issues on It with Netpeak Spider


Over the lifetime of our blog we have written many manuals and case studies on working with Netpeak Spider, but we realized that the simplest and most important one was still missing. Getting to grips with a tool and understanding its basic principles is not always easy. So in this post I decided to show beginners, step by step, how to crawl and check a website in Netpeak Spider.

- 1. How to crawl a website in Netpeak Spider

- 2. How to view the issues found during crawling

- 3. How to export reports from the program

1. How to crawl a website in Netpeak Spider

The main reasons to crawl a website are running an SEO audit and finding issues on the site. To do both in Netpeak Spider, follow these steps:

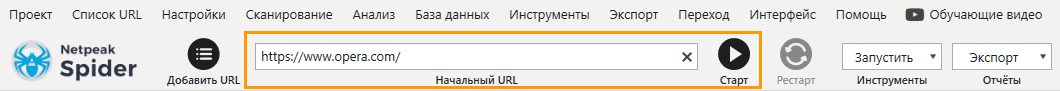

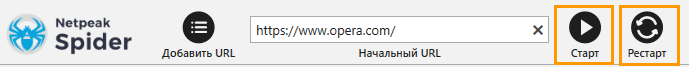

- Copy the address of the website you want to crawl and paste it into the "Initial URL" field.

- Click the "Start" button on the control panel.

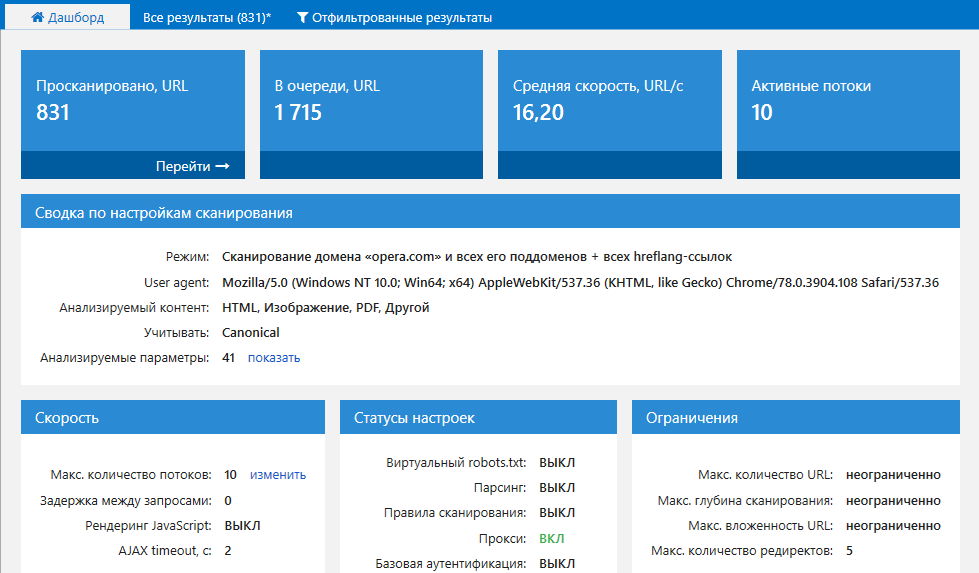

- Watch the crawling progress on the "Dashboard" tab.

- You can stop crawling manually by clicking the "Pause" button. To continue, click "Start" again; to start over from scratch, click "Restart". A restart is needed when you have changed the program settings and want to recrawl the project with the new settings applied.

2. How to view the issues found during crawling

First, wait until the program has completely finished crawling and analysis. Then do the following:

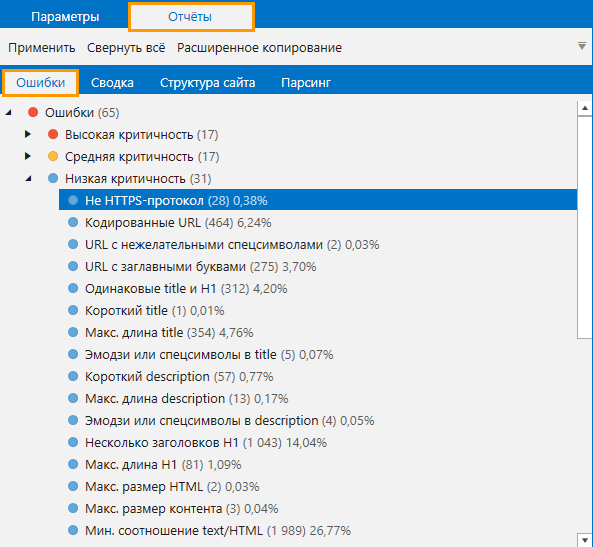

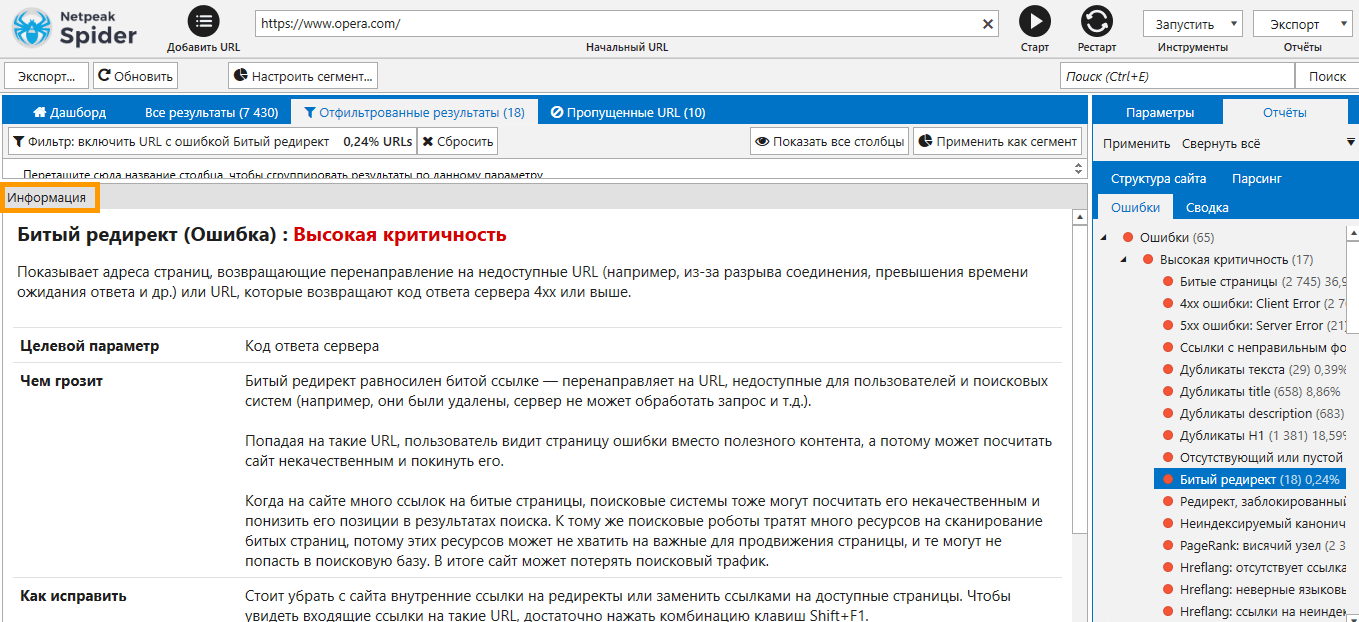

- In the sidebar, open the "Reports" → "Issues" tab. Issues highlighted in red have high severity, yellow ones have medium severity, and blue ones have low severity. Not every low-severity issue has to be fixed, because some of them pose no threat. For example, pages with emoji in the description may not be a problem at all, but if emoji should not be there, the program has a ready-made report for you to work with.

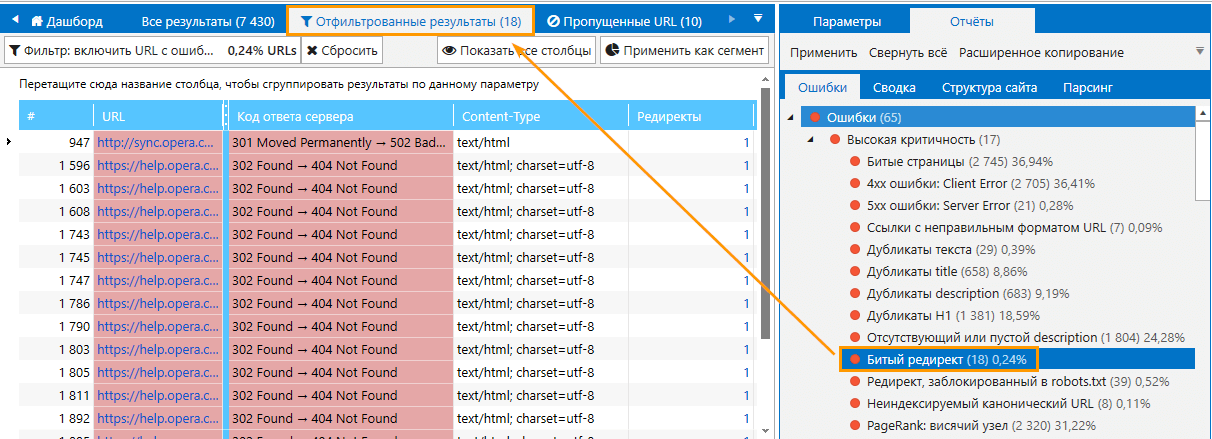

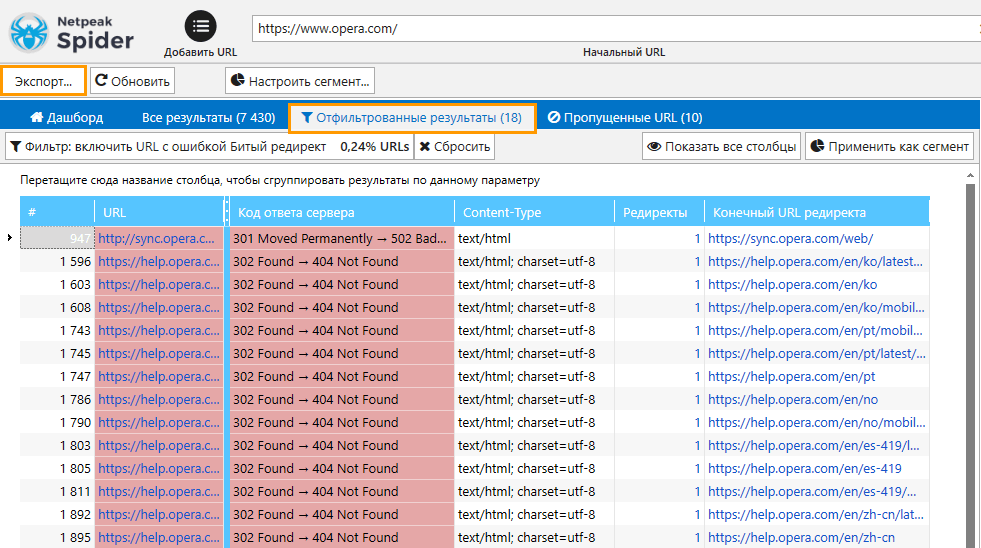

- To see exactly which URLs contain a given issue, click its name. The "Filtered results" tab opens with a list of all affected URLs.

- In the "Info" panel you can read what the issue means, what risk it carries, and how to fix it. To enlarge the panel, drag its edge upward with the left mouse button. Besides the basic information, the panel contains useful links that you can follow straight from the program to learn more about the issue.
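The color coding described above suggests a natural order for working through a crawl: high-severity issues first, then medium, then low. Here is a minimal sketch of that triage in Python. The issue names (apart from the emoji example), the URL counts, and the `triage` helper are invented for illustration and are not output from or part of Netpeak Spider.

```python
# Hypothetical ranking that mirrors the red / yellow / blue color coding.
SEVERITY_ORDER = {"high": 0, "medium": 1, "low": 2}

def triage(issues):
    """Sort (name, severity, url_count) tuples: most severe first,
    and within one severity level the issue affecting more URLs first."""
    return sorted(issues, key=lambda item: (SEVERITY_ORDER[item[1]], -item[2]))

# Invented sample data, not real crawl results.
issues = [
    ("Emoji in description", "low", 12),
    ("Broken links", "high", 3),
    ("Missing description", "medium", 40),
    ("Duplicate title", "high", 7),
]

for name, severity, count in triage(issues):
    print(f"{severity:<6} {count:>3}  {name}")
```

Sorting by URL count within each severity level is only one possible tie-breaker; you might prefer to order by how important the affected pages are.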

Want to crawl a website with Netpeak Spider, filter the data, and export different types of reports? These and other features (analysis of 80+ SEO parameters, built-in tools, integrations with analytics services, scraping, and much more) are available in the Lite plan. If you are not registered on our site yet, you will be able to try the paid features right after signing up.

Check out the plans, get access to the one you like, and start collecting insights!

3. How to export reports from the program

You can export an issue report in the following ways:

- While in the filtered results table, click the "Export" button. This exports all results from the current table.

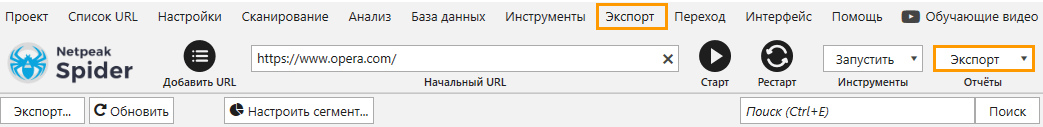

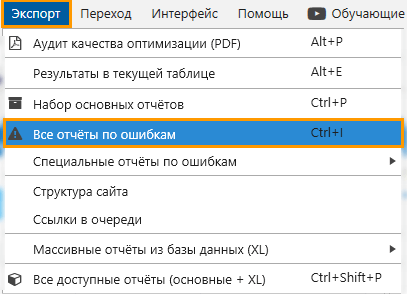

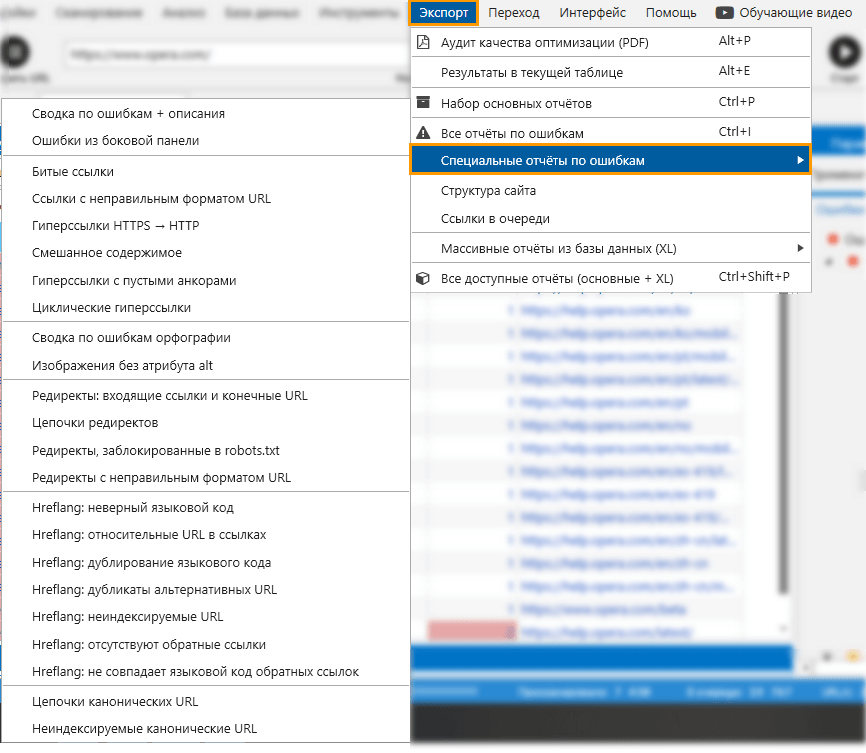

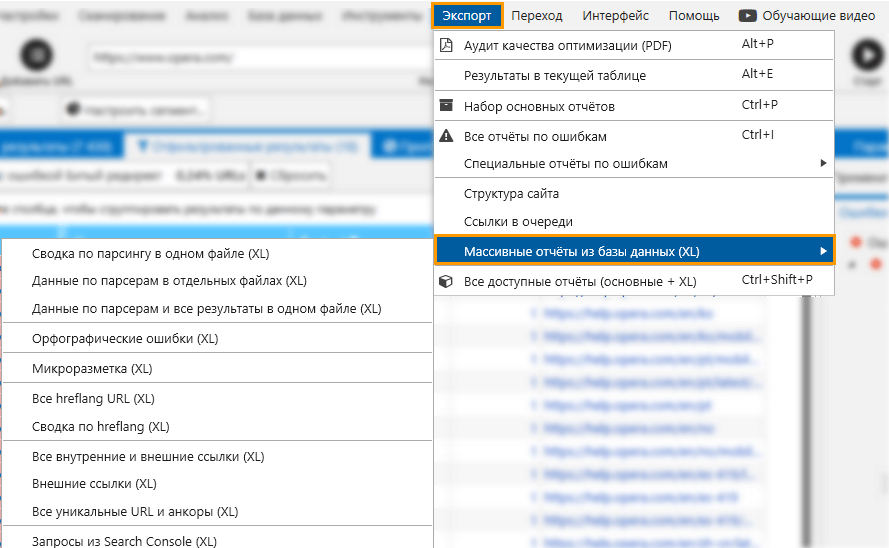

- Open the "Export" menu on the top panel or in the far right corner.

This menu lets you export different kinds of reports. For example, you can export all issue reports at once in a single click.

You can also export special reports for specific issues.

Bulk reports that contain a large amount of data are available as well.

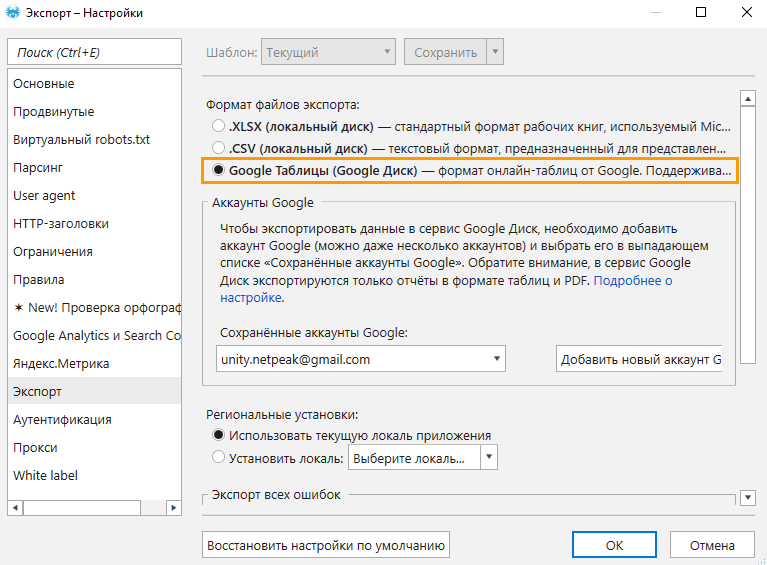

By the way, owners of Netpeak Spider Pro can export reports and crawl results from the program straight to Google Sheets. To do this, go to "Settings" → "Export", add your Google account, and in the "Export file format" section choose "Google Sheets (Google Drive)".

Here are examples of the most popular report types:
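Once a report is exported, you can process it outside the program. The sketch below filters an exported table down to URLs that returned a client or server error. It assumes a CSV file with columns named `URL` and `Status Code`; those column names and the sample rows are assumptions made for illustration, so check the header row of your actual export before reusing it.

```python
import csv
import io

# Stand-in for an exported report. The column names "URL" and "Status Code"
# are assumed for this example; a real export may name them differently.
SAMPLE_EXPORT = """URL,Status Code
https://example.com/,200
https://example.com/old-page,404
https://example.com/blog,301
https://example.com/missing,410
"""

def broken_urls(csv_text):
    """Return URLs whose status code is in the 4xx or 5xx range."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        row["URL"]
        for row in reader
        if row["Status Code"].strip().startswith(("4", "5"))
    ]

print(broken_urls(SAMPLE_EXPORT))
```

To run it on a real file, read the file contents with `open(path, newline="", encoding="utf-8").read()` and pass the text to `broken_urls`.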

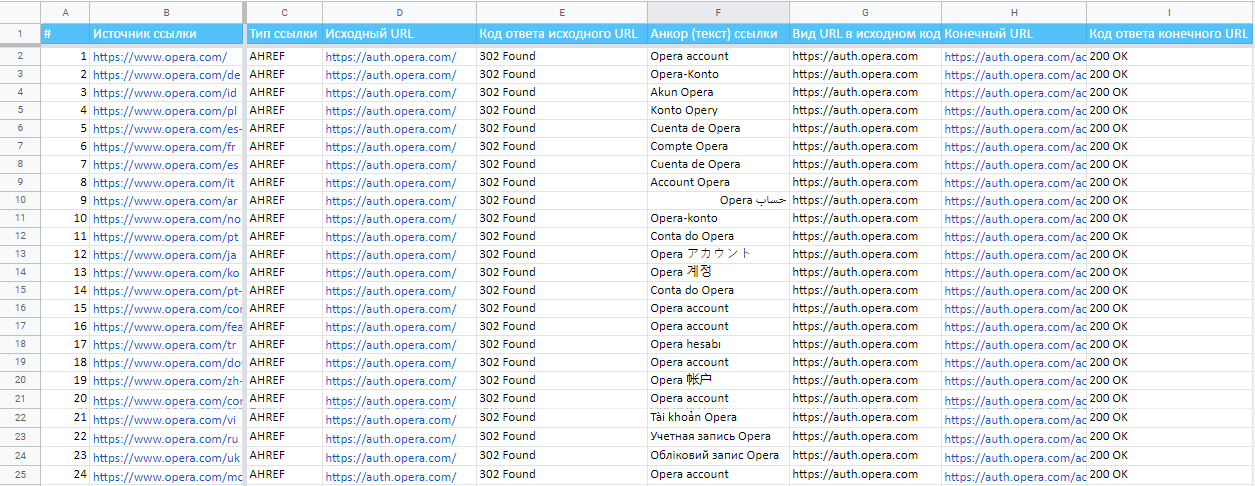

- Redirects: incoming links and final URLs.

Read also: "A guide to redirects: how to detect and set them up".

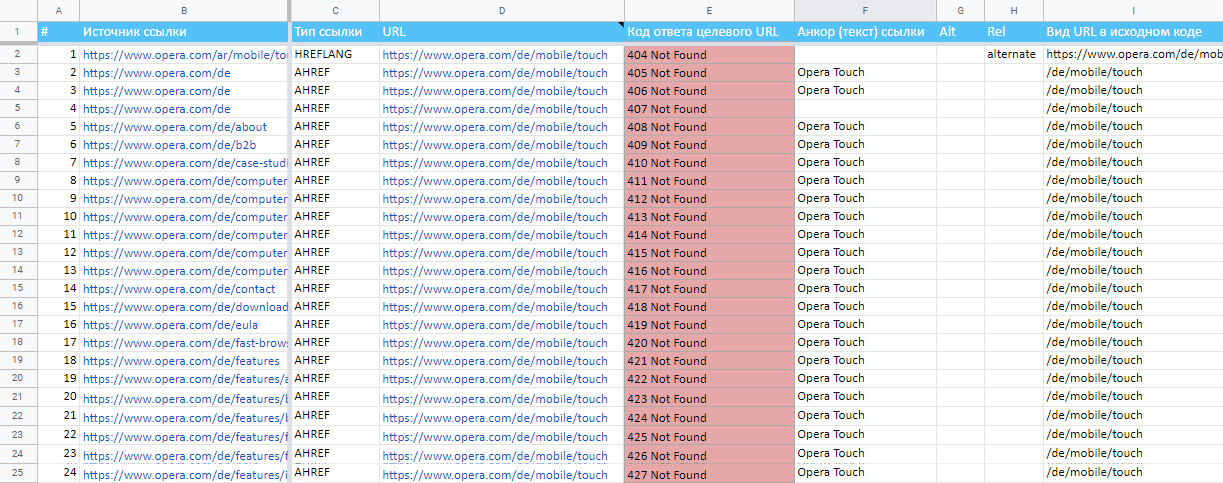

- Broken links.

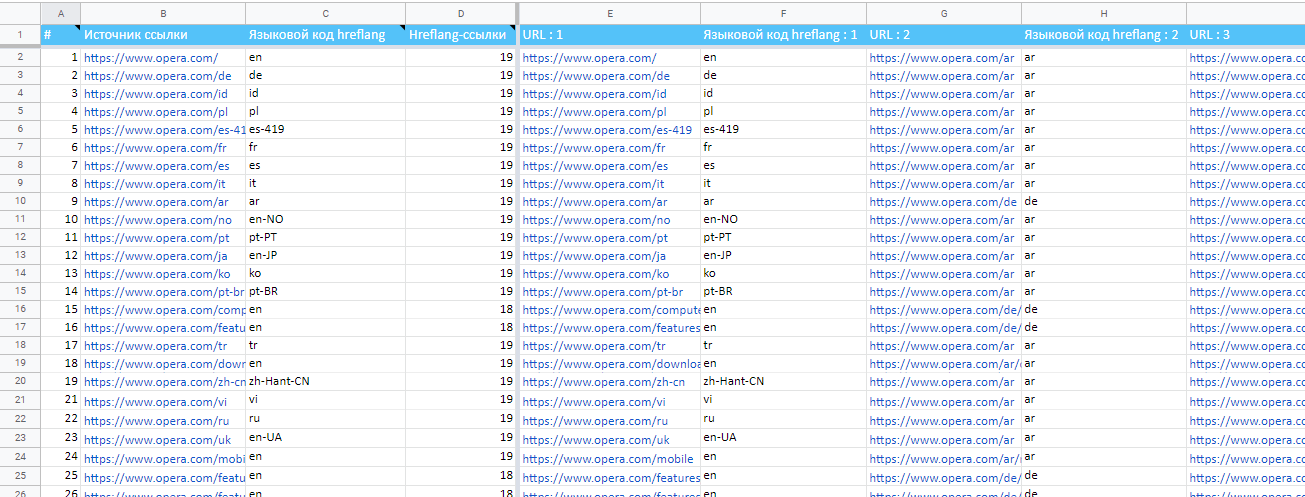

- hreflang summary.

Read also: "hreflang and alternate attributes: why and how to use them".

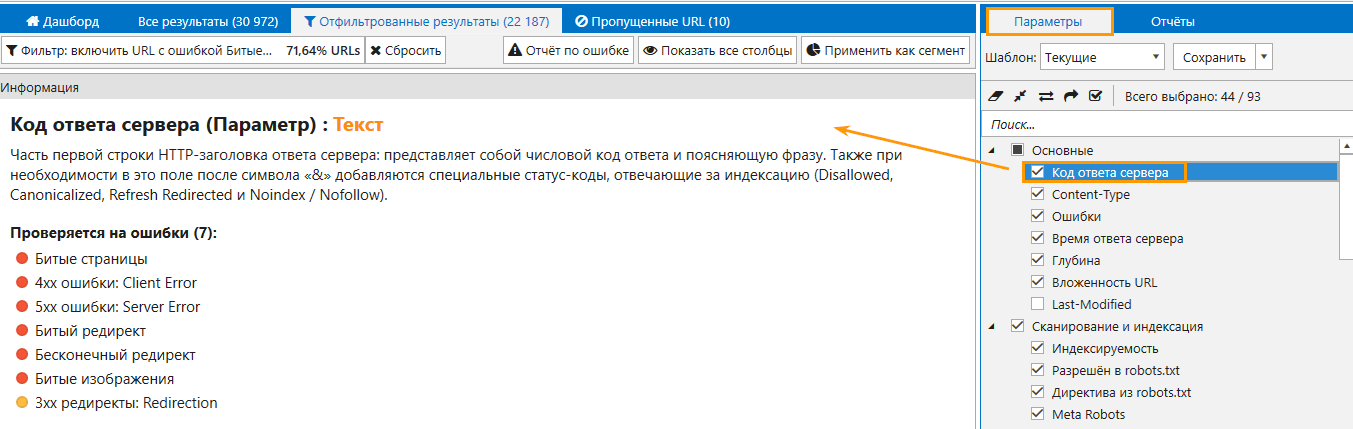
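To make the idea of a "final URL" in a redirect report more concrete, here is a small sketch that follows a chain of redirects until it reaches a URL that no longer redirects. The `resolve_final_url` function, the `max_hops` limit, and the sample mapping are hypothetical and only illustrate the concept; they do not describe how Netpeak Spider works internally.

```python
def resolve_final_url(start, redirects, max_hops=10):
    """Follow redirects from `start` and return (final_url, hops).

    `redirects` maps a source URL to its redirect target.
    Raises ValueError on a redirect loop or an overly long chain.
    """
    seen = {start}
    current = start
    hops = 0
    while current in redirects:
        current = redirects[current]
        hops += 1
        if current in seen or hops > max_hops:
            raise ValueError(f"redirect loop or chain too long at {current}")
        seen.add(current)
    return current, hops

# Invented sample mapping: an http → https redirect followed by a moved page.
redirects = {
    "http://example.com/a": "https://example.com/a",
    "https://example.com/a": "https://example.com/b",
}

print(resolve_final_url("http://example.com/a", redirects))
```

A chain with more than one hop, like the one in this sample, is usually worth shortening so that incoming links point straight at the final URL.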

Note that which issues are detected depends on the parameters you have selected. To find out which parameters are checked for particular issues, use the "Info" panel: click the name of a parameter and open the panel.

Summing up

To crawl a website and check it for issues in Netpeak Spider, you need to take a few simple steps:

- Paste the website address into the "Initial URL" field.

- Start crawling with the "Start" button.

- Wait until crawling has completely finished and open the "Issues" report in the sidebar. Click the name of an issue to see the filtered results: the table lists the URLs where the issue was found.

Still have questions? Ask them in the comments. We will definitely answer and, if needed, update the post 😊