What Segmentation Is in Netpeak Spider and How to Use It

In this post, we take a detailed look at the segmentation feature in Netpeak Spider. Segmentation lets you isolate a specific slice of the crawl data so that you can analyze pages that share common characteristics.

1. What Is Segmentation

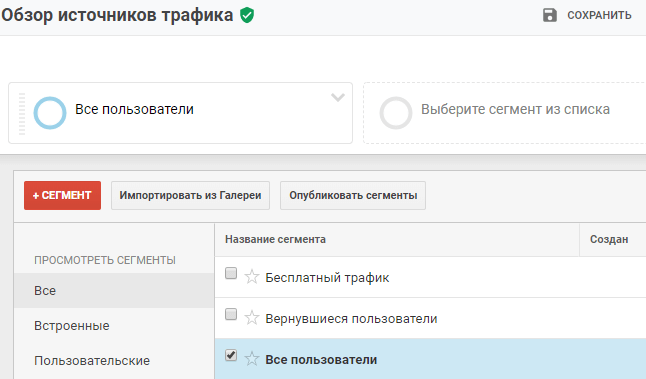

Let's start with a definition. Segmentation limits the data set to the URLs that match the conditions you specify. All reports are then built on that reduced data set, which helps you find valuable insights about your own sites or your competitors' sites. If that sounds abstract, think of segments in Google Analytics.

Segmentation in Netpeak Spider works in a similar way: you segment URLs by the criteria you need and analyze them separately.

2. How to Create a Segment

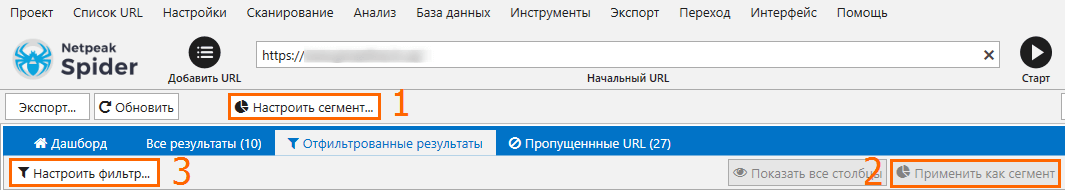

You can create segments in Netpeak Spider in three ways.

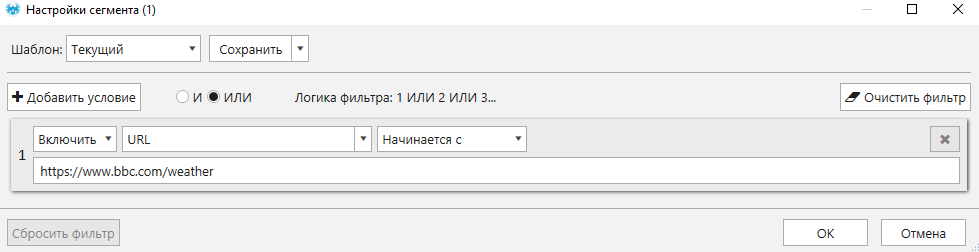

- The first way is to click the "Set segment" button. You set the conditions you need and see the results in the dashboard.

- The second way is to set a filter on the "Filtered results" tab and then click the "Use as segment" button.

- The third way is to open the "Reports" tab in the sidebar. Select the row you need, click it, and then click the "Use as segment" button. From that point on, you work only with the URLs that match the condition.

Tip. You can save your own templates with the issues and parameters you need and reuse them whenever necessary.

3. Examples of Using Segmentation

As I mentioned above, segments come in handy when you need to analyze pages with the same characteristics. In this section, I'll share several use cases for segmentation.

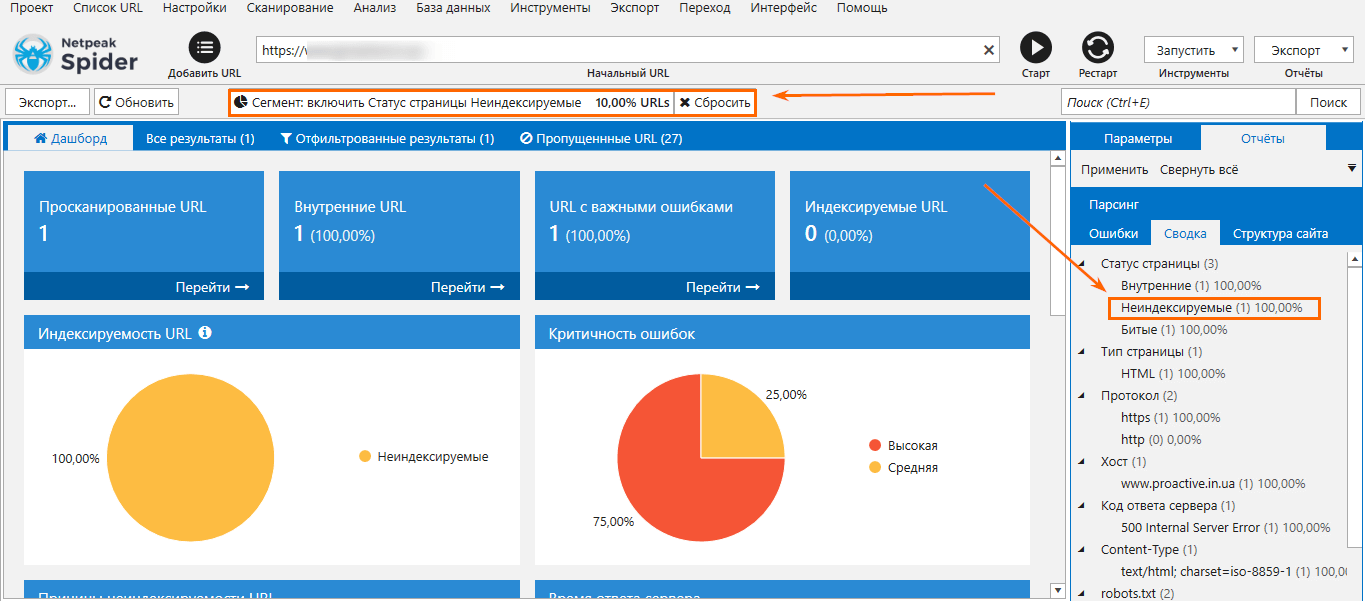

3.1. Segmenting Pages by Status

Two extra letters, "no", accidentally added by a developer to <meta name="robots"> (turning "index" into "noindex") can significantly reduce traffic.

After crawling your site, go to the "Overview" tab and segment pages by status for a deeper analysis. For example, select the non-indexable pages, use them as a segment, and see which types of pages are not indexed and why.
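To make the "two extra letters" problem concrete, here is a minimal Python sketch that checks whether a page's HTML carries a noindex directive in its meta robots tag. It is not how Netpeak Spider works internally; the class and function names are hypothetical and only the standard library is used.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values may be None (e.g. <meta name>), so default to "".
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() != "robots":
            return
        for directive in attr_map.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def is_noindex(html):
    """Return True if the meta robots tag blocks indexing.

    "none" is treated as equivalent to "noindex, nofollow".
    """
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives or "none" in parser.directives


# Hypothetical sample pages: the only difference is the two letters "no".
GOOD_PAGE = '<head><meta name="robots" content="index, follow"></head>'
BROKEN_PAGE = '<head><meta name="robots" content="noindex, follow"></head>'
```

Running `is_noindex` over a list of crawled pages would give a rough equivalent of the non-indexable segment, although a real crawler also has to account for robots.txt, the X-Robots-Tag header, and canonical tags.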

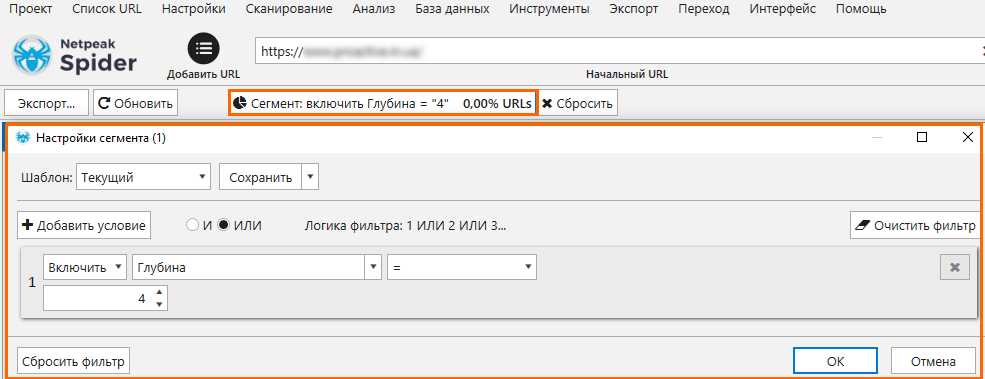

3.2. Segmenting Pages with a Specific Click Depth

A simple site structure is an important aspect of internal linking. Ideally, every page sits 2-3 clicks away from the home page. There are two main reasons for this: user experience and crawl budget. Keep in mind that important pages (for example, landing pages) should have minimal depth.

To analyze pages with a specific click depth, set up a segment, review which types of pages fall into it, and check whether they are indexable.
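If it helps to see what click depth means in practice, the sketch below computes it with a breadth-first search over an internal link graph and then keeps only the pages deeper than a threshold. This is an illustration under stated assumptions, not Netpeak Spider's implementation: the link graph, the URLs, and the function names are all made up.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search: minimum number of clicks from `home` to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def segment_deeper_than(depths, max_depth):
    """Return the URLs whose click depth exceeds `max_depth`."""
    return sorted(url for url, depth in depths.items() if depth > max_depth)


# Hypothetical internal link graph: page -> pages it links to.
SITE_LINKS = {
    "/": ["/catalog", "/blog"],
    "/catalog": ["/catalog/shoes"],
    "/catalog/shoes": ["/catalog/shoes/item-1"],
    "/catalog/shoes/item-1": ["/catalog/shoes/item-1/reviews"],
    "/blog": ["/"],
}

depths = click_depths(SITE_LINKS, "/")
too_deep = segment_deeper_than(depths, 3)
```

Here `too_deep` contains only the reviews page, which is four clicks from the home page. Pages that are never linked from the home page do not appear in `depths` at all, which is worth checking separately.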

3.3. Segmenting Page Types by URL Part and Using the Site Structure

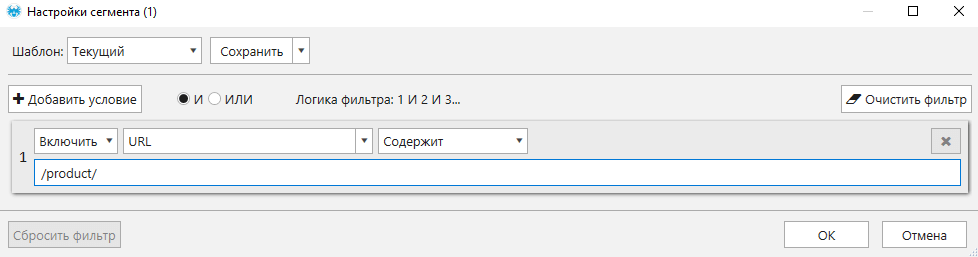

This segment can be useful when you analyze an e-commerce project. Say you want to check the server response time of product pages. Let's set a segment for URLs that contain the corresponding part.

Without the segment, the results include not only product pages but also category pages, filter pages, and so on. With the segment applied, you can analyze response time for product pages only.
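The same idea can be sketched outside the tool, for example on exported crawl data. The snippet below keeps only the rows whose URL path contains a given part and averages their response time. The rows, the field names `url` and `response_ms`, and the `/product/` path are assumptions made for the example, not the format of a Netpeak Spider export.

```python
from statistics import mean
from urllib.parse import urlsplit

# Hypothetical crawl results; field names are assumptions for this sketch.
CRAWL_ROWS = [
    {"url": "https://shop.example/product/red-shoes", "response_ms": 420},
    {"url": "https://shop.example/product/blue-hat", "response_ms": 380},
    {"url": "https://shop.example/category/shoes", "response_ms": 150},
    {"url": "https://shop.example/category/shoes?color=red", "response_ms": 210},
]


def segment_by_url_part(rows, part):
    """Keep rows whose URL path (not the query string) contains `part`."""
    return [row for row in rows if part in urlsplit(row["url"]).path]


product_rows = segment_by_url_part(CRAWL_ROWS, "/product/")
average_ms = mean(row["response_ms"] for row in product_rows)
```

Matching against the path rather than the whole URL avoids false positives when the same string happens to appear in a query parameter.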

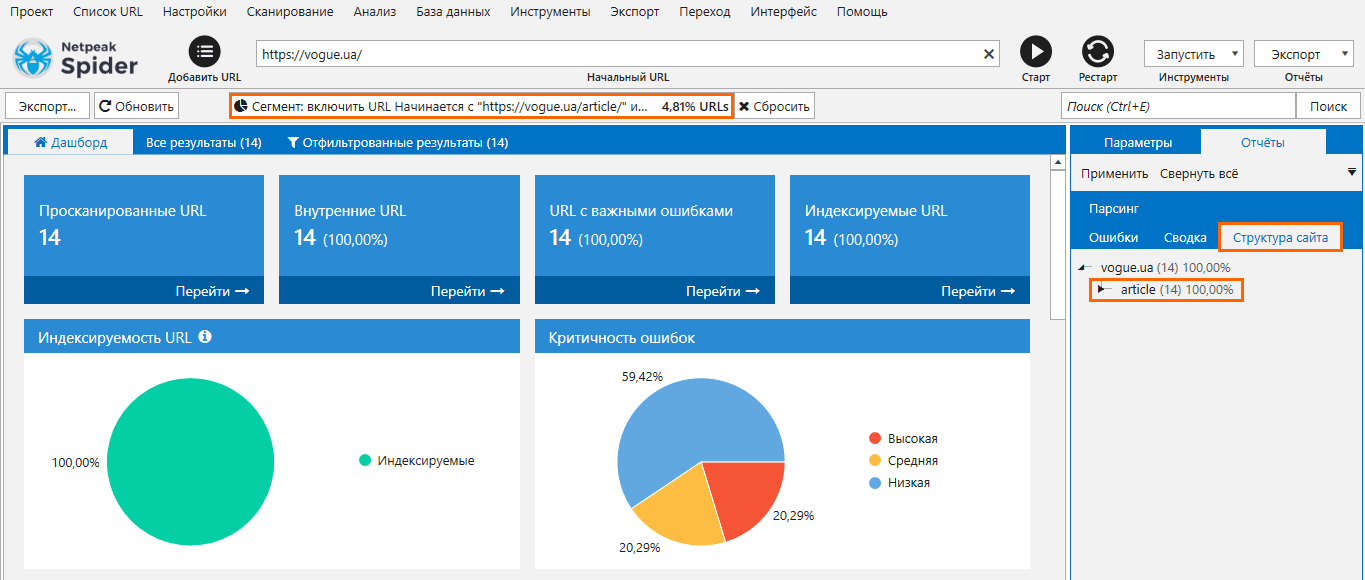

To make analyzing a particular part of the site even easier, use the "Site structure" tab. It is a fairly obvious but very useful application of segmentation: if you need to analyze a specific category on your site, open the "Site structure" tab and use that category as a segment.

In the example below, we crawled a site. To learn more about the articles section, you can simply click it and use it as a segment. After that, you can analyze the results for that part of the site only.

3.4. Segmenting Pages by Word Count

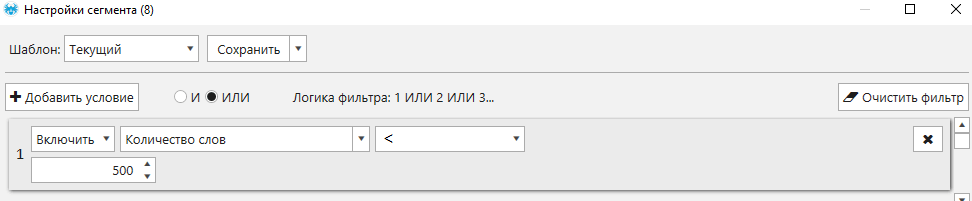

This example helps you find indexable pages with thin content. To do so, set up a segment that combines two conditions: the page is indexable, and its word count is low.
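As a rough illustration of that two-condition segment, the sketch below counts the words in a page's visible text and keeps the indexable pages that fall under a threshold. How Netpeak Spider counts words is not described in this post, so the counting rule here (whitespace-separated tokens outside script and style tags) is an assumption, as are the names and the sample data shape.

```python
from html.parser import HTMLParser


class VisibleTextCounter(HTMLParser):
    """Counts whitespace-separated words outside <script> and <style>."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.word_count = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.word_count += len(data.split())


def count_words(html):
    counter = VisibleTextCounter()
    counter.feed(html)
    counter.close()  # flush any text still buffered by the parser
    return counter.word_count


def thin_indexable_pages(pages, max_words):
    """URLs of pages that are indexable AND have fewer than `max_words` words."""
    return [
        page["url"]
        for page in pages
        if page["indexable"] and count_words(page["html"]) < max_words
    ]
```

Both conditions are joined with a logical AND, so a short page that is already closed from indexing is left out of the result.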

3.5. Segmenting Pages by Content Type (HTML, Image, JS, CSS, PDF)

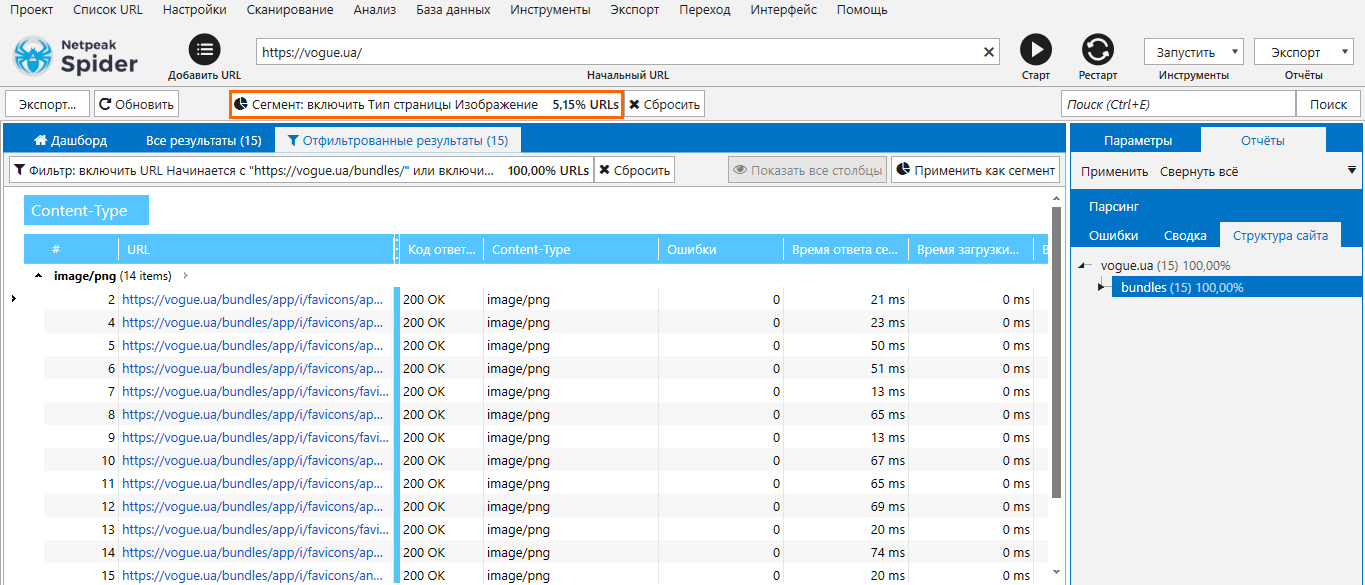

This is a small life hack for analyzing the different content types on your site. If you enable checking of other page types on the "General" tab before crawling, it becomes easy to segment the data by content type and check for issues accordingly.

In the example below, we use "Images" as a segment, so the results cover only the images on our site, and the information we need is easy to analyze and export.

Tip. After segmenting, open the "Site structure" tab and make sure all images are stored in the right place.

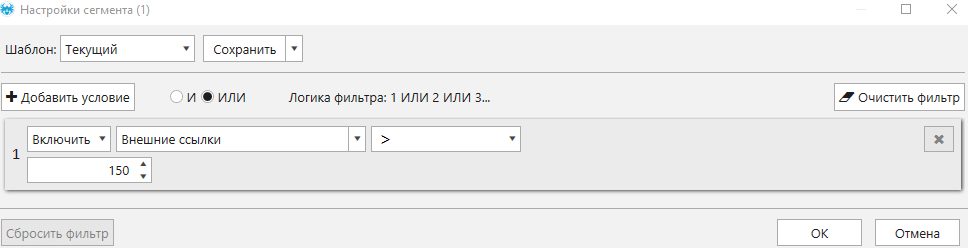

3.6. Segmenting Pages with an Excessive Number of External Links

With this segment, you can find pages with an excessive number of external links. Such pages can look suspicious and distribute PageRank unevenly. Just set a segment with the parameters you need to make sure your site has no such pages.
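For readers who want to see what counting external links involves, here is a small sketch that collects the links on a page and counts those pointing to a different host. It is a simplification under stated assumptions: subdomains count as external, only http and https links are considered, and the names are hypothetical rather than taken from Netpeak Spider.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collects the href values of all <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def count_external_links(page_url, html):
    """Count http(s) links whose host differs from the host of `page_url`."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    page_host = urlsplit(page_url).hostname
    external = 0
    for href in collector.hrefs:
        # Resolve relative links against the page URL before comparing hosts.
        target = urlsplit(urljoin(page_url, href))
        if target.scheme in ("http", "https") and target.hostname != page_host:
            external += 1
    return external


# Hypothetical page with one internal relative link, one external link,
# one mailto link, and one internal absolute link.
SAMPLE_HTML = (
    '<a href="/about">About</a>'
    '<a href="https://other.example.org/x">Partner</a>'
    '<a href="mailto:hello@shop.example">Mail</a>'
    '<a href="https://shop.example/contacts">Contacts</a>'
)
```

A segment for "too many external links" is then just a threshold on this count; what counts as excessive depends on the site and is left to you.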

Filtering and segmentation are available on the Netpeak Spider Lite plan, which also lets you analyze 80+ SEO parameters, scrape sites, export a wide range of reports, save projects, and more. If you are not yet registered on our site, you will be able to try the paid features right after registration.

Check out the plans, subscribe to the one you like, and go get some great insights!

Summing Up

Segmentation can be a useful tool for analyzing large amounts of data. With Netpeak Spider, you can not only crawl large sites and detect issues but also segment the results according to your needs. The tool lets you define a segment with flexible settings and sort pages by them. In addition, you can use any parameter from the "Reports" tab as a segment.

I described several segmentation examples to make your work easier: how to filter pages by content type, status, click depth, word count, URL part, and number of outgoing links.

How do you use this feature in your work? Share your examples in the comments below, and we'll add them to the post :)