Types of Audits You Can Run with Netpeak Spider


Some Netpeak Spider settings that help solve a particular range of tasks more efficiently are not obvious. This post covers the types of SEO site audits that address different specific tasks and let you produce a variety of reports for a single project.

- 1. Quick audit "through the eyes of a search bot"

- 2. Crawl budget optimization audit

- 3. Audit at the start of promotion

- 4. Pre-sale audit

- 5. External links audit

- 6. Audit after migrating the site to HTTPS

- 7. Speed audit

- 8. Site load audit

- 9. Metadata audit

- 10. Image and media file audit

- 11. Audit of a giant site

- 12. Multilingual site audit

- 13. Audit of a group of sites

- 14. Internal linking audit

- 15. Optimization priority audit

- Summing up

1. Quick audit "through the eyes of a search bot"

This audit shows which pages a search bot will analyze first, so you know which errors, and on which pages, to fix first. I will show how to run each type of audit.

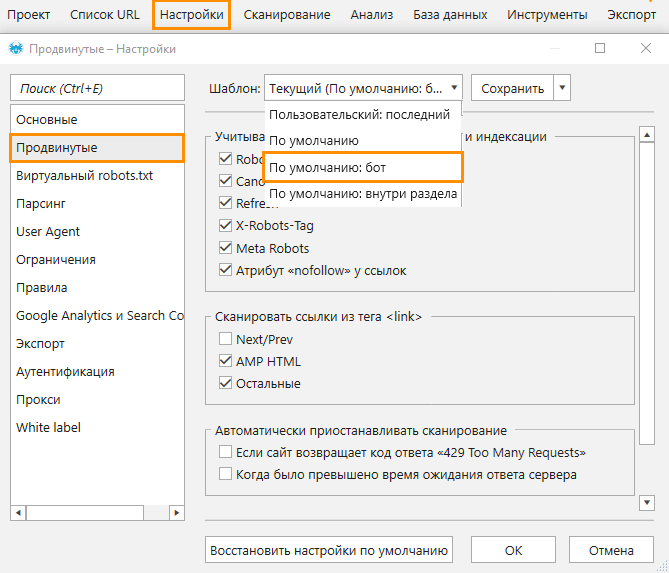

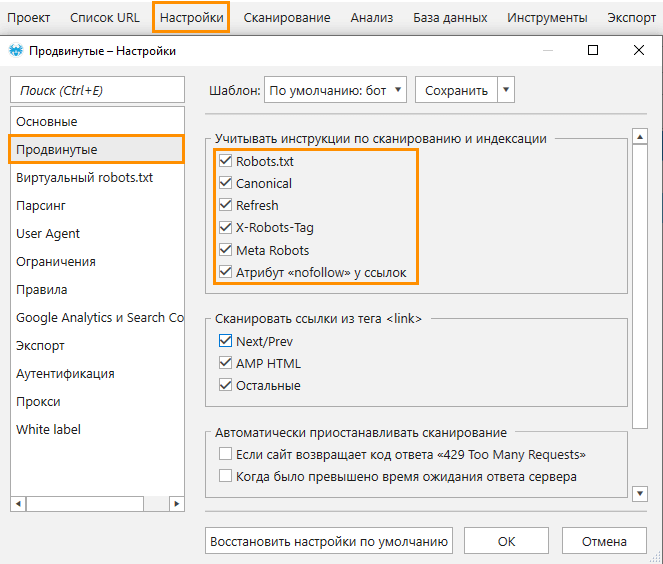

Для начала необходимо включить настройки, которые позволят программе имитировать поведение бота:

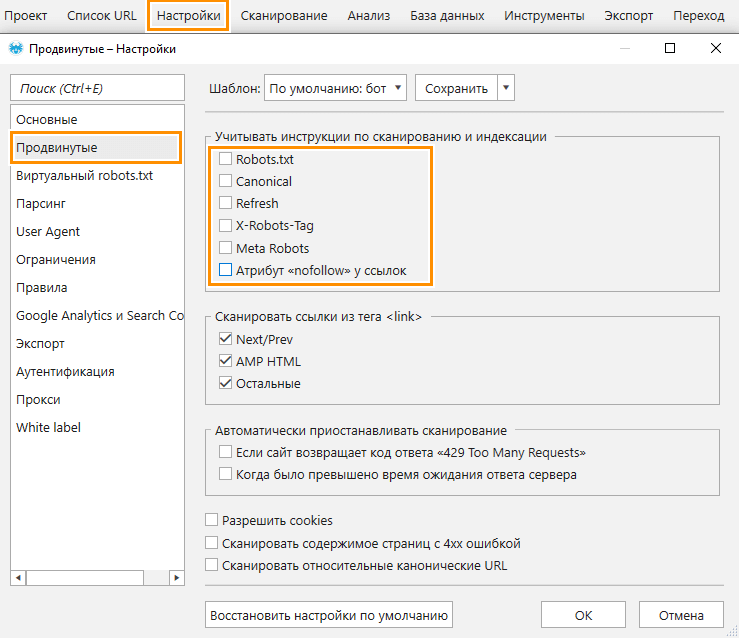

- В продвинутых настройках выберите шаблон «По умолчанию: бот», который активирует учёт всех инструкций по сканированию и индексации.

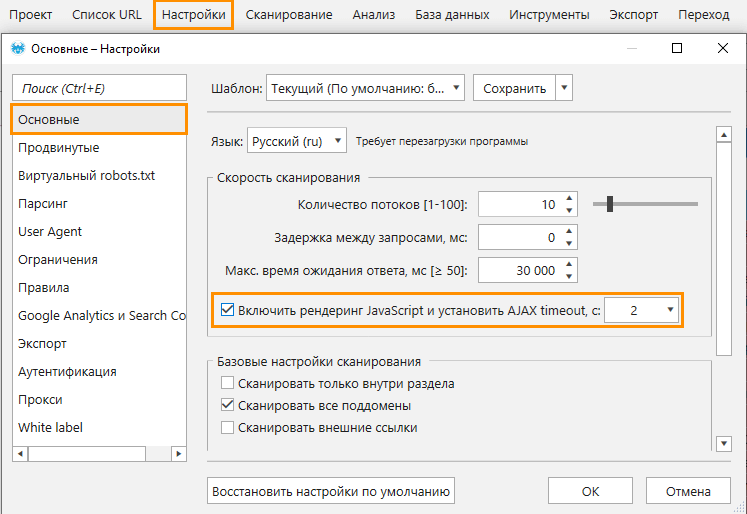

- Если на страницах сканируемого сайта используется JavaScript для отображения части контента, или это SPA-сайт, на вкладке «Основные» включите рендеринг JavaScript.

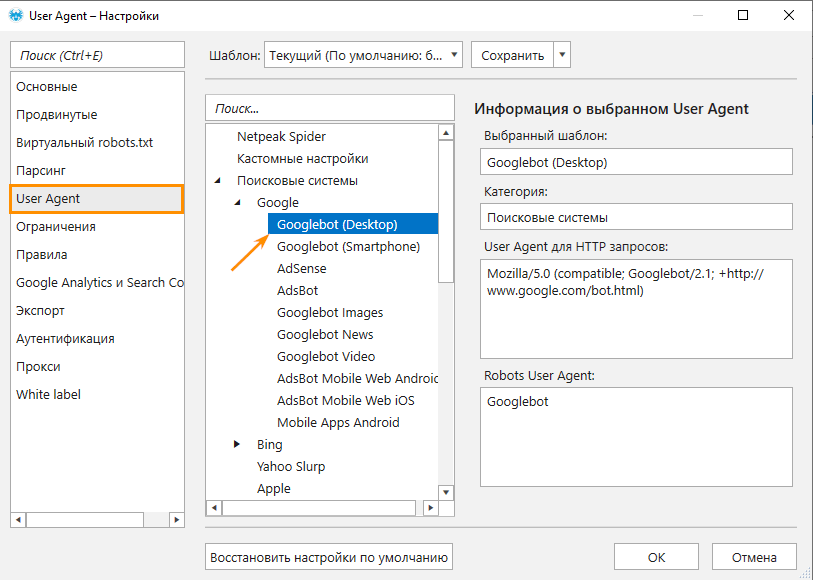

- На вкладке «User Agent» выберите бота поисковой системы, под которую вы продвигаете сайт, например: «Yandex Bot», «Bingbot», «Googlebot» и т.д.

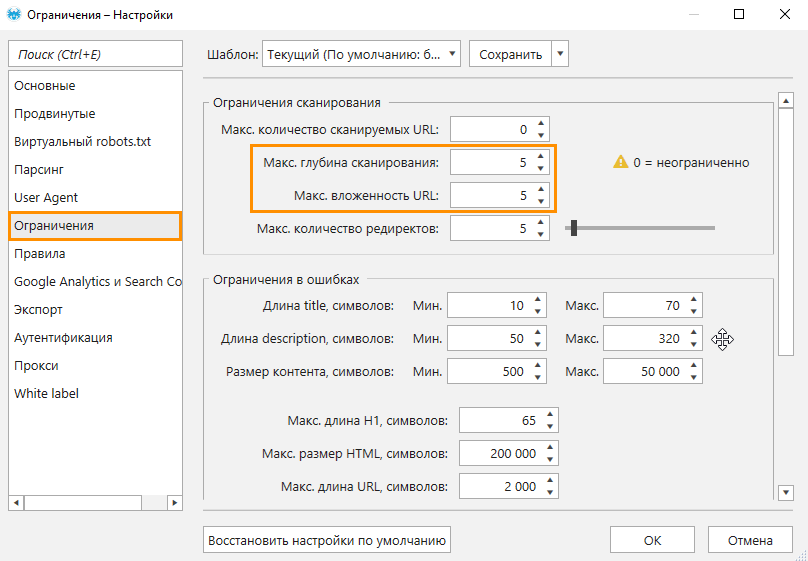

- Поисковые боты не всегда сканируют сайт целиком за одну сессию, особенно если это крупный сайт. Поэтому задаём ограничение по глубине и вложенности сканирования.

Когда все настройки выставлены, запустите сканирование. По окончании в основной таблице вы увидите только те страницы, которые потенциально могут находиться в индексе поисковых систем.

Полученные данные можно дополнить страницами из карты сайта. Для этого воспользуйтесь инструментом «Валидатор XML Sitemap». Также есть возможность дополнить отчёт данными из Google Analytics и Search Console по трафику, кликам и показам, что поможет определить:

- есть ли индексируемые страницы, не получающие трафик, клики или показы;

- есть ли неиндексируемые страницы, которые получают трафик, клики или показы.

Netpeak Spider не допускает наличие одинаковых URL в основной таблице. Если в GA, GSC или карте сайта содержатся страницы, которые есть в таблице, они не перенесутся, а вместо них будут выгружаться только не найденные в ходе сканирования. Этому могут послужить следующие причины:

- Ссылки на эти страницы не видны поисковому боту. Например, если ссылки подгружаются при нажатии на определённую кнопку.

- На сайте нет ссылок на эти страницы.

- Ссылки находятся глубоко от начальной страницы. В этом случае необходимо правильно оптимизировать краулинговый бюджет, и в этом вам поможет пример аудита сайта, который рассмотрим ниже.
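The comparison described in this section can be sketched in a few lines of Python once the crawl results and the GA / GSC data are exported. This is only an illustration: the input shapes (a `crawled` mapping of URL to indexability and a `traffic` mapping of URL to sessions) and the function name are assumptions, not the column names or API of an actual Netpeak Spider export.

```python
def compare_crawl_with_traffic(crawled, traffic):
    """Compare crawl results with analytics data.

    crawled: dict mapping URL -> True if the page is indexable (hypothetical shape).
    traffic: dict mapping URL -> number of sessions, clicks, or impressions.
    """
    # Indexable pages that bring no traffic at all.
    indexable_without_traffic = sorted(
        url for url, indexable in crawled.items()
        if indexable and traffic.get(url, 0) == 0
    )
    # Non-indexable pages that still receive traffic.
    non_indexable_with_traffic = sorted(
        url for url, indexable in crawled.items()
        if not indexable and traffic.get(url, 0) > 0
    )
    # URLs known to analytics but never reached by the crawler.
    missed_by_crawl = sorted(set(traffic) - set(crawled))
    return indexable_without_traffic, non_indexable_with_traffic, missed_by_crawl


crawled = {
    "https://example.com/": True,
    "https://example.com/a": True,
    "https://example.com/b": False,
}
traffic = {
    "https://example.com/": 120,
    "https://example.com/b": 15,
    "https://example.com/hidden": 4,
}
no_traffic, unexpected_traffic, missed = compare_crawl_with_traffic(crawled, traffic)
print(no_traffic, unexpected_traffic, missed)
```

The third list corresponds to the pages that the program adds from GA, GSC, or the sitemap because the crawl did not find them.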

2. Аудит для оптимизации краулингового бюджета

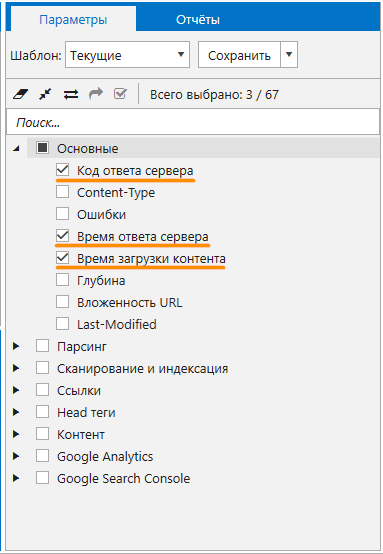

В этом типе аудита применяется сегментация, а настройки перед сканированием вы можете задать на своё усмотрение, главное включить следующие параметры:

- «Код ответа сервера»,

- чекбокс «Сканирование и индексация»,

- чекбокс «Ссылки»,

- «Глубина».

После сканирования вы проверьте наличие / отсутствие таких проблем:

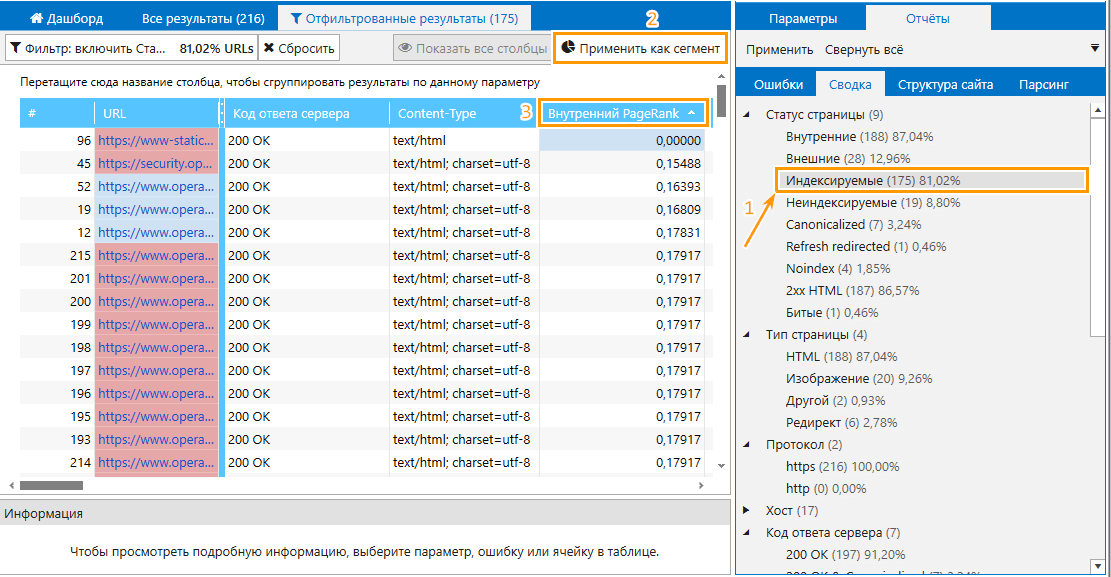

- Индексируемые страницы с низким значением PageRank. Индексируемые страницы должны получать больше ссылочного веса, чем неиндексируемые, так как первые потенциально могут приносить трафик на сайт. Следовательно, вам необходимо обнаружить страницы, которые получают недостаточное количество ссылочного веса и проставить больше внутренних ссылок на них.

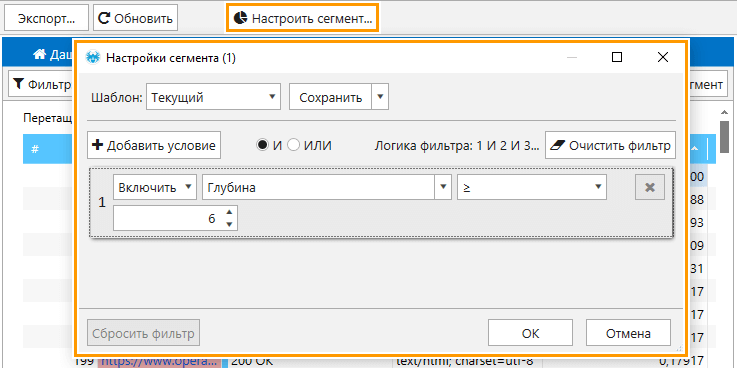

Обнаружить такие страницы можно с помощью сегмента и сортировки значения PageRank по возрастанию, как это представлено на скриншоте.

- Неиндексируемые страницы с большим PageRank.

- Страницы, которые находятся на глубине больше значения «5» от главной страницы. С помощью сегмента по глубине вы сможете узнать, какие важные страницы находятся далеко от начальной.

- Страницы, не получающие ссылочный вес.
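The four segments above amount to simple filters over the crawl table. The sketch below assumes the data has been exported into records with hypothetical fields (`indexable`, `pagerank`, `depth`, `incoming_links`); the PageRank thresholds are arbitrary illustration values, and only the depth limit of 5 comes from the text.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    indexable: bool
    pagerank: float
    depth: int
    incoming_links: int


def crawl_budget_segments(pages, low_pr=0.1, high_pr=1.0, max_depth=5):
    """Split pages into the problem segments of a crawl budget audit.

    low_pr and high_pr are assumed thresholds; tune them per site.
    """
    by_pagerank = sorted(pages, key=lambda p: p.pagerank)  # ascending PageRank
    return {
        "indexable_low_pagerank": [
            p.url for p in by_pagerank if p.indexable and p.pagerank < low_pr
        ],
        "non_indexable_high_pagerank": [
            p.url for p in by_pagerank if not p.indexable and p.pagerank >= high_pr
        ],
        "too_deep": [p.url for p in pages if p.depth > max_depth],
        "no_incoming_links": [p.url for p in pages if p.incoming_links == 0],
    }


pages = [
    Page("/", True, 3.0, 0, 10),
    Page("/cat", True, 0.05, 2, 1),
    Page("/cart", False, 2.0, 1, 8),
    Page("/deep", True, 0.5, 7, 1),
    Page("/orphan", True, 0.0, 3, 0),
]
for name, urls in crawl_budget_segments(pages).items():
    print(name, urls)
```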

Также рекомендую обратить внимание на ссылки, ведущие на страницы с редиректом, они также негативно влияют на краулинговый бюджет.

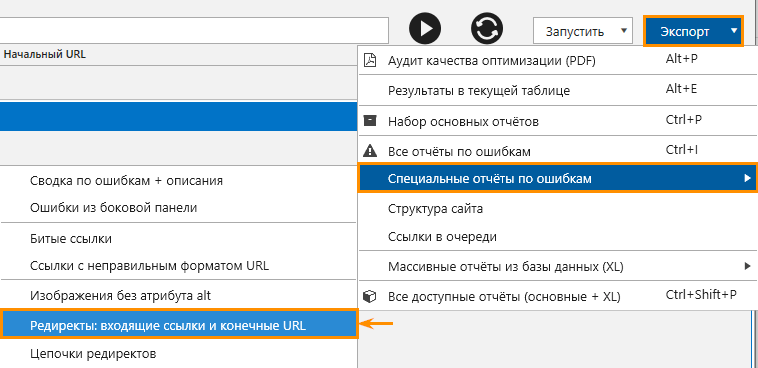

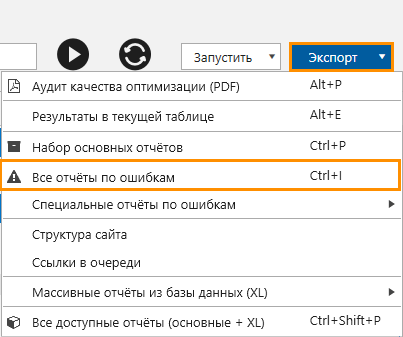

Выгрузить подробный отчёт по редиректам можно в меню «Экспорт».

Как и в предыдущем аудите вы можете дополнить данные выгрузкой страниц из карты сайта, чтобы найти те, которые не были обнаружены в ходе сканирования.

3. Аудит на старте продвижения

Этот вид технического аудита сайта является противоположностью аудита «глазами поискового бота», так как на начальном этапе продвижения любого проекта важно дать сильный толчок позициям, а для этого необходимо найти максимум ошибок оптимизации.

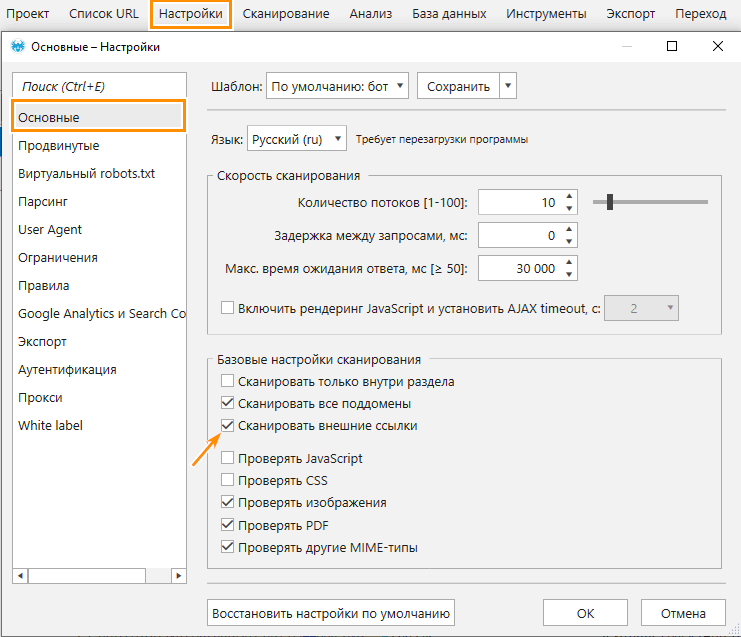

Для этого задайте следующие настройки:

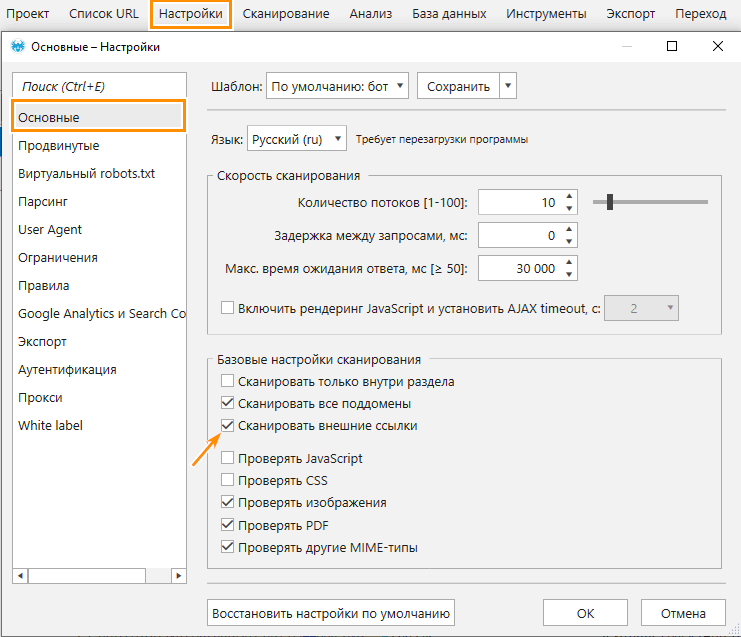

- Включите сканирование внешних ссылок.

- Отключите учёт инструкций по сканированию и индексации.

- Сбросьте ограничения и правила (если заданы).

- Включите все параметры.

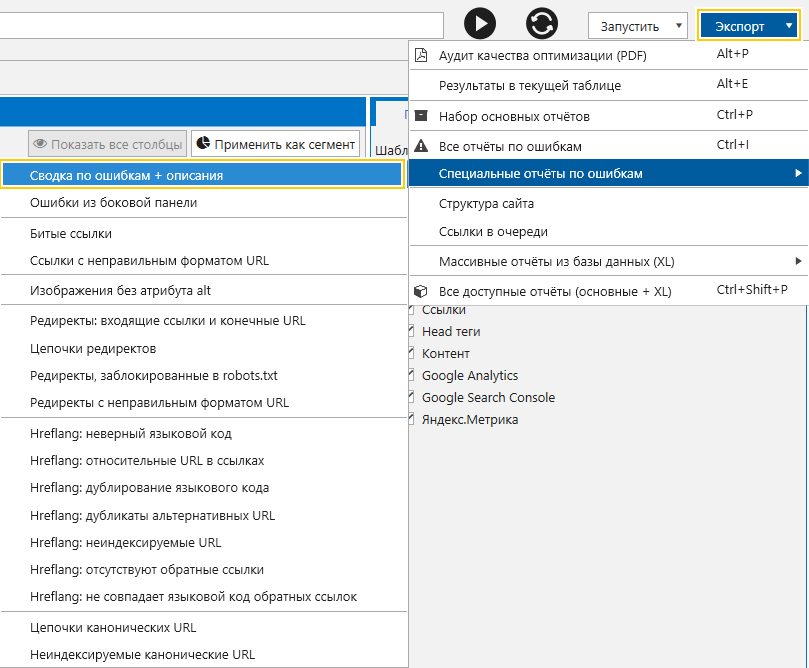

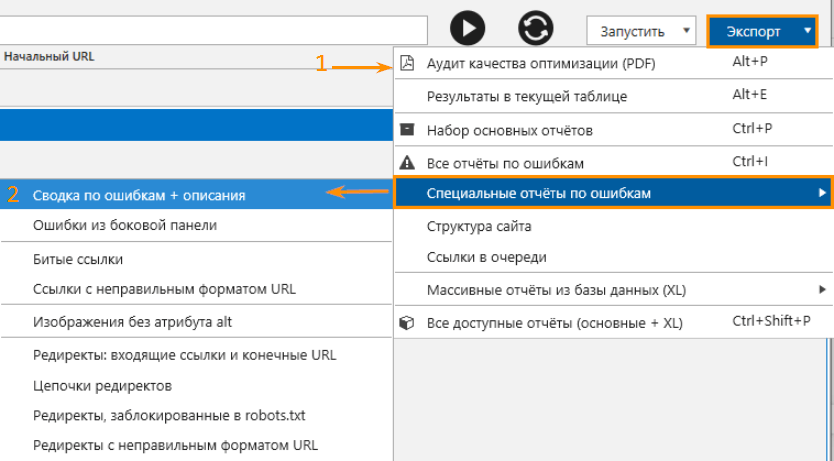

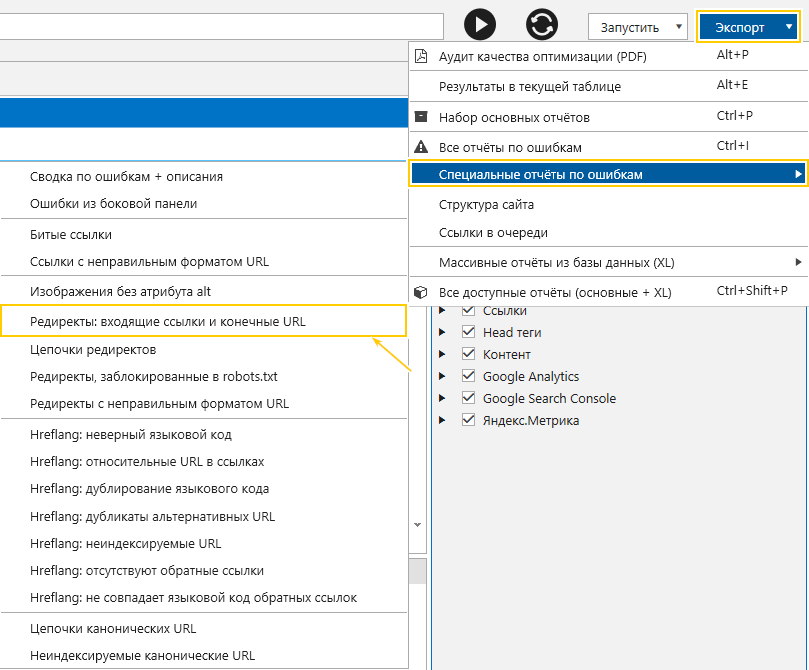

С учётом таких настроек программа проверит страницы сайта на все доступные SEO-ошибки. Чтобы выгрузить все отчёты по найденным ошибкам, воспользуйтесь меню «Экспорт».

Для качественного SEO-аудита на старте продвижения важно в первую очередь сосредоточиться на исправлении:

- битых ссылок,

- некорректного содержимого в теге Canonical,

- дубликатов контента (Title, Description, контент в теге <body>),

- отсутствия содержимого в тегах Title и Description,

- низкой скорости отклика страниц,

- ссылок на внутренние страницы с редиректом.

Отчёты по этим ошибкам находятся в списке «Специальные отчёты по ошибкам» в меню «Экспорт». Кстати, отчёт «Сводка по ошибкам + описания» поможет составить техническое задание по их устранению для разработчиков. При необходимости экспортируйте этот отчёт.

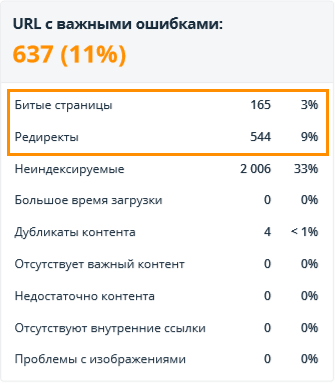

4. Pre-sale audit

If you need to quickly and convincingly show a client the main optimization errors on a site and give recommendations for fixing them, you will find these useful:

- the White Label report;

- the issue overview, which lists every error found with its description and recommendations for fixing it.

Both reports are in the "Export" menu.

For a pre-sale audit, use the settings from the previous section so that the program crawls all pages of the site and collects as many errors as possible.

5. External links audit

Optimizing internal pages matters, but do not forget about external links. This audit helps you determine whether the site has low-quality external links, namely:

- broken links;

- links to pages with a slow server response and a long content download time;

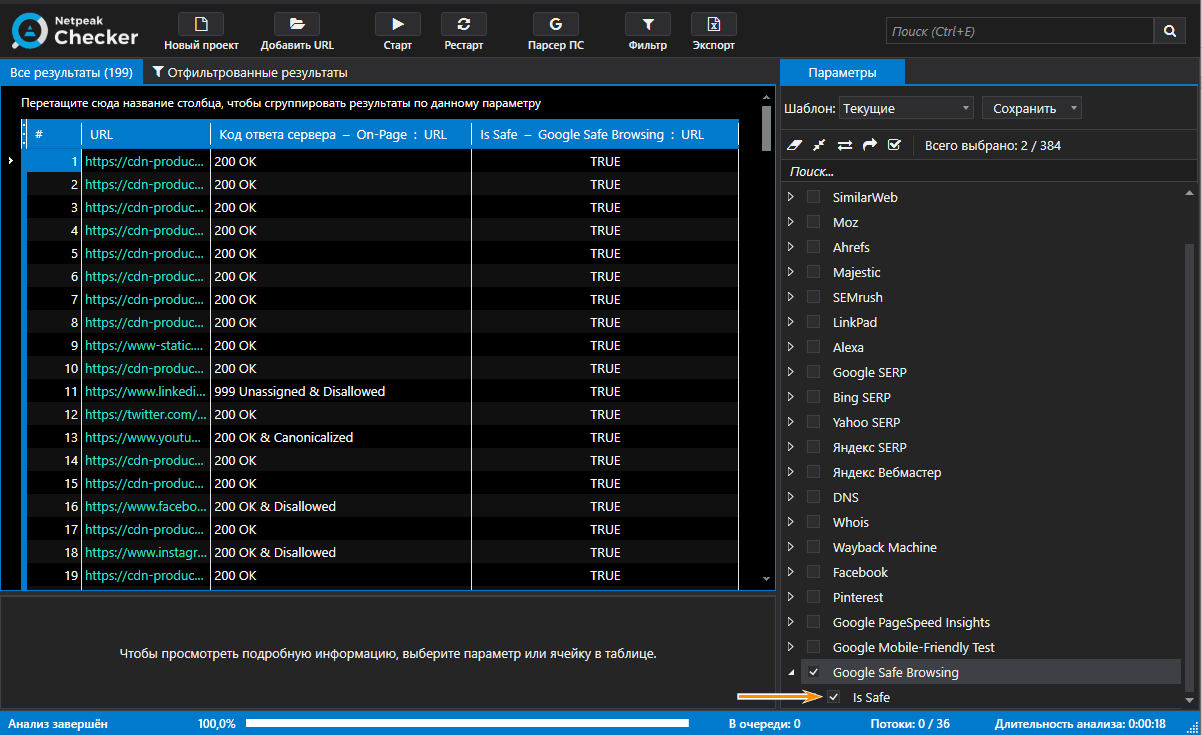

- ссылки на некачественные сайты или на сайты с вредоносными ресурсами (проверка выполняется с помощью Netpeak Checker).

Для такого вида аудита в Netpeak Spider:

- Включите сканирование внешних ссылок.

- Отметьте параметры «Код ответа сервера», «Время ответа сервера» и «Время загрузки контента».

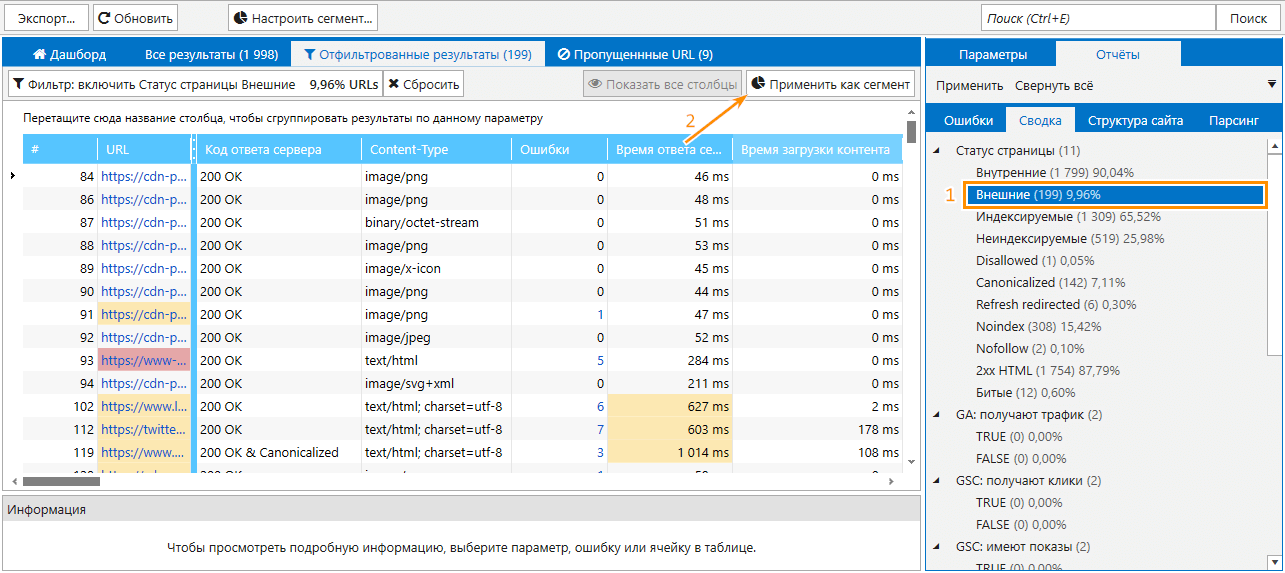

По окончании сканирования задайте сегмент по внешним ссылкам.

В результате вы получите список всех внешних ссылок и отчёты по ошибкам, найденным на страницах, на которые ссылается ваш сайт.

Вы также можете сохранить этот проект и открыть его в Netpeak Checker, чтобы убедиться, что ни одной из этих страниц нет в «чёрном списке» Google. Это делается с помощью сервиса Google Safe Browsing.


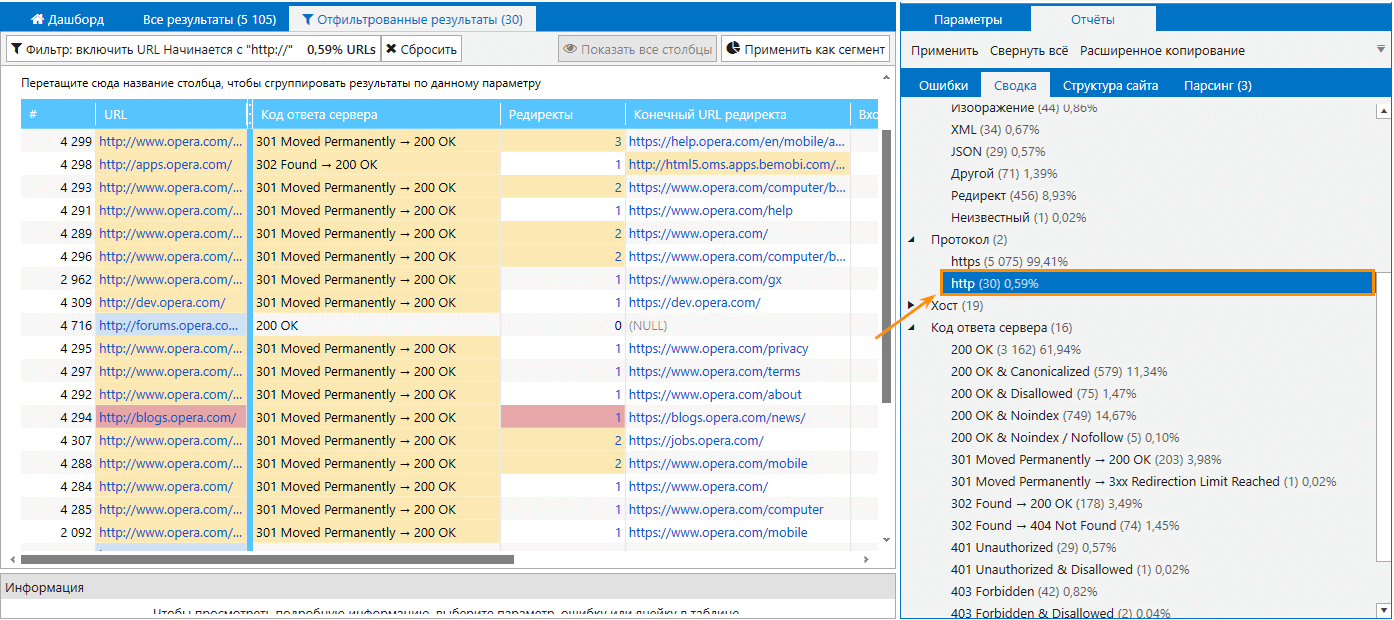

6. Аудит после переезда сайта на HTTPS-протокол

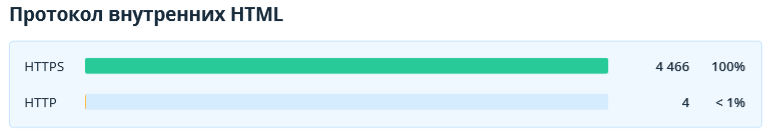

При переезде с HTTP на HTTPS-протокол важно убедиться, что на сайте не осталось HTTP-ссылок, заданы прямые ссылки на HTTPS без редиректов, и отсутствует смешанный контент.

Перед сканированием включите следующие параметры:

- «Код ответа сервера»,

- «Входящие ссылки»,

- «Редиректы»,

- «Конечный URL редиректа»,

- «Хеш-страницы».

Необходимо также задать регулярные выражения в качестве пользовательских параметров для поиска смешанного контента. Сделать это можно с помощью парсинга.
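As an illustration of what such regular expressions might look like, here is a small Python sketch that finds resources loaded over plain HTTP in a page's HTML. The patterns are an assumption written for this example, not expressions taken from Netpeak Spider, and regex matching of HTML is only an approximation; ordinary `<a href="http://…">` links are deliberately ignored because they are not mixed content.

```python
import re

# Tags that load a subresource through the src attribute.
SRC_RE = re.compile(
    r'<(?:script|img|iframe|source|audio|video|embed)\b[^>]*?\ssrc\s*=\s*["\'](http://[^"\']+)',
    re.IGNORECASE,
)
# <link> tags (stylesheets, icons, and so on) use href instead of src.
LINK_RE = re.compile(
    r'<link\b[^>]*?\shref\s*=\s*["\'](http://[^"\']+)',
    re.IGNORECASE,
)


def find_mixed_content(html):
    """Return the sorted, de-duplicated HTTP resource URLs found in the HTML."""
    found = set(SRC_RE.findall(html)) | set(LINK_RE.findall(html))
    return sorted(found)


html = """
<img src="http://example.com/a.png">
<script src="https://example.com/app.js"></script>
<a href="http://example.com/page">link</a>
<link rel="stylesheet" href="http://example.com/s.css">
"""
print(find_mixed_content(html))
```

Note that the `<link>` pattern also matches non-resource links such as `rel="canonical"`; after an HTTPS migration those are worth reviewing as well.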

In the settings, also enable crawling of service pages and files (JS, CSS, and so on).

After crawling finishes, you can open the report on links with the HTTP protocol on the "Overview" tab.

To see the list of incoming links for a selected page, use the context menu or the F1 key. To see incoming links for all pages in the report, press Shift+F1.

Netpeak Spider also has a report that shows the URLs of redirecting pages, where each redirect leads, and the incoming links of those pages.

The PDF report shows the following information:

- The total number of links to redirecting pages and the number of broken links.

- The number of links with the HTTP protocol.

In the scraping report, you can check whether HTTPS pages contain insecure scripts and elements. To do this, go to the "Reports" tab → "Scraping" and choose "All results".

7. Speed audit

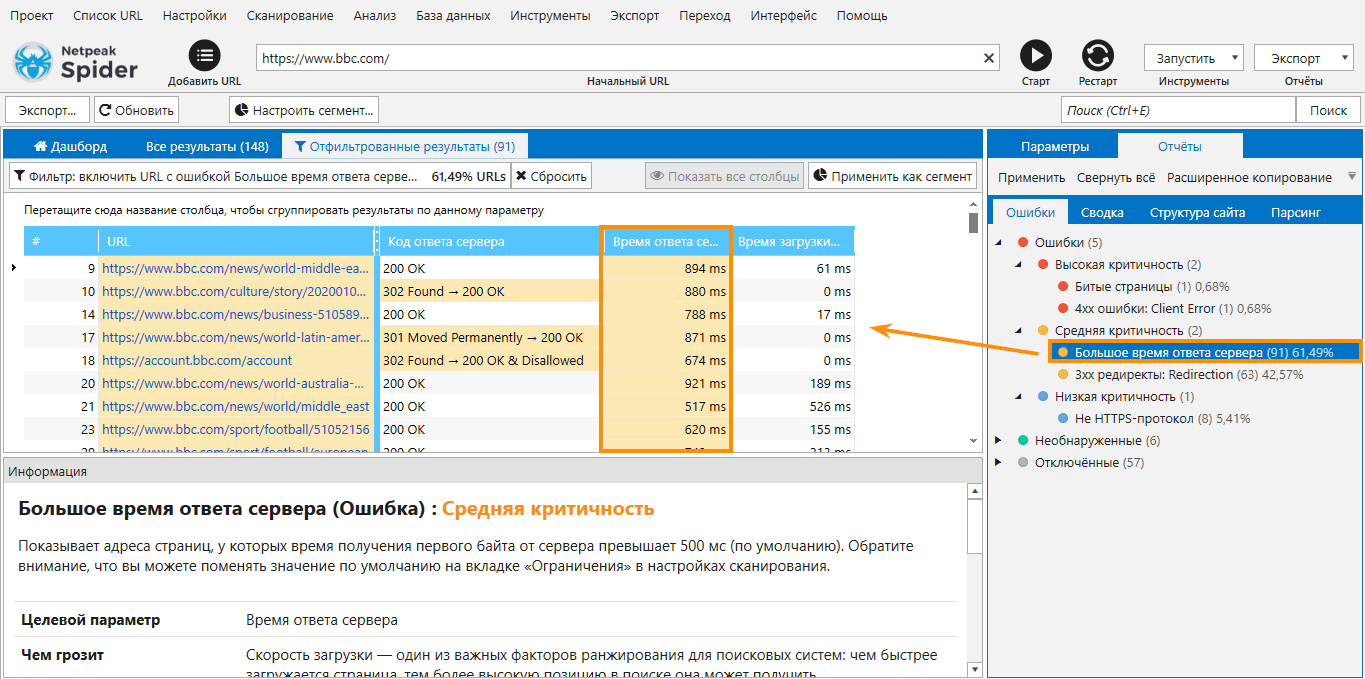

Скорость работы сайта — важный показатель оптимизации и в целом один из основных факторов ранжирования в поисковых системах.

Чтобы проанализировать скорость работы сайта, необходимо просканировать его с использованием следующих параметров:

- «Код ответа сервера»,

- «Время ответа сервера»,

- «Время загрузки контента».

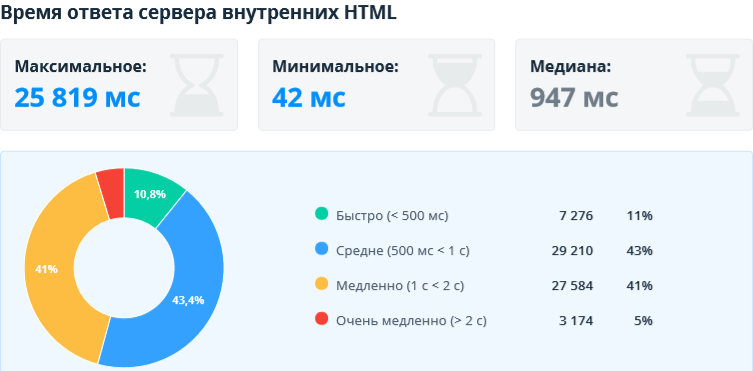

Таким образом вы быстро просканируете сайт с минимальным потреблением ресурсов вашего устройства и в результате получите информацию о том, насколько быстро сервер отвечает на запросы, и какие проблемы могут возникнуть со скоростью ответа сервера.

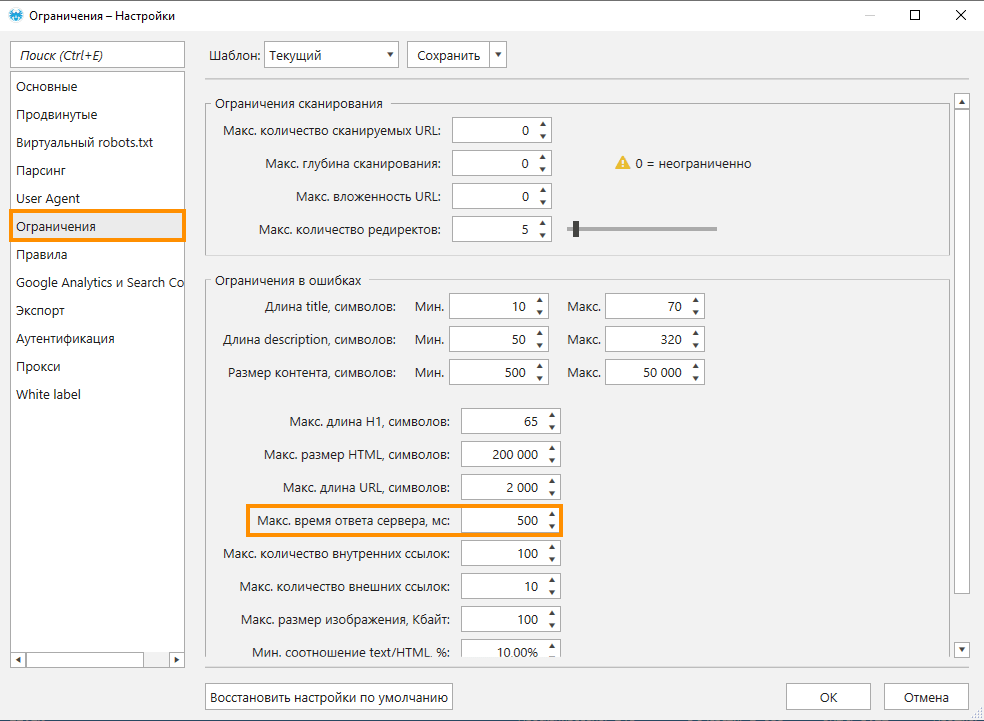

По умолчанию в Netpeak Spider страницы, которые отвечают более 500 мс, попадают в отчёт по ошибке «Большое время ответа сервера», однако вы можете выставить свои лимиты на вкладке «Настройки» → «Ограничения».
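The threshold logic is easy to reproduce on exported data if you want to experiment with your own limits before changing the program settings. In this sketch the input mapping of URL to response time in milliseconds is a hypothetical shape; only the 500 ms default comes from the text.

```python
SLOW_RESPONSE_MS = 500  # default limit mentioned in the article


def slow_pages(response_times_ms, limit_ms=SLOW_RESPONSE_MS):
    """Return (url, ms) pairs slower than the limit, slowest first."""
    slow = [(url, ms) for url, ms in response_times_ms.items() if ms > limit_ms]
    return sorted(slow, key=lambda item: item[1], reverse=True)


times = {"/": 120, "/slow": 900, "/edge": 500, "/slower": 1500}
print(slow_pages(times))               # pages over the default 500 ms
print(slow_pages(times, limit_ms=1000))  # a stricter custom limit
```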

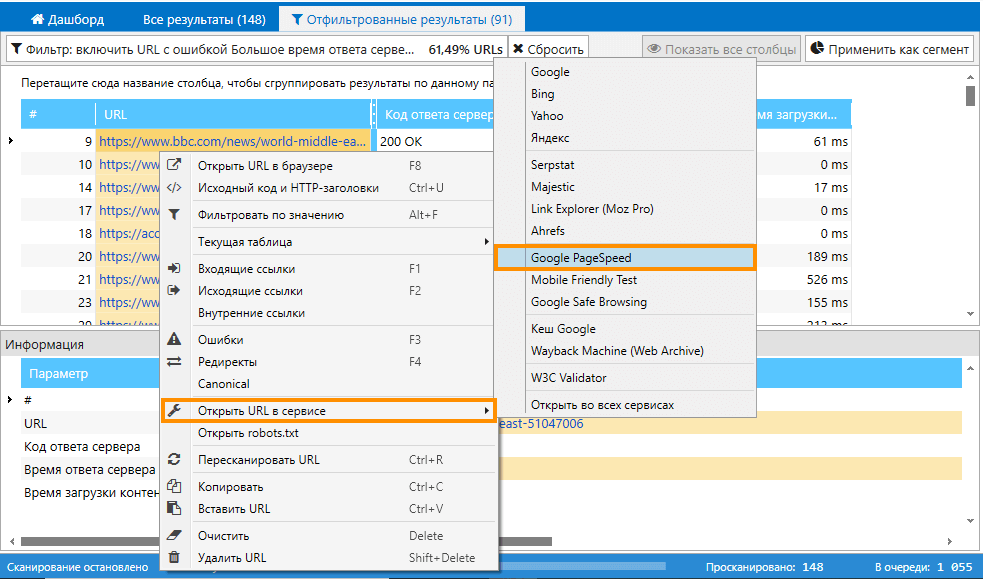

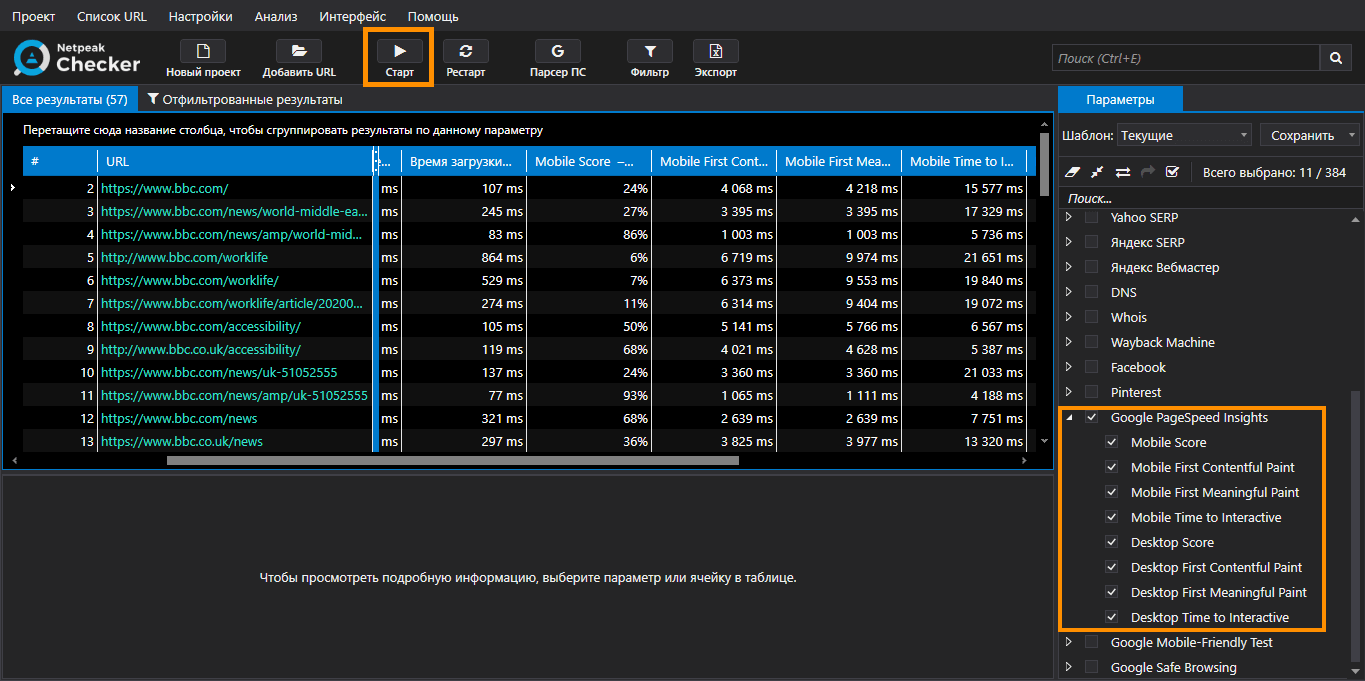

You can additionally check pages in Google PageSpeed Insights. To do this, right-click the URL you are interested in to open the context menu and choose the service.

You can also bulk-check URLs in Google PageSpeed Insights with Netpeak Checker: transfer the URLs from Netpeak Spider to Checker, select the service's parameters in the sidebar, and start the analysis.

The PDF report exported from Netpeak Spider also contains information on server response time.

Page response and content download times can change depending on the load on the site's server. For example, pages may load almost instantly when the site has few visitors, but the server will slow down as their number grows.

In Netpeak Spider you can increase the number of requests sent to the server in parallel, creating a heavier load on the server, and analyze how request processing speed changes. The next type of audit helps with this.

8. Site load audit

Before starting the audit, disable all parameters except those that check speed. Crawl the site with the maximum number of threads. This shows how the server behaves under high load.

Be very careful, because such crawling can temporarily take the server down.

In the report, besides the speed data, I also recommend looking at server response codes and checking whether any pages returned the "Timeout" code.

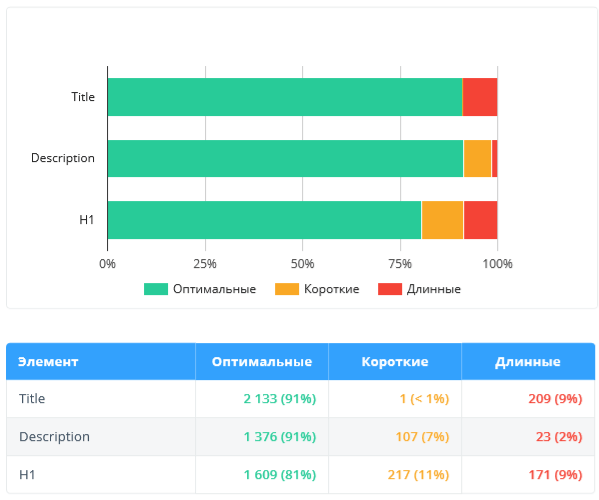

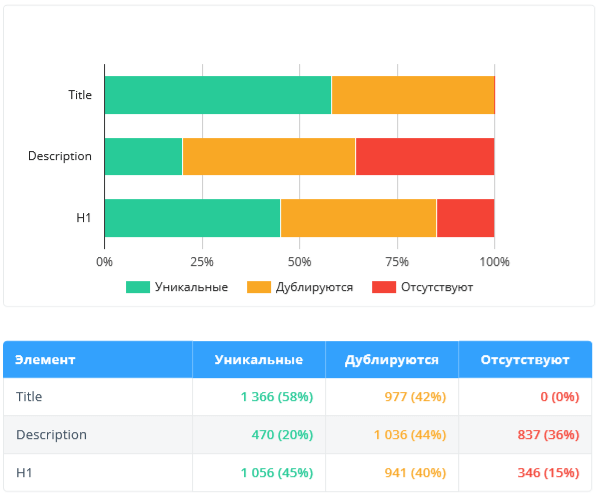

9. Metadata audit

To check that metadata is correct (a site content audit), enable the following parameters before crawling:

- "Status Code",

- "Meta Robots",

- "Title",

- "Title Length",

- "Description",

- "Description Length",

- "Keywords",

- "Keywords Length",

- "H1 Content".

In the settings, enable respecting all crawling and indexing instructions.

As a result, the program checks pages for meta tag errors:

- "Duplicate Title",

- "Duplicate Description",

- "Duplicate H1",

- "Missing or Empty Title",

- "Missing or Empty Description",

- "Multiple Titles",

- "Multiple Descriptions",

- "Same Title and H1",

- "Max Title Length",

- "Short Description",

- "Max Description Length".
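To show what several of these checks involve, here is a minimal Python sketch that runs over already-extracted metadata. The record fields (`url`, `title`, `description`, `h1`) and the length limits are assumptions for illustration; they are not Netpeak Spider's actual defaults or export format.

```python
from collections import defaultdict


def find_meta_issues(pages, max_title_len=70, min_desc_len=50, max_desc_len=320):
    """Group page URLs by metadata issue. Length limits are assumed values."""
    issues = defaultdict(list)
    by_title = defaultdict(list)
    for page in pages:
        url = page["url"]
        title = (page.get("title") or "").strip()
        desc = (page.get("description") or "").strip()
        h1 = (page.get("h1") or "").strip()

        if not title:
            issues["missing_title"].append(url)
        else:
            by_title[title].append(url)
            if len(title) > max_title_len:
                issues["long_title"].append(url)
            if title == h1:
                issues["same_title_and_h1"].append(url)

        if not desc:
            issues["missing_description"].append(url)
        elif len(desc) < min_desc_len:
            issues["short_description"].append(url)
        elif len(desc) > max_desc_len:
            issues["long_description"].append(url)

    # A title shared by more than one URL is a duplicate.
    for urls in by_title.values():
        if len(urls) > 1:
            issues["duplicate_title"].extend(urls)
    return dict(issues)


pages = [
    {"url": "/a", "title": "Shoes", "description": "Buy shoes online.", "h1": "Shoes"},
    {"url": "/b", "title": "Shoes", "description": "", "h1": "All shoes"},
    {"url": "/c", "title": "", "description": "Short", "h1": "Contacts"},
]
print(find_meta_issues(pages, min_desc_len=10))
```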

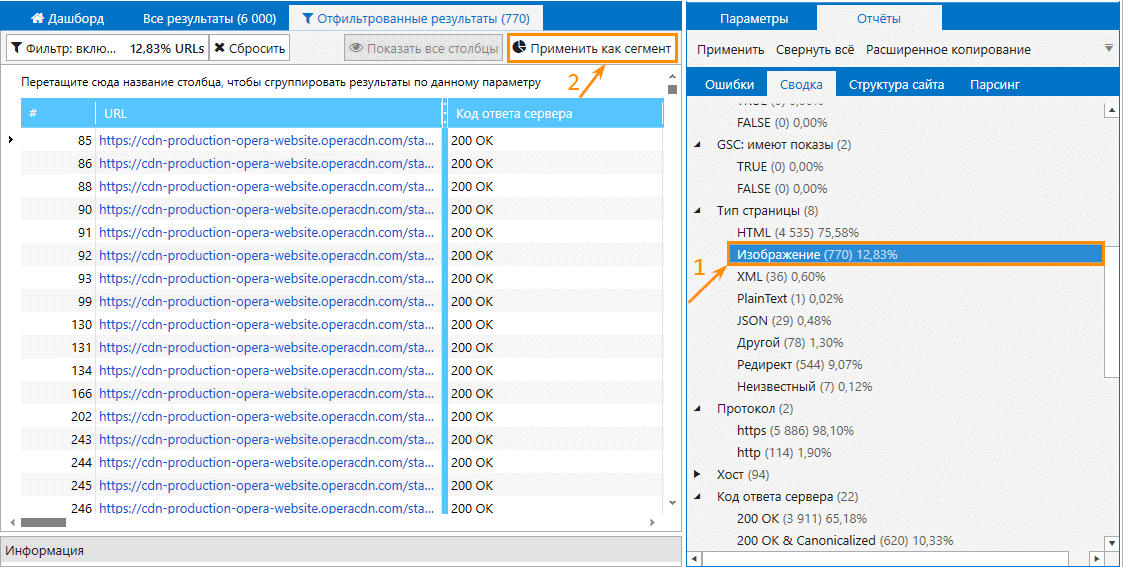

10. Аудит изображений и медиафайлов

Оптимизация изображений — один из важных аспектов продвижения сайта, потому важно соблюдать рекомендации поисковых систем касательно визуального контента на страницах. Netpeak Spider проверяет изображения и прочие медиафайлы (аудио, архивы и тд.) на основные ошибки:

- «Битые изображения»,

- «Изображения без атрибута alt»,

- «Максимальный размер изображения»,

- «Другие SEO-ошибки».

Для медиафайлов определяются общие ошибки (редирект, битая ссылка и тд).

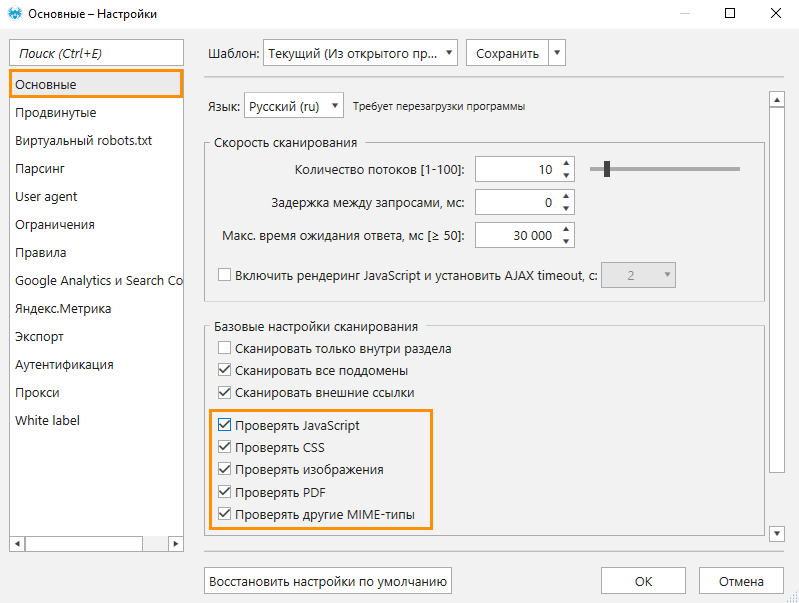

Чтобы провести аудит на наличие проблем с изображениями и медиафайлами, достаточно просканировать сайт со стандартными настройками программы.

После завершения сканирования вы можете отфильтровать результаты с помощью функции сегментации. Например, как показано на скриншоте.

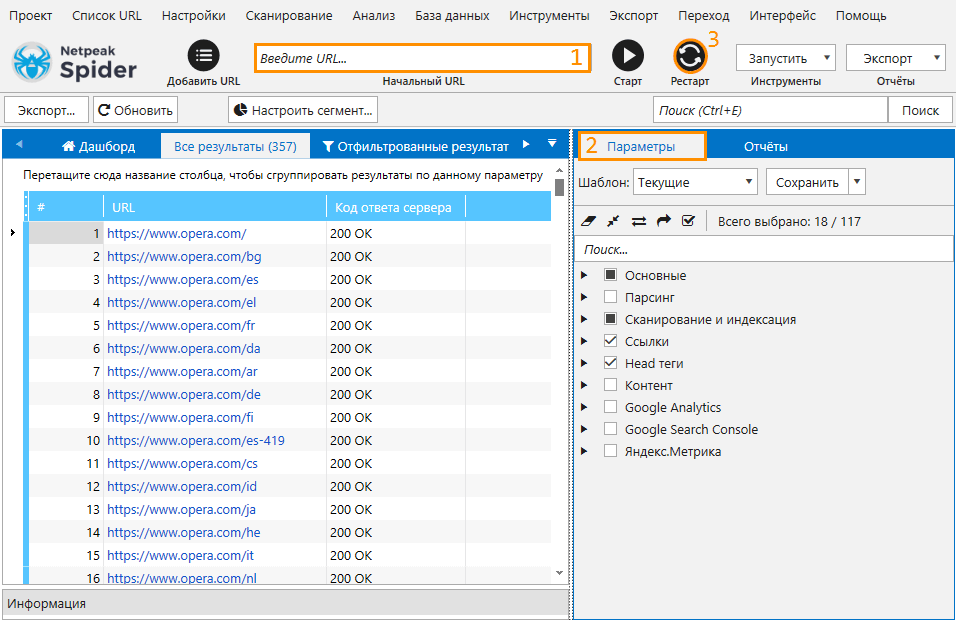

11. Audit of a giant site

If you worry that there will not be enough RAM to crawl a large site, follow the recommendations below.

This type of audit not only saves RAM but also reduces the risk of losing data if the program terminates unexpectedly.

I. Crawl the site in two stages

The more parameters are taken into account during crawling, the more RAM is used to display the results. To reduce the chance of an out-of-memory error, crawl the site twice.

On the first crawl, disable all parameters except the status code. This way the program finds all pages of the site faster and without significant RAM load. When crawling finishes, save the project.

Before the second crawl, clear the address bar, then go back to the "Parameters" tab, tick the items you need, and click "Restart".

In this case the program crawls a list of pages, which takes fewer resources than crawling in standard mode.

As crawling proceeds, data for the selected parameters will appear in the results table.

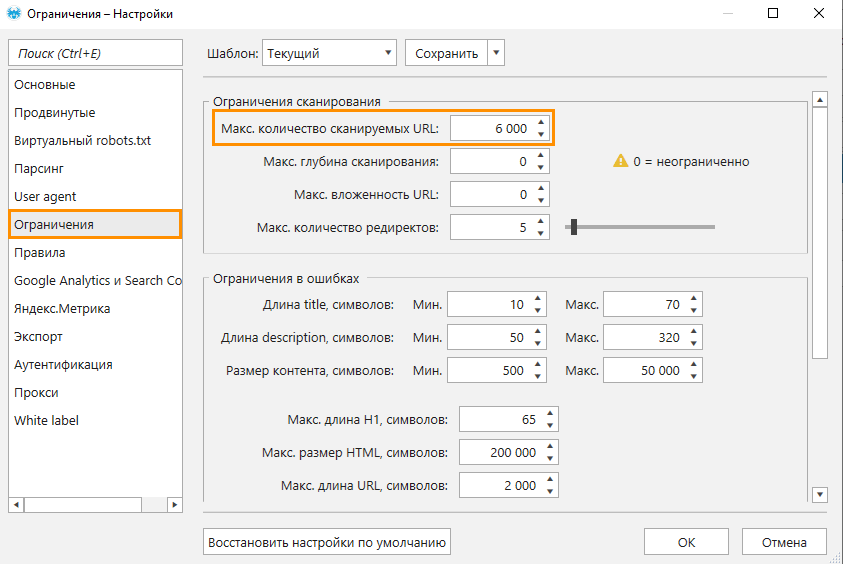

II. Save results periodically

RAM consumption grows while a site is being crawled. If the device does not have enough RAM, the program may terminate unexpectedly.

To reduce this risk, save the project after crawling a certain part of the site. On the "Restrictions" settings tab, set a limit on the number of crawled pages.

12. Multilingual site audit

If a site targets audiences in different countries, it needs multilingual content. Each page may have several alternate versions, and so that search engines do not treat them as duplicates, the foreign-language versions must be specified correctly in hreflang. Placing this tag is subject to a number of rules, so it is easy to make a mistake in the implementation.

With Netpeak Spider you can make sure all the rules are followed; if they are not, the program points you to the source of the problem in the corresponding reports.

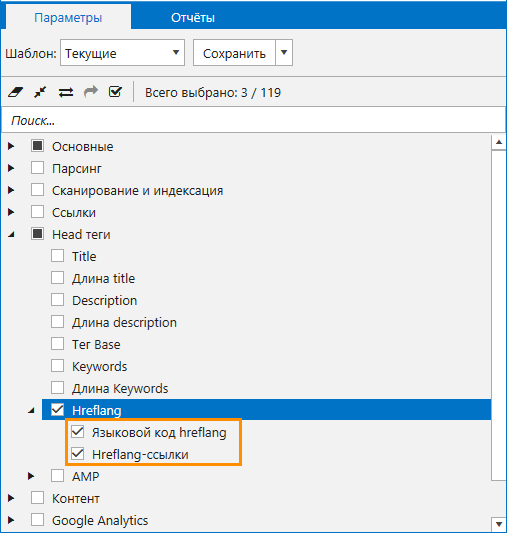

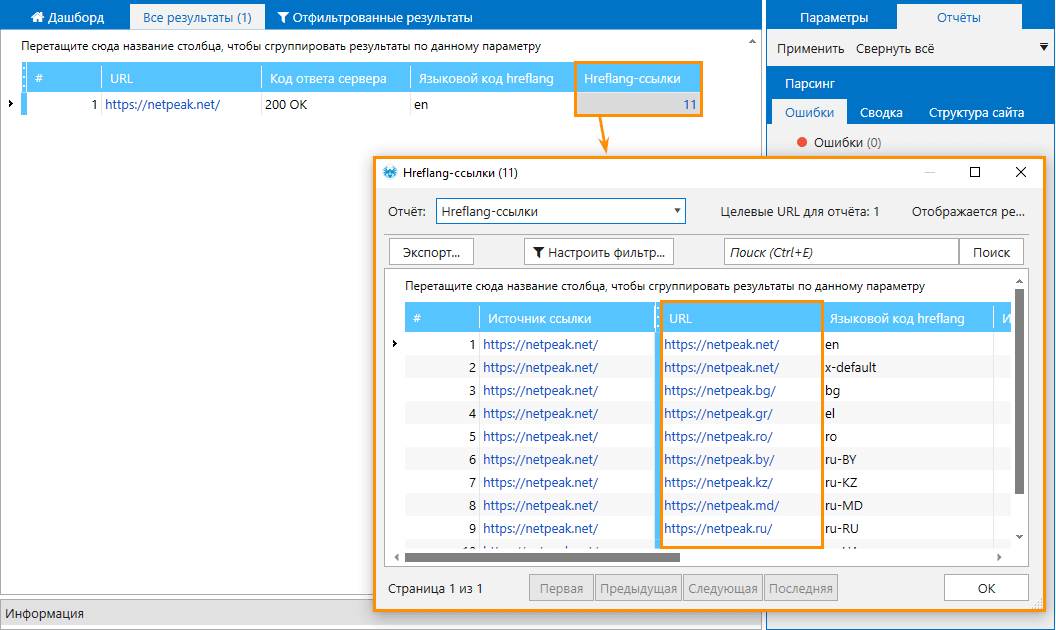

Before starting the audit, it is enough to enable two parameters from the hreflang group, "Hreflang Language Code" and "Hreflang Links", plus the "Status Code" parameter.

The program will check this tag and show the corresponding reports when it finds errors.

This audit lets you check:

- that the current page has links in hreflang,

- that there are no broken links in the hreflang tag,

- that language codes are correct and not duplicated,

- that alternate URLs are present without duplicates,

- that return hreflang links are present,

- that hreflang contains no relative links and no links to non-indexable pages,

- that the language code of return links matches.
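Two of these rules, return links and absolute URLs, can be illustrated with a short Python sketch. It assumes the hreflang annotations have already been collected into a mapping of page URL to `{language code: alternate URL}`; that structure and the function names are hypothetical and do not reflect how Netpeak Spider stores or checks the data.

```python
from urllib.parse import urlparse


def missing_return_links(hreflang_map):
    """Find (page, alternate) pairs where the alternate does not link back."""
    problems = []
    for url, alternates in hreflang_map.items():
        for alt_url in alternates.values():
            if alt_url == url:
                continue  # a self-reference needs no return link
            back_links = hreflang_map.get(alt_url, {}).values()
            if url not in back_links:
                problems.append((url, alt_url))
    return sorted(problems)


def relative_hreflang_links(hreflang_map):
    """Find (page, alternate) pairs where the alternate URL is not absolute."""
    return sorted(
        (url, alt_url)
        for url, alternates in hreflang_map.items()
        for alt_url in alternates.values()
        if urlparse(alt_url).scheme not in ("http", "https")
    )


hreflang_map = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
        "fr": "/fr/",
    },
    "https://example.com/de/": {"de": "https://example.com/de/"},
}
print(missing_return_links(hreflang_map))
print(relative_hreflang_links(hreflang_map))
```

In the sample data the German page does not point back to the English one, and the French alternate is a relative link, so both checks report a problem for the English page.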

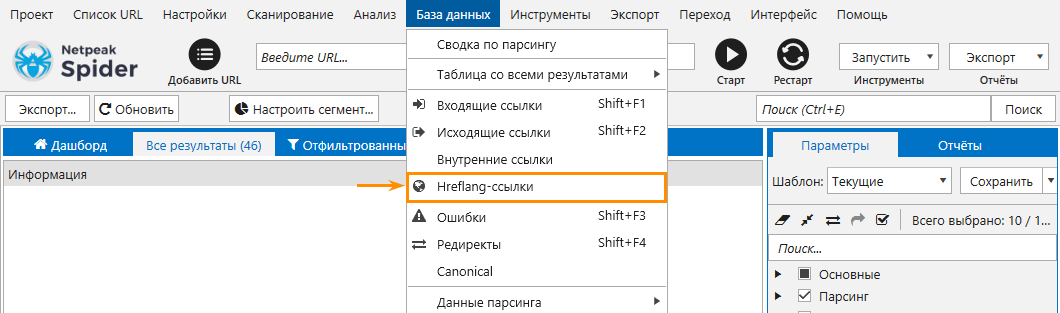

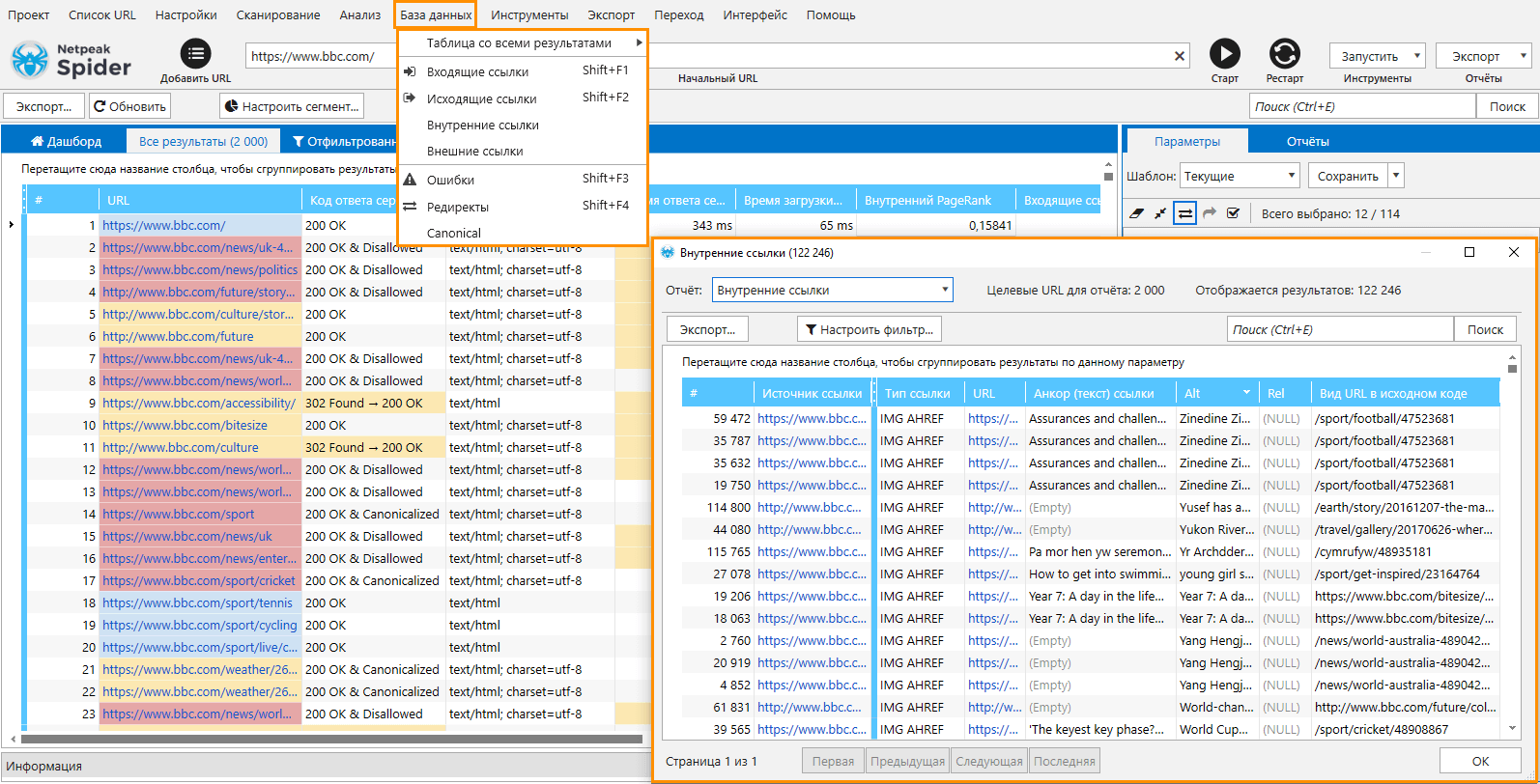

The program also has a separate report on links from the hreflang tag. You can open it from the "Database" menu.

If each language uses a separate domain, crawling all versions of the site requires an audit of a group of sites.

13. Audit of a group of sites

To crawl several domains with different languages, do the following:

- Make sure the hreflang check is enabled.

- Paste the URL of one of the site's domains into the main table and crawl it.

- Open the hreflang report, copy the list of all domains listed there, and move them into the main table.

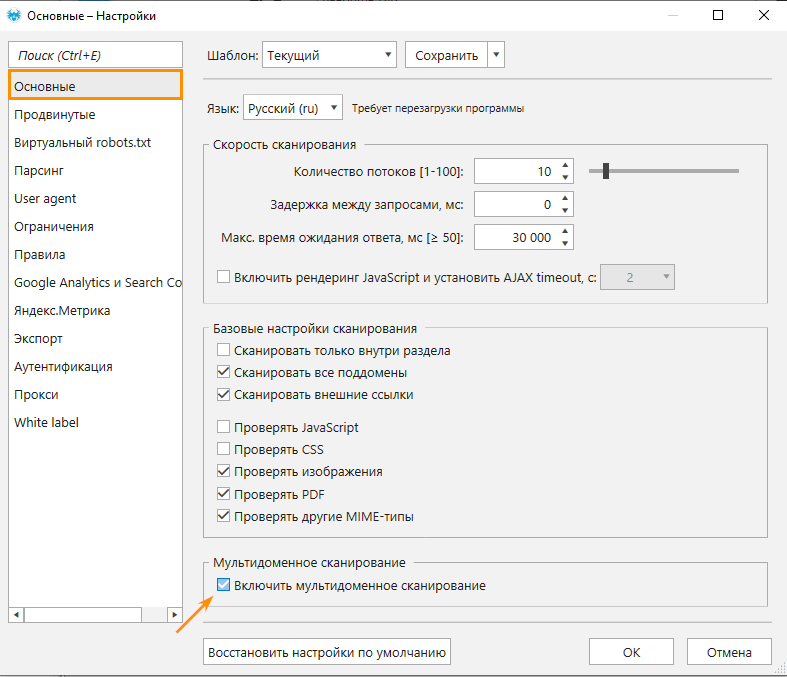

- Перейдите на вкладку «Основные» настроек программы, включите мультидоменное сканирование и запустите краулинг.

В этом режиме программа просканирует все страницы доменов, которые указаны в основной таблице за один сеанс. Таким образом вы соберёте максимально полную информацию обо всех языковых доменах вашего сайта.

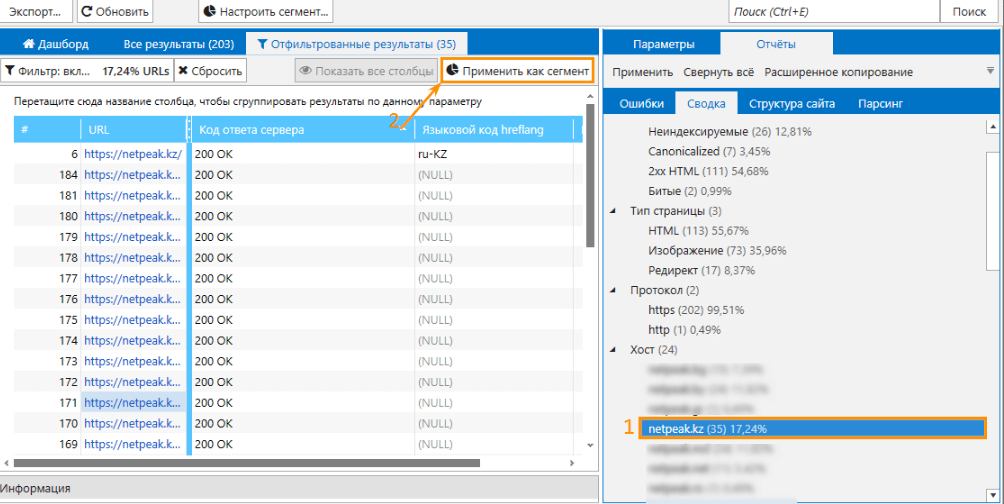

Но учитывайте то, что программа будет смешивать страницы из разных доменов в отчётах по ошибкам. Если вы хотите выгружать отчёты отдельно по каждому домену, используйте сегментацию.

К примеру, чтобы получить отчёты отдельно для сайта netpeak.kz, нужно перейти на вкладку «Сводка», выбрать необходимый домен и применить его в качестве сегмента.

Теперь можно изучить и выгрузить все примеры отчётов аудита для выбранного домена, затем то же проделать с остальными.

Multi-domain crawling, which makes the audit of a group of sites possible, is available on the Pro plan. The plan also includes other professional features:

- white label reports with branding and the option to add a comment,

- export of search queries from Google Search Console and Yandex.Metrica,

- export of reports to Google Drive / Sheets, and more.

14. Internal linking audit

Internal linking improves site usability and distributes link weight so that search engines index landing pages better.

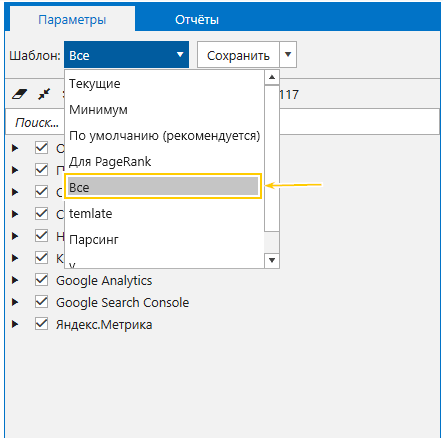

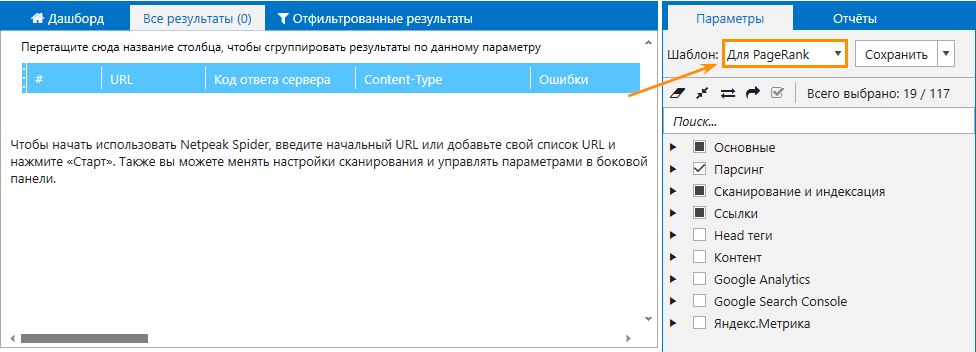

For this check, it is enough to choose the "For PageRank" parameter template. After crawling you will get:

- Internal and external links with anchors, the values of the rel attribute, and the link's form in the page source code. The reports are stored in the "Database" module.

- Information on how link weight is distributed within the site: the number of incoming links each page receives, important pages that receive too little link weight, and, conversely, service pages with a high PageRank value.

The "Internal PageRank calculation" tool also shows at which nodes link weight is "burned" and how it is distributed across the site overall.
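To make the idea of link weight distribution concrete, here is a minimal sketch of the textbook PageRank power iteration over an internal link graph. It is not Netpeak Spider's implementation: the damping factor, the iteration count, and the handling of pages without outgoing links (their weight is spread evenly instead of being "burned") are all assumptions of this example.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Compute PageRank for a graph given as {page: [pages it links to]}."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with the base "teleport" share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's weight equally among its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page: spread its weight over all pages (assumption).
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


links = {
    "/": ["/catalog", "/about"],
    "/catalog": ["/"],
    "/about": ["/"],
}
for page, value in sorted(internal_pagerank(links).items(), key=lambda kv: -kv[1]):
    print(page, round(value, 3))
```

Pages that many internal links point to, such as the home page in this sample, end up with the largest share of the weight.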

15. Optimization priority audit

An optimization priority audit helps find pages that receive too little organic traffic compared with paid traffic. Finding such pages takes two tasks:

- Task 1. Find the pages with the highest ratio of paid search traffic to organic traffic.

- Task 2. Among them, identify pages that rank poorly, using Google Search Console or by analyzing Serpstat parameters in Netpeak Checker.

Let's start the analysis 😃

Task 1

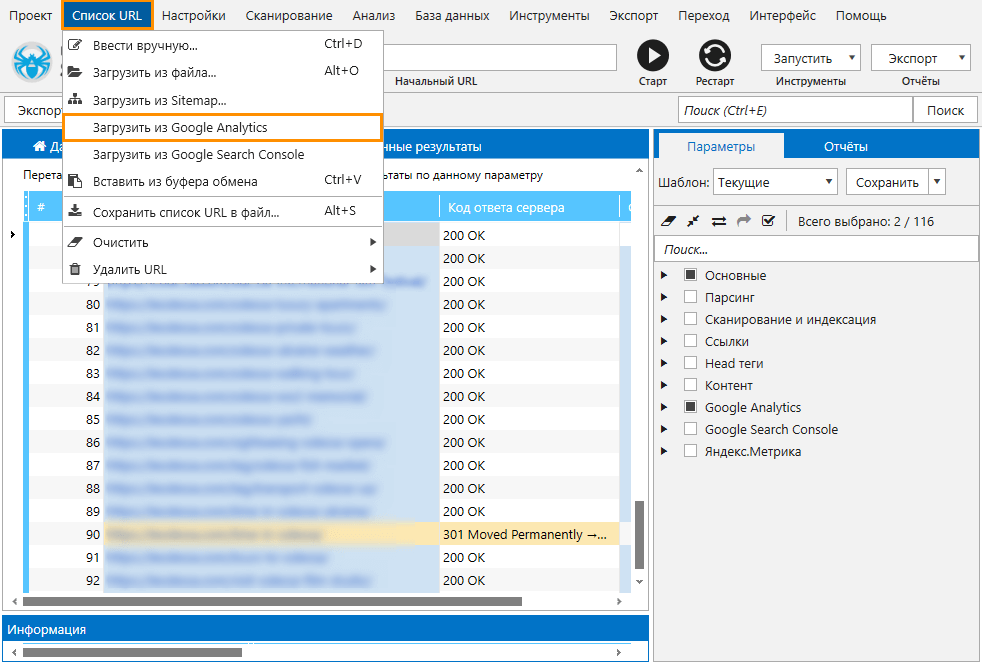

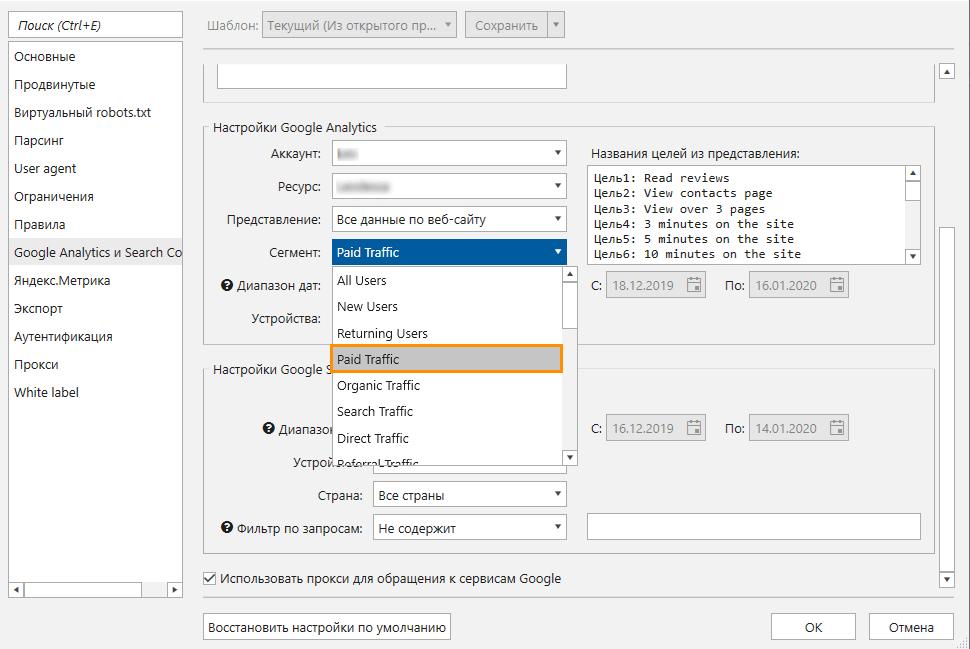

- Подключите аккаунт Google Analytics в Netpeak Spider. Инструкции по подключению описаны в статье «Интеграция с Google Analytics и Search Console».

- Просканируйте сайт или загрузите страницы из Google Analytics (это можно сделать с помощью меню «Список URL»).

- Задайте платный трафик в настройках сегмента Google Analytics.

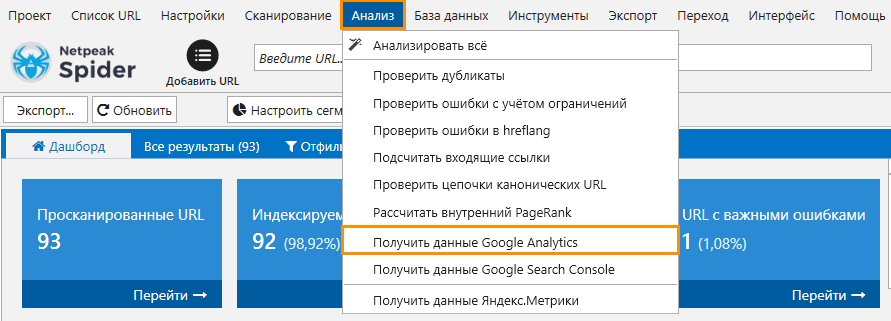

- Выгрузите данные по сеансам в основную таблицу с помощью меню «Анализ».

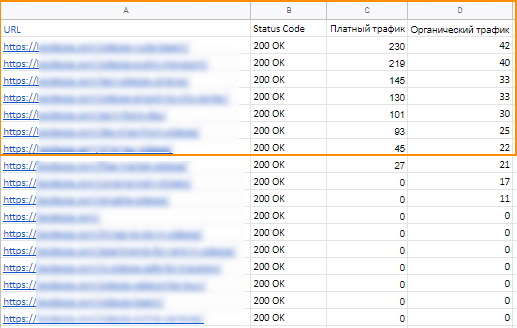

- Экспортируйте полученный отчёт.

- Задайте органический трафик в настройках сегмента и повторите действия, описанные в пунктах 3,4 и 5.

- Объедините два отчёта и сравните отношение платного трафика к органическому. В результате вы узнаете, какие страницы получают больше платного трафика, чем органического.
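The merge in the last step can be done in a spreadsheet or, as a rough sketch, in Python. The example assumes each exported report has been reduced to a mapping of URL to sessions; that shape and the function name are illustrative, not the actual export format.

```python
def paid_to_organic_ratio(paid_sessions, organic_sessions):
    """Merge two reports and rank pages by the paid / organic session ratio."""
    rows = []
    for url in set(paid_sessions) | set(organic_sessions):
        paid = paid_sessions.get(url, 0)
        organic = organic_sessions.get(url, 0)
        if organic:
            ratio = paid / organic
        elif paid:
            ratio = float("inf")  # paid traffic only, no organic sessions at all
        else:
            ratio = 0.0
        rows.append((url, paid, organic, ratio))
    # Highest ratio first; ties are ordered by URL to keep the output stable.
    rows.sort(key=lambda row: (-row[3], row[0]))
    return rows


paid = {"/a": 100, "/b": 10, "/c": 5}
organic = {"/a": 20, "/b": 40, "/d": 7}
for url, p, o, ratio in paid_to_organic_ratio(paid, organic):
    print(url, p, o, ratio)
```

Pages at the top of the resulting list depend most on paid traffic and are the first candidates for Task 2.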

Задача 2

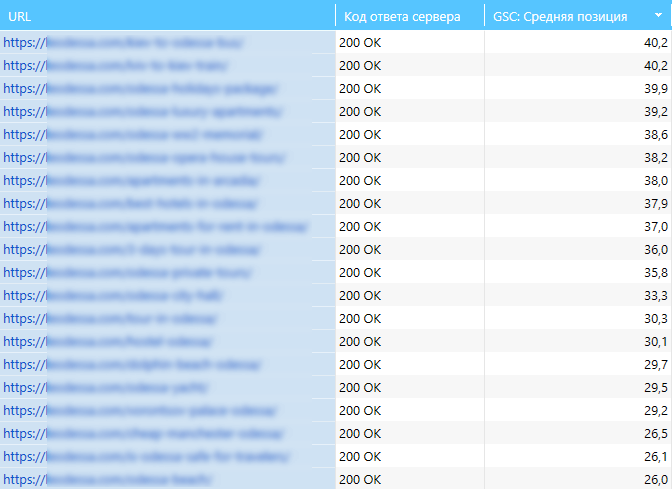

Загрузите полученные страницы в новый проект Netpeak Spider и включите параметр «GSC:Средняя позиция».

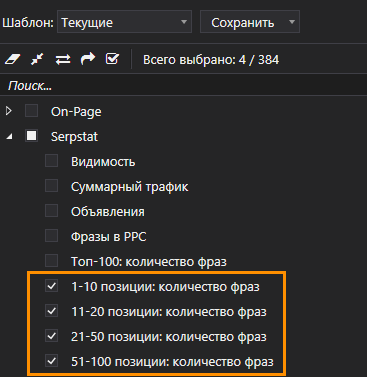

If you have a Serpstat account, loading the list of pages into Netpeak Checker gives you the number of keywords for which the site ranks at certain positions in Google. To do this, enable the parameters shown in the screenshot.


Summing up

By combining different crawl parameter settings, you can flexibly configure Netpeak Spider to automatically detect specific optimization errors and get reports relevant to the task at hand. This helps you fix the errors found quickly and efficiently and carry out a comprehensive site audit.

Have questions? Ask them in the comments or message me on Telegram 😊