JavaScript SEO: Is Google Catching Up? [Research]

![JavaScript SEO: Is Google Catching Up? [Research]](https://static.netpeaksoftware.com/media/en/image/blog/post/4eef8553/900x300/javascript-seo.png)

For many years, the SEO community perceived JavaScript as a nightmare. I have discussed it over and over with clients who blamed a migration to a JavaScript framework for drops in SEO visibility and loss of traffic. Problems with content indexing, crawling, and serving proper content to Google were addressed by a relatively new branch of SEO: JavaScript SEO.

1. Is JavaScript SEO Dead?

In the case of JavaScript-powered websites, Google must render the page to get its content indexed. For years, Google’s Web Rendering Service relied on Chrome 41, which was released in 2015 and stayed in use for the next four years. This led to many problems, because developers following trends in web development built applications with the latest technology and tested them in the latest browser versions to make sure everything worked fine. Almost nobody checked how Google rendered pages with its ‘ancient’ Chromium version. For example, Google failed to render applications that used the new syntax introduced by ES6 (ECMAScript 6). This didn’t make life easier for SEOs or developers, because we had to look for workarounds to keep websites crawlable and indexable. 2019 was the turning point for JavaScript SEO.

1.1. Launching Evergreen Googlebot

In May 2019, Google announced that they had updated the Chromium version used for rendering to the latest one and that they would keep it up to date. It was huge.

Theoretically, Google should now have no noticeable problems rendering pages. It was a massive change, and many people questioned whether JavaScript SEO still made sense.

2. The Cost of JavaScript

In 2019, Google’s rendering improved significantly. However, that didn’t solve every JavaScript SEO issue. Let’s take a look at the main reason for these problems.

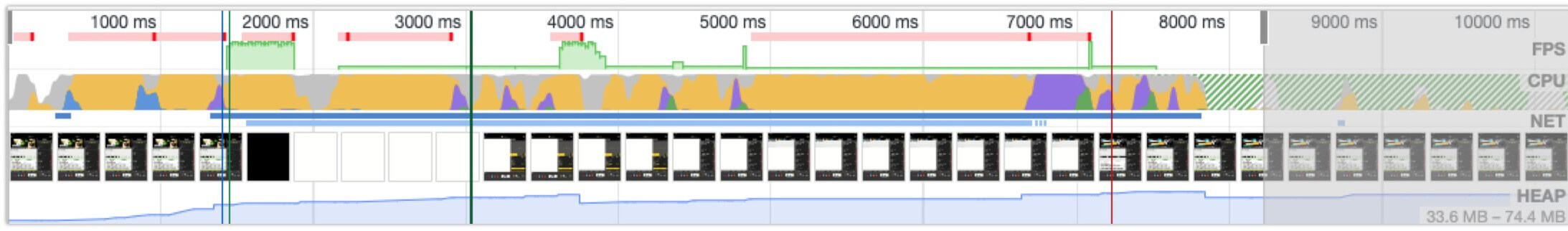

The primary source of the issues with JavaScript is the cost of rendering. In the case of JavaScript-heavy websites, to see written content, navigation, and images, your browser has to download resources and execute JavaScript. If you take a look at the performance metrics in Chrome Developer Tools, you can see how much CPU and memory it takes to load the page:

The yellow shading shows CPU usage during the scripting process. For several seconds, it maxes out.

The same applies to Google and other search engines, which need to crawl and index billions of pages. Even a well-equipped server infrastructure has to make a significant effort to render all pages on the web. Because of this, Google delays rendering in many cases, and further crawling and content indexing are postponed along with it. Based on our observations, indexing might be delayed for weeks or even months.

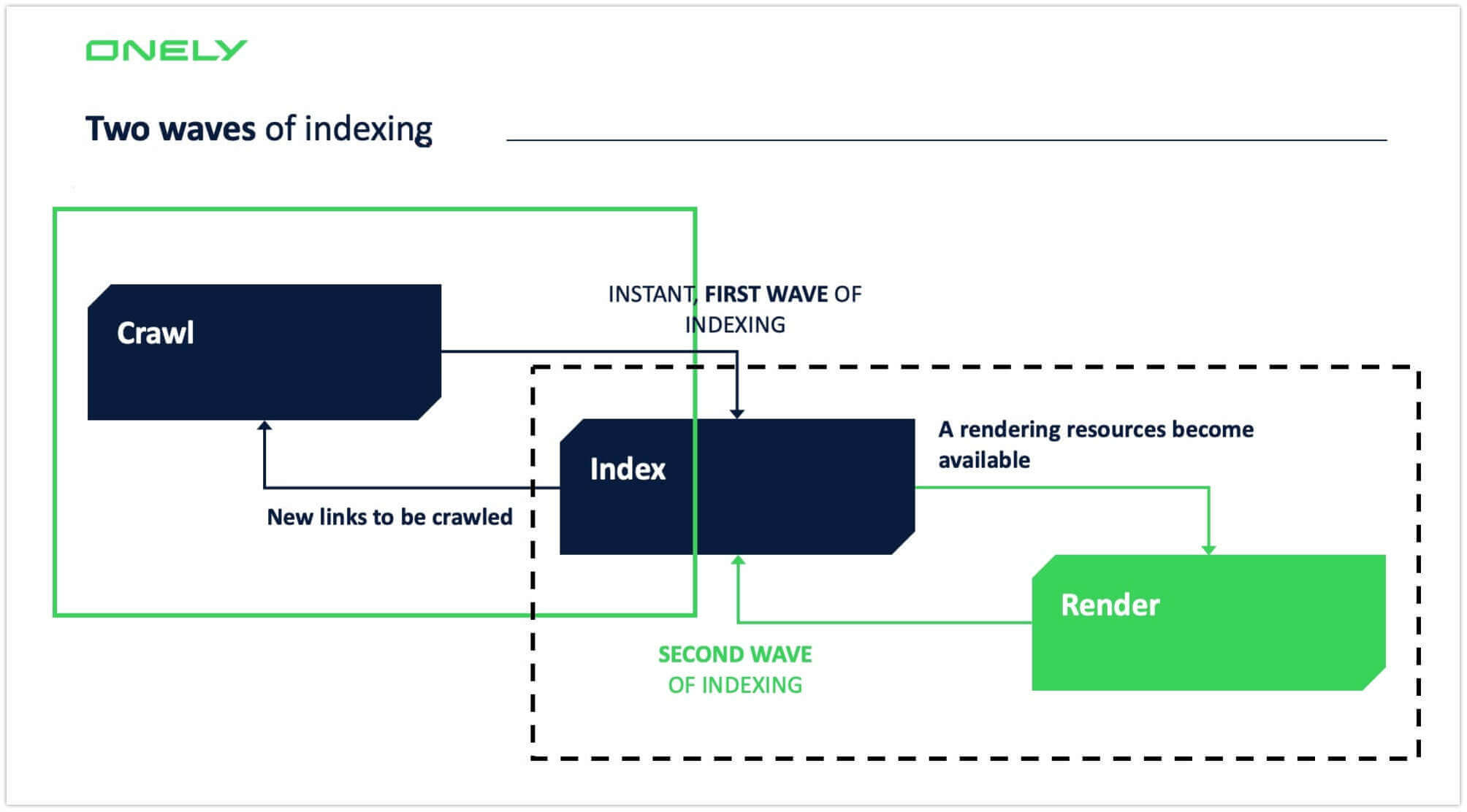

2.1. 2019: Google Still Indexes Pages in Two Waves

Google says that they render almost all pages on the web. If they encounter a brand-new website, they try to render the page and compare the raw HTML with the most recently rendered version to see if there are significant differences between the source code and the DOM. If a lot of content and many new links appear only after rendering, it is a clear hint that the site should go through two waves of indexing. As a result, rendering and indexing of the rest of the subpages might be significantly deferred. The experiments we conducted show that, in practice, the rendering process is not smooth and may lead to significant delays in indexing.
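To get a feel for what such a comparison looks at, here is a minimal sketch that counts visible words and links in a raw HTML string and in a rendered one. It is only an illustration, not Google's method: the class and function names, the sample markup, and the choice of "words and links" as the signal are all assumptions, and the rendered HTML is supplied by hand rather than produced by a headless browser.

```python
from html.parser import HTMLParser


class PageSummary(HTMLParser):
    """Collects visible words and link targets from an HTML string."""

    def __init__(self) -> None:
        super().__init__()
        self.words: list[str] = []
        self.links: set[str] = set()
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Ignore script and style bodies; they are not visible content.
        if self._skip_depth == 0:
            self.words.extend(data.split())


def summarize(html: str) -> PageSummary:
    parser = PageSummary()
    parser.feed(html)
    parser.close()
    return parser


def render_gap(raw_html: str, rendered_html: str) -> dict:
    """Compare what is visible before and after JavaScript has run."""
    raw, rendered = summarize(raw_html), summarize(rendered_html)
    return {
        "raw_words": len(raw.words),
        "rendered_words": len(rendered.words),
        "links_added_by_js": sorted(rendered.links - raw.links),
    }


# Hypothetical page: an empty app shell vs. the DOM after rendering.
RAW = '<html><body><div id="app"></div><script>render()</script></body></html>'
RENDERED = (
    '<html><body><div id="app"><h1>Red shoes</h1>'
    '<a href="/shoes/42">See offer</a></div></body></html>'
)

result = render_gap(RAW, RENDERED)
print(result)
```

A page whose raw HTML yields zero words while the rendered version yields content and new links is exactly the kind of page that depends on the rendering step.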

Let’s start with some basic concepts applied to JavaScript-heavy websites.

Rendering JavaScript-powered websites is challenging and requires many resources, so it’s not always possible to render all pages at the time of crawling. As a result, the first step is to index a page without rendering. In the case of websites rich in JavaScript-dependent content, it would be a blank (or almost blank) page, which is the first wave of indexing. For the second wave, Google moves not-rendered pages to the rendering queue. If they have free resources, they execute JavaScript, render the page, and finally index the content. Unfortunately, there’s no fixed wait time for Google to render.
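The two-wave idea described above can be sketched as a toy model. Everything here is an assumption made for illustration: the `Page` fields, the `renders_per_day` budget, and the first-in-first-out queue. Google's actual scheduling is not public and is certainly more complex.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    raw_text: str  # content already present in the raw HTML
    js_text: str   # content that appears only after JavaScript runs


def two_wave_index(pages, renders_per_day, days):
    """Toy model: index raw HTML first, render queued pages as budget allows."""
    index = {}
    render_queue = deque()

    # First wave: index whatever the raw HTML contains, queue the rest.
    for page in pages:
        index[page.url] = page.raw_text
        if page.js_text:
            render_queue.append(page)

    # Second wave: each day, render only as many pages as the budget allows.
    for _ in range(days):
        for _ in range(min(renders_per_day, len(render_queue))):
            page = render_queue.popleft()
            index[page.url] = (page.raw_text + " " + page.js_text).strip()

    still_waiting = [page.url for page in render_queue]
    return index, still_waiting


pages = [
    Page("/a", "", "offer A"),
    Page("/b", "", "offer B"),
    Page("/c", "", "offer C"),
]
index, still_waiting = two_wave_index(pages, renders_per_day=1, days=2)
print(index)
print(still_waiting)
```

With a budget of one render per day, the third page is still indexed as a blank page after two days, which is the effect the two waves have on JavaScript-dependent content.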

Google claims that they are working on bringing indexing and rendering closer together, so we can expect the delays to get smaller and smaller. That would be a big change to SEO as we know it, so I’m keeping my fingers crossed for it.

3. Our Experiment to Check if Google Is Catching Up

At Onely, we observe how Google crawls and indexes pages, and what we see sheds new light on JavaScript SEO. In the past, we saw indexing delays for almost all the JavaScript-powered websites we analyzed. The delay still exists, but more and more often we find examples where rendering and indexing go hand in hand, reducing the delay. Tomek Rudzki, the Head of R&D at Onely, came up with the idea of launching an experiment to provide new insight into the two waves of indexing.

We started collecting data about the indexing of URLs and of JavaScript-dependent content to see how big the gap between URL indexing and content indexing is. As mentioned above, we’ve seen massive delays in indexing in the past, and we wanted to know how long the waves last. Below is the data showing the delay between URL indexing and content indexing caused by the two waves of indexing.

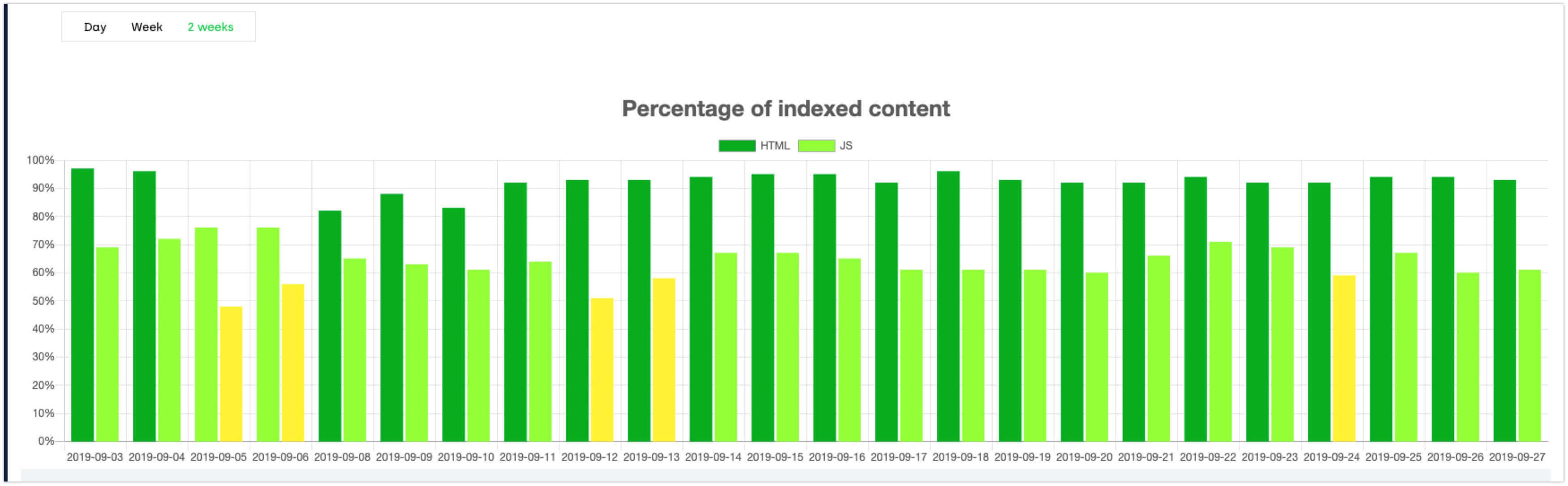

Note: The dark green bars mark samples where indexing is above 80%. Where indexing is below 60% of the whole sample, the bar is yellow.

In the chart, we can also see the gap between URLs and JavaScript content indexing.

The chart shows the percentage of indexed URLs added to sitemaps on a given day (HTML, the bar on the left) and the indexing of content loaded on those pages (JavaScript, the bar on the right). As you can see, the percentage of URLs indexed doesn’t change significantly over time and stays at more or less the same level. Around 82-90% of URLs added to sitemaps were indexed on the same day. The gap changes from day to day, but generally hovers around 25-30%.

Our first conclusion: the gap between the indexing of URLs and the indexing of JavaScript-loaded content still exists. In both cases, Google indexes a similar percentage of URLs and content over time. We can’t see extreme values.

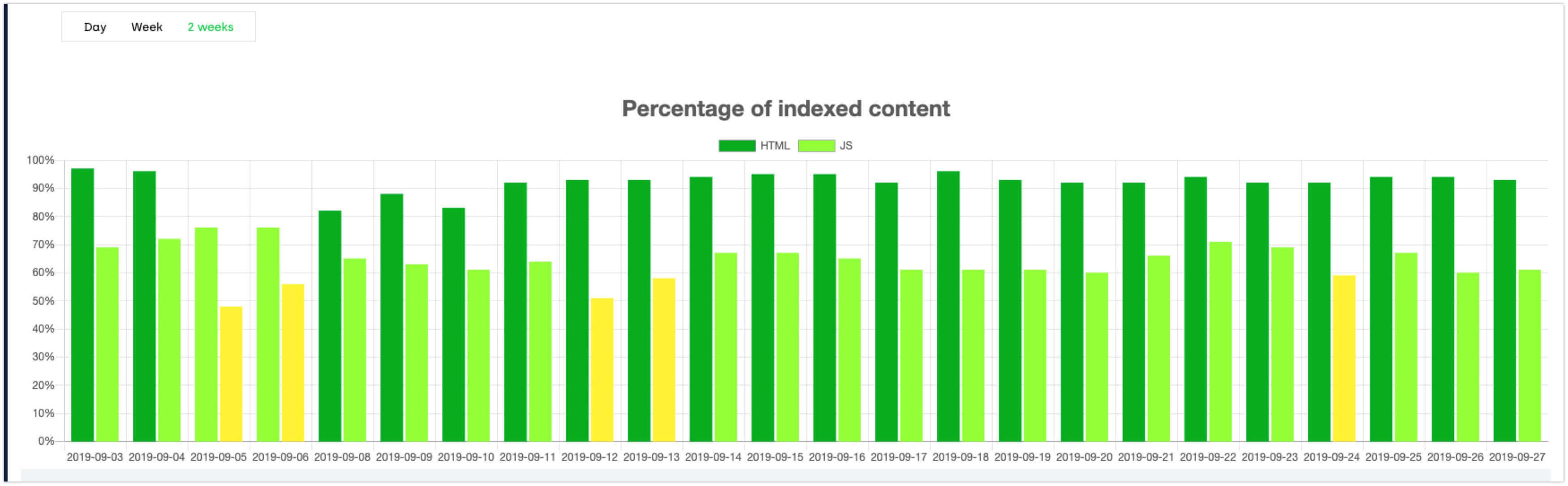

Now, let’s see how it looks after seven days.

After seven days, Google managed to index an additional 5-10% of the URLs, and URL indexing is above 90%. By contrast, JavaScript content indexing increased by 2-3%. That pace of indexing is stable over time.

Our second conclusion: Google indexes URLs faster than it indexes JavaScript content.

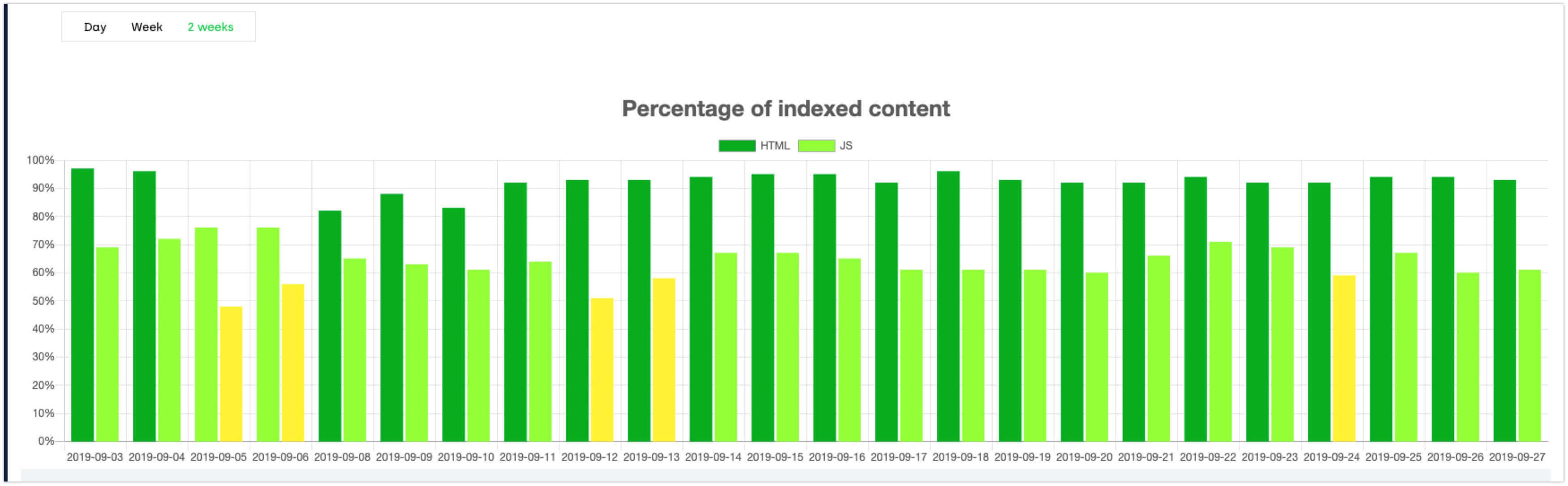

How does it look after two weeks?

After an additional seven days, the percentage of indexed URLs is almost the same, meaning that Google has probably reached the natural level of indexing for the domains. Google doesn’t index 100% of the URLs we submit, due to low page quality or other technical SEO issues.

Our third conclusion: the indexing of JavaScript-powered content increases very slowly, and Google has a problem with indexing content on more than 30% of the pages.

Note: The data and conclusions presented above apply to JavaScript-powered websites that do not use Server-Side Rendering or Dynamic Rendering.

3.1. What Does This Mean for the Owners of JavaScript-Powered Websites?

For many websites, fast indexing is crucial. If you have a website with offers that change every minute, any delay in indexing may impact the overall SEO performance of your site. We can see that Google doesn’t index 100% of the URLs you submit in the sitemap on the same day.

Moreover, they may decline to index some pages at all. This may be caused by content duplication, poor internal linking, or crawl budget issues, so we can’t blame JavaScript for everything. Technical SEO can help mitigate these problems and, in many cases, speed up indexing. However, around 40% of the content won’t be indexed on the same day if JavaScript loads it, meaning that almost 40% of your offer won’t be discoverable as quickly as it should be.
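The "around 40%" figure can be sanity-checked against the same-day ranges reported earlier (82-90% of URLs indexed, a gap of roughly 25-30%). The sketch below is a rough bound only. It assumes the gap is measured in percentage points and that the endpoints of the two ranges can simply be combined; the function name is illustrative.

```python
def subtract_gap(url_indexed, gap):
    """Combine two (low, high) ranges into a loose bound on JS content indexing.

    The lowest estimate pairs the lowest URL indexing with the widest gap,
    the highest estimate pairs the highest URL indexing with the narrowest gap.
    """
    low = url_indexed[0] - gap[1]
    high = url_indexed[1] - gap[0]
    return low, high


URL_INDEXED_SAME_DAY = (82, 90)  # percent, from the same-day chart
GAP_POINTS = (25, 30)            # assumed to be percentage points

js_indexed = subtract_gap(URL_INDEXED_SAME_DAY, GAP_POINTS)
js_missing = (100 - js_indexed[1], 100 - js_indexed[0])

print(f"JavaScript content indexed on day one: {js_indexed[0]}-{js_indexed[1]}%")
print(f"JavaScript content not yet indexed: {js_missing[0]}-{js_missing[1]}%")
```

Under these assumptions, 52-65% of JavaScript-loaded content is indexed on the first day and 35-48% is not, which brackets the rounded figure of 40%.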

Final Thoughts: JavaScript SEO Is Alive

This year, Google made a massive improvement in terms of processing JavaScript, boosting their efficiency in rendering. Bartosz Góralewicz had a chance to ask Google’s Martin Splitt and John Mueller about the future of JavaScript.

They don’t provide a timeframe for when JavaScript indexing will catch up with HTML indexing. Martin and John say that JavaScript SEO is definitely not going away, and our research backs that up: the gap between HTML and JavaScript indexing still exists. According to Martin Splitt, technology is changing extremely fast, and we can’t be sure that a new component or library will work for Google. In practice, there is still room for error.

Indeed, Google is getting better and better at processing JavaScript-powered websites. However, I wouldn't say they are good enough to let them index the websites as they are:

- Use dynamic rendering or (ideally) server-side rendering if you want to make sure your website won't suffer because of Google’s two-wave approach to indexing. If the website contains content that needs fast or instant indexing, you can’t rely on Client-Side Rendering.

- As mentioned above, Google doesn’t index every page it finds, and not always due to JavaScript. Don't lose sight of non-JavaScript related issues; they also may be the reason for slow indexing and, in general, the poor SEO performance of your site.
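As an illustration of the dynamic rendering idea from the first point, here is a minimal sketch of the decision a server could make per request: crawlers get prerendered HTML, everyone else gets the client-side app. The token list is a small, non-exhaustive sample of crawler user-agent substrings, and the function names and return labels are hypothetical. A production setup would typically rely on a maintained bot list and a real prerendering service.

```python
# Sample crawler user-agent substrings (lowercase); not an exhaustive list.
BOT_TOKENS = ("googlebot", "bingbot", "twitterbot", "facebookexternalhit")


def wants_prerendered(user_agent: str) -> bool:
    """Return True if the User-Agent header looks like a known crawler."""
    ua = user_agent.lower()
    return any(token in ua for token in BOT_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Pick which version of the page to serve for this request."""
    if wants_prerendered(user_agent):
        return "prerendered-html"  # static snapshot with content in the markup
    return "client-side-app"       # regular JavaScript application shell


googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
browser_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

print(choose_variant(googlebot_ua))
print(choose_variant(browser_ua))
```

Server-side rendering avoids this branching entirely, because every visitor receives the content in the initial HTML.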

Remember that Google is not the only search engine. Even if Google and Bing (Bingbot goes evergreen too) can render pages like a modern browser, we can’t expect the same of Yahoo or social media channels. Even if Google catches up in the future, keep in mind that some share of the traffic to your site may come from other search engines that are not as good at rendering.